The Elder Scrolls IV: Oblivion GPU Performance

by Anand Lal Shimpi on April 26, 2006 1:07 PM EST. Posted in: GPUs

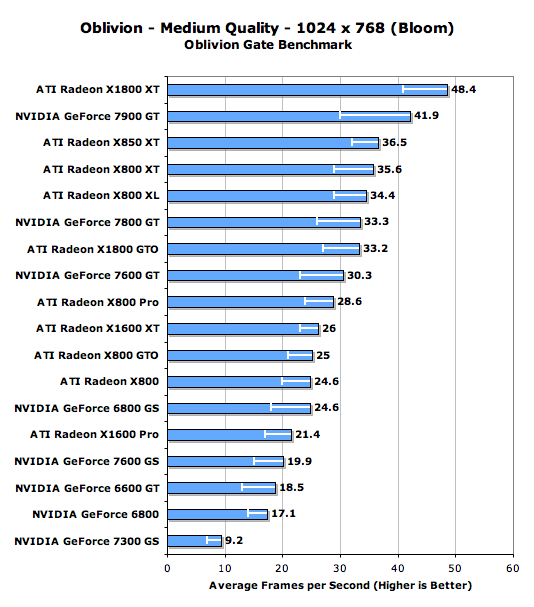

Mid Range GPU Performance w/ Bloom Enabled

Just as was the case with the high end tests, we ran a separate set of benchmarks with HDR lighting disabled to allow for comparison to ATI's Radeon X850/X800 series. The question we're looking to answer here is what upgrade options X850/X800 owners have in the mid-range market segment.

The white lines within the bars indicate minimum frame rate

With HDR disabled, if you've got anything faster than an X800 XL from ATI you are sitting very pretty. While the GeForce 7900 GT and X1800 XT offer some pretty compelling performance, high end X850/X800 owners really don't have a reason to upgrade unless they want better image quality. Even the X800 Pro performs pretty well here; fortunately (or unfortunately) for owners of ATI's X850/X800 series, if you're not going to spend a lot of money on a GPU upgrade then your best bet is actually to stay put and just turn down your detail settings.

NVIDIA's GeForce 6800 GS does reasonably well here, but it looks like Oblivion isn't very friendly to NVIDIA's GeForce 6 architecture as the vanilla ATI Radeon X800 offers similar performance.

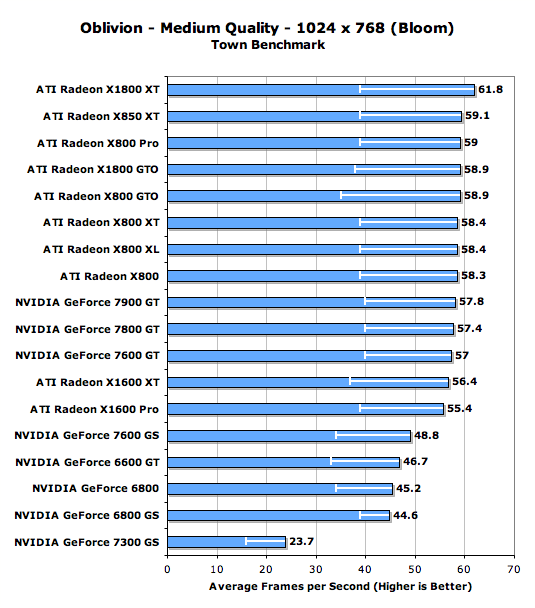

The white lines within the bars indicate minimum frame rate

The performance picture doesn't really change all that much here for Radeon X850/X800 owners; their performance is still chart topping.

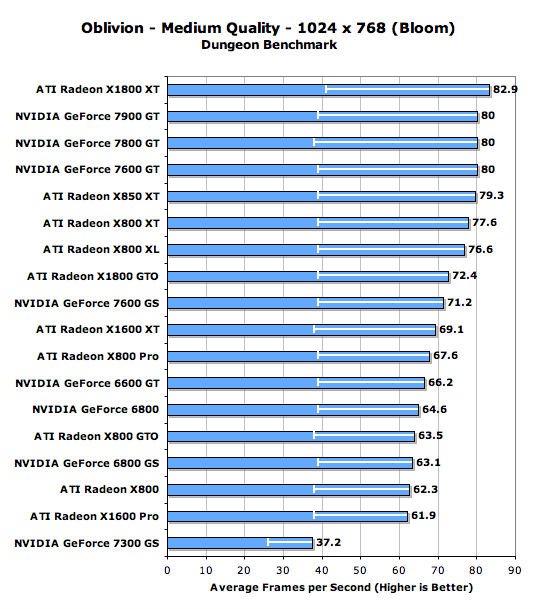

The white lines within the bars indicate minimum frame rate

100 Comments

hkBst - Sunday, May 28, 2006 - link

If as you say the error in your measurements is about 5% which for a 30fps score is about 1.5 fps then it is not warranted to post measurements like 31.4 fps. The .4 in there means absolutely nothing. It is clearly not a significant digit. You should either perform multiple measurements to reduce the error or post the less accurate results which you _actually measured_.

markshrew - Sunday, May 14, 2006 - link

I like the new graphs you've been using lately, what software is it? thanks :)

Warlock15th - Monday, May 8, 2006 - link

Check page 7 of this article, titled "Mid Range GPU Performance w/ Bloom Enabled".

How come both the vanilla 6800 and the 6600GT outperform the 6800GS? I think the results for these cards were somehow entered wrongly while making the performance graphics...

othercents - Thursday, May 4, 2006 - link

Some people need to look at the overall picture on video cards instead of just saying that ATI is the best because it runs the best on Oblivion. If you look at other reviews with games like HL2 and Doom3 you will start to get a different picture of what card is better for you. Don't take this one review, buy a new video card, and think it is a cure for all games. Especially for whoever said that the 7900GT sucked. That is a great card and performs well in most games. If you don't want it just mail it to me.

Personally I think I will wait until the new video cards come out before I purchase anything specifically for this game. Why pay the $300-$400 on a new card that performs alright when there should be new ones out near the end of the year that will perform way better. Not to mention drivers that are in the works just for this game and the patches that are coming out. My x600 is playable, so I'm satisfied.

I guess if you really need that cutting edge performance then you won't mind spending the $1200 or more to buy SLI or Crossfire video cards. These are probably the same people who upgrade their desktops every 6 months because there is something else better available. However for the rest of us we should probably wait until the game has 6 months of patches and new video drivers before deciding on upgrading. Experience with HL2 and almost every other game tells me that performance will get better with time.

Other

araczynski - Friday, April 28, 2006 - link

i would love to see these benchmarks compared to another exactly the same run but WITHOUT grass enabled at all. everyone and their dog knows that the grass in this game is implemented very badly, and has a tremendous impact on performance.

the worst part is that the grass is quite frankly FUGLY! it completely sticks out like a sore thumb in every scene i tried it in.

in any case. i'm running a 2.4@3.2 northwood/1gig/6800gt@ultra+/1280x1024,hdr,nograss,noshadows and it plays very nicely for me.

i would suspect that anyone with anything from the 7800+ & x1800+ families would be extremely happy with the game if they took off the grass and toned down the shadows.

araczynski - Friday, April 28, 2006 - link

oh yeah, and it looks teh sexy from my projector on the wall at 1280x1024 :)

JarredWalton - Friday, April 28, 2006 - link

I like having the grass. Without the grass, it's just yet another game with a flat, green texture representing the ground. That hasn't been acceptable since 2003. I do think the grass could do with a bit more variation (i.e., taller in some areas, shorter in others, more or less dense, etc.), but if the added variation also increased system demands, then forget it.

Of course, the grass isn't perfect. It doesn't bend around your character's feet/legs; when an object like heavy armor, a sword, a dead body, etc. falls down in tall grass, it can also make it a bit tricky to find. I do find myself disabling the grass at times just for that reason, not to mention shutting it off when there's too much going on and frame rates have slowed to a crawl. Every time I turn off the grass, though, it seems like I've started to turn Oblivion into Morrowind.

Obviously, we're still at the stage where game engines are only giving a very rough approximation of reality. We won't even discuss how the shadowing/shading/texturing on some faces and objects can look downright awful. We're at the point now where every increase in graphical realism requires an exponential increase in time by the programmers, artists, modelers, etc. as well as an exponential increase in computational power required to render the scenes.

thisisatest - Thursday, April 27, 2006 - link

Wow load up the bullshit. Not only are your framerates strange, but it seems that you somehow did something to make the x1900XTX perform worse than the x1900XT... which is impossible. The XTX bests the XT by at least 3-4FPS in the worst of cases, so we can't even consider a performance decrease to be within margin of error.

We know all the x1k Radeons best any nvidia card out there now in this game because of the simple fact that SM3.0 was done better on ATI and because of the massive pixel shading power the x1900 cards have.

I don't see any AA tests as well. You forgot to mention that the game looks like garbage without AA and I know many nvidia users that prefer bloom+AA than just HDR.

Thanks for wasting my time.

JarredWalton - Thursday, April 27, 2006 - link

I'm not sure what benchmarks you're looking at, but the only places where the XT comes out ahead of the XTX are in the city and dungeon benchmarks, and for all intents and purposes the two are tied. Due to the nature of benchmarking with Oblivion (we're not playing back a recorded demo, but instead wandering through a location in a repeatable manner) the margin of error is going to be higher. The minimum frame rates are particularly prone to variations, as you might just happen to get a hard drive access that causes a frame rate drop in one run but not another.

The most important tests are by far the Oblivion gate areas, and trust me you will be seeing plenty of them in real gameplay. Who cares if you get 70 frames per second or whatever in the dungeon if your frame rates drop to 20 or lower outside? Looking at those figures, the XTX comes out about 3% faster, which is pretty much what you can expect from the clock speed increase. 625/1450 vs. 650/1550... if we're GPU limited (as opposed to bandwidth limited, which appears to be the case with all of Oblivion), the XTX has a whopping 4% clock speed advantage. Throw in CPU and system limitations, and you're not going to be able to tell the difference between the XT and the XTX in actual use.
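As a quick check of the clock speed arithmetic above, here is a minimal sketch. It assumes the quoted figures 625/1450 and 650/1550 are core/memory clocks in MHz for the XT and XTX respectively; the function name `percent_advantage` is purely illustrative.

```python
# Clock speeds as quoted in the comment, assumed to be (core, memory) in MHz.
XT_CORE, XT_MEM = 625, 1450
XTX_CORE, XTX_MEM = 650, 1550


def percent_advantage(faster: float, slower: float) -> float:
    """Return how much larger `faster` is than `slower`, in percent."""
    return (faster / slower - 1.0) * 100.0


core_gain = percent_advantage(XTX_CORE, XT_CORE)
mem_gain = percent_advantage(XTX_MEM, XT_MEM)

print(f"core clock advantage:   {core_gain:.1f}%")
print(f"memory clock advantage: {mem_gain:.1f}%")
```

Under that reading, the core clock gap works out to 4%, so a measured difference of about 3% in a GPU-limited scene is in line with what the clocks alone would suggest.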

As for your assertion that the game looks like garbage without anti-aliasing, I strongly disagree, as I'm sure many others do. Just because you think AA is required doesn't mean everyone feels the same. I would take HDR rendering in a heartbeat over AA in this game. That's how I've been playing the game for well over 100 hours. When running at the native panel resolution of my LCD (1920x1200), anti-aliasing is something I worry about only when everything else is maxed out. My point isn't that you're wrong, but merely that you're wrong for saying that many people prefer bloom + anti-aliasing. *Some* people will prefer that, but far more likely is that people will prefer bloom without anti-aliasing over HDR, simply because it gives you higher frame rates.

thisisatest - Friday, April 28, 2006 - link

At least 2 different "benchmarks" where the XT is ahead of the XTX. Once I see crap like this, sort of makes me doubt everything they say.

Also we aren't told what settings were used in the drivers for each video card. That plays a huge role here.