The Snapdragon 8 Gen 1 Performance Preview: Sizing Up Cortex-X2

by Dr. Ian Cutress on December 14, 2021 8:00 AM EST

Machine Learning: MLPerf and AI Benchmark 4

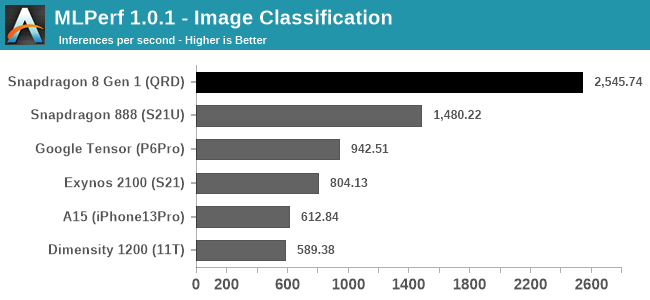

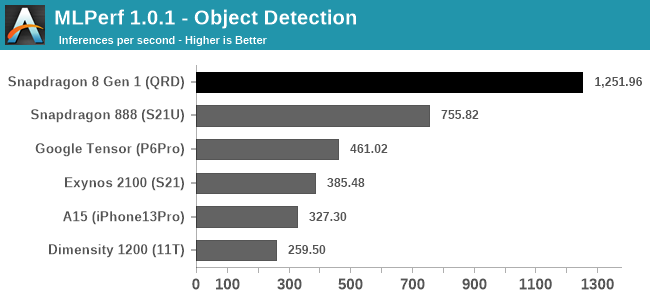

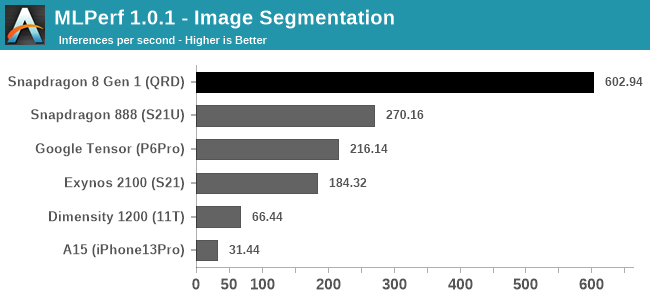

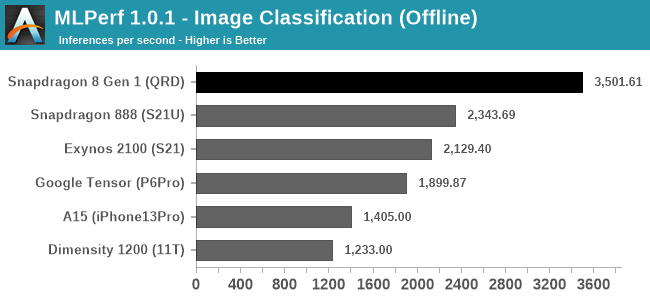

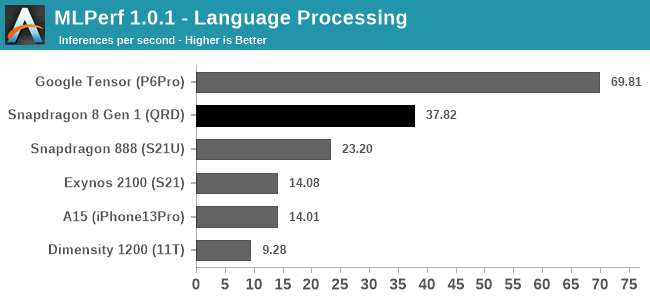

MLPerf is still a new benchmark in this space, but it runs representative workloads on devices and can take advantage of both common ML frameworks such as NNAPI and each vendor’s own chip-specific libraries. In our testing of retail phones to date, Qualcomm has led in almost all of the tests, but given that the company is promoting a 4x increase in AI performance, it will be interesting to see whether that carries across all of MLPerf’s testing scenarios.

It should be noted that Apple’s CoreML is currently not supported, hence the lack of Apple numbers here.

Across the board in these first four tests, Qualcomm takes a sizable lead, going above and beyond what the S888 can do. Here we’re seeing gains of up to 2.2x, for an average gain of +75%. It’s not quite the 4x that Qualcomm promoted in its materials, but there’s a sizable gap to the other high-end silicon we’ve tested to date.

The one test where Qualcomm does not lead is language processing, where Google’s Tensor SoC scores almost 2x what the S8g1 does. This test is based on a MobileBERT model, and whether for software or architecture reasons, it fits a lot better onto the Google chip than any other. As smartphones increase their ML capabilities, we might see some vendors optimizing for specific workloads over others, as Google has, or offering different accelerator blocks for different models. The ML space is also fast paced, so optimizing for one type of model might not be a great long-term strategy. We will see.

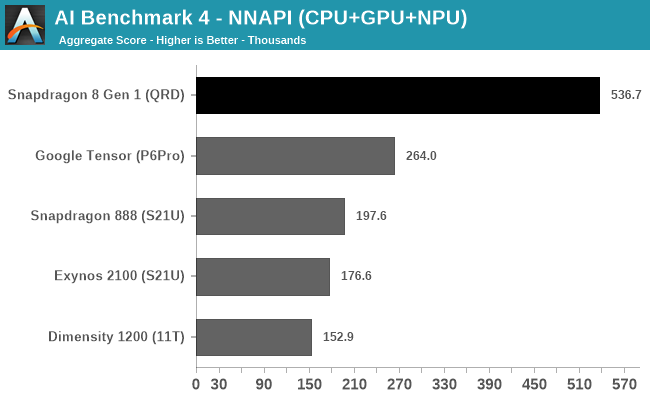

In AI Benchmark 4, running in pure NNAPI mode, the Qualcomm S8g1 takes a comfortable lead. Andrei noted in previous reviews that power consumption during this test can be quite high, up to 14 W, and this is where some chips might be able to pull ahead on efficiency. Unfortunately, we didn’t record power during this run, but it would be good to monitor this in the future.

169 Comments

skavi - Tuesday, December 14, 2021

Was somewhat worried about how these types of articles would work out with Andrei gone, but I'm glad to see it's more or less business as usual.

Somewhat disappointing X2 results. Could I ask if the test suites were recompiled for the chip? I wonder if any of the V9 required extensions would improve performance. SVE2, in particular, looks super nice to use IMO. Very impressive graphics performance though. I'm very interested to see power consumption.

Also, what does the sentence "It should be noted that Apple’s CoreML is currently not supported, hence the lack of Apple numbers here" mean when there are clearly A15 results in each MLPerf benchmark? Are those GPU/CPU scores? If so, I feel that should be made more clear.

ballsystemlord - Tuesday, December 14, 2021

Why is Andrei gone? I never knew he left.

1_rick - Tuesday, December 14, 2021

It looks like he just left after his last article--his LinkedIn profile says he's self-employed as of December.

shabby - Tuesday, December 14, 2021

That's a shame 😕

ksec - Tuesday, December 14, 2021

Will he be doing Freelance for Anandtech? Which is what a lot of writers do these days.

1_rick - Tuesday, December 14, 2021

Try tweeting him or Ian. Probably the best way to find out.

Andrei Frumusanu - Tuesday, December 14, 2021

I was already freelance, albeit exclusively. But no, I'm not contributing anymore.

1_rick - Tuesday, December 14, 2021

Ah. Thanks for jumping in and clearing it up!

tuxRoller - Tuesday, December 14, 2021

Thanks so much for your work!

I always looked forward to your articles and appreciated your willingness to engage with the community.

Best of luck.

eastcoast_pete - Tuesday, December 14, 2021

Best of luck in your new ventures!