Apple's M1 Pro, M1 Max SoCs Investigated: New Performance and Efficiency Heights

by Andrei Frumusanu on October 25, 2021 9:00 AM EST - Posted in Laptops, Apple, MacBook, Apple M1 Pro, Apple M1 Max

Last week, Apple unveiled its new generation of MacBook Pro laptops, a new range of flagship devices that brings significant updates for the company’s professional and power-user audience. The new devices stand out in that they’re powered by two new entries in Apple’s own silicon line-up, the M1 Pro and the M1 Max. We covered the initial reveal of the two chips in last week’s overview article, and today we’re getting the first glimpses of the performance we can expect from the new silicon.

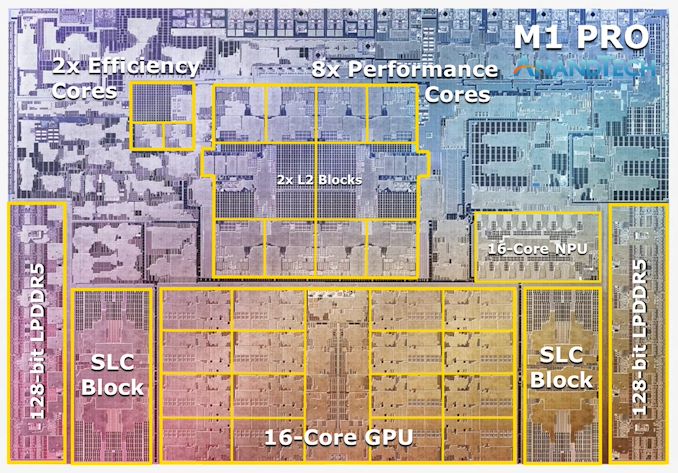

The M1 Pro: 10-core CPU, 16-core GPU, 33.7bn Transistors

Starting off with the M1 Pro, the smaller sibling of the two, the design appears to be a new implementation of the first generation M1 chip, but this time designed from the ground up to scale to larger sizes and higher performance. The M1 Pro in our view is the more interesting of the two designs, as it offers nearly everything that power users will consider important in a generational upgrade.

At the heart of the SoC we find a new 10-core CPU setup in an 8+2 configuration, with 8 performance Firestorm cores and 2 efficiency Icestorm cores. We had indicated in our initial coverage that Apple’s new M1 Pro and Max chips appear to be using a similar, if not the same, generation of CPU IP as the M1, rather than the newer-generation cores used in the A15. We can seemingly confirm this, as we’re seeing no apparent changes in the cores compared to what we found on the M1.

The CPU cores clock up to a 3228MHz peak, but vary in frequency depending on how many cores are active within a cluster, clocking down to 3132MHz with 2 cores active, and 3036MHz with 3 or 4 cores active. I say “per cluster” because the 8 performance cores in the M1 Pro and M1 Max consist of two 4-core clusters, each with its own 12MB L2 cache and each able to clock its CPUs independently of the other, so it’s actually possible to have four active cores in one cluster at 3036MHz and one active core in the other cluster running at 3228MHz.
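To make the per-cluster behaviour concrete, here is a minimal Python sketch of the clock table described above. The frequencies are the ones we measured; the names `P_CLUSTER_MHZ`, `cluster_clock_mhz` and `soc_p_clocks` are our own illustrative choices and do not correspond to any Apple interface.

```python
# Peak P-core clock (MHz) by number of active cores in one 4-core cluster,
# using the figures quoted in the article. Names here are illustrative only.
P_CLUSTER_MHZ = {1: 3228, 2: 3132, 3: 3036, 4: 3036}


def cluster_clock_mhz(active_cores: int) -> int:
    """Return the peak clock for a cluster with 1 to 4 active cores."""
    if active_cores not in P_CLUSTER_MHZ:
        raise ValueError("a performance cluster has 1 to 4 active cores")
    return P_CLUSTER_MHZ[active_cores]


def soc_p_clocks(active_per_cluster):
    """Look up each cluster separately, since clusters clock independently.

    A cluster with 0 active cores is reported as None.
    """
    return [cluster_clock_mhz(n) if n else None for n in active_per_cluster]


if __name__ == "__main__":
    # Four busy cores in one cluster, a single busy core in the other.
    print(soc_p_clocks([4, 1]))  # [3036, 3228]
```

The point of modelling the two clusters as separate lookups is that a lightly loaded cluster is not dragged down by a fully loaded neighbour.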

The two E-cores in the system clock at up to 2064MHz. Unlike on the M1, there are only two of them this time around; however, Apple still gives them their full 4MB of L2 cache, same as on the M1 and A-derivative chips.

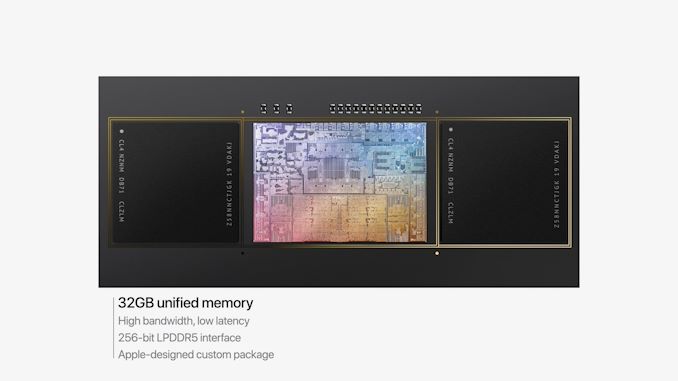

One large feature of both chips is their much-increased memory bandwidth and interfaces – the M1 Pro features 256-bit LPDDR5 memory at 6400MT/s speeds, corresponding to 204GB/s bandwidth. This is significantly higher than the M1 at 68GB/s, and also generally higher than competitor laptop platforms which still rely on 128-bit interfaces.
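As a quick sanity check on that figure, theoretical peak DRAM bandwidth is the bus width in bytes multiplied by the transfer rate. The Python sketch below reproduces the M1 Pro number from the 256-bit and 6400MT/s figures above; the function name `peak_bandwidth_gbs` is our own, and the 128-bit comparison at the same transfer rate is an assumption made purely for illustration, not a measurement of any particular competitor platform.

```python
def peak_bandwidth_gbs(bus_width_bits: int, transfer_rate_mts: int) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (1 GB = 10^9 bytes).

    bus_width_bits / 8 gives bytes moved per transfer; multiplying by the
    transfer rate in MT/s gives MB/s, and dividing by 1000 gives GB/s.
    """
    return bus_width_bits / 8 * transfer_rate_mts / 1000


if __name__ == "__main__":
    # M1 Pro: 256-bit LPDDR5 at 6400MT/s, quoted in the text as 204GB/s.
    print(peak_bandwidth_gbs(256, 6400))  # 204.8

    # Assumption for illustration: a 128-bit interface at the same rate.
    print(peak_bandwidth_gbs(128, 6400))  # 102.4
```

This is a ceiling rather than a measured number; how much of it individual blocks on the chip can actually use is a separate question.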

We’ve been able to identify the “SLC”, or system level cache as we call it, as coming in at 24MB for the M1 Pro and 48MB for the M1 Max. That is a bit smaller than what we initially speculated, but it makes sense given the SRAM die area, and it represents a 50% increase over the per-block SLC on the M1.

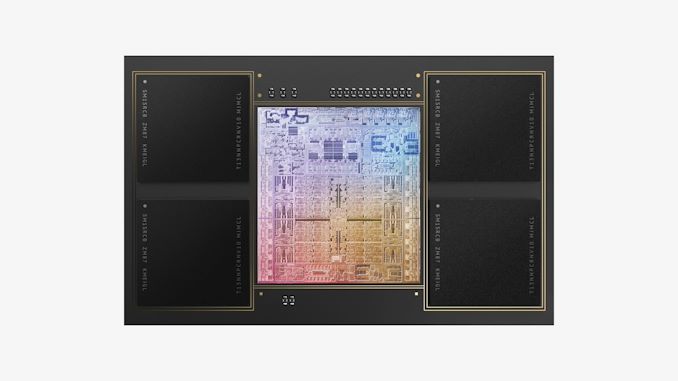

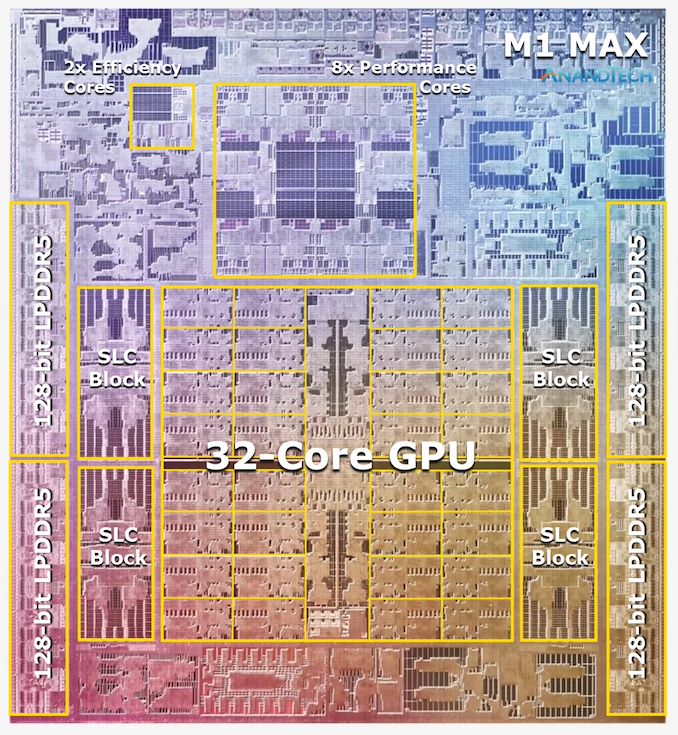

The M1 Max: A 32-Core GPU Monstrosity at 57bn Transistors

Above the M1 Pro we have Apple’s second new M1 chip, the M1 Max. The M1 Max is essentially identical to the M1 Pro in terms of architecture and in many of its functional blocks – but what sets the Max apart is that Apple has equipped it with much larger GPU and media encode/decode complexes. Overall, Apple has doubled the number of GPU cores and media blocks, giving the M1 Max virtually twice the GPU and media performance.

The GPU and memory interfaces of the chip are by far its most differentiated aspects: instead of a 16-core GPU, Apple doubles things up to a 32-core unit. On the M1 Max we tested today, the GPU runs at up to 1296MHz, which is quite fast for what we consider mobile IP, but still significantly slower than what we’ve seen in the conventional PC and console space, where GPUs can now run at up to around 2.5GHz.

Apple also doubles up on the memory interfaces, using a whopping 512-bit wide LPDDR5 memory subsystem – unheard of in an SoC and even rare amongst historical discrete GPU designs. This gives the chip a massive 408GB/s of bandwidth – how this bandwidth is accessible to the various IP blocks on the chip is one of the things we’ll be investigating today.

The memory controller caches are at 48MB in this chip, allowing for theoretically amplified memory bandwidth for various SoC blocks as well as reducing off-chip DRAM traffic, thus also reducing power and energy usage of the chip.

Apple’s die shot of the M1 Max was a bit weird initially in that we weren’t sure if it actually represents physical reality. On the bottom part of the chip in particular, we had noted what appears to be a doubled-up NPU, something Apple doesn’t officially disclose. A doubled-up media engine makes sense, as that’s part of the features of the chip; however, until we can get a third-party die shot to confirm that this is indeed what the chip looks like, we’ll refrain from speculating further in this regard.

493 Comments

JfromImaginstuff - Monday, October 25, 2021 - link

Huh, nice

Kangal - Monday, October 25, 2021 - link

What isn't nice is gaming on macOS. We all know how bad emulation is, and whilst Apple seems to have pulled "magic" with their implementation of Metal/Rosetta2's hybrid-translation strong performance.... at the end of the day it isn't enough.

The M1X is slightly slower than the RTX-3080, at least on-paper and in synthetic benchmarks. This is the sort of hardware that we've been denied for the past 3 years. Should be great. It isn't. When it comes to the actual Gaming Performance, the M1X is slightly slower than the RTX-3060. A massive downgrade.

The silver lining is that developers will get excited, and we might see some AAA-ports over to the macOS system. Even if it's the top-100 games (non-exclusives), and if they get ported over natively, it should create a shock. We might see designers then developing games for PS5, XSX, OSX and Windows. And maybe SteamOS too. And in such a scenario, we can see native-coded games tapping into the proper M1X hardware, and show impressive performance.

The same applies for professional programs for content creators.

at_clucks - Monday, October 25, 2021 - link

"The silver lining is that developers will get excited, and we might see some AAA-ports over to the macOS"

I think that's their whole point. Make developers optimize for Mac knowing that gamers would very likely choose to have their performant gaming machine in a Mac format (light, cool, low power) rather than in a hot and heavy DTR format if they had the choice of natively optimized games.

bernstein - Monday, October 25, 2021 - link

we now have 3 primary gpu api‘s:

- directx (xbox, windows)

- vulkan (ps5, switch, steamos, android)

- metal (macos, ios & derivates)

Because they’re all low level & similar, most bigger engines support them all.

There used to be two for pc, one for mobile and three for consoles. And vastly different ones at that.

So it will come down to the addressable market and how fast apple evolves the api‘s. Historically windows, with its build once run two decades later has made it much much easier on devs.

yetanotherhuman - Tuesday, October 26, 2021 - link

"how fast apple evolves the api‘s"

That'll be very slow, given their history. Why they invented another API, I have no idea. Vulkan could easily be universal. It runs on Windows, which you didn't note, with great results.

Dribble - Tuesday, October 26, 2021 - link

Vulkan is too low level, it assumes nothing which means you have to write a ton of code to get to the level of Metal which assumes you have an apple device. If metal/dx are like writing in assembly language, for vulkan you start off with just machine code and have to write your own assembler first. Hence it's not really a great language to work with, if you were working with apple then metal is so much nicer.

Gracemont - Wednesday, October 27, 2021 - link

Vulkan is too low level? It’s literally comparable to DX12. Like bruh, if anything the Metal API is even more low level for Apple devices cuz of it being built specifically for Apple devices. Just like how the NVAPI for the Switch is the lowest level API for that system cuz it was specifically tailored for that system, not Vulkan.

Ppietra - Wednesday, October 27, 2021 - link

Gracemont, the Metal API was already being used with Intel and AMD GPUs, so not exactly a measure of "low level"

NPPraxis - Tuesday, October 26, 2021 - link

"Why they invented another API, I have no idea. Vulkan could easily be universal."

You're misremembering the history. Metal predates Vulkan.

Apple was basically stuck with OpenGL for a long time, which fell further and further behind as DirectX got lower level and faster. That made all of Apple's devices at a huge gaming handicap.

Then Apple invented Metal for iOS in 2014 which gave them a huge performance rendering lead on mobile devices.

They let the Mac languish for a couple years, not even updating the OpenGL version. Macs got worse and worse for games. In 2016, Vulkan came out. People speculated that Apple could adopt it.

In 2017, Apple released Metal 2 which was included in the new MacOS.

Basically, Apple had to pick between unifying MacOS (Metal) with iOS or with Linux gaming (Vulkan). Apple has gotten screwed over before by being reliant on open source third parties that fell further and further behind (OpenGL, web browsers before they helped build WebKit, etc) so it's kind of understandable that they went the Metal-on-MacOS direction since they had already built it for iOS.

I still wish Apple would add support for it (Mac: Metal and Vulkan, Windows: DirectX and Vulkan, Linux: Vulkan only), because it would really help destroy any reason for developers to target DirectX first, but I understand that they really want to push devs to Metal to make porting to iOS easier.

Eric S - Friday, October 29, 2021 - link

Everyone has their own graphics stack: Microsoft, Sony, Apple, and Nintendo all have proprietary stacks. Vulkan wants to change that, but that doesn’t solve everything. Developers still need to optimize for differences in GPUs. Apple is looking for full vertical integration, which having their own stack helps with.