Wildcat Realizm 800: 3Dlabs MultiGPU First Look

by Derek Wilson on March 25, 2005 10:00 AM EST - Posted in GPUs

Inside the Wildcat Realizm 800

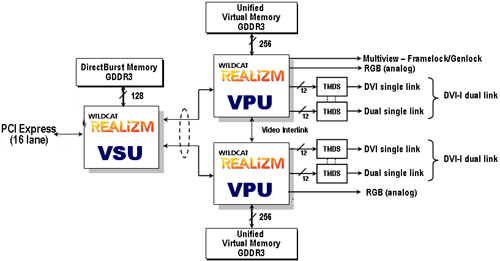

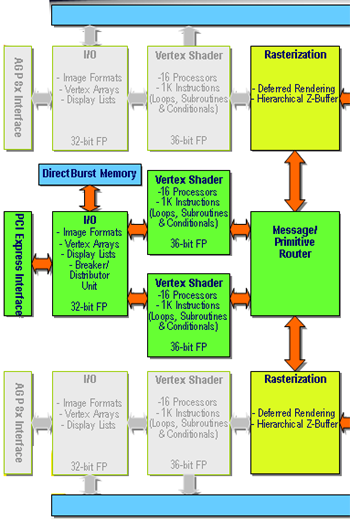

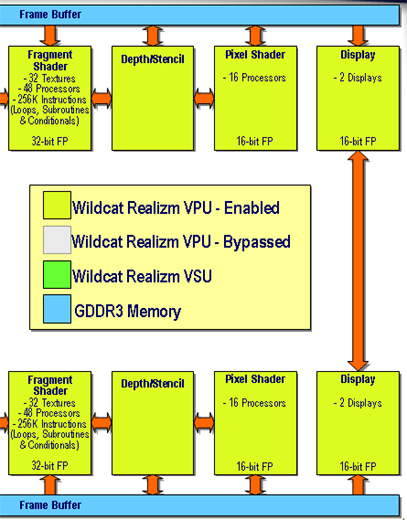

The first thing we noticed about the Wildcat Realizm 800 was its size. This is a full-length card in every sense. Because it carries two of the GPUs featured on the Realizm 200, the 800 needs plenty of space for silicon, RAM, and all the associated routing. For background on the GPUs referenced in this article, take a peek at our architectural analysis of the Wildcat Realizm 200.

The two GPUs are connected by a discrete vertex unit that 3Dlabs calls a Vertex/Scalability Unit (VSU). This bit of hardware is responsible for the card's PCI Express interface, geometry processing for the entire scene, and splitting the workload into two parts. The VSU also has its own 128MB of GDDR3 "DirectBurst" memory, attached over a 128-bit wide interface, which stores rendering commands and geometry data for the VSU.


NVIDIA's SLI solution uses separate framebuffers that are either combined every frame (split-frame rendering) or used in alternating fashion (alternate-frame rendering), and that design brings compatibility limitations that prevent all graphics from benefiting equally from the technology. Situations can come up where one GPU needs data from a previous frame, or from part of the current frame, that is being rendered on the other GPU. Without a unified framebuffer, getting that data where it is needed is logistically more difficult than it needs to be.
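To make the dependency problem concrete, here is a minimal sketch assuming a simple alternate-frame rendering scheme in which frame `n` goes to GPU `n % 2`. The `Frame` type, its `reads_previous` flag, and the helper functions are hypothetical; the flag stands in for effects such as render-to-texture that sample the previous frame's output. The sketch counts how often a frame needs data sitting in the other GPU's framebuffer.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    index: int
    # Hypothetical flag: True if this frame samples the previous frame's output
    # (for example, a render-to-texture or motion-blur style effect).
    reads_previous: bool


def afr_gpu(frame_index: int, num_gpus: int = 2) -> int:
    """Alternate-frame rendering: frames are dealt round-robin to the GPUs."""
    return frame_index % num_gpus


def cross_gpu_dependencies(frames: list[Frame], num_gpus: int = 2) -> int:
    """Count frames whose required previous-frame data lives on a different GPU."""
    count = 0
    for frame in frames:
        if frame.reads_previous and frame.index > 0:
            producer = afr_gpu(frame.index - 1, num_gpus)
            consumer = afr_gpu(frame.index, num_gpus)
            if producer != consumer:
                count += 1
    return count


# Hypothetical workload: every third frame reads the frame before it.
frames = [Frame(i, reads_previous=(i % 3 == 0)) for i in range(12)]
print(cross_gpu_dependencies(frames))               # two GPUs
print(cross_gpu_dependencies(frames, num_gpus=1))   # single GPU for comparison
```

With two GPUs under AFR, consecutive frames always land on different chips, so every previous-frame read in this toy workload has to cross from one framebuffer to the other; with a single GPU the count drops to zero.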

So, the VSU splits the frame first, then processes the graphics and sends fragment data on to the GPUs over two 64-bit parallel interfaces. It's unclear whether this is a physically different interface from the AGP interface already present on each GPU. If 3Dlabs did reuse that interface, it's definitely running faster than normal, delivering 4.2GB/s from the VSU to each GPU.
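The 4.2GB/s figure can be sanity-checked with simple arithmetic. The sketch below is a back-of-the-envelope calculation, not anything 3Dlabs has published: it computes the transfer rate a 64-bit link needs to reach 4.2GB/s and compares it with standard AGP 8x, which moves 32 bits per transfer at eight transfers per 66.67MHz clock. The function names are illustrative only.

```python
AGP_BASE_CLOCK_HZ = 66.67e6      # AGP base clock
AGP_8X_TRANSFERS_PER_CLOCK = 8   # AGP 8x performs eight transfers per clock
AGP_BUS_WIDTH_BITS = 32          # standard AGP data bus width


def bandwidth_bytes_per_s(width_bits: int, transfers_per_s: float) -> float:
    """Peak bandwidth = bytes per transfer * transfers per second."""
    return width_bits / 8 * transfers_per_s


def required_transfer_rate(bandwidth: float, width_bits: int) -> float:
    """Transfers per second needed to reach a target bandwidth on a bus of this width."""
    return bandwidth / (width_bits / 8)


agp8x_rate = AGP_BASE_CLOCK_HZ * AGP_8X_TRANSFERS_PER_CLOCK
agp8x_bw = bandwidth_bytes_per_s(AGP_BUS_WIDTH_BITS, agp8x_rate)
vsu_rate = required_transfer_rate(4.2e9, 64)  # 4.2GB/s figure from the article

print(f"AGP 8x peak: {agp8x_bw / 1e9:.2f} GB/s at {agp8x_rate / 1e6:.0f} MT/s")
print(f"64-bit link at 4.2 GB/s needs {vsu_rate / 1e6:.0f} MT/s")
print(f"AGP 8x rate on a 64-bit bus: {bandwidth_bytes_per_s(64, agp8x_rate) / 1e9:.2f} GB/s")
```

The result is that 4.2GB/s is roughly twice standard AGP 8x bandwidth (about 2.13GB/s), and it is about what a 64-bit bus would carry at roughly AGP 8x transfer rates (about 525 versus 533 MT/s).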

Each GPU then handles the rest of the pipeline as it would normally have done on the Wildcat Realizm 200.
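The flow described above, with geometry processed once on the VSU and the work then divided between two GPUs, resembles what is usually called sort-first parallel rendering. 3Dlabs has not said how the VSU actually divides a frame, so this sketch simply assumes a fixed top/bottom split of the screen. The `Triangle` type and `split_work` function are hypothetical names for illustration.

```python
from typing import NamedTuple


class Triangle(NamedTuple):
    # Screen-space vertex coordinates after the geometry stage.
    xs: tuple[float, float, float]
    ys: tuple[float, float, float]


def split_work(triangles: list[Triangle], screen_height: int) -> tuple[list[Triangle], list[Triangle]]:
    """Hypothetical sort-first split: top half of the screen to GPU 0, bottom half to GPU 1.

    A triangle whose vertical extent straddles the split line is sent to both
    GPUs; each GPU would then rasterize only the pixels in its own half.
    """
    split_y = screen_height / 2
    gpu0: list[Triangle] = []
    gpu1: list[Triangle] = []
    for tri in triangles:
        top, bottom = min(tri.ys), max(tri.ys)
        if top < split_y:       # touches the top half
            gpu0.append(tri)
        if bottom >= split_y:   # touches the bottom half
            gpu1.append(tri)
    return gpu0, gpu1


tris = [
    Triangle((0, 10, 5), (0, 0, 100)),       # entirely in the top half of a 1200-line screen
    Triangle((0, 10, 5), (900, 900, 1100)),  # entirely in the bottom half
    Triangle((0, 10, 5), (500, 500, 700)),   # straddles the split at y = 600
]
gpu0, gpu1 = split_work(tris, screen_height=1200)
print(len(gpu0), len(gpu1))
```

In a scheme like this, triangles that straddle the boundary are handled by both chips, which is one general reason a two-way split does not yield a perfect 2x.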

Before we head to the numbers, we'll lead off by saying that doubling the hardware doesn't usually result in a linear 2x performance increase. 3Dlabs still has not disclosed core clock speeds to us, and it may well be the case that the WR800 runs its GPUs at slightly lower clocks than the 200. For some of our numbers, it's difficult to tell exactly why performance looks the way it does, but we will do our best to explain our results.
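One way to quantify how far a dual-GPU board falls short of linear scaling is scaling efficiency: the speedup over a single-GPU board divided by the number of GPUs. The sketch below also folds in a hypothetical clock ratio, since lower clocks cap the best case. The frame rates and the 0.9 clock ratio are placeholders, not results from this article.

```python
def ideal_speedup(num_gpus: int, clock_ratio: float = 1.0) -> float:
    """Best-case speedup with a perfect work split, assuming performance scales
    with core clock and each GPU runs at clock_ratio times the single-GPU clock."""
    return num_gpus * clock_ratio


def scaling_efficiency(multi_fps: float, single_fps: float, num_gpus: int = 2) -> float:
    """Measured speedup divided by GPU count; 1.0 means perfectly linear scaling."""
    return (multi_fps / single_fps) / num_gpus


# Placeholder frame rates, not measurements from this article.
single_fps, dual_fps = 50.0, 80.0
print(f"speedup: {dual_fps / single_fps:.2f}x")
print(f"efficiency: {scaling_efficiency(dual_fps, single_fps):.0%}")
# Hypothetical: if the dual-GPU board's clocks were 10% lower,
# even a perfect split would top out below 2x.
print(f"ideal at 0.9x clock: {ideal_speedup(2, 0.9):.2f}x")
```

Keeping the clock term separate from measured efficiency shows why undisclosed clock speeds make results hard to interpret: a board could split its work well and still look inefficient if its GPUs run slower than the single-GPU part.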

Test

Here are our test system setups:

| Performance Test Configuration | |
|---|---|
| Processor(s): | 2 x AMD Opteron 250; AMD Opteron 150; AMD Athlon 64 FX-53 |
| RAM: | 4 x 512MB OCZ PC3200 EL ECC Registered (2 per CPU); 2 x 1024MB Kingston PC3200 ECC Registered; 2 x 1024MB OCZ PC3200 EB Platinum Edition |
| Hard Drive(s): | Seagate 120GB 7200RPM IDE (8MB Buffer) |
| Motherboard & IDE Bus Master Drivers: | AMD 8131 APIC Driver; NVIDIA nForce 5.10; NVIDIA nForce 6.10 |
| Video Card(s): | ATI FireGL V5000; 3Dlabs Wildcat Realizm 200; ATI FireGL X3-256; NVIDIA Quadro FX 4000; HIS Radeon X800 XT Platinum Edition IceQ II; Prolink GeForce 6800 Ultra Golden Limited |
| Video Drivers: | 3Dlabs 4.04.0757 Driver; ATI FireGL 8.083 Driver; NVIDIA Quadro 70.41 (Beta); NVIDIA ForceWare 67.03 (6800U); ATI Catalyst 4.12 (X800) |
| Operating System(s): | Windows XP Professional SP2 |
| Motherboards: | HP WX9300 (nForce Professional); IWill DK8N v1.0 (AMD-81xx + NVIDIA nForce 3); DFI LANParty UT nF4 Ultra-D (for FireGL V5000) |
| Power Supply: | 600W OCZ Powerstream PSU |

27 Comments


Athlex - Tuesday, April 5, 2005

I know this is a preview, but this article seemed a bit thin: no pictures of the actual hardware, no screenshots of driver config screens. Would that break an NDA or something? Also, are "default professional settings" the same across brands? Seems like that might skew results if the driver defaults to different values between ProE/Solidworks/Maya, etc. Maybe a subjective appraisal of display quality could be part of this? Do these DVI ports also do analog output, or is that unavailable with a dual-link DVI port?

Might also be fun to see an OpenGL game benchmark on the pro cards to contrast the game cards running OpenGL apps...

Can't wait to see the roundup!

BikeDude - Thursday, March 31, 2005

I too would like to see some game tests. I want a dual-link capable PCIe card and this effectively rules out all of the consumer cards! Before I fork out a lot more money for a "professional" card, I'd sure as hell like to know that I would be able to play a mean game of Doom3 on the darn thing... (and using Photoshop is a priority as well)--

Rune

Zebo - Wednesday, March 30, 2005

Why are there no game tests??? Life's not all about work ya know.. stop and smell the roses.. especially if you have an office door like me.

Calin - Tuesday, March 29, 2005

The "Professional 3D" cards have OpenGL performance several times greater than what you can obtain from consumer cards (by consumer I mean pro gaming). Even ATI's cards for professional 3D (FireGL series) are several times faster in OpenGL than their gaming counterparts.

Also, "professional 3D" cards really need high resolution/high refresh rate outputs, and multiple outputs.

Draven31 - Tuesday, March 29, 2005

The difference is in the types of instructions used most often and the precision of many operations. And, more often than not in 3D, the number of polygons involved.

JustAnAverageGuy - Saturday, March 26, 2005

Tom's had a good comparison. It's a little over twice the size of a dollar bill. :)

http://www.tomshardware.com/business/20040813/sigg...

cryptonomicon - Saturday, March 26, 2005

holy crap, that board is huge

Jkames - Saturday, March 26, 2005

arg! I meant to say "Are the differences internal instructions?"

Jkames - Saturday, March 26, 2005

I mean that are the differences internal instructions.

Jkames - Saturday, March 26, 2005

What is the difference between workstation hardware and desktop hardware? I understand that workstation hardware is more expensive and used for professional applications, but would anyone be able to elaborate on the uses of workstation hardware? Is it the internal instructions such as MMX or junk like that? Any info would be appreciated, thanx.