The Samsung SSD 980 (500GB & 1TB) Review: Samsung's Entry NVMe

by Billy Tallis on March 9, 2021 10:00 AM EST

Advanced Synthetic Tests

Our benchmark suite includes a variety of tests that are less about replicating any real-world IO patterns, and more about exposing the inner workings of a drive with narrowly-focused tests. Many of these tests will show exaggerated differences between drives, and for the most part that should not be taken as a sign that one drive will be drastically faster for real-world usage. These tests are about satisfying curiosity, and are not good measures of overall drive performance. For more details, please see the overview of our 2021 Consumer SSD Benchmark Suite.

Whole-Drive Fill

Charts: Pass 1, Pass 2

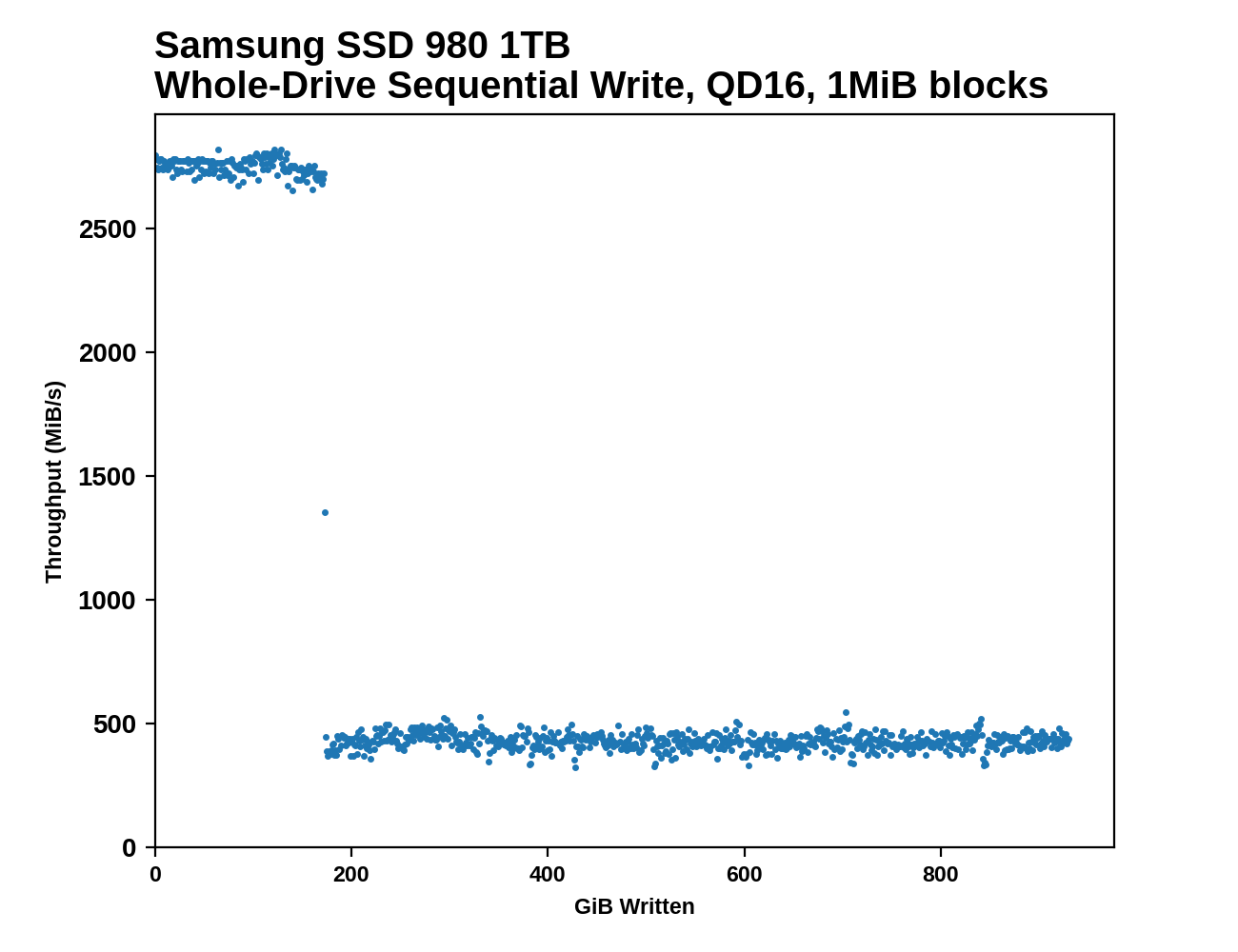

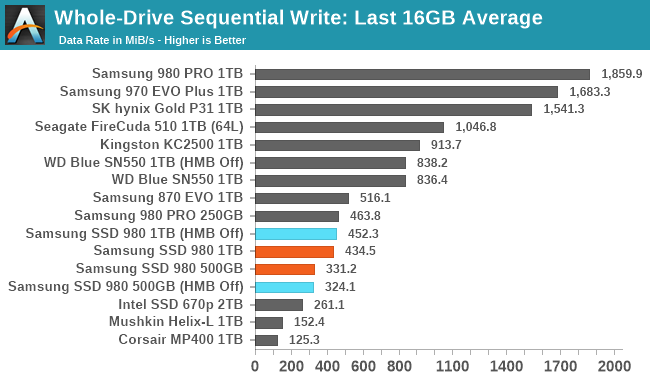

The Samsung SSD 980 clearly has larger SLC caches than the 970 EVO Plus or 980 PRO, as advertised. But post-cache performance is about a third that of the 970 EVO Plus when comparing the 1TB models, and the 500GB 980 is even slower post-cache. The apparent cache sizes are about 100GB for the 500GB model and 173GB for the 1TB model: a bit larger than advertised for the 1TB and a bit smaller than advertised for the 500GB. On the second pass of filling the drives, the 1TB 980 never gets back up to full SLC cache speed, and the 500GB model only does so for about 5GB. Samsung didn't give specs for the minimum SLC cache size, but it's clear there's not much left when the 980 is full.
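As a rough illustration of how an "apparent cache size" can be read off a fill trace, here is a minimal Python sketch. It assumes the trace is a list of average write speeds, one sample per GB written, and it treats the first sample that falls below half of the initial speed as the end of the cache. The function name, the 50% threshold and the sample trace are all assumptions made for illustration; they are not the method or data used for the charts above.

```python
from typing import Optional, Sequence


def estimate_cache_size_gb(
    throughput_mbps: Sequence[float],
    gb_per_sample: float = 1.0,
    drop_fraction: float = 0.5,
    baseline_samples: int = 4,
) -> Optional[float]:
    """Estimate how many GB were written before write speed fell off.

    The baseline is the mean of the first few samples. The cache is
    considered exhausted at the first sample slower than
    drop_fraction * baseline. Returns None if no such drop occurs.
    """
    if len(throughput_mbps) < baseline_samples:
        raise ValueError("trace is shorter than the baseline window")

    baseline = sum(throughput_mbps[:baseline_samples]) / baseline_samples
    threshold = baseline * drop_fraction

    for index, speed in enumerate(throughput_mbps):
        if speed < threshold:
            # 'index' samples were written at cache speed before the drop.
            return index * gb_per_sample
    return None


# Made-up trace: 100 GB at 2000 MB/s, then 50 GB at 400 MB/s.
synthetic_trace = [2000.0] * 100 + [400.0] * 50
print(estimate_cache_size_gb(synthetic_trace))  # 100.0
```

A real trace is noisier than this, so a fixed threshold can trigger early on a brief stall; smoothing the samples first would be a reasonable refinement.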

Charts: Average Throughput for last 16 GB, Overall Average Throughput

The 1TB SSD 980's performance on the whole-drive fill averages out to just a hair slower than the 1TB 870 EVO, and the 500GB model is about 25% slower than that. It's clear that the narrower 4-channel controller and the lack of DRAM are both contributing to the lower write throughput of the SSD 980 as compared with Samsung's high-end NVMe drives.
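The article does not say how the two averages are computed, so the following Python sketch is only one plausible way to do it. It assumes each sample is the average speed while writing a fixed amount of data, in which case the overall average is total data divided by total time rather than a plain mean of the samples. The function names and the sample trace are hypothetical.

```python
from typing import Sequence, Tuple


def average_throughput(speeds_mbps: Sequence[float], mb_per_sample: float = 1024.0) -> float:
    """Average MB/s over samples that each cover mb_per_sample of data."""
    if not speeds_mbps or any(speed <= 0 for speed in speeds_mbps):
        raise ValueError("need at least one sample, all speeds positive")
    total_mb = mb_per_sample * len(speeds_mbps)
    # Time spent on each chunk is its size divided by its speed.
    total_seconds = sum(mb_per_sample / speed for speed in speeds_mbps)
    return total_mb / total_seconds


def fill_averages(
    speeds_mbps: Sequence[float],
    gb_per_sample: float = 1.0,
    tail_gb: float = 16.0,
) -> Tuple[float, float]:
    """Return (overall average, average over the last tail_gb) in MB/s."""
    mb_per_sample = gb_per_sample * 1024.0
    tail_samples = max(1, round(tail_gb / gb_per_sample))
    overall = average_throughput(speeds_mbps, mb_per_sample)
    tail = average_throughput(speeds_mbps[-tail_samples:], mb_per_sample)
    return overall, tail


# Made-up trace: half the drive at 2000 MB/s, the other half at 500 MB/s.
overall, tail = fill_averages([2000.0] * 100 + [500.0] * 100)
print(f"overall: {overall:.0f} MB/s, last 16 GB: {tail:.0f} MB/s")
```

With this made-up trace the overall figure comes out at 800 MB/s, well below the 1250 MB/s that a simple mean of the samples would suggest, because the drive spends far more time in the slow phase.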

Working Set Size

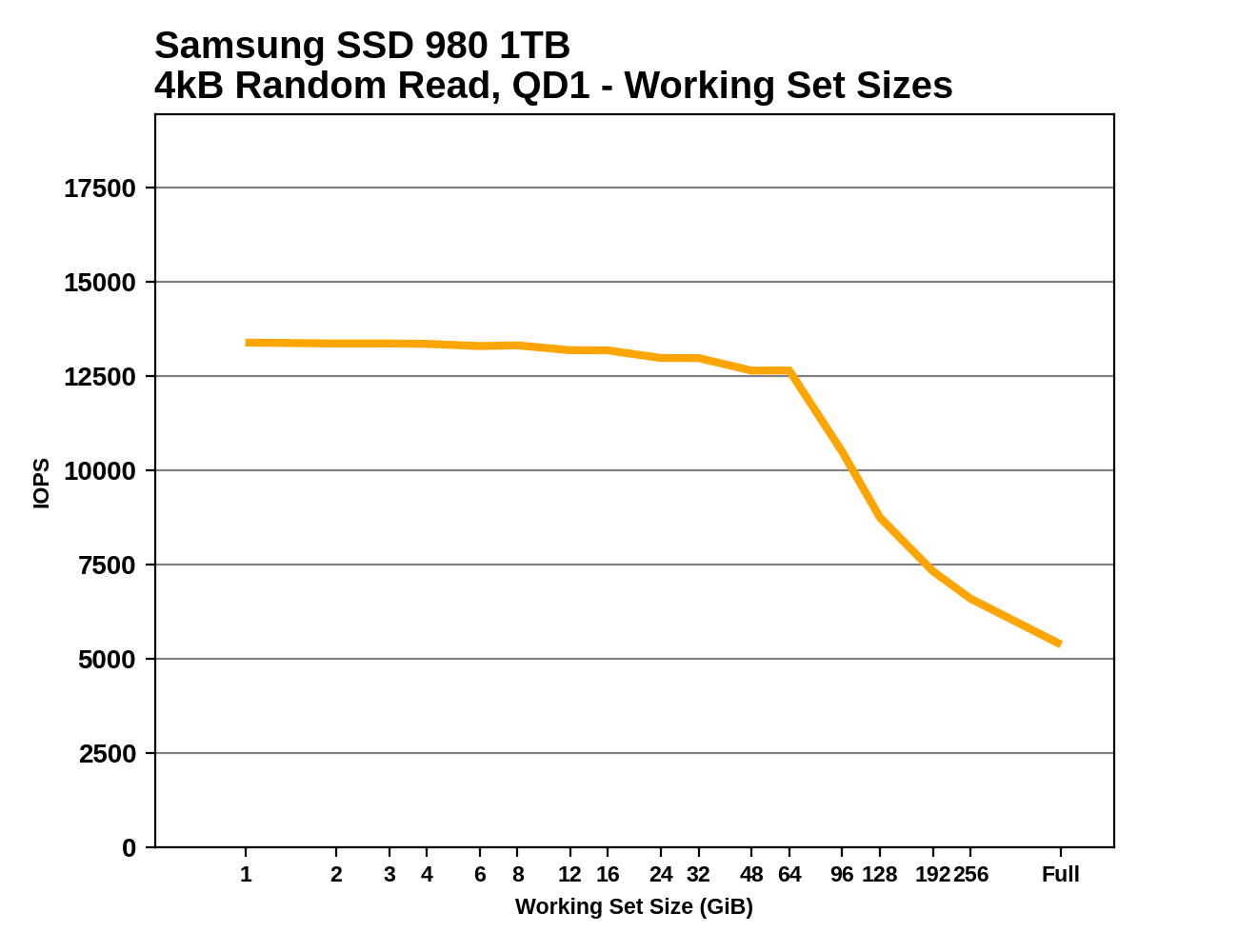

The 64MB Host Memory Buffer used by the SSD 980 is very clearly illustrated by this test. Without HMB enabled, the 980's random read performance suffers even for small working set sizes of just a few GB, indicating there's very little on-controller RAM. For extremely large working set sizes, the 980's performance drops below the SATA 870 EVO.

The WD Blue SN550 shows slightly worse random read performance than the SSD 980 for smaller working set sizes, and its smaller HMB allocation means performance starts dropping sooner. But at large working set sizes or with HMB off, the SN550 retains more performance, and the SN550 seems to have more on-chip RAM for use when HMB is unavailable.
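For a sense of scale, the Python sketch below estimates how much flash a host memory buffer could cover if it held nothing but a flat logical-to-physical table. It assumes 4 bytes per entry and one entry per 4kB page, a common rule of thumb for SSD mapping tables. The review does not describe the 980's actual table format or how much of the 64MB it uses for mapping, so treat the result as an upper-bound illustration and not a spec.

```python
def mapped_capacity_gib(buffer_mib: float, entry_bytes: int = 4, page_kib: int = 4) -> float:
    """GiB of flash addressable by a flat mapping table of buffer_mib MiB.

    Assumption: one entry_bytes-sized entry per page_kib KiB page, and the
    whole buffer is used for mapping entries.
    """
    entries = buffer_mib * 1024 * 1024 / entry_bytes
    mapped_kib = entries * page_kib
    return mapped_kib / (1024 * 1024)


print(mapped_capacity_gib(64))  # 64.0
```

Under those assumptions a 64MB buffer covers 64GiB of flash, which is why a working set much larger than the buffer's reach would be expected to push lookups back to the flash itself.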

Performance vs Block Size

Charts: Random Read, Random Write, Sequential Read, Sequential Write

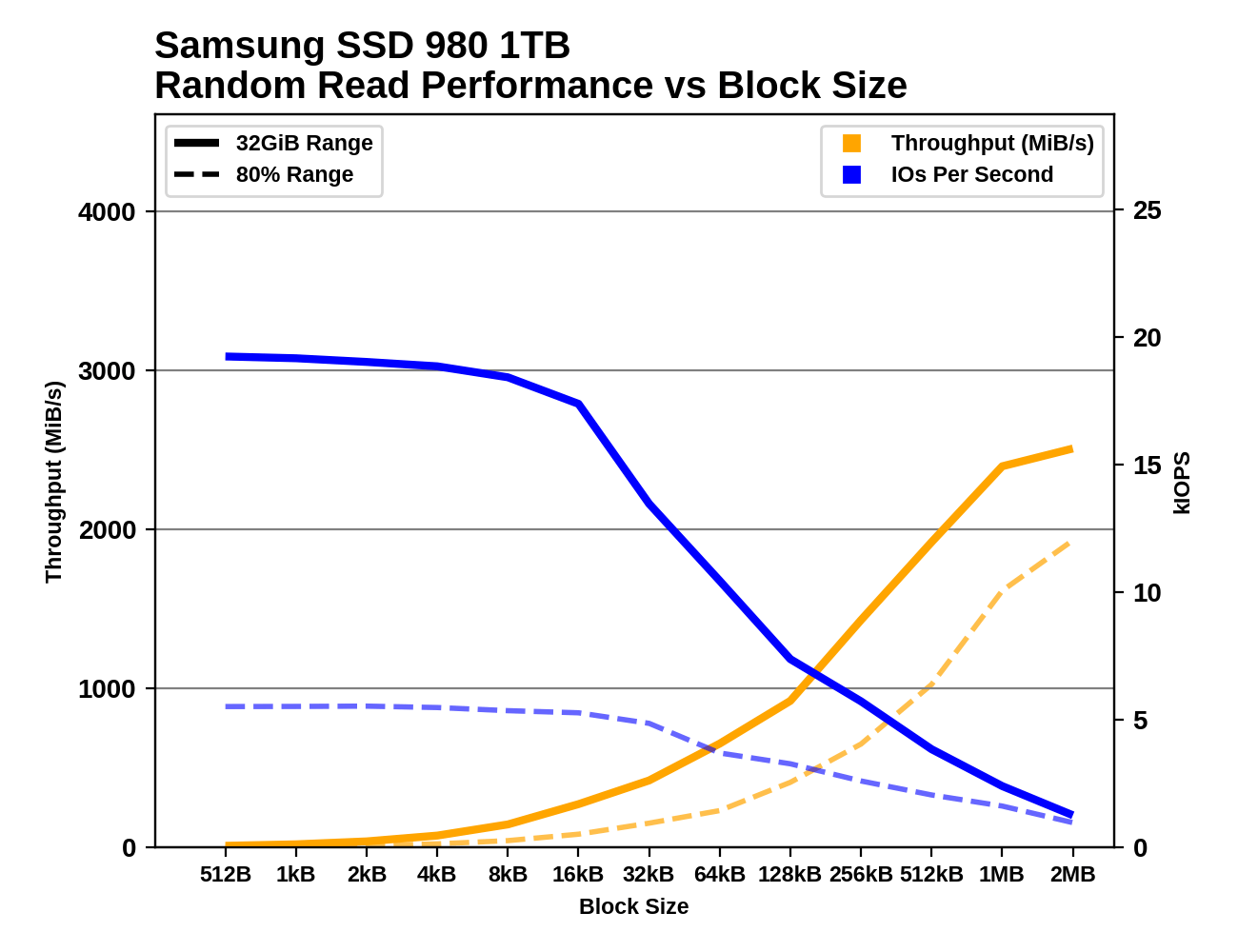

The Samsung SSD 980 maintains high random read IOPS up to block sizes of 16kB, and throughput starts to saturate once the block size is up to about 1MB. For random writes, IOPS starts dropping after 4kB, or after just 2kB when the test is hitting 80% of the drive. With HMB off, the 980 shows a clear preference for 4kB block size for random writes. Sequential reads show odd behavior for block sizes from 32kB to 128kB, with performance stalling then regressing despite increased block size. This happens for both capacities, with or without HMB, so it's a systemic quirk—and an unfortunate one, since 128kB is the usual block size for sequential IO benchmarks. For sequential writes the SSD 980 is well-behaved with smooth scaling of throughput as block sizes increase. Neither capacity of the SSD 980 runs into SLC cache size limits during these tests.

By contrast, the WD Blue SN550 shows much stronger signs of optimization specifically for 4kB block sizes: the effect is small for random reads but huge for random or sequential writes. Sub-4kB accesses are generally not worth the trouble on the SN550. This drive also appears to occasionally run out of SLC cache during the random write block size testing.
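The block size discussion above moves between IOPS and throughput, which are tied together by block size. The short Python sketch below shows the conversion; the 100,000 IOPS figure is a made-up placeholder and not a measurement from this review.

```python
def throughput_mbps(iops: float, block_size_bytes: int) -> float:
    """Throughput in MB/s (decimal megabytes) for a given IOPS and block size."""
    return iops * block_size_bytes / 1_000_000


# Hypothetical drive holding a flat 100,000 IOPS across small block sizes.
for block_size_kib in (4, 8, 16):
    mbps = throughput_mbps(100_000, block_size_kib * 1024)
    print(f"{block_size_kib:>2} kB blocks: {mbps:.1f} MB/s")
```

If IOPS stays flat while block size doubles, throughput doubles with it. Once throughput stops growing, IOPS has to fall in proportion to any further increase in block size.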

54 Comments

WaltC - Tuesday, March 9, 2021 - link

Trying to fathom what "retail-ready" means...;) All of my Samsung NVMe drives--yes, even those with "pro" and "evo" in the names--were purchased at retail. Why is this drive anymore "retail-ready" than a 980 Pro, for instance, also sold at retail. Perhaps you meant to say, Samsung's "value drive," or "low-cost NVMe segment," etc, as it is no more "retail-ready" than any of Samsung's other drives--which are all sold at retail.

WaltC - Tuesday, March 9, 2021 - link

I did see the "entry-level" qualifier, however. But I'm not aware that Samsung has ever manufactured an SSD that was not "retail-ready."

linuxgeex - Tuesday, March 9, 2021 - link

Along with "entry-level"... Anandtech really should have benchmarked this drive on a dual-core 6th gen laptop or 8th-gen 1L desktop minipc, because that's the kind of devices this is going to land in. And using the host memory for the cache and the extra driver bloat is going to hurt those weak machines. I am certain that a SATA M.2 with DRAM would end up outperforming this for most of the actual retail customers. Where this is a fabulous deal is in HEDT machines that want a lot of cheap SSD storage to act as front cache for a RAID, because they have RAM and cores to spare, so the loss of a relatively piddling amount of RAM to the host cache and driver bloat when they have 16-32x the RAM, isn't such a big deal.

Billy Tallis - Tuesday, March 9, 2021 - link

I think you're vastly overestimating what's involved in making HMB work. It's a straightforward feature for the host OS to support and does not "bloat" the NVMe driver. Allocating a 64MB buffer out of the host RAM is a drop in the bucket, or: two frames of 4k image data. The SSD will only touch the HMB a few times per IO, plus maybe a bit more often when doing heavy background operations. The total PCIe bandwidth used by HMB is vastly smaller than the bandwidth used for transferring user data to and from the SSD. That means HMB is using an even more negligible fraction of the CPU's DRAM bandwidth. The CPU execution time used by HMB is exactly zero. The resource requirements of HMB are so low that UFS has copied the feature to accelerate smartphone storage.

Byte - Monday, August 30, 2021 - link

The HMB helps track the LBA which is why most SATA drives suffer so much when there is no DRAM, it takes much longer to search for the block. With HMB PCIE SSDs will suffer much much less being DRAMless than an SATA SSD.

SarahKerrigan - Tuesday, March 9, 2021 - link

The article refers to it as retail-ready entry-level. As opposed to OEM entry-level, which Samsung has produced in the past.

alfalfacat - Tuesday, March 9, 2021 - link

Indeed, some may remember the PM981 OEM drive that came out like 6mo before the 970 EVO, which you could get if you were willing to forgo the retail package. https://www.anandtech.com/show/12670/the-samsung-9...

antonkochubey - Tuesday, March 9, 2021 - link

Also forgo firmware updates and 5 year warranty.

serendip - Thursday, March 11, 2021 - link

Samsung now has a bunch of OEM drives like the PM991, PM991a and PM9A1. https://www.samsung.com/semiconductor/ssd/client-s...

linuxgeex - Tuesday, March 9, 2021 - link

exactly - retail-channel-ready.