A Broadwell Retrospective Review in 2020: Is eDRAM Still Worth It?

by Dr. Ian Cutress on November 2, 2020 11:00 AM EST

Gaming Tests: Civilization 6

Originally penned by Sid Meier and his team, the Civilization series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer underflow. Truth be told, I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up but hard to master.

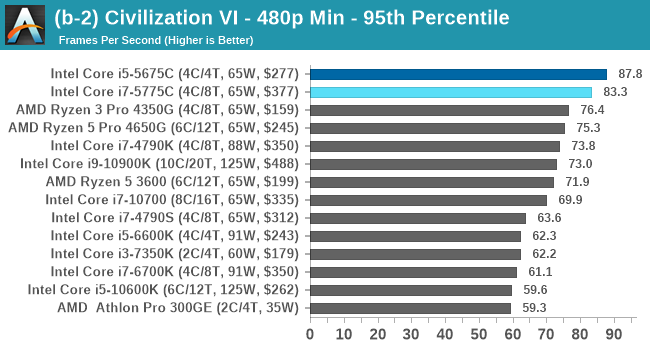

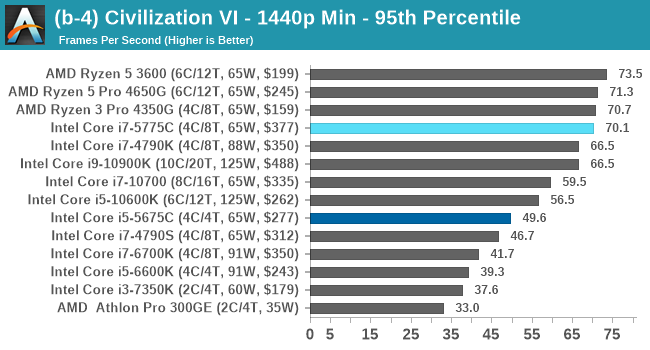

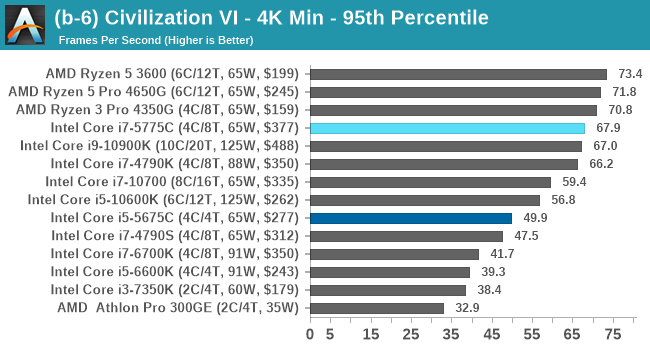

Benchmarking Civilization has always been somewhat of an oxymoron: for a turn-based strategy game, the frame rate is not necessarily the important thing, and in the right mood, something as low as 5 frames per second can be enough. With Civilization 6, however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics and CPUs as we crank up the details, especially in DirectX 12.

For this benchmark, we are using the following settings:

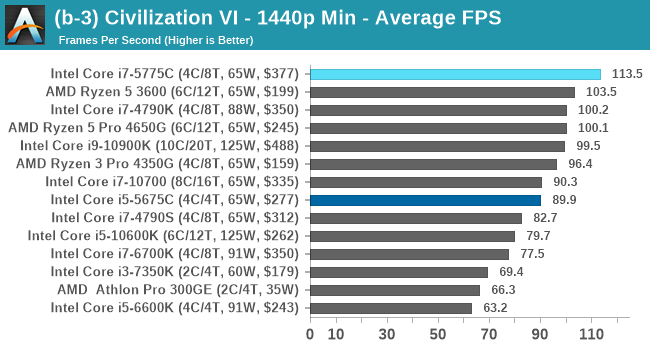

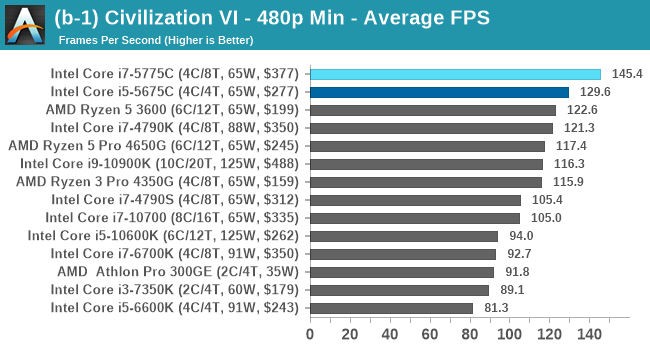

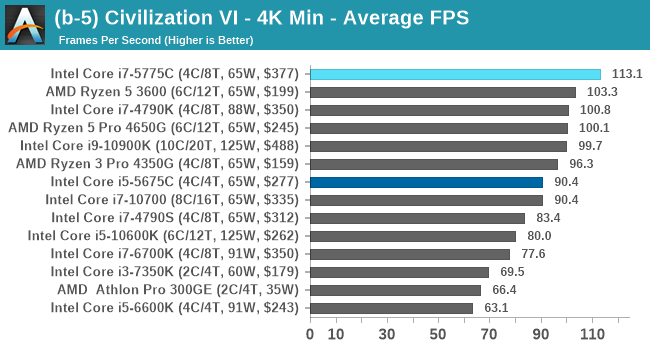

- 480p Low, 1440p Low, 4K Low, 1080p Max

For automation, Firaxis supports running the in-game automated benchmark from the command line, which outputs a results file with frame times. We do as many runs as possible within 10 minutes per resolution/setting combination, and then take averages and percentiles.
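As a rough illustration of the "averages and percentiles" step, here is a minimal Python sketch. It is not the script used for this review: the function name `summarize_frame_times`, the input being a plain list of frame times in milliseconds, and the nearest-rank percentile method are all assumptions, and the layout of the results file itself is not covered.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average FPS, 95th-percentile FPS) for frame times in milliseconds.

    Hypothetical helper: assumes the frame times have already been parsed
    out of the benchmark's results file into a list of positive numbers.
    """
    if not frame_times_ms:
        raise ValueError("no frame times supplied")

    count = len(frame_times_ms)

    # Average FPS is total frames divided by total elapsed time,
    # not the mean of the per-frame FPS values.
    average_fps = 1000.0 * count / sum(frame_times_ms)

    # Nearest-rank 95th percentile of frame time: the smallest value that
    # at least 95% of frames are at or below. Integer math avoids float
    # rounding in the rank calculation.
    ordered = sorted(frame_times_ms)
    rank = (95 * count + 99) // 100  # ceil(0.95 * count)
    p95_frame_time_ms = ordered[rank - 1]

    # Expressed as a frame rate, so a lower number means worse stutter.
    percentile_fps = 1000.0 / p95_frame_time_ms
    return average_fps, percentile_fps


if __name__ == "__main__":
    sample = [10.0] * 18 + [20.0, 40.0]
    avg, p95 = summarize_frame_times(sample)
    print(f"Average FPS: {avg:.1f}, 95th percentile FPS: {p95:.1f}")
```

Averaging over total time rather than per-frame FPS keeps a handful of very fast frames from inflating the result, and reporting the percentile as a frame rate keeps both figures in the same unit.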

| AnandTech | Low Res Low Qual | Medium Res Low Qual | High Res Low Qual | Medium Res Max Qual |
| --- | --- | --- | --- | --- |
| Average FPS |  |  |  |  |
| 95th Percentile |  |  |  |  |

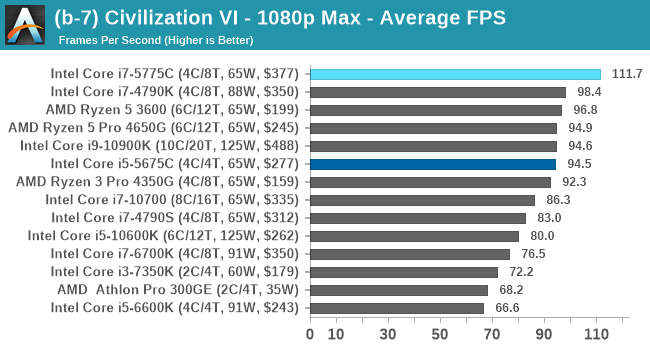

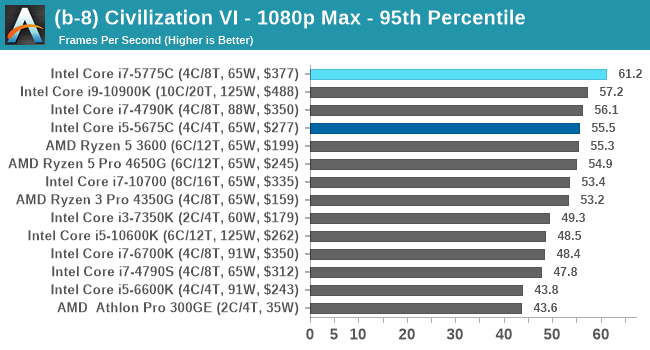

Civ 6 has always been a fan of fast CPU cores and low latency, so perhaps it isn't much of a surprise to see the Core i7 here beat out the latest processors. The Core i7 seems to generate a commanding lead, whereas those behind it seem to fall into a category around 94-96 FPS at 1080p Max settings.

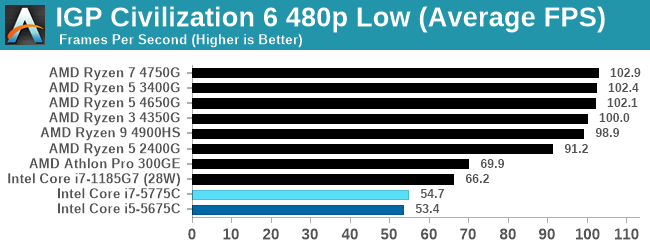

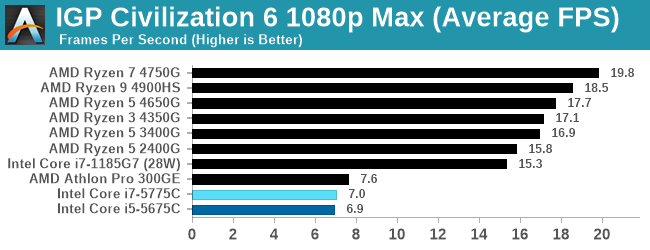

For our Integrated Tests, we run the first and last combination of settings.

When we use the integrated graphics, Broadwell isn't particularly playable here.

All of our benchmark results can also be found in our benchmark engine, Bench.

120 Comments


realbabilu - Monday, November 2, 2020 - link

That Larger cache maybe need specified optimized BLAS.

Kurosaki - Monday, November 2, 2020 - link

Did you mean BIAS?

ballsystemlord - Tuesday, November 3, 2020 - link

BLAS == Basic Linear Algebra System.

Kamen Rider Blade - Monday, November 2, 2020 - link

I think there is merit to having Off-Die L4 cache.

Imagine the low latency and high bandwidth you can get with shoving some stacks of HBM2 or DDR-5, whichever is more affordable and can better use the bandwidth over whatever link you're providing.

nandnandnand - Monday, November 2, 2020 - link

I'm assuming that Zen 4 will add at least 2-4 GB of L4 cache stacked on the I/O die.

ichaya - Monday, November 2, 2020 - link

Waiting for this to happen... have been since TR1.

nandnandnand - Monday, November 2, 2020 - link

Throw in an RDNA 3 chiplet (in Ryzen 6950X/6900X/whatever) for iGPU and machine learning, and things will get really interesting.

ichaya - Monday, November 2, 2020 - link

Yep.

dotjaz - Saturday, November 7, 2020 - link

That's definitely not happening. You are delusional if you think RDNA3 will appear as iGPU first.

At best we can hope the next I/O die to intergrate full VCN/DCN with a few RDNA2 CUs.

dotjaz - Saturday, November 7, 2020 - link

Also doubly delusional if think think RDNA3 is any good for ML. CDNA2 is designed for that.

Adding powerful iGPU to Ryzen 9 servers literally no purpose. Nobody will be satisfied with that tiny performance. Guaranteed recipe for instant failure.

The only iGPU that would make sense is a mini iGPU in I/O die for desktop/video decoding OR iGPU coupled with low end CPU for an complete entry level gaming SOC aka APU. Chiplet design almost makes no sense for APU as long as GloFo is in play.