Imagination Announces A-Series GPU Architecture: "Most Important Launch in 15 Years"

by Andrei Frumusanu on December 2, 2019 8:00 PM EST

New ISA & ALUs: An Extremely Wide Architecture

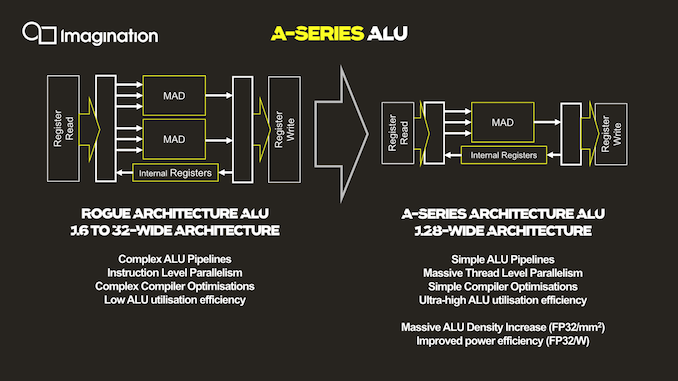

As mentioned, the ALU architecture as well as ISA of the new A-Series is fundamentally different to past Imagination GPUs, and in fact is very different from any other publicly disclosed design.

The key characteristic of the new ALU design is that it’s now significantly wider than what was employed on the Rogue and Furian architectures, going up to a width of 128 execution units per cluster.

For context, the Rogue architecture used 32 thread wide wavefronts, but a single SIMD was only 16 slots wide. As a result, Rogue required two cycles to completely execute a 32-wide wavefront. This was physically widened to 32-wide SIMDs in the 8XT Furian series, executing a 32-wide wavefront in a single cycle, and was again increased to 40-wide SIMDs in the 9XTP series.
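The cycle counts above follow from dividing the wavefront width by the physical SIMD width and rounding up. Here is a minimal Python sketch of that arithmetic, assuming one instruction is issued across the whole wavefront and ignoring latency and pipelining; the function name is illustrative and not taken from any Imagination documentation.

```python
import math

def cycles_per_wavefront(wavefront_width: int, simd_width: int) -> int:
    """Cycles needed to issue one instruction for every thread in a wavefront."""
    return math.ceil(wavefront_width / simd_width)

# Figures quoted in the text above.
rogue_cycles = cycles_per_wavefront(wavefront_width=32, simd_width=16)   # 2
furian_cycles = cycles_per_wavefront(wavefront_width=32, simd_width=32)  # 1
```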

In terms of competing architectures, NVIDIA’s desktop GPUs have been 32-wide for several generations now, while AMD more recently moved from a 4x16 ALU configuration with a 64-wide wavefront to native 32-wide SIMDs and waves (with the backwards compatibility option to cluster together two ALU clusters/CUs for a 64-wide wavefront).

More relevant to Imagination’s mobile market, Arm’s recent GPU releases have also increased the width of their SIMDs, with the data paths increasing from 4 units in the G72, to 2x4 units in the G76 (8-wide wave / warp), and finally to a bigger, more contemporary 16-wide design with matching wavefront in the upcoming Mali-G77.

Imagination’s new A-Series GPU therefore stands out significantly from the crowd in terms of its core ALU architecture, having the widest SIMD design that we know of.

All of that said, we're a bit surprised to see Imagination use such a wide design. The problem with very wide SIMD designs is that you have to bundle together a very large number of threads in order to keep all of the hardware's execution units busy. To solve this conundrum, a key design change of the A-Series is the vast simplification of the ISA and the ALUs themselves.
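The utilization problem described above can be put in numbers. The sketch below is a simplified model, assuming threads are packed into full-width waves and that any leftover lanes in the last wave sit idle; `lane_utilization` is a hypothetical helper, not part of any real API.

```python
import math

def lane_utilization(active_threads: int, simd_width: int) -> float:
    """Fraction of SIMD lanes doing useful work when threads fill whole waves."""
    if active_threads <= 0:
        return 0.0
    waves = math.ceil(active_threads / simd_width)
    return active_threads / (waves * simd_width)

# The same 40 threads on a 32-wide design versus a 128-wide design.
narrow = lane_utilization(40, 32)    # 40 of 64 lanes busy
wide = lane_utilization(40, 128)     # 40 of 128 lanes busy
```

With only 40 threads available, the 32-wide design keeps 62.5% of its lanes busy while the 128-wide design keeps 31.25% busy, which is why a wide design depends on bundling many threads together.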

Compared to the Rogue architecture as depicted in the slides, the new A-Series simplifies an execution unit from two Multiply-Add (MADD) units to only a single MADD unit. This change was actually effected in the Series-8 and Series-9 Furian architectures, however those designs still kept a secondary MUL unit alongside the MADD, which the A-Series now also does without.

The slide’s depiction of three arrows going into the MADD unit represents the three register sources for an operation: two for the multiply, and one for the addition. This is a change from the Furian architecture’s MADD unit ISA, which had one fewer multiply register source.
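As a rough illustration of the three-source operation and what it implies for peak throughput, here is a short Python sketch. It assumes the usual convention of counting one MADD as two floating-point operations and one MADD unit per lane, as described above; the function names are hypothetical.

```python
FLOPS_PER_MADD = 2  # assumption: one multiply plus one add counted separately

def madd(a, b, c):
    """Three-source multiply-add: two multiply operands and one addend."""
    return a * b + c

def peak_flops_per_clock(lanes: int, madd_units_per_lane: int) -> int:
    """Upper bound on floating-point operations per clock for one cluster."""
    return lanes * madd_units_per_lane * FLOPS_PER_MADD

# A 128-wide cluster with a single MADD unit per lane.
a_series_cluster = peak_flops_per_clock(lanes=128, madd_units_per_lane=1)
```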

In essence, Imagination has doubled down on the transition from an Instruction Level Parallelism (ILP) oriented design to maximizing Thread Level Parallelism (TLP). In this respect it's quite similar to what AMD did with their GCN architecture early this decade, where they went from an ILP-heavy design to an architecture almost entirely bound by TLP.

The shift to “massive” TLP along with the much higher ALU utilization due to the simplified instructions is said to have enormously improved the density of the individual ALUs, with “massive” increases in performance/mm². Naturally, reduced area as well as elimination of redundant transistors also brings with it an increase in power efficiency.

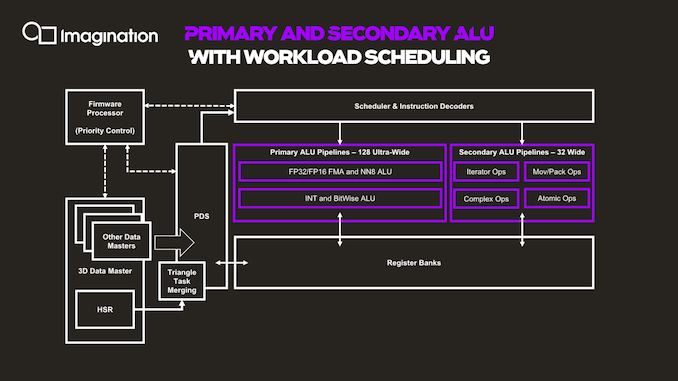

The next graphic describes the data and execution flow in the shader processor.

Things start off with a data master which kicks off work based on command queues in memory. The 3D data master here also handles other fixed-function pre-processing, which will trigger execution of per-tile hidden surface removal and workload generation for the shader programs. The GPU here has a notion of triangle merging, which groups triangles together into tasks in order to get better utilization of the ALUs and to be able to fill the 128 slots of the wavefront.
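To see why merging triangles into tasks helps fill a 128-slot wavefront, consider the following simplified Python model. It is only a sketch under stated assumptions: the actual merging logic is not disclosed, and the model assumes fragments from different triangles can share a wave freely (for example because they run the same shader). All names here are hypothetical.

```python
WAVE_SLOTS = 128  # slots per wavefront, as quoted in the text

def ceil_div(n: int, d: int) -> int:
    return -(-n // d)

def waves_without_merging(fragment_counts):
    """Each triangle occupies its own wave(s); leftover slots stay empty."""
    return sum(ceil_div(n, WAVE_SLOTS) for n in fragment_counts if n > 0)

def waves_with_merging(fragment_counts):
    """Fragments from all triangles are pooled before being cut into waves."""
    total = sum(n for n in fragment_counts if n > 0)
    return ceil_div(total, WAVE_SLOTS)

def slot_utilization(fragment_counts, waves: int) -> float:
    total = sum(n for n in fragment_counts if n > 0)
    return total / (waves * WAVE_SLOTS) if waves else 0.0

# Four small triangles covering 10, 50, 30 and 38 fragments.
triangles = [10, 50, 30, 38]
unmerged = waves_without_merging(triangles)   # one wave per triangle
merged = waves_with_merging(triangles)        # all 128 fragments in one wave
```

In this example the unmerged case launches four waves at 25% slot utilization, while the merged case fills a single wave completely.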

The PDS (Programmable Data Sequencer) is a resource allocator and manager. It reserves register space for workloads and manages tasks as they’re being allocated to thread slots. The PDS is able to prefetch/preload data to local memory for upcoming threads. Once a thread’s data is available, it becomes an active slot and is dispatched and decoded to the execution units by the instruction scheduler and decoder.

Besides the primary ALU pipeline we described earlier, there’s a secondary ALU as well. First off, a clarification on the primary ALUs is that we also find a separate execution unit for integer and bitwise operations. These units, while separate in their execution, do share the same data paths with the floating-point units, so it’s only ever possible to use one or the other. These integer units are what enable the A-Series to have high AI compute capabilities, having quad-rate INT8 throughput. In a sense, this is very similar to Arm’s NN abilities on the G76 and G77 for integer dot-product instructions, although Imagination doesn’t go into much detail on what exactly is possible.

The secondary pipeline runs at quarter rate speed, thus executing 32 threads per cycle in parallel. Here we find the more complex instructions which are more optimally executed on dedicated units, such as transcendentals, varying operations and iterators, data conversions, data moving ops as well as atomic operations.
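The quarter-rate figure can be turned into a rough issue-cycle estimate for a mixed instruction stream. The sketch below is a deliberately pessimistic serial model, assuming the two pipelines never overlap and ignoring latency; the real scheduler's behavior is not described in the source, and the names are illustrative.

```python
PRIMARY_WIDTH = 128   # threads per cycle on the primary ALU pipeline
SECONDARY_RATE = 4    # secondary pipeline runs at quarter rate

def ceil_div(n: int, d: int) -> int:
    return -(-n // d)

def secondary_width(primary_width: int = PRIMARY_WIDTH,
                    rate: int = SECONDARY_RATE) -> int:
    """Threads the secondary pipeline handles per cycle."""
    return primary_width // rate

def issue_cycles(primary_ops: int, secondary_ops: int,
                 wave_width: int = 128) -> int:
    """Serial estimate of issue cycles for one wave (no overlap assumed)."""
    primary_cycles = primary_ops * ceil_div(wave_width, PRIMARY_WIDTH)
    secondary_cycles = secondary_ops * ceil_div(wave_width, secondary_width())
    return primary_cycles + secondary_cycles

# A shader with 10 simple ALU ops and 2 transcendentals per thread.
estimate = issue_cycles(primary_ops=10, secondary_ops=2)
```

Under these assumptions each secondary instruction costs four cycles for a full 128-wide wave, so the two transcendentals account for 8 of the 18 estimated cycles.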

143 Comments

extide - Monday, December 2, 2019 - link

Not exactly an announcement I was expecting to see, but quite interesting. A bit ballsy, but that's pretty much what they need right now. We'll see how it shapes up in actual products...

Kishoreshack - Monday, December 2, 2019 - link

This if's & but's in the industry is soo irritating

if it releases on time it will compete well

according to its release schedule we might be at the ending cycle of Mali G77 & introduction of G78

So the performance gains are almost negligible

Pessimist9 - Wednesday, December 4, 2019 - link

But they said it! Quick! Everyone to their brokers STAT!

Kishoreshack - Monday, December 2, 2019 - link

Companies overpromising & under delivering is norm

COUGH *INTEL*

Seriously until the GPU hits the market

I'm seriously not interested in speculation

I'm over speculation need result's to even be excited about this

Until it is implemented in real world

the idea remains a big cloud of doubt

Cause many companies fail to stick to their release schedule

eek2121 - Tuesday, December 3, 2019 - link

Intel has real GPU products currently, they are just integrated with Intel CPUs. I definitely wouldn't say Intel is 'behind schedule' with Xe either. If you read between the lines, they are shipping 7nm products in 2021, and one of these products is a high performance GPU. As a matter of fact, despite what Intel would have you believe, I suspect that they'll keep their 10nm launches to a minimum and jump straight to 7nm. It also would not surprise me if there is a shake-up in Intel's future that causes their fab business to get spun off into it's own entity to ensure that stuff like this doesn't happen again. That last part is pure speculation though.

I'd be rather curious to see how an Intel/AMD/Nvidia GPU stacks up against the Imagination one.

Also note that apparently they aren't shipping open source drivers, which right away is going to create issues for them. NVIDIA is one of the few companies that chose this route, and thus far it's caused nothing but problems for both NVIDIA and the community at large.

Korguz - Tuesday, December 3, 2019 - link

" they are shipping 7nm products in 2021, " yea right,, ill believe that, when it actually happens.." causes their fab business to get spun off into it's own entity" i doubt that would happen. 10nm is VERY late, because they tried to do to much at once, or there is another reason, who knows.. but to spin it off??? not likely

regsEx - Tuesday, December 3, 2019 - link

10 nm is DUV process of highest ever density. It's denser than TSMC 7FF DUV. 7 nm, which is generation of TSMC N3, is EUV. It's easier.

TheinsanegamerN - Tuesday, December 3, 2019 - link

That's why they are now 4 years behind schedule, right?

mode_13h - Wednesday, December 4, 2019 - link

Exactly.

I wonder which hurt Intel more: their over-ambitious process engineers or their greedy management. Either way, while they were sputtering and cavitating, the competition blew right by them.

Their once-unassailable lead was outmatched by their own hubris.

Santoval - Tuesday, December 3, 2019 - link

Intel's 7nm node is apparently going to be slightly denser than TSMC's 5nm node (N5), *not* their 3nm node (N3). I have read about numbers in the range of ~185 million transistors (MTr) per mm^2 for TSMC's N5 and ~200 MTr/mm^2 for Intel's 7nm node. TSMC's N3, in turn, will be 255+ MTr/mm^2. Beside a quite higher density TSMC will switch to GAA-FETs at 3nm, so there can be no direct comparison anyway. In any case Intel will need to deliver 7nm first, and I strongly doubt they will manage to completely master EUV manufacturing of multiple layers by 2021.

By the way, Intel's 10nm is not denser than TSMC's N7 DUV. Intel has developed three 10nm variants, for low, mid and high density. Only the high density (and highest performance) 10nm variant is slightly denser than TSMC's N7 DUV and even that was already outclassed in density by TSMC's N7 EUV (7nm+).

To my knowledge Intel employs the mid density 10nm variant for the mobile Ice Lake parts they just released, so they haven't released and aren't about to release any 10nm SoC, CPU or GPU that is denser than even TSMC's vanilla (DUV) N7. Their sole high density (100+ MTr/mm^2) 10nm parts are probably their newest Agilex FPGAs.