Reaching for Turbo: Aligning Perception with AMD’s Frequency Metrics

by Dr. Ian Cutress on September 17, 2019 10:00 AM EST

Defining Turbo, Intel Style

Since 2008, mainstream multi-core x86 processors have come to the market with this notion of ‘turbo’. Turbo allows the processor, where plausible and depending on the design rules, to increase its frequency beyond the number listed on the box. There are tradeoffs: Turbo may only work for a limited number of cores, and it comes with increased power consumption and decreased efficiency. Ultimately, though, the original goal of Turbo is to offer increased throughput within specifications, and only for limited time periods. With Turbo, users could extract more performance within the physical limits of the silicon as sold.

In the beginning, Turbo was basic. When an operating system requested peak performance from a processor, it would increase the frequency and voltage along a curve within the processor power, current, and thermal limits, or until it hit some other limitation, such as a predefined Turbo frequency look-up table. As Turbo has become more sophisticated, other elements of the design come into play: sustained power, peak power, core count, loaded core count, instruction set, and a system designer’s ability to allow for increased power draw. One laudable goal here was to allow component manufacturers the ability to differentiate their product with better power delivery and tweaked firmwares to give higher performance.

For the last 10 years, we have lived with Intel’s definition of Turbo (or Turbo Boost 2.0, technically) as the de facto understanding of what Turbo means. Under this scheme, a processor has a sustained power level, a peak power level, and a power budget. Assuming budget is available, the processor will go to a Turbo frequency based on what instructions are being run and how many cores are active. That Turbo frequency is governed by a Turbo table.

The Turbo We All Understand: Intel Turbo

So, for example, I have a hypothetical processor that has a sustained power level (PL1) of 100W. The peak power level (PL2) is 150W*. The budget for this turbo (Tau) is 20 seconds, or the equivalent of 1000 joules of energy (20*(150-100)), which is replenished at a rate of 50 joules per second. This quad core CPU has a base frequency of 3.0 GHz, but offers a single core turbo of 4.0 GHz, and a 2-core to 4-core turbo of 3.5 GHz.

So tabulated, our hypothetical processor gets these values:

| Parameter | Symbol / Formula | Value |
|---|---|---|
| Sustained Power Level | PL1 / TDP | 100 W |
| Peak Power Level | PL2 | 150 W |
| Turbo Window* | Tau | 20 s |
| Total Power Budget* | (150-100) * 20 | 1000 J |

*Turbo Window (and Total Power Budget) is typically defined for a given workload complexity, where 100% is a total power virus. Normally this value is around 95%.

*Intel provides ‘suggested’ PL2 values and ‘suggested’ Tau values to motherboard manufacturers. But ultimately these can be changed by the manufacturers – Intel allows their partners to adjust these values without breaking warranty. Intel believes that its manufacturing partners can differentiate their systems with power delivery and other features to allow a fully configurable value of PL2 and Tau. Intel sometimes works with its partners to find the best values. But the take away message about PL2 and Tau is that they are system dependent. You can read more about this in our interview with Intel’s Guy Therien.
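The budget arithmetic for the hypothetical processor can be written out as a short Python sketch. The function name `turbo_budget_joules` is made up for illustration, and the formula is the simplified one used in this article, not Intel's real accounting.

```python
def turbo_budget_joules(pl1_w: float, pl2_w: float, tau_s: float) -> float:
    """Simplified turbo budget: headroom above PL1, sustained for Tau seconds."""
    return (pl2_w - pl1_w) * tau_s


# Values of the hypothetical processor from the table above.
budget = turbo_budget_joules(pl1_w=100.0, pl2_w=150.0, tau_s=20.0)
print(budget)  # 1000.0
```

Because PL2 and Tau are system dependent, the same chip in a board with different settings would end up with a different budget.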

Now please note that a workload, even a single thread workload, can be ‘light’ or it can be ‘heavy’. If I created a piece of software that was a never-ending while(true) loop with no operations, then the workload would be ‘light’ on the core and not stressing all the parts of the core. A heavy workload might involve trigonometric functions, or some level of instruction-level parallelism that causes more of the core to run at the same time. A ‘heavy’ workload therefore draws more power, even though it is still contained within a single thread.

If I run a light workload that requires a single thread, it will start the processor at 4.0 GHz. If the power of that single thread is below 100W, then I will use none of my budget, as it is refilled immediately. If I then switch to a heavy workload, and the core now consumes 110W, then my 1000 joules of turbo budget would decrease by 10 joules every second. In effect, I would get 100 seconds of turbo on this workload, and when the budget is depleted, the sustained power level (PL1) would kick in and reduce the frequency to ensure that the consumption on the chip stayed at 100W. My budget of energy for turbo would not increase, because the 100 joules/second that is being added is immediately taken away by the heavy workload. The reduced frequency may not be the 3.0 GHz base frequency – it depends on the voltage/power characteristics of the individual chip. That 3.0 GHz base value is the value that Intel guarantees on its hardware – so every unit of this hypothetical processor will run at a minimum of 3.0 GHz at 100W on a sustained workload.
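As a minimal sketch of that depletion arithmetic, the time spent in turbo is just the budget divided by how far the draw sits above PL1. The helper name `seconds_of_turbo` is hypothetical, and this follows the simplified linear model of this article only.

```python
from typing import Optional


def seconds_of_turbo(budget_j: float, pl1_w: float, draw_w: float) -> Optional[float]:
    """Seconds until the turbo budget is empty, or None if it never drains."""
    drain_w = draw_w - pl1_w  # joules removed from the budget each second
    if drain_w <= 0:
        return None  # at or below PL1 the budget is refilled as fast as it is used
    return budget_j / drain_w


print(seconds_of_turbo(1000.0, 100.0, 110.0))  # 100.0
print(seconds_of_turbo(1000.0, 100.0, 150.0))  # 20.0
print(seconds_of_turbo(1000.0, 100.0, 90.0))   # None
```

The 150W case reproduces the 20 second turbo window: drawing the full PL2 empties the budget in exactly Tau.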

To clarify, Intel does not guarantee any turbo speed that is part of the specification sheet.

Now with a multithreaded workload, the same thing occurs, but you are more likely to hit the peak power level (PL2) of 150W, in which case the 1000 joules of budget will disappear in the 20 seconds listed in the firmware. If the chip, with a 4-core heavy workload, hits the 150W value, the frequency will be decreased to maintain 150W – so as a result we may end up with less than the ‘3.5 GHz’ four-core turbo that was listed on the box, despite being in turbo.

So when a workload is what we call ‘bursty’, with periods of heavy and light work, the turbo budget may be refilled during the light periods quicker than it is used, allowing for more turbo when the workload gets heavy again. This matters when benchmarking software one run after another – the first run will always have the full turbo budget, but if subsequent runs do not allow the budget to refill, they may get less turbo.
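A bursty workload can be sketched as a second-by-second simulation. The function `simulate_budget` is hypothetical, and the refill accounting is an assumption taken from the 110W example: each second PL1 joules are credited and the actual draw is debited, with the budget capped at its full size. Real hardware does not use this linear model.

```python
def simulate_budget(draws_w, pl1_w=100.0, pl2_w=150.0, tau_s=20.0):
    """Return the remaining turbo budget (J) after each one-second step."""
    capacity = (pl2_w - pl1_w) * tau_s
    budget = capacity
    history = []
    for draw in draws_w:
        draw = min(draw, pl2_w)           # the chip is never allowed above PL2
        if budget <= 0 and draw > pl1_w:
            draw = pl1_w                  # budget empty: throttle down to PL1
        budget += pl1_w - draw            # credit PL1, debit the actual draw
        budget = max(0.0, min(capacity, budget))
        history.append(budget)
    return history


# 10 s of heavy work at 150 W, then 5 s of light work at 60 W.
history = simulate_budget([150.0] * 10 + [60.0] * 5)
print(history[9])   # 500.0 J left after the heavy burst
print(history[-1])  # 700.0 J after the light period refills part of the budget
```

Running benchmarks back to back corresponds to feeding in a second heavy burst before `history` has climbed back to the full 1000 J.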

As stated, that turbo power level (PL2) and power budget time (Tau) are configurable by the motherboard manufacturer. We see that on enterprise motherboards, companies often stick to Intel’s recommended settings, but with consumer overclocking motherboards, the turbo power might be 2x-5x higher, and the power budget time might be essentially infinite, allowing for turbo to remain. The manufacturer can do this if they can guarantee that the power delivery to the processor, and the thermal solution, are suitable.

(It should be noted that Intel actually uses a weighted algorithm for its budget calculations, rather than the simplistic view I’ve given here. That means that the data from 2 seconds ago is weighted more than the data from 10 seconds ago when determining how much power budget is left. However, when the power budget time is essentially infinite, as most consumer motherboards are set today, it doesn’t particularly matter either way given that the CPUs will turbo all the time.)
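One common way to weight recent samples more heavily than old ones is an exponentially weighted moving average. The sketch below uses that technique purely as an illustration. Whether it matches Intel's exact algorithm is an assumption, and the function name `ewma_power` and the one-second sampling interval are invented for the example.

```python
import math


def ewma_power(samples_w, tau_s, dt_s=1.0, initial_w=0.0):
    """Exponentially weighted moving average of power samples.

    Samples taken roughly tau_s ago carry about 1/e of the weight of the
    newest sample, so recent power draw dominates the average.
    """
    alpha = 1.0 - math.exp(-dt_s / tau_s)
    avg = initial_w
    averages = []
    for power in samples_w:
        avg += alpha * (power - avg)
        averages.append(avg)
    return averages


# The same 150 W spike counts for more when it happened recently.
recent_spike = ewma_power([0.0] * 9 + [150.0], tau_s=20.0)[-1]
old_spike = ewma_power([150.0] + [0.0] * 9, tau_s=20.0)[-1]
print(recent_spike > old_spike)  # True
```

Under such a scheme, turbo would be permitted while the running average stays below PL1, which gives a behavior similar to the simple budget but without a hard cut-off.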

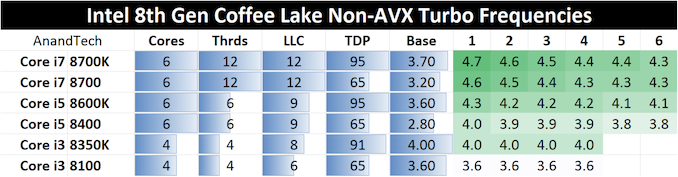

Ultimately, Intel uses what are called ‘Turbo Tables’ to govern the peak frequency for any given number of cores that are loaded. These tables assume that the processor is under the PL2 value, and there is turbo budget available. For example, here are Intel’s turbo tables for Intel’s 8th Generation Coffee Lake desktop CPUs.

So Intel provides the sustained power level (PL1, or TDP), the Base frequency (3.70 GHz for the Core i7-8700K), and a range of turbo frequencies based on the core loading, assuming the PL2 set by the motherboard manufacturer isn’t hit and power budget is available.
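A turbo table is essentially a look-up keyed on the number of loaded cores. The sketch below uses the hypothetical quad-core processor from earlier in this page (4.0 GHz for one core, 3.5 GHz for two to four cores) rather than real Coffee Lake values, and the names are illustrative only.

```python
# Turbo table of the hypothetical quad-core chip: loaded cores -> peak GHz.
HYPOTHETICAL_TURBO_TABLE_GHZ = {1: 4.0, 2: 3.5, 3: 3.5, 4: 3.5}


def turbo_ceiling_ghz(loaded_cores: int) -> float:
    """Peak frequency for a given core loading.

    This is only a ceiling: it assumes the chip is under PL2 and still has
    turbo budget available. The real frequency can be lower.
    """
    if loaded_cores not in HYPOTHETICAL_TURBO_TABLE_GHZ:
        raise ValueError(f"unsupported core count: {loaded_cores}")
    return HYPOTHETICAL_TURBO_TABLE_GHZ[loaded_cores]


print(turbo_ceiling_ghz(1))  # 4.0
print(turbo_ceiling_ghz(4))  # 3.5
```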

The Effect of Intel’s Turbo Regime, and Intel’s Binning

At the time, Intel did a good job in conveying its turbo strategy to the press. It helped that staying on quad-core processors for several generations meant that the actual turbo power consumption of these quad-core chips was lower than the sustained power value, and so we had a false sense of security that turbo could go on forever. With the benefit of hindsight, the nuances relating to turbo power limits and power budgets were obfuscated, and people ultimately didn’t care on the desktop – all the turbo for all the time was an easy concept to understand.

One other key metric that perhaps went under the radar is how Intel was able to apply its turbo frequencies to the CPU.

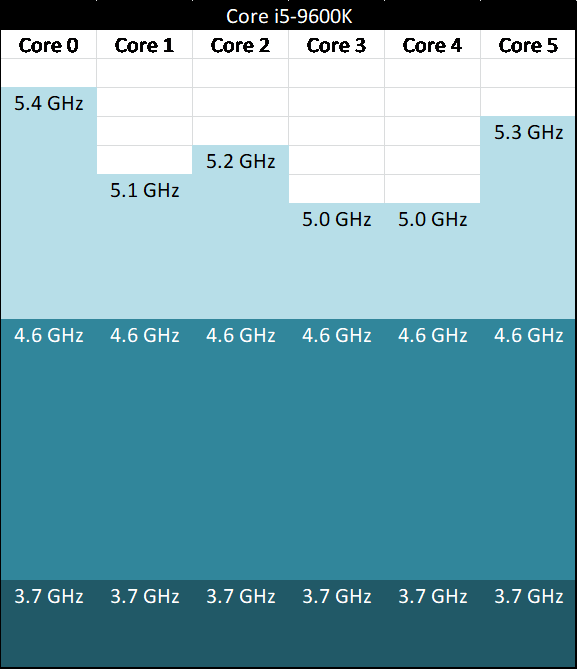

For any given CPU, any core within that design could hit the top turbo. It allowed for threads to be loaded onto whatever core was necessary, without the need to micromanage the best thread positioning for the best performance. If Intel stated that the single core turbo frequency was 4.6 GHz, then any core could go up to 4.6 GHz, even if each individual core could go beyond that.

For example, here’s a theoretical six-core Core i5-9600K, with a 3.7 GHz base frequency, and a 4.6 GHz turbo frequency. The higher numbers represent theoretical maximums of each core at the turbo voltage.
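The idea that every core is held to the same rated turbo, whatever its own limit, can be sketched in a few lines. The per-core maximums below are invented for illustration and are not the figures from the article's illustration. Only the 4.6 GHz rated turbo comes from the text.

```python
RATED_TURBO_GHZ = 4.6

# Hypothetical maximum frequency of each of the six cores at the turbo voltage.
core_limits_ghz = [4.7, 4.9, 4.6, 4.8, 5.0, 4.7]


def qualifies_for_bin(limits_ghz, rated_ghz):
    """A chip fits the bin only if every core can reach the rated turbo."""
    return all(limit >= rated_ghz for limit in limits_ghz)


def advertised_turbo(limits_ghz, rated_ghz):
    """Each core is capped at the rated turbo, even if it could go higher."""
    return [min(limit, rated_ghz) for limit in limits_ghz]


print(qualifies_for_bin(core_limits_ghz, RATED_TURBO_GHZ))  # True
print(advertised_turbo(core_limits_ghz, RATED_TURBO_GHZ))
```

With every core capped to the same value, a scheduler can place a thread on any core without affecting the peak frequency it sees.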

This is actually a strategy related to how Intel segments its CPUs after manufacturing, a process called binning. If a processor has the right power/thermal characteristics to reach a given frequency within a given power, then it could be labelled as the most appropriate CPU for retail and sold as such. Because Intel aimed for a homogeneous monolithic design, every core in the design was tested such that it performed equally (or almost equally) with every other core. Invariably some cores will perform better than others, if tweaked to the limits, but under Intel’s regime, it helped Intel to spread the workloads around so as not to create thermal hotspots on the processor, and also level out any wear and tear that might be caused over the lifetime of the product. It also meant that in a hypervisor, every virtual machine could experience the same peak frequencies, regardless of the cores they used.

With binning, Intel (or any other company) is selecting a set of voltages and frequencies at which a processor is guaranteed to operate. From manufacturing data, Intel (or others) can see the predicted lifespan of a given processor for a range of frequencies and voltages, and whether that prediction hits the right mark (based on internal requirements) determines which CPU a silicon chip ends up as. For example, if a piece of silicon does hit 9900K voltages and frequencies, but the lifespan rating of that piece of silicon is only two years, Intel might knock it down to a 9700K, which gives a predicted lifespan of fifteen years. It’s that sort of thing that determines how high a chip can perform. Obviously chips that can achieve high targets can also be reclassified as slower parts based on inventory levels or demand.

This is how the general public, the enthusiasts, and even the journalists and reviewers covering the market, have viewed Turbo for a long time. It’s a well-known part of the desktop space and to a large extent is easy to understand. If someone said ‘Turbo’ frequency, everyone was agreed on the same basic principles and no explanation was needed. We all assumed that when Turbo was mentioned, this is what they meant, and this is what it would mean for eternity.

Now enter AMD, March 2017, with its new Zen core microarchitecture. Everyone assumed Turbo would work in exactly the same way. It does not.

144 Comments

Dragonstongue - Tuesday, September 17, 2019 - link

ty so much for the pro article and summing in question

not always do I come here and "want" to continue reading over...I keep to myself.

thankfully this was not such an article

o7

Iger - Thursday, September 19, 2019 - link

+1

This mirrors my thoughts and feelings exactly.

mikato - Monday, September 23, 2019 - link

I completely agree. I had seen some of this with Hardware Unboxed, Gamers Nexus, Der8auer, Reddit. This was a great summary with solid explanation behind it, a more helpful way to learn about the whole issue.

Now for the next issue - Hey, Ian is a doctor now, thinks he's better than all of us. Discuss... :)

(yes I knew he had a doctorate already)

azfacea - Tuesday, September 17, 2019 - link

death to intel LUL

Phynaz - Wednesday, September 18, 2019 - link

Drugs are bad for you, seek treatment.

Smell This - Wednesday, September 18, 2019 - link

It sure is interesting that the **Chipzillah Propaganda Machine** has entered high gear/over-drive over the last several weeks after reports that the "Intel Apollo Lake CPUs May Die Sooner Than Expected" ...

https://www.tomshardware.com/news/intel-apollo-lak...

Funny that, huh?

eastcoast_pete - Tuesday, September 17, 2019 - link

Thanks Ian, helpful article with good explanations!

Question: The "binning by expected lifespan" caught my eye. Could you do another nice backgrounder on how overclocking affects lifespan? I believe many out there believe that there is such a thing as a free lunch. So, how fast does a CPU (or GPU) degrade if it gets pushed (overclocked and overvolted) to the still-usable limit. Maybe Ryan can chime in on the GPU aspect, especially the many "factory overclocked" cards. Thanks!

Ian Cutress - Tuesday, September 17, 2019 - link

I've been speaking to people about this to see if we can get a better understanding about manufacturing as it relates to expected product lifetimes and such. Overclocking would obviously be an extension to that. If something happens and we get some info, I'll write it up.

igavus - Tuesday, September 17, 2019 - link

Aside from overclocking, it'd be interesting to know if the expected lifetime is optimized with warranty times and if what we're seeing is a step forward on the planned obsolescence path.

It's sort of more important now than ever, because with 8 core being the new norm soon - we'll probably see even longer refresh cycles as workloads catch up to saturate the extra performance available. And limiting product lifetime would help curb those longer than profitable refresh cycles.

FunBunny2 - Tuesday, September 17, 2019 - link

"if what we're seeing is a step forward on the planned obsolescence path."

you really, really should get this 57 Plymouth, cause your 56 Dodge has teeny tail fins.