The NVIDIA GeForce RTX 2080 Super Review: Memories of the Future

by Ryan Smith on July 23, 2019 9:00 AM EST

Posted in: GPUs, GeForce, NVIDIA, Turing, GeForce RTX

Power, Temperatures, & Noise

Last, but not least of course, is our look at power, temperatures, and noise levels. While a high performing card is good in its own right, an excellent card can deliver great performance while also keeping power consumption and the resulting noise levels in check.

GeForce Video Card Voltages

| RTX 2080S Boost | RTX 2080S Idle | RTX 2080 Boost | RTX 2070S Boost |
|---|---|---|---|
| 1.05v | 0.65v | 1.05v | 1.043v |

Overall, the voltages being used for the RTX 2080 Super are not any different than NVIDIA’s other TU104 cards – or any of their other Turing cards, for that matter. At its highest clockspeeds the card runs at 1.05v, quickly stepping down to below 1v at lower clockspeeds. The 0.65v idle voltage is among the lowest we’ve ever recorded for an NVIDIA card, however.

GeForce Video Card Average Clockspeeds

| Game | RTX 2080S | RTX 2080 Ti | RTX 2080 | RTX 2070S |
|---|---|---|---|---|
| Max Boost Clock | 1965MHz | 1950MHz | 1900MHz | 1950MHz |
| Boost Clock | 1815MHz | 1545MHz | 1710MHz | 1770MHz |
| Tomb Raider | 1937MHz | 1725MHz | 1785MHz | 1875MHz |
| F1 2019 | 1920MHz | 1725MHz | 1785MHz | 1875MHz |
| Assassin's Creed | 1920MHz | 1800MHz | 1815MHz | 1890MHz |
| Metro Exodus | 1937MHz | 1755MHz | 1785MHz | 1875MHz |
| Strange Brigade | 1920MHz | 1695MHz | 1770MHz | 1875MHz |
| Total War: TK | 1937MHz | 1740MHz | 1785MHz | 1875MHz |
| The Division 2 | 1937MHz | 1635MHz | 1740MHz | 1845MHz |
| Grand Theft Auto V | 1937MHz | 1815MHz | 1815MHz | 1890MHz |
| Forza Horizon 4 | 1937MHz | 1815MHz | 1800MHz | 1890MHz |

Looking at clockspeeds, we can piece together a couple of interesting things. NVIDIA hasn’t actually changed the card’s maximum clockspeed all that much: our RTX 2080 topped out at 1900MHz, and the RTX 2080 Super is only a bit higher at 1965MHz. That they’re doing it without more voltage is a bit more interesting, as it suggests that chip quality may have improved a bit over the past year, but it’s not too surprising.

What is more surprising, however, are the average clockspeeds we recorded for the RTX 2080 Super. In short, the card spends a lot of time at or near its top turbo bins. With temperature compensation active, our RTX 2080 Super tops out at 1937MHz, a clockspeed that it holds in over half of our games, even at 4K. Quite frankly the RTX 2080 Super is almost a boring card in this respect (in a good way); there’s just not much in the way of power throttling going on here. If anything, the hard part is getting the card above 90-95% power usage.

This, ultimately, is why the RTX 2080 Super is as fast as it is versus the vanilla RTX 2080. The extra SMs help, but it’s the extra 100-150MHz on the GPU clockspeed that’s really driving the card.
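As a rough check on that clockspeed gap, the per-game averages from the clockspeed table can be compared directly. The short Python sketch below is only an illustration: the names (`AVG_CLOCKS`, `clock_deltas`, `games_at_top_bin`) are our own, and the figures are simply transcribed from the table above.

```python
from statistics import mean

# (game, RTX 2080 Super average MHz, RTX 2080 average MHz),
# transcribed from the average clockspeeds table above.
AVG_CLOCKS = [
    ("Tomb Raider", 1937, 1785),
    ("F1 2019", 1920, 1785),
    ("Assassin's Creed", 1920, 1815),
    ("Metro Exodus", 1937, 1785),
    ("Strange Brigade", 1920, 1770),
    ("Total War: TK", 1937, 1785),
    ("The Division 2", 1937, 1740),
    ("Grand Theft Auto V", 1937, 1815),
    ("Forza Horizon 4", 1937, 1800),
]

# Highest sustained clock we saw on the RTX 2080 Super with
# temperature compensation active.
TOP_SUSTAINED_MHZ = 1937


def clock_deltas(rows):
    """Return the RTX 2080 Super's clockspeed advantage (MHz) per game."""
    return {game: super_mhz - vanilla_mhz for game, super_mhz, vanilla_mhz in rows}


def games_at_top_bin(rows, top_mhz=TOP_SUSTAINED_MHZ):
    """Return the games where the RTX 2080 Super averaged its top sustained clock."""
    return [game for game, super_mhz, _ in rows if super_mhz == top_mhz]


deltas = clock_deltas(AVG_CLOCKS)
at_top = games_at_top_bin(AVG_CLOCKS)

print(f"Smallest gap: {min(deltas.values())}MHz")
print(f"Largest gap:  {max(deltas.values())}MHz")
print(f"Average gap:  {mean(deltas.values()):.1f}MHz")
print(f"Games at {TOP_SUSTAINED_MHZ}MHz: {len(at_top)} of {len(AVG_CLOCKS)}")
```

On our numbers the per-game gap runs from 105MHz to 197MHz and averages roughly 145MHz, with The Division 2 as the outlier at the high end, and six of the nine games sit at the 1937MHz ceiling.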

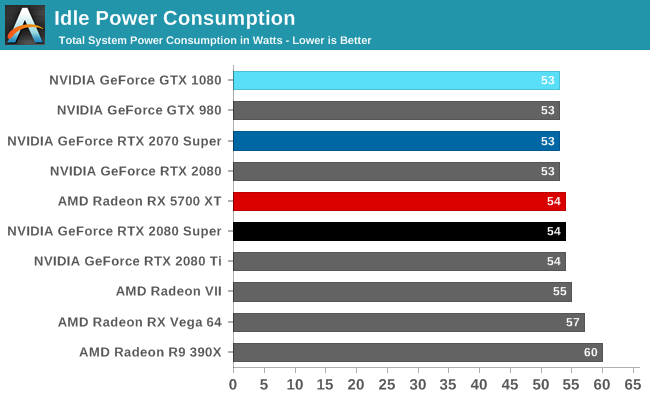

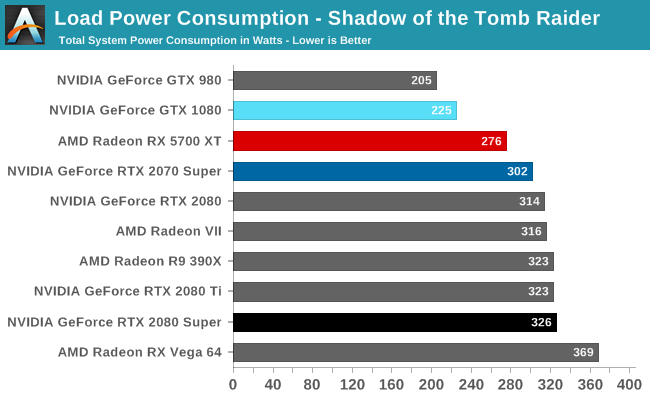

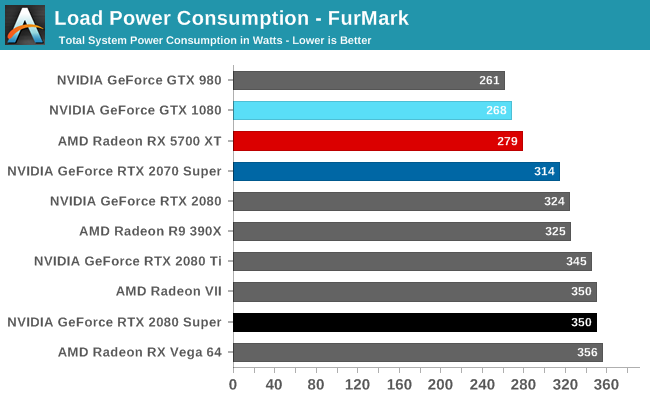

Getting to power consumption itself then, idle is effectively unchanged, exactly as we’d expect. Load power, on the other hand, is paying the price for those 1900MHz+ clockspeeds. Under both FurMark and Tomb Raider, our RTX 2080 Super-equipped system is drawing almost the same amount of power as the RTX 2080 Ti system, with a difference of just a few watts. That performance doesn’t come for free. NVIDIA’s overall power efficiency is still quite good here (the Radeon VII won’t be touching it, for example), but it’s clearly regressed a bit versus the RTX 2080 Ti and vanilla RTX 2080.

It is worth noting, however, that the card was often clockspeed-limited rather than power-limited. So while Tomb Raider was specifically picked to be a punishing game, a task it delivered on here, I fully expect that the RTX 2080 Super is drawing a bit less than the RTX 2080 Ti in around half of our other games.

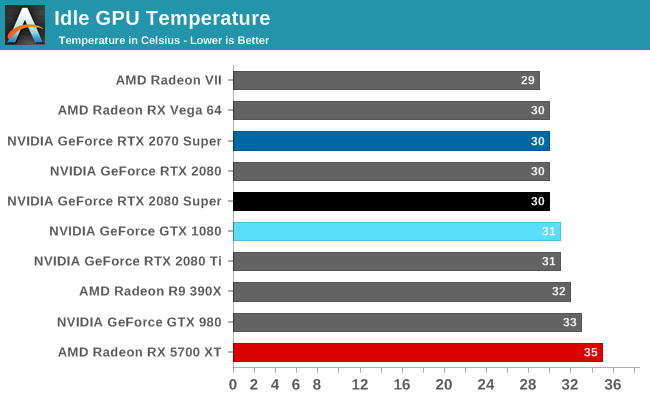

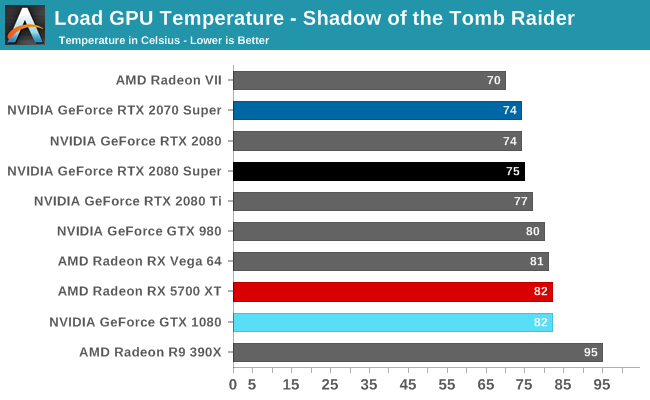

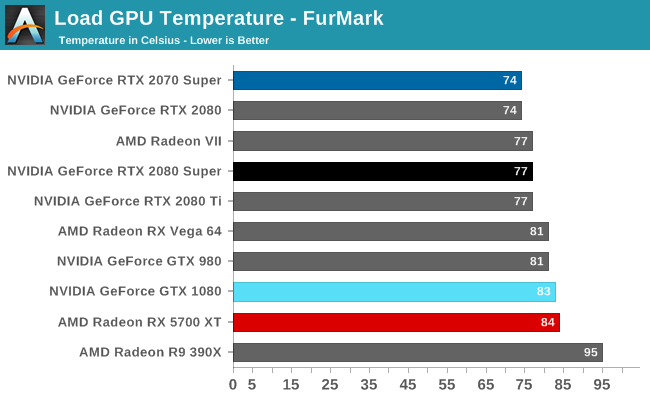

With higher power consumption and the same cooler come higher temperatures. Even FurMark’s 77C is still several degrees below the card’s 84C thermal throttle point, but the increase is a very straightforward consequence of the higher power consumption.

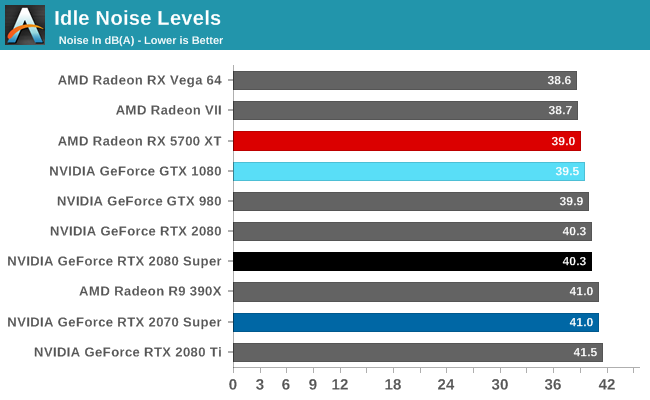

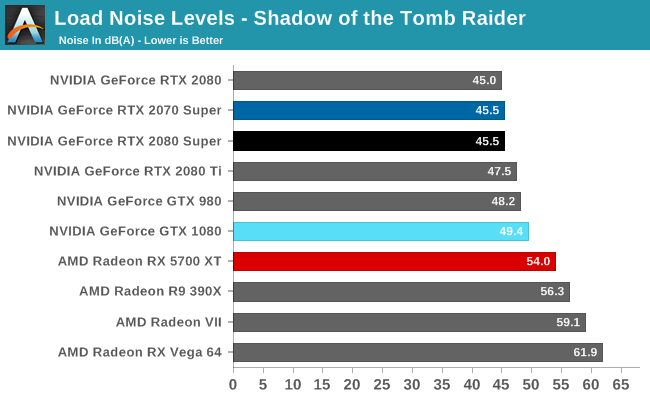

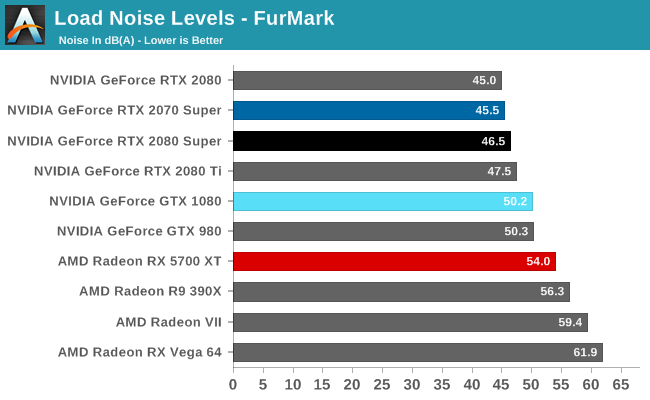

Last, but not least, we have noise. Again this is the same cooler as the RTX 2080 and RTX 2080 Ti, so the card has to work a bit harder to keep itself cool versus the original RTX 2080. The net result is that the RTX 2080 Super splits the difference between the original RTX 2080 and the RTX 2080 Ti, peaking at 46.5 dB(A). This is unlikely to be a very noticeable change as compared to the RTX 2080, but it’s louder nonetheless. I’m actually a bit surprised it didn’t pull even with the RTX 2080 Ti, but then our RTX 2080 Ti seems to run just a bit loud, period; even at idle it’s a bit louder.

111 Comments

willis936 - Tuesday, July 23, 2019

I think there is an error on the first page comparison table: 2080 Ti memory clock.

Also first I guess.

willis936 - Tuesday, July 23, 2019

Also the last paragraph of the conclusion should have "barring" rather than "baring".

Ryan Smith - Tuesday, July 23, 2019

This is what happens when you get overeager with copying & pasting... Thanks!

extide - Tuesday, July 23, 2019

and you say ending the bundle when I think you mean extending

Ryan Smith - Tuesday, July 23, 2019

The fault with that one lies solely with Word!

boozed - Tuesday, July 23, 2019

You guys really need a "corrections" link so the comments section isn't full of people pointing out typos and malapropisms (I'm guilty of the latter myself, though).

Cheers for the review

RSAUser - Wednesday, July 24, 2019

They rather need a Grammarly subscription.

willis936 - Tuesday, July 23, 2019

496 GB/s for $700. I'm curious to see a retrospective of GPU memory bandwidth vs. cost over the last ten years. It feels like it's really sat still compared to transistor count. Are GPU caches getting bigger? Even then there is little that can be done about the main memory bandwidth requirements of SIMD workloads. We have faster interconnects yet the buses are staying the same or getting smaller.

Stuka87 - Tuesday, July 23, 2019

Bandwidth matters less now than it did many years ago thanks to different types of compression being used. You can fit more data into the same amount of bandwidth now than you could years ago.

willis936 - Tuesday, July 23, 2019

Lossy compression isn't free. At some point the user will say "this looks bad". If that wasn't the case then why not compress every 64x64 tile to 1 KB? It's dependent on the data's entropy and many textures are high entropy. It's nice to have tuneable control over a soft cap, but it isn't a magic bullet that makes things better.

Lossless compression would be bad in this application. No one should make a system that imposes a maximum allowed entropy on artists.

Memory bandwidth always has been and remains the bottleneck of SIMD systems.