NVMe 1.4 Specification Published: Further Optimizing Performance and Reliability

by Billy Tallis on June 14, 2019 1:00 PM EST

Just over two years after the last major update, a new version of the NVM Express protocol specification for SSDs has been published. In recent years the NVMe standards body has taken a different approach to adding new features to the specification: rather than bundle them up into major spec updates that are published years apart, new features that are ready have been individually ratified and published as Technical Proposals (TPs) so that vendors can begin implementing and deploying support for those features without delay and without having to target a mere draft standard. Some of these features were implemented and publicly demonstrated by vendors just a few months after the NVMe 1.3 spec was published.

NVMe 1.4 incorporates 28 TPs that build atop NVMe 1.3, plus the various corrections and clarifications that went into versions 1.3a through 1.3d. Overall, NVMe 1.4 seems to be a much bigger update than 1.3 was. Several sections now have more in-depth explanations of new and existing features, so the specification is easier to understand even though it has grown from 298 pages for 1.3d to 403 pages for 1.4. Most of the diagrams below are straight out of the spec itself, and are much appreciated.

As usual, the new features aren't all relevant to all use cases for NVMe SSDs: some only make sense for embedded systems or hyperscaler deployments making heavy use of NVMe over Fabrics and virtualization, and as a result most of the new features are optional for SSDs to implement. The companion standards NVMe Management Interface and NVMe over Fabrics have also been evolving: NVMe-MI 1.1 was ratified in December, and NVMe over TCP has emerged as a third transport protocol for NVMeoF, joining the Fibre Channel and RDMA transports. Some of the additions to the base NVMe specification serve to accommodate changes to these companion standards.

The new optional features require updates to both the SSDs and the NVMe drivers in operating systems; without support on both sides, drives will fall back to using only older feature sets. Some changes higher up the software stack will also be required in order to make meaningful use of the new capabilities; in particular, many storage administration tools will benefit from being aware of new information and capabilities provided by SSDs. These software updates often take longer to develop than the relevant SSD firmware changes, so support for these new features will be showing up in specialized environments long before they are used by general-purpose OS distributions.

The NVMe SSD market is at the beginning of a period of major performance improvement enabled by the transition to PCIe 4.0, but this doesn't require any changes to the NVMe spec. The NVMe 1.4 spec does include some performance optimizations that rely on being smarter about how the storage is used, with better cooperation between the SSD and the host system. The other big category of new features pertains to error handling, with particular relevance to RAID rebuilds. Below are highlights from the new specification, but this is not an exhaustive list of what's new, and our analysis of potential use cases may not match what the hardware vendors are planning.

More Block Size and Alignment hints

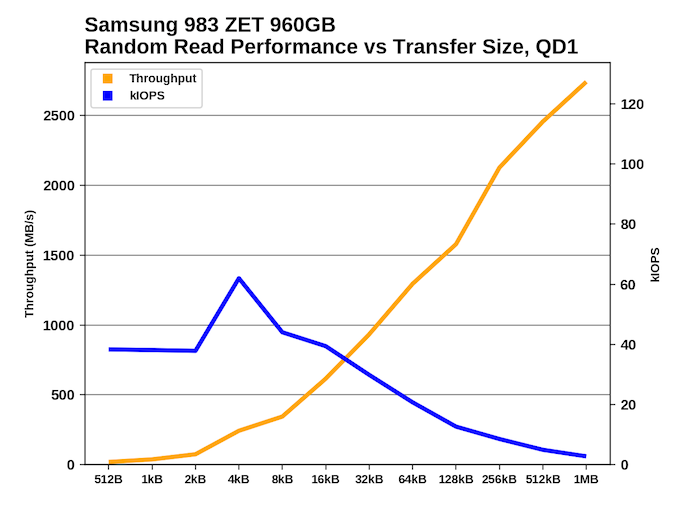

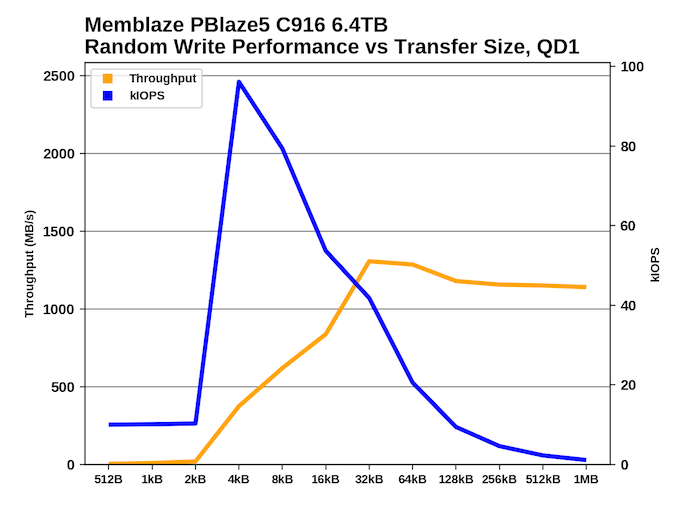

NVMe SSDs behave like regular block devices with sector sizes that are usually 512 bytes or 4kB. Modern NAND flash memory has native page sizes larger than 4kB, and erase block sizes measured in megabytes. This mismatch is the source of most of the complexity in the flash translation layer implemented by each SSD. The FTL allows software to continue to function correctly with the fiction that its storage has small block sizes, but some awareness of the real block and page sizes can allow the operating system or applications to make the job easier for the SSD and enable higher performance. The NVMe 1.3 spec introduced the Namespace Optimal IO Boundary feature, allowing SSDs to inform the host system of the basic alignment requirements for read and write commands to perform best. We've seen cases of drives that allow small block size access, but have very poor performance for transfers smaller than 4kB.

In the worst cases, drives should really just be dropping support for 512B sectors and defaulting to 4kB sectors, but where compatibility with older systems is required, hints about what access patterns work well can help. NVMe 1.4 gives SSDs the ability to communicate much more detailed information so that write and deallocate (TRIM) commands can match page and erase block sizes.

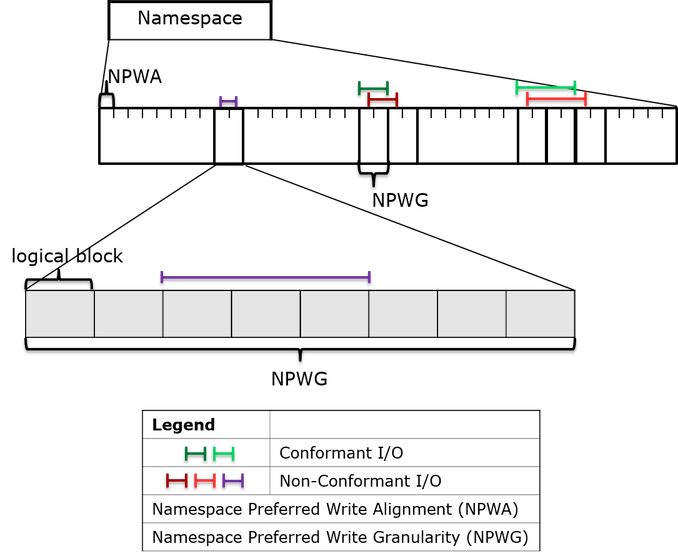

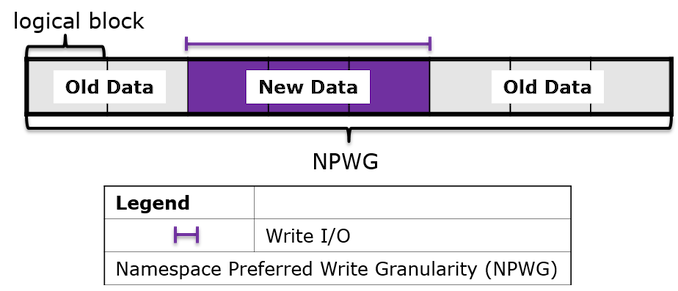

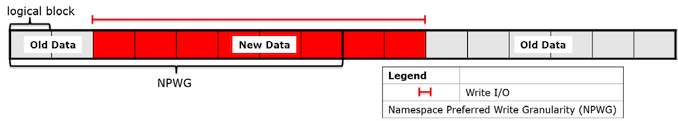

Drives may now report Namespace Preferred Write Alignment and Namespace Preferred Write Granularity values that will minimize the read-modify-write cycles that result from writing to only part of a NAND page. Likewise, the Namespace Preferred Deallocate Alignment and Namespace Preferred Deallocate Granularity apply to NVMe deallocate commands, the analog of ATA TRIM commands. Deallocate/TRIM commands that cover small data ranges or large but misaligned ranges are hard for SSDs to handle without increasing write amplification, which defeats much of the purpose of using explicit deallocation commands in the first place.

Above: Undersized writes may require the SSD to perform a read-modify-write operation

Below: Optimally sized but misaligned writes also hurt performance and increase write amplification

Drives that support the NVMe 1.3 Streams feature can also provide hints for the preferred write and deallocate granularity when using Streams, and these values will usually be a multiple of the above hints.

The responsibility for making good use of these hints will mostly fall to the OS and filesystem. RAID stripe sizes and filesystem block sizes can be set based on this information, and applications like databases that try to optimize storage performance by bypassing much of the OS's storage stack should also pay heed.
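As a rough illustration of how host software might act on these hints, the sketch below checks a write against a preferred alignment and granularity, and trims a deallocate range inward to the largest well-aligned sub-range. The function names and the 16kB example values are hypothetical, and everything is expressed in bytes for simplicity; it does not reflect how the spec encodes these fields or how any particular OS consumes them.

```python
def is_well_formed_write(offset, length, alignment, granularity):
    """True if the write starts on a preferred boundary and covers whole granules.

    All arguments are in bytes. A write that fails this check may force the
    drive into a read-modify-write cycle.
    """
    return length > 0 and offset % alignment == 0 and length % granularity == 0


def shrink_to_aligned(offset, length, alignment, granularity):
    """Trim a deallocate range inward to the largest aligned sub-range.

    A deallocate must never grow beyond the range the caller asked for, so the
    start is rounded UP to the next boundary and the length rounded DOWN to a
    whole number of granules. Returns (start, length) or None if nothing fits.
    """
    start = -(-offset // alignment) * alignment  # round up to a boundary
    end = offset + length
    if start >= end:
        return None
    usable = (end - start) // granularity * granularity
    return (start, usable) if usable else None


# Hypothetical drive reporting 16kB for both alignment and granularity.
ALIGN = GRAN = 16 * 1024
print(is_well_formed_write(0, 32 * 1024, ALIGN, GRAN))
print(is_well_formed_write(4 * 1024, 16 * 1024, ALIGN, GRAN))
print(shrink_to_aligned(4 * 1024, 40 * 1024, ALIGN, GRAN))
```

Rounding inward means some misaligned head and tail blocks are simply left allocated; whether that trade-off is acceptable is up to the filesystem or application.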

Faster Error Detection and Recovery

NVMe 1.4 introduces several new features to help with handling unrecoverable read errors and corrupted data, especially in RAID and similar scenarios where the host system may be able to recover data more quickly by simply fetching it from somewhere else.

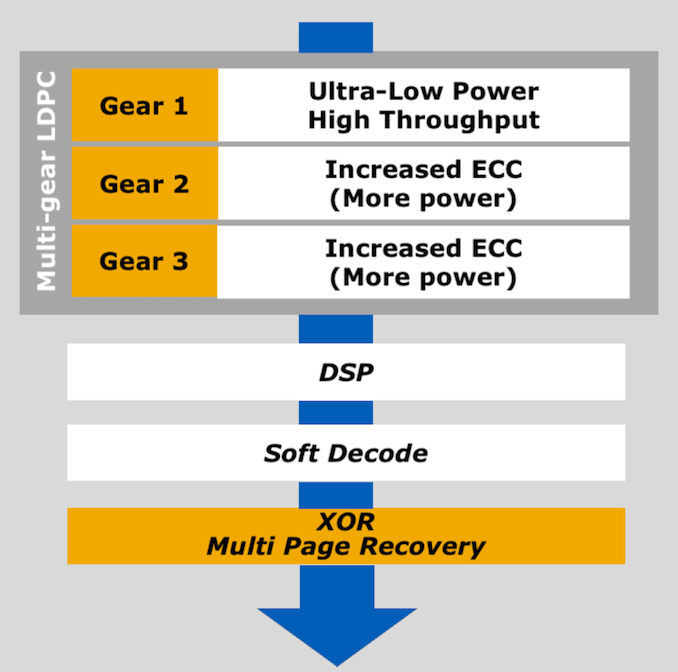

The Read Recovery Level feature lets the host system configure how hard the SSD should try to recover corrupted data. SSDs usually have several layers of error correction, each more robust but slower and more power-hungry than the last. In a RAID-1 or similar scenario, the host system will usually prefer to get an error quickly so it can try reading the same data from the other side of the mirror rather than wait for the drive to re-try a read and fall back to slower levels of ECC. NVMe already supports Time-Limited Error Recovery (TLER), but this only allows the host system to put a cap on error handling time in increments of 100ms. Read Recovery Levels allow drives to provide up to 16 different levels of error handling strategies, but drives implementing this feature are only required to implement a minimum of two different modes. This feature is configured on a per-NVM Set level.

Western Digital's client NVMe controller error correction layers
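The RAID-1 scenario described above can be sketched from the host's point of view. This is a toy model only: the `"fast_fail"` and `"full_recovery"` labels, the `make_mirror` helper, and the exception type are all hypothetical stand-ins, not names from the NVMe spec or any driver. The idea is simply that the host asks every mirror for a quick answer first, and only falls back to slow, thorough recovery if no mirror can return the data quickly.

```python
class UnrecoveredReadError(Exception):
    """Raised by a (simulated) drive that gives up on a read."""


def make_mirror(blocks, weak=()):
    """Build a toy drive. `blocks` maps LBA -> data; LBAs listed in `weak`
    are readable only when the drive is allowed to try its slowest ECC."""
    def read(lba, effort):
        if lba not in blocks or (lba in weak and effort == "fast_fail"):
            raise UnrecoveredReadError(lba)
        return blocks[lba]
    return read


def read_with_mirror_fallback(mirrors, lba):
    """Try all mirrors with minimal recovery effort, then all with full effort."""
    for effort in ("fast_fail", "full_recovery"):
        for read in mirrors:
            try:
                return read(lba, effort)
            except UnrecoveredReadError:
                continue  # quick failure: move on to the next copy
    raise UnrecoveredReadError(f"LBA {lba} unreadable on all mirrors")


# Drive A holds a degraded copy of LBA 1; drive B has lost LBA 1 entirely.
drive_a = make_mirror({0: b"A0", 1: b"A1"}, weak={1})
drive_b = make_mirror({0: b"B0"})
print(read_with_mirror_fallback([drive_a, drive_b], 0))
print(read_with_mirror_fallback([drive_a, drive_b], 1))
```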

For proactively avoiding unrecoverable read errors, NVMe 1.4 adds Verify and Get LBA Status commands. The Verify command is simple: it does everything a normal read command does, except for returning the data to the host system. If a read command would return an error, a verify command will return the same error. If a read command would be successful, a verify command will be as well. This makes it possible to do a low-level scrub of the stored data without being bottlenecked by the host interface bandwidth. Some SSDs will react to a fixable ECC error by moving or re-writing degraded data, and a verify command should trigger the same behavior. Overall, this should reduce the need for filesystem-level checksum scrubbing/verification. Each Verify command is tagged with a bit indicating whether the SSD should fail fast or try hard to recover data, similar to but overriding the above Read Recovery Level setting.

The Get LBA Status feature allows the drive to provide the host with a list of blocks that will probably result in an unrecoverable read error if a read or verify command is attempted. The SSD may have already detected ECC errors during automatic background scanning, or in severe cases it may be able to report which LBAs are affected by the failure of an entire NAND die or channel. The Get LBA Status feature also can be used to ask the drive to perform a scan of selected data ranges before returning the list of probably-unrecoverable blocks.

When the host system finds out about corrupted or lost data either from the Get LBA Status feature or from issuing read or verify commands and receiving an error in response, it can re-write that data to the same LBAs using a copy obtained elsewhere (backups or RAID recovery) and then carry on using those logical blocks normally, while the SSD will retire the affected physical blocks if necessary.
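The scrub-and-repair flow described above might look roughly like the following on the host side. This is a minimal sketch under stated assumptions: `verify`, `fetch_good_copy`, and `write` are hypothetical callables standing in for a Verify command, a RAID or backup lookup, and a normal write, and the drive is modeled as a plain dictionary.

```python
def scrub_and_repair(verify, fetch_good_copy, write, lbas):
    """Check each LBA and rewrite any bad block from a redundant copy.

    verify(lba)          -> True if the block reads back cleanly
    fetch_good_copy(lba) -> replacement data, or None if no copy exists
    write(lba, data)     -> rewrite the block at the same LBA

    Returns (repaired, lost): LBAs that were rewritten, and LBAs for which
    no good copy could be found.
    """
    repaired, lost = [], []
    for lba in lbas:
        if verify(lba):
            continue  # block is healthy, nothing to do
        data = fetch_good_copy(lba)
        if data is None:
            lost.append(lba)
        else:
            write(lba, data)  # same LBA; the drive may remap it internally
            repaired.append(lba)
    return repaired, lost


# Toy model: None marks a block the drive can no longer read.
primary = {0: b"alpha", 1: None, 2: None}
backup = {0: b"alpha", 1: b"beta"}

result = scrub_and_repair(
    verify=lambda lba: primary[lba] is not None,
    fetch_good_copy=backup.get,
    write=primary.__setitem__,
    lbas=[0, 1, 2],
)
print(result)
```

In a real system the list of suspect LBAs could come from the drive itself via Get LBA Status rather than from verifying every block.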

Persistent Memory Region

Most NVMe SSDs have a substantial amount of DRAM in addition to flash memory. The primary purpose of this DRAM is to serve as a cache for the flash translation layer's tables that track the mapping between logical block addresses and physical flash memory addresses. But NVMe has been exploring other ways to put that DRAM to use. The 1.2 spec introduced the Controller Memory Buffer, which makes some of the SSD's DRAM directly accessible through PCI address space. This allows the IO command submission and completion queues to live in the SSD's memory instead of the host CPU's memory, which can reduce latency on the submission side, and can cut out some unnecessary copying in NVMe over Fabrics situations where peer-to-peer DMA between the SSD and the network card allows data to bypass the host DRAM entirely. The new Persistent Memory Region (PMR) feature in NVMe 1.4 operates similarly: the host system can read or write to this memory directly using basic PCIe transfers, without any of the overhead of command queues. In practice a Controller Memory Buffer is usually intended to be used in support of normal NVMe operation, but a PMR won't be involved in any of that. Instead, it's a general-purpose chunk of memory that is made persistent thanks to the same power loss protection capacitors that allow a typical enterprise SSD's internal caches to be safely flushed in the event of an unexpected loss of host power. The contents of the PMR will automatically be written out to flash, and when the host system comes back up it can ask the SSD to reload the contents of the PMR.

The performance and capacity of a PMR won't come anywhere close to what NVDIMMs can provide, but a PMR provides some of the same benefits. Accessing the PMR is much simpler and faster than constructing an NVMe IO command and waiting for its completion. A typical implementation of the PMR feature will be able to accept a very high volume of writes without wearing out any flash memory, because its contents only need to be saved to flash in the event of a power failure. This makes a PMR a great place to store a database or filesystem journal that sees constant writes and can easily become a bottleneck.
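To make the journal use case more concrete, here is a small sketch of an append-only log kept in a directly addressable memory region. An anonymous `mmap` stands in for the PMR window; how a real PMR is mapped into a process, and what ordering or flush steps the hardware requires, are outside the scope of this example. The `RegionJournal` class and its record layout (length, CRC32, payload) are illustrative assumptions, not anything defined by NVMe.

```python
import mmap
import struct
import zlib

HEADER = struct.Struct("<II")  # payload length, CRC32 of payload


class RegionJournal:
    """Append-only journal stored in a byte-addressable memory region."""

    def __init__(self, region):
        self.region = region
        self.tail = 0  # next free byte offset

    def append(self, payload: bytes) -> int:
        if not payload:
            raise ValueError("empty records are not allowed")
        need = HEADER.size + len(payload)
        if self.tail + need > len(self.region):
            raise BufferError("journal region full")
        start = self.tail
        # Write the payload first and the header last, so a record that was
        # only partly written shows up as a zero length or a CRC mismatch.
        self.region[start + HEADER.size:start + need] = payload
        HEADER.pack_into(self.region, start, len(payload), zlib.crc32(payload))
        self.tail += need
        return start

    def replay(self):
        """Return every intact record, stopping at the first invalid one."""
        records, pos = [], 0
        while pos + HEADER.size <= len(self.region):
            length, crc = HEADER.unpack_from(self.region, pos)
            if length == 0:
                break  # untouched (zero-filled) space: end of journal
            body = bytes(self.region[pos + HEADER.size:pos + HEADER.size + length])
            if len(body) != length or zlib.crc32(body) != crc:
                break  # torn or corrupt record
            records.append(body)
            pos += HEADER.size + length
        return records


region = mmap.mmap(-1, 4096)  # stand-in for a mapped PMR window
journal = RegionJournal(region)
journal.append(b"begin tx 1")
journal.append(b"commit tx 1")
print(journal.replay())
```

Each append here is just a pair of memory stores, with no command to build and no completion to wait for, which is the property that makes this kind of region attractive for write-heavy journals.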

(Lite-On has implemented a similar feature in one of their datacenter SSDs, exposing a portion of its capacitor-protected DRAM as an additional NVMe namespace alongside the regular flash storage namespace. This provides similar performance consistency to a PMR and doesn't require any application or driver changes to support, but the raw performance can't be quite as high as a PMR.)

Source: NVM Express

14 Comments

Arbie - Friday, June 14, 2019

Does this have any implications for motherboard hardware in the near future (six months or so)? Or in fact for any hardware not part of the SSD? From the text it seems like it doesn't, unless I missed something. Excellent article BTW; thanks.

dooferorg - Friday, June 14, 2019

As with anything, technology always marches on. At least if a motherboard supports PCIe3, or soon PCIe4 then you'll be able to get appropriate cards to interface these newer drives with.

Billy Tallis - Friday, June 14, 2019

Motherboards are only minimally involved with NVMe; basically just for booting. Otherwise, they're just routing PCIe lanes from the CPU to the SSD and are blind to the higher-level protocols.

bobhumplick - Saturday, June 15, 2019

so does this mean that nvme 1.4 will be back compat with current boards if new drives are used? or will new hardware be needed? i know the other person basically asked that but i didnt fully understand if an answer had been given. seems like as long as the drive supports it as well as the ssd then it should work. unless the bios has to support it?

cygnus1 - Monday, June 17, 2019

As was mentioned, this is software change so I don't know that anything would be necessary depending on the changes in the protocol. But if anything is needed, I think a motherboard would just need to have a firmware update to enable NVMe 1.4 support. I haven't seen it said anywhere, but I would think as long as the device could boot and everything after that is just passed between the SSD and the OS, then the OS and the SSD could possibly just negotiate up to 1.4 even if the motherboard only supported 1.3 for boot purposes.

CheapSushi - Friday, June 14, 2019

I really like the idea of there being more variety for addon cards or storage drives that can act as accelerators or coprocessors.

willis936 - Saturday, June 15, 2019

Good stuff. I hope anyone who implements PMR also does periodic (like once a second) synchronization to storage. Otherwise it’s pointless to even bother sending the file across PCIe in the first place.

boeush - Saturday, June 15, 2019

You miss the point of PMR. It doesn't need to sync periodically, because it is automatically guaranteed persistence across power cuts. That's what makes it different from regular RAM, and provides the reason for sending the file across PCIe.

willis936 - Saturday, June 15, 2019

Backup power on drives is used to flush data that is cached in RAM to NAND. You can’t guarantee when power will be restored. If a design treats data that users care about as disposable then it’s a bad design. If it’s data the user doesn’t care about then why is it getting sent to storage at all? Main memory works fine. It costs very little to have the drive sync to NAND.

extide - Sunday, June 16, 2019

It syncs when the power goes out. It doesn't need to at any other time.