The NVIDIA GeForce GTX 1650 Review, Feat. Zotac: Fighting Brute Force With Power Efficiency

by Ryan Smith & Nate Oh on May 3, 2019 10:15 AM EST

TU117: Tiny Turing

Before we take a look at the Zotac card and our benchmark results, let’s take a moment to go over the heart of the GTX 1650: the TU117 GPU.

TU117 is for most practical purposes a smaller version of the TU116 GPU, retaining the same core Turing feature set, but with fewer resources all around. Altogether, coming from the TU116 NVIDIA has shaved off one-third of the CUDA cores, one-third of the memory channels, and one-third of the ROPs, leaving a GPU that’s smaller and easier to manufacture for this low-margin market.
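As a quick illustration of what that one-third cut means in absolute terms, the arithmetic can be sketched as follows. The TU116 figures used here (1536 CUDA cores, six 32-bit memory channels, 48 ROPs) are not given in this section and are assumed from NVIDIA's published specifications; the function and dictionary names are purely illustrative.

```python
from fractions import Fraction

# Assumed TU116 resources (not stated in this section).
TU116 = {"cuda_cores": 1536, "memory_channels_32bit": 6, "rops": 48}


def cut_by(resources, fraction):
    """Return a copy of `resources` with `fraction` of each resource removed."""
    keep = 1 - fraction
    return {name: int(count * keep) for name, count in resources.items()}


# A fully-enabled TU117 under the "one-third off everything" description.
tu117_full = cut_by(TU116, Fraction(1, 3))
bus_width_bits = tu117_full["memory_channels_32bit"] * 32

print(tu117_full)
print(bus_width_bits)
```

Under these assumptions a fully-enabled TU117 works out to 1024 CUDA cores, a 128-bit memory bus, and 32 ROPs.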

Still, at 200mm² in size and housing 4.7B transistors, TU117 is by no means a simple chip. In fact, it’s exactly the same die size as GP106 – the GPU at the heart of the GeForce GTX 1060 series – so that should give you an idea of how performance and transistor counts have (slowly) cascaded down to cheaper products over the last few years.

Overall, NVIDIA’s first outing with their new GPU is an interesting one. Looking at the specs of the GTX 1650 and how NVIDIA has opted to price the card, it’s clear that NVIDIA is holding back a bit. Normally the company launches two low-end cards at the same time – a card based on a fully-enabled GPU and a cut-down card – which they haven’t done this time. This means that NVIDIA is sitting on the option of rolling out a fully-enabled TU117 card in the future if they want to.

By the numbers, the actual CUDA core count differences between GTX 1650 and a theoretical fully-enabled GTX 1650 Ti are quite limited – to the point where I doubt a few more CUDA cores alone would be worth it – however NVIDIA also has another ace up its sleeve in the form of GDDR6 memory. If the conceptually similar GTX 1660 Ti is anything to go by, a fully-enabled TU117 card with a small bump in clockspeeds and 4GB of GDDR6 could probably pull far enough ahead of the vanilla GTX 1650 to justify a new card, perhaps at $179 or so to fill NVIDIA’s current product stack gap.

The bigger question is where performance would land, and if it would be fast enough to completely fend off the Radeon RX 570. Despite the improvements over the years, bandwidth limitations are a constant challenge for GPU designers, and NVIDIA’s low-end cards have been especially boxed in. Coming straight off of standard GDDR5, the bump to GDDR6 could very well put some pep into TU117’s step. But the price sensitivity of this market (and NVIDIA’s own margin goals) means that it may be a while until we see such a card; GDDR6 memory still fetches a price premium, and I expect that NVIDIA would like to see this come down first before rolling out a GDDR6-equipped TU117 card.
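To put a rough number on the potential GDDR6 uplift, peak memory bandwidth is simply the bus width in bytes multiplied by the per-pin data rate. The sketch below assumes a 128-bit bus with 8 Gbps GDDR5 for the GTX 1650 and, for the hypothetical GDDR6 card, the 12 Gbps rate used on the GTX 1660 Ti; none of these figures are stated in this section, and the GDDR6 card is speculation rather than a product.

```python
def memory_bandwidth_gb_s(bus_width_bits, data_rate_gbps):
    """Peak bandwidth in GB/s: bus width in bytes times per-pin data rate in Gbps."""
    return bus_width_bits / 8 * data_rate_gbps


gddr5 = memory_bandwidth_gb_s(128, 8)    # assumed GTX 1650 configuration
gddr6 = memory_bandwidth_gb_s(128, 12)   # hypothetical GDDR6 TU117 card
uplift = gddr6 / gddr5 - 1

print(f"GDDR5: {gddr5:.0f} GB/s, GDDR6: {gddr6:.0f} GB/s, uplift: {uplift:.0%}")
```

Under these assumptions the move would take the card from 128 GB/s to 192 GB/s, a 50% increase in peak bandwidth on the same bus.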

Turing’s Graphics Architecture Meets Volta’s Video Encoder

While TU117 is a pure Turing chip as far as its core graphics and compute architecture is concerned, NVIDIA’s official specification tables highlight an interesting and unexpected divergence in related features. As it turns out, TU117 has incorporated an older version of NVIDIA’s NVENC video encoder block than the other Turing cards. Rather than using the Turing block, it uses the video encoding block from Volta.

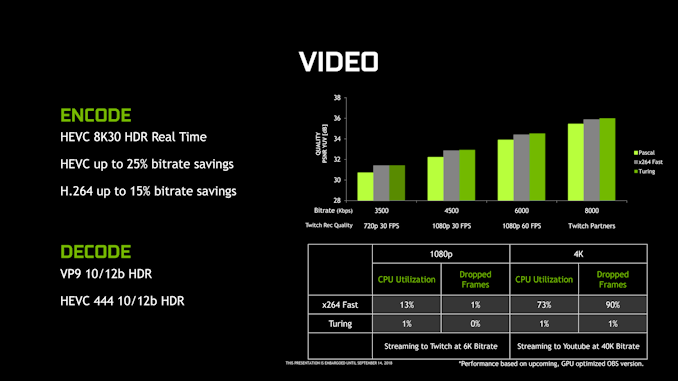

But just what does the Turing NVENC block offer that Volta’s does not? As it turns out, it’s just a single feature: HEVC B-frame support.

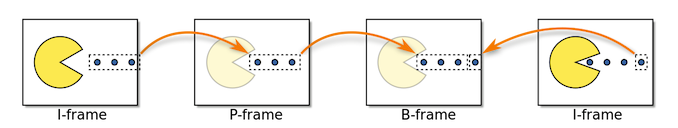

While it wasn’t previously called out by NVIDIA in any of their Turing documentation, the NVENC block that shipped with the other Turing cards added support for B(idirectional) Frames when doing HEVC encoding. B-frames, in a nutshell, are a type of advanced frame prediction for modern video codecs. Notably, B-frames incorporate information about both the frame before them and the frame after them, allowing for greater space savings versus simpler uni-directional P-frames.

I, P, and B-Frames (Petteri Aimonen / PD)
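One practical consequence of B-frames referencing a later frame is that frames can no longer be coded in the order they are displayed. The sketch below is a deliberately simplified model, assuming each B-frame depends only on the nearest I- or P-frame on either side; real HEVC reference structures are more flexible, and this is not a description of how NVENC works internally. The frame labels and function name are made up for illustration.

```python
def decode_order(display_order):
    """Reorder frames so every B-frame follows both of its reference frames.

    `display_order` is a list of labels such as "I0", "B1", "P3";
    the first character is the frame type.
    """
    out, pending_b = [], []
    for frame in display_order:
        if frame[0] == "B":
            pending_b.append(frame)   # must wait for the next I/P frame
        else:
            out.append(frame)         # I/P frame can be coded immediately
            out.extend(pending_b)     # B-frames waiting on it can now follow
            pending_b.clear()
    # Trailing B-frames with no later reference are appended as-is
    # (a simplification; an encoder would not normally end a sequence this way).
    return out + pending_b


frames = ["I0", "B1", "B2", "P3", "B4", "B5", "P6"]
print(decode_order(frames))
# ['I0', 'P3', 'B1', 'B2', 'P6', 'B4', 'B5']
```

In this toy model P3 has to be handled before B1 and B2 even though it is displayed after them, which is the kind of reordering and buffering a P-frame-only encoder never has to deal with.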

This bidirectional nature is what makes B-frames so complex, and this especially goes for video encoding. As a result, while NVIDIA has supported hardware HEVC encoding for a few generations now, it’s only with Turing that they added B-frame support for that codec. The net result is that relative to Volta (and Pascal), Turing’s NVENC block can achieve similar image quality with lower bitrates, or conversely, higher image quality at the same bitrate. This is where a lot of NVIDIA’s previously touted “25% bitrate savings” for Turing come from.

Past that, however, the Volta and Turing NVENC blocks are functionally identical. Both support the same resolutions and color depths, the same codecs, etc, so while TU117 misses out on some quality/bitrate optimizations, it isn’t completely left behind. Total encoder throughput is a bit less clear, though; NVIDIA’s overall NVENC throughput has slowly ratcheted up over the generations, in particular so that their GPUs can serve up an ever-larger number of streams when being used in datacenters.

Overall this is an odd difference to bake into a GPU when the other 4 members of the Turing family all use the newer encoder, and I did reach out to NVIDIA looking for an explanation for why they regressed on the video encoder block. The answer, as it turns out, came down to die size: NVIDIA’s engineers opted to use the older encoder to keep the size of the already decently-sized 200mm² chip from growing even larger. Unfortunately NVIDIA isn’t saying just how much larger Turing’s NVENC block is, so it’s impossible to say just how much die space this move saved. However, that the difference is apparently enough to materially impact the die size of TU117 makes me suspect it’s bigger than we normally give it credit for.

In any case, the impact to GTX 1650 will depend on the use case. HTPC users should be fine as this is solely about encoding and not decoding, so the GTX 1650 is as good for that as any other Turing card. And even in the case of game streaming/broadcasting, this is (still) mostly H.264 for compatibility and licensing reasons. But if you fall into a niche area where you’re doing GPU-accelerated HEVC encoding on a consumer card, then this is a notable difference that may make the GTX 1650 less appealing than the TU116-powered GTX 1660.

126 Comments

Marlin1975 - Friday, May 3, 2019 - link

Not a bad card, but it is a bad price.

drexnx - Friday, May 3, 2019 - link

yep, but if you look at the die size, you can see that they're kinda stuck - huge generational die size increase vs GP107, and even RX570/580 are only 232mm2 compared to 200mm2.

I can see how AMD can happily sell 570s for the same price since that design has been long paid for vs. Turing and the MFG costs shouldn't be much higher

Karmena - Tuesday, May 7, 2019 - link

Check the prices of RX570, they cost 120$ on newegg. And you can get one under 150$

tarqsharq - Tuesday, May 7, 2019 - link

And the RX570's come with The Division 2 and World War Z right now.

You can get the ASrock version with 8GB VRAM for only $139!

0ldman79 - Sunday, May 19, 2019 - link

Problem is on an OEM box you'll have to upgrade the PSU as well.

Dealing with normies for customers, the good ones will understand, but most of them wouldn't have bought a crappy OEM box in the first place. Most normies will buy the 1650 alone.

AMD needs 570ish performance without the need for auxiliary power.

Yojimbo - Friday, May 3, 2019 - link

Depending on the amount of gaming done, it probably saves over 50 dollars in electricity costs over a 2 year period compared to the RX 570. Of course the 570 is a bit faster on average.

JoeyJoJo123 - Friday, May 3, 2019 - link

Nobody in their right mind that's specifically on the market for an aftermarket GPU (a buying decision that comes about BECAUSE they're dissatisfied with the current framerate or performance of their existing, or lack of, a GPU) is making their primary purchasing decision on power savings alone. In other words, people aren't saying "Man, my ForkNight performance is good, but my power bills are too high! In order to remedy the exorbitant cost of my power bill, I'm going to go out and purchase a $150 GPU (which is more than 1 month of my power bill alone), even if it offers the same performance of my current GPU, just to save money on my power bill!"

Someone might make that their primary purchasing decision for a power supply, because outside of being able to supply a given wattage for the system, the only thing that matters is its efficiency, and yes, over the long term higher efficiency PSUs are better built, last longer, and provide a justifiable hidden cost savings.

Lower power for the same performance at a similar enough price can be a tie-breaker between two competing options, but that's not the case here for the 1650. It has essentially outpriced itself from competing viably in the lower budget GPU market.

Yojimbo - Friday, May 3, 2019 - link

I don't know what you consider being in a right mind is, but anyone making a cost sensitive buying decision that is not considering total cost of ownership is not making his decision correctly. The electricity is not free unless one has some special arrangement. It will be paid for and it will reduce one's wealth and ability to make other purchases.

logamaniac - Friday, May 3, 2019 - link

So I assume you measure the efficiency of the AC unit in your car and how it relates to your gas mileage over duration of ownership as well? since you're so worried about every calculation in making that buying decision?

serpretetsky - Friday, May 3, 2019 - link

It doesn't really change the argument if he does or does not take into account his AC unit in his car. Electricity is not free. You can ignore the price of electricity if you want, but your decision to ignore it or not does not change the total cost of ownership. (I'm not defending the electricity calculations above, I haven't verified them)