The Mate 20 & Mate 20 Pro Review: Kirin 980 Powering Two Contrasting Devices

by Andrei Frumusanu on November 16, 2018 8:10 AM EST - Posted in

- Smartphones

- Huawei

- Mobile

- Kirin 980

- Mate 20

- Mate 20 Pro

Kirin 980 Second Generation NPU - NNAPI Tested

We tested the first-generation Kirin NPU back in January in our Kirin 970 review. Back then, we were quite limited in the benchmarks we were able to run, and I mostly relied on Master Lu’s AI test. That test is still around, and we also used it to measure the performance of Apple’s new A12 neural engine. Unfortunately for the Mate 20s, the benchmark isn’t compatible yet: it seemingly doesn’t use HiSilicon’s HiAI API on these phones and instead falls back to a CPU implementation for processing.

Google finalised the NNAPI back in Android 8.1, and as is usually the case with these things, an API first needs to be available before applications can make use of exotic new features such as dedicated neural inferencing engines.

“AI-Benchmark” is a new tool developed by Andrey Ignatov from the Computer Vision Lab at ETH Zürich in Switzerland. As far as I’m aware, it is one of the first benchmark applications to make extensive use of Android’s new NNAPI rather than relying on each SoC vendor’s own SDK tools and APIs. This is an important distinction from AIMark, as AI-Benchmark should better represent the NN performance to be expected from an application that uses the NNAPI.

Andrey extensively documents the workloads, such as the NN models used and what their function is, and has also published a paper on his methods and findings.

One thing to keep in mind is that the NNAPI isn’t some universal translation layer that can magically run any neural network model on an NPU: both the API and the SoC vendor’s underlying driver must support the exposed functions and be able to run them on the IP block. The distinction here lies between models that use features not yet supported by the NNAPI, which therefore have to fall back to a CPU implementation, and models that can be hardware accelerated and operate on quantized INT8 or FP16 data. There are also models relying on FP32 data, and here again, depending on the underlying driver, these can run either on the CPU or, for example, on the GPU.
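The fallback behaviour described above can be pictured as a placement decision per operation: if the driver advertises support for an operation at a given data type, it can run on the accelerator, otherwise it lands on the CPU. The Python sketch below is a simplified illustration under stated assumptions. `DRIVER_SUPPORT`, `Op`, `partition_model` and `runs_fully_accelerated` are hypothetical names, the capability table is invented for illustration, and it does not describe the actual NNAPI runtime or any vendor driver.

```python
from dataclasses import dataclass

# Hypothetical capability table: the (operation, data type) pairs a vendor
# driver advertises for its accelerator. Illustrative only; it does not
# describe any real HiSilicon or Qualcomm driver.
DRIVER_SUPPORT = {
    ("CONV_2D", "FP16"),
    ("DEPTHWISE_CONV_2D", "FP16"),
    ("RELU", "FP16"),
}


@dataclass(frozen=True)
class Op:
    name: str
    dtype: str  # "INT8", "FP16" or "FP32"


def partition_model(ops, supported):
    """Place each op on the accelerator if the driver supports it, else on the CPU."""
    return [
        (op.name, "accelerator" if (op.name, op.dtype) in supported else "cpu")
        for op in ops
    ]


def runs_fully_accelerated(ops, supported):
    """True only if no op in the model has to fall back to the CPU."""
    return all(target == "accelerator" for _, target in partition_model(ops, supported))


# Usage: the same tiny network as an FP16 model and as an INT8 quantized model.
fp16_model = [Op("CONV_2D", "FP16"), Op("RELU", "FP16")]
int8_model = [Op("CONV_2D", "INT8"), Op("RELU", "INT8")]
print(partition_model(fp16_model, DRIVER_SUPPORT))  # both ops on the accelerator
print(partition_model(int8_model, DRIVER_SUPPORT))  # both ops fall back to the CPU
```

A real runtime also has to deal with models that are only partially supported, where data must move between the accelerator and the CPU; this sketch only reports where each operation would go.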

For the time being, I’m refraining from using the app’s scores and will simply compare each test’s inference times individually. Another difference in presentation is that we’ll go through the test results grouped by the targeted model acceleration type.
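Comparing individual inference times rather than an aggregate score comes down to a per-test ratio between two devices, optionally grouped by acceleration type. A minimal Python sketch, assuming per-test inference times in milliseconds keyed by test name; `per_test_speedup` and `group_by_type` are hypothetical helpers, not part of AI-Benchmark.

```python
def per_test_speedup(baseline_ms, candidate_ms):
    """Return {test: baseline_time / candidate_time} for tests both devices ran.

    Inference times are lower-is-better, so a ratio above 1.0 means the
    candidate device finished that test faster than the baseline.
    """
    common = baseline_ms.keys() & candidate_ms.keys()
    return {test: baseline_ms[test] / candidate_ms[test] for test in sorted(common)}


def group_by_type(speedups, test_types):
    """Bucket per-test ratios by acceleration type, e.g. "CPU", "INT8", "FP16", "FP32"."""
    groups = {}
    for test, ratio in speedups.items():
        groups.setdefault(test_types[test], {})[test] = ratio
    return groups
```

Keeping the ratios per test avoids a single weighted score hiding the fact that, on a given phone, some tests may be accelerated while others fall back to the CPU.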

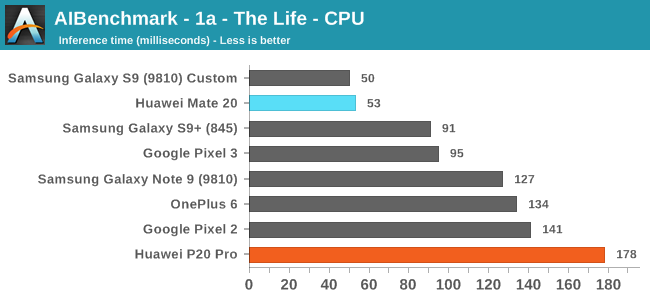

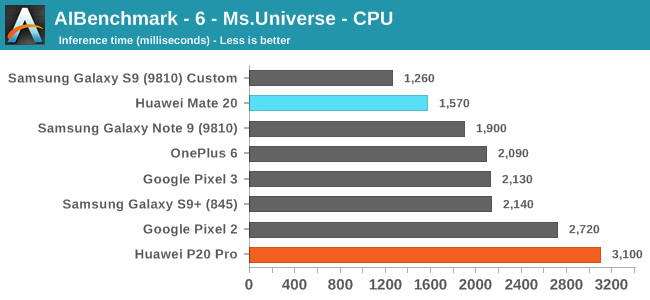

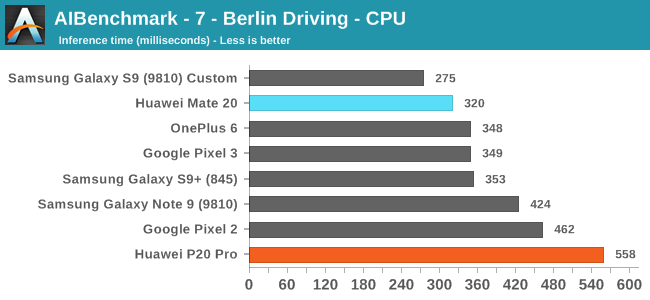

The first three CPU tests rely on models with functions that are not yet supported by the NNAPI. Here, what matters is simply the CPU’s performance as well as its response time. I mention the latter because the workload is transactional in nature: we are testing just a single image inference. This means that mechanisms such as DVFS and scheduler responsiveness can have a huge impact on the results. This is best demonstrated by the fact that my custom kernel for the Exynos 9810 in the Galaxy S9 performs significantly better than the stock kernel on the same chip in the Note9.

Still, comparing the Huawei P20 Pro (the most up-to-date software stack with the Kirin 970) to the new Mate 20, we see some really impressive results from the latter. This showcases both the performance of the A76 cores and possibly improvements in HiSilicon’s DVFS and scheduler.
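Because each test times a single inference, the measurement can include the time it takes for DVFS to raise clocks and for the scheduler to move the work onto a fast core. One way to see that effect is to record the first run separately from the median of the following runs. The Python sketch below is a generic timing harness under that assumption; `infer` is a hypothetical stand-in for one inference call, and running it on a desktop will not reproduce phone DVFS behaviour.

```python
import statistics
import time


def time_inferences(infer, runs=10):
    """Time `infer()` `runs` times; return (first_run_ms, median_of_remaining_ms).

    `infer` is a hypothetical stand-in for one single-image inference call.
    """
    if runs < 2:
        raise ValueError("need at least two runs to compare cold and warm timings")
    timings_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        infer()
        timings_ms.append((time.perf_counter() - start) * 1000.0)
    return timings_ms[0], statistics.median(timings_ms[1:])


# Usage with a dummy CPU-bound workload in place of a real model.
first_ms, warm_ms = time_inferences(lambda: sum(i * i for i in range(200_000)))
print(f"first run: {first_ms:.2f} ms, warm median: {warm_ms:.2f} ms")
```

A benchmark that reports only the first number is effectively measuring how quickly the platform reacts to a sudden burst of work, which is why kernel tuning can move the results so much.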

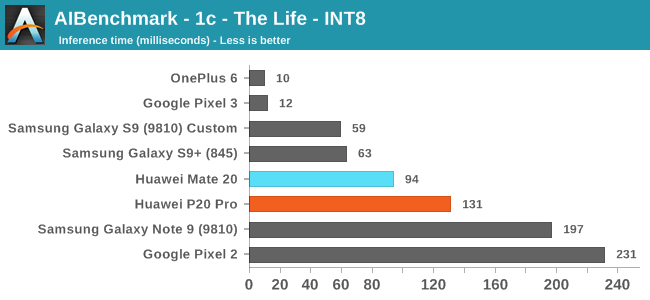

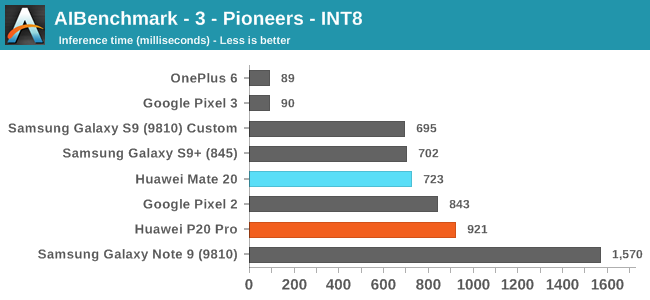

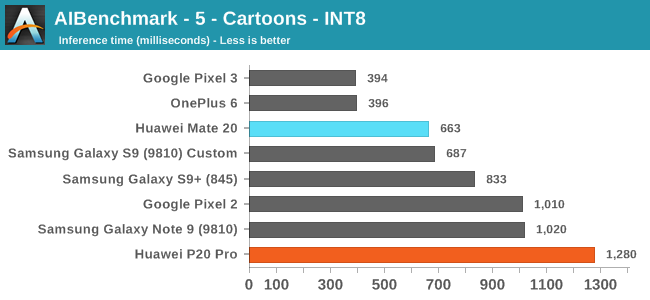

Moving on to the next set of tests, these are based on 8-bit integer quantized NN models. Unfortunately for the Huawei phones, HiSilicon’s NNAPI drivers still don’t seem to expose hardware acceleration for these models. Andrey shared with me that, in his communications with Huawei, the company said it plans to rectify this in a future version of the driver.

Effectively, these tests don’t use the NPU on the Kirins either, and they are again a showcase of CPU performance.

On the Qualcomm devices, we see the OnePlus 6 and Pixel 3 far ahead in performance, even compared to the Galaxy S9+, which uses the same chipset. The reason is that both of these phones run a new, updated NNAPI driver from Qualcomm that came with the Android 9 (Pie) BSP update. Here, acceleration is facilitated through the HVX DSPs.
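For context on what an 8-bit quantized model is: NNAPI’s asymmetric quantized tensor type (`ANEURALNETWORKS_TENSOR_QUANT8_ASYMM`) stores each value as an unsigned 8-bit integer together with a per-tensor `scale` and `zeroPoint`, using real_value = (integer_value - zeroPoint) * scale. The plain-Python sketch below shows that mapping. The min/max-based choice of `scale` and `zeroPoint` in `choose_params` is a simple assumption for illustration, not how the AI-Benchmark models were actually quantized.

```python
def choose_params(values):
    """Pick (scale, zero_point) so that [min, max] (widened to include 0.0) spans 0..255.

    Simple min/max calibration; an assumption for illustration only.
    """
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255.0 if hi > lo else 1.0
    zero_point = int(round(-lo / scale))  # the integer code that represents real 0.0
    return scale, zero_point


def quantize(values, scale, zero_point):
    """Map real values to uint8 codes, clamping to the representable range."""
    return [min(255, max(0, int(round(v / scale)) + zero_point)) for v in values]


def dequantize(codes, scale, zero_point):
    """NNAPI QUANT8_ASYMM convention: real_value = (integer_value - zeroPoint) * scale."""
    return [(q - zero_point) * scale for q in codes]


weights = [-1.0, -0.5, 0.0, 0.25, 2.0]
scale, zp = choose_params(weights)
codes = quantize(weights, scale, zp)
print(codes)
print(dequantize(codes, scale, zp))  # approximately the original values
```

Each value shrinks from four bytes (FP32) to one, at the cost of a rounding error of roughly `scale / 2` per value, which is why such models need driver and hardware support for integer arithmetic to be accelerated.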

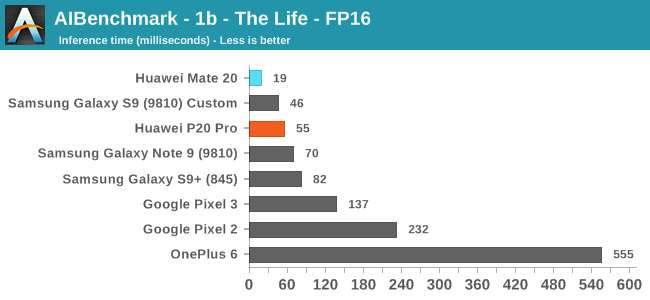

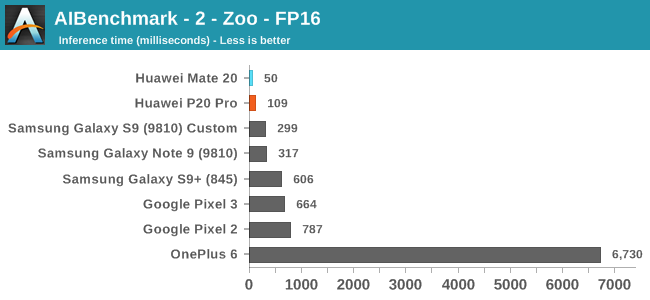

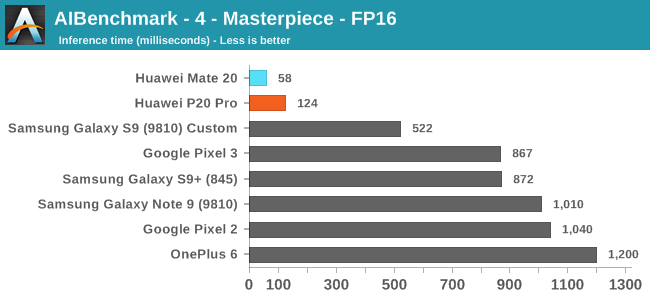

Moving on to the FP16 tests, we finally see the Huawei devices make use of the NPU and post leading scores on both the old and new generation SoCs. This is where the Kirin 980’s >2x NPU improvement finally materialises, with the Mate 20 showing a big lead.

I’m not sure whether the other devices are running the workloads on the CPU or on the GPU. The OnePlus 6 seems to suffer from a very odd regression in its NNAPI drivers that makes it perform an order of magnitude worse than other platforms.

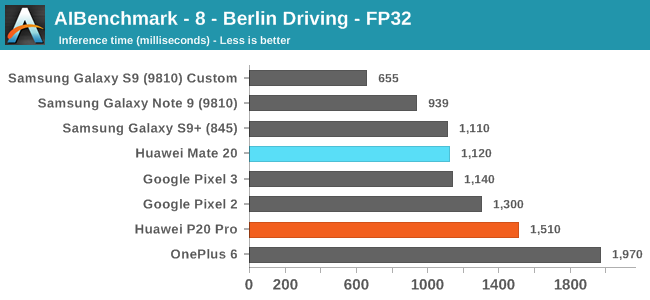

Finally, in the last test, which uses an FP32 model, most phones should again be running the workload on the CPU. Here, the Mate 20’s improvement is more limited.
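The FP16 and FP32 tests differ in the numeric format of the model’s values. Python’s standard `struct` module packs IEEE 754 half-precision values with the `'e'` format code and single-precision values with `'f'`, which makes the trade-off easy to see: FP16 needs half the storage of FP32 but keeps far fewer significant bits and cannot represent finite values above 65504. This is a generic illustration of the two formats, not a model of how any NPU or driver handles them.

```python
import struct


def round_trip(value, fmt):
    """Pack `value` into the given IEEE 754 format and unpack it again."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


for label, fmt in (("FP16", "<e"), ("FP32", "<f")):
    stored = round_trip(0.1, fmt)
    print(f"{label}: {struct.calcsize(fmt)} bytes, 0.1 is stored as {stored!r}")

# FP16's largest finite value is 65504; clearly larger values cannot be packed.
try:
    struct.pack("<e", 70000.0)
except OverflowError as err:
    print("FP16 overflow:", err)
```

Halving the bytes per value halves memory traffic for the same model, which is one reason dedicated inference hardware commonly targets FP16 or INT8 rather than FP32.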

Overall, AI-Benchmark was at least able to validate some of Huawei’s NPU performance claims, even though the real conclusion we should draw from these results is that the NNAPI drivers on most devices are currently inherently immature and still very limited in their functionality. Sadly, this is once again in stark contrast to where Apple’s CoreML ecosystem is today.

I refer back to my conclusion from earlier in the year regarding the Kirin 970: I still don’t see the NPU as something that is obviously beneficial to users, simply because we just don’t have the software applications available to make use of the hardware. I’m not sure to what extent Huawei uses the NPU for camera processing, but other than such first-party use cases, NPUs currently still seem mostly inconsequential to the device experience.

141 Comments


SydneyBlue120d - Friday, November 16, 2018

I'd like to see some mobile speed tests compared with Snapdragon and Intel modems :)

Great review as always, including the Lorem Ipsum on the video recording page :P

Andrei Frumusanu - Friday, November 16, 2018

Cellular tests are extremely hard to do in non-controlled environments. It's something I'm afraid of doing again, as in the past I found issues with my previous mobile carrier that really opened my eyes to just how much the tests are affected by the base station's configuration.

I'm editing the video page and will update it shortly.

jjj - Friday, November 16, 2018

When you do the GPU's perf per W, why use peak rather than the hot-state average? And any chance you have any hot perf per W per mm² data? That would be interesting to see.

Have you checked if the Pto with max brightness throttles harder, and by how much?

Hope LG can sort out power consumption for their OLED or they'll have quite some issues with foldable displays.

Andrei Frumusanu - Friday, November 16, 2018

It's a fair point. It's something I can do in the future, but I'd have to go back and measure across a bunch of devices.

What did you mean by Pto? The power consumption of the screen at max brightness should be quite high if you're showing very high APL content, so by definition that adds 2-3W to the TDP, even though it's dissipated over a large area.

jjj - Friday, November 16, 2018

The Pro question was about the SoC throttling harder because of the display. That could be, and should be, the case to some extent, especially if they did nothing to mitigate it, but I was curious how large the impact might be in a worst-case scenario, so SoC throttling with the display at min power vs max power.

Also related to this, does heat impact how brightness is auto-adjusted?

Andrei Frumusanu - Friday, November 16, 2018

The 3D benchmarks are quite low APL, so they shouldn't make any notable difference in power. Manhattan, for example, is very dark.

jjj - Friday, November 16, 2018

I suppose you could run your CPU power virus while manipulating the display too, just to see the impact. The delta between min and max power for the display is so huge that you've got to wonder how it impacts SoC perf.

Chitti - Friday, November 16, 2018

Nothing about speakers and sound output via USB-C and in Bluetooth earphones?

Andrei Frumusanu - Friday, November 16, 2018

I'll add a speaker section over the weekend. The Mate 20 Pro's speakers have a good amount of bass and mid-range, but they lack some presence in the higher frequencies, and there are some obvious reverberations on the back glass panel. Covering up the USB-C port, where the sound is coming from, muffles things heavily, so this is a big no-no in terms of audio quality when you have it plugged in.

The Mate 20 just has the bottom mono speaker, which is a disadvantage in itself. Here there's some lack of bass and mid-range, with a very tinny bottom-firing sound.

The USB-C headphones are good, but lacking a bit in clarity and in the lower high frequencies. They're OK, but definitely not as good as Apple's, Samsung's or LG's included units.

jjj - Friday, November 16, 2018

Went over the review quickly; is there anything on the proprietary microSD and storage (NAND) in general? Just point me to the right page if possible.