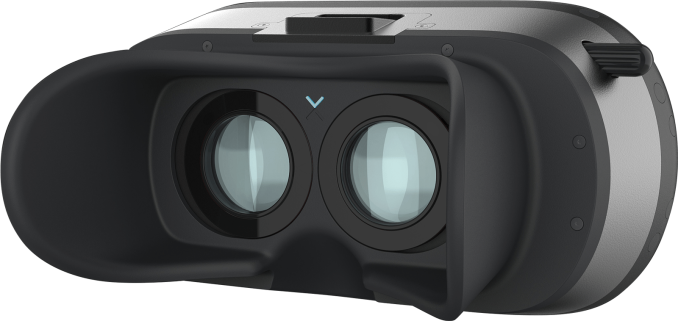

VR Startup Varjo Announces Shipping of High Resolution Headset Prototype, Aimed at Professional Markets

by Nate Oh on November 30, 2017 6:45 AM EST

Today at Slush 2017, Finnish VR startup Varjo Technologies is announcing that their first formal prototype headset will be shipping within the next month, as well as unveiling their collaboration efforts with a number of new development partners. While we don’t often cover these smaller companies, there are a few notable elements to Varjo’s endeavors. First is the hardware itself: Varjo’s VR headset incorporates two displays where the inner central region has very high resolution and pixel density, surrounded by a coarser lower resolution peripheral region. The second is the niche: Varjo is focusing solely on the professional market.

Update (11/30/2017): Article was updated to reflect the latest information available, including concrete details of the Alpha Prototype.

The headset – and the company itself for that matter – was outed this past June when Varjo exited their startup stealth period, and at that time they gave private demos to press and analysts with a modified Oculus Rift (CV1), calling the setup their "20|20" prototype. In that configuration, the Oculus display provided the 100 degree FOV peripheral and two 1920 x 1080 OLED microdisplays, courtesy of Sony DSC-RX100M4 compact cameras, provided the in-focus 20 degree region in 0.7 inches (diagonal) for a pixel density of around 3000 ppi.
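The quoted pixel density can be sanity-checked from the resolution and panel size. The sketch below assumes square pixels and that the 0.7 inch figure is the diagonal of the full 1920 x 1080 active area; the function name is purely illustrative.

```python
import math

def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density along the diagonal, assuming square pixels."""
    diagonal_px = math.hypot(width_px, height_px)  # length of the diagonal in pixels
    return diagonal_px / diagonal_in

ppi = pixels_per_inch(1920, 1080, 0.7)
print(f"{ppi:.0f} ppi")
```

Under those assumptions the result lands a little above 3100 ppi, consistent with the "around 3000 ppi" figure.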

Scientifically, what Varjo is adopting is a form of foveated projection – mimicking human vision’s out-of-focus periphery and in-focus center. Foveation is widely considered to be one of the critical steps to the next generation of VR headsets, as matching the human eye's own capabilities is, if executed correctly, a more direct means to having high pixel density HMDs without all of the manufacturing challenges of building a continuous high density HMD, and without the massive rendering workload required to fill such an HMD. Various GPU vendors have already been working on foveated rendering, and now with Varjo's prototype we're going to start seeing forms of foveated projection.

For their new Alpha Prototype HMD, the high density zone, called the "Varjo Bionic display," features for each eye 1920 x 1080 at 8 bpp, with a 35 degree horizontal FOV and a refresh rate to be determined. The outer peripheral, or the "context display," runs at a 100 degree FOV 1080 x 1200 at 90Hz and 8 bpp, one for each eye. Varjo did mention that current prototypes are running at 60Hz, but that 120Hz rates are currently undergoing testing and are seen as a reachable goal. As for latency, Varjo stated that the displays are "typical row-updateable displays, with microsecond switch time" and so note that latency is generally low.
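To put the two zones on a common footing, it helps to express them as pixels per degree of view. The following is a rough sketch only: it spreads pixels evenly across the field of view and ignores lens distortion, and it assumes the context display's 100 degree figure is horizontal and applies to its 1080-pixel width, neither of which Varjo has confirmed.

```python
def pixels_per_degree(horizontal_px: int, horizontal_fov_deg: float) -> float:
    """Average horizontal pixels per degree, ignoring lens distortion."""
    return horizontal_px / horizontal_fov_deg

focus = pixels_per_degree(1920, 35)     # "Bionic display", per eye
context = pixels_per_degree(1080, 100)  # "context display", per eye (FOV axis assumed)

print(f"focus:   {focus:.1f} px/deg")
print(f"context: {context:.1f} px/deg")
print(f"ratio:   {focus / context:.1f}x")
```

By this estimate the focus region carries roughly five times the angular pixel density of the surrounding context display.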

The two displays are complemented by an optical combiner and gaze tracker so that the focused regions are in line with a user’s gaze, though to be clear it is the 'projections' that are moving. More concretely, the Alpha Prototype features integrated 100Hz stereo eye-tracking, as well as Steam VR tracking with controller support. As a whole, Varjo sometimes refers to these combined technologies as “Bionic display” as well, and in the past has used that term metonymically for the headset itself. While not present on the Oculus based prototype, their headset concepts and test types have external cameras to allow for mixed reality (MR) and augmented reality (AR) functionality via video see-through, as opposed to the optical see-through of HMDs like HoloLens. These elements continue to be absent from the Alpha Prototype, with Varjo looking to bring in mixed reality later next year.

As for miscellaneous support, the prototype does not have integrated speakers or a headphone jack, both of which are aspects Varjo wants to include in the future. Only projects compiled on Unreal or Unity will run on the Alpha Prototype, with Unreal 4.16 or later and Unity 2017.1.1f1 or later recommended. Varjo is additionally recommending two DisplayPorts and two USB 3.0 ports, though it is not clear if the HMD can operate without all four of the connections. Otherwise, Varjo will supply all the software updates for the device.

Taking a step back, what Varjo is going for is essentially removing the screen-door effect of current VR HMDs by offering an area of very high pixel density. But the tradeoff with that pixel density is the limited size and FOV of the OLED microdisplays, and thus foveated projection, with Varjo filling in the rest of the FOV with a lower resolution. Executed correctly, this means that the high resolution display is always aligned with the center of the user's FOV – where a user's eyes can actually resolve a high level of detail – while the coarser peripheral vision is exposed to a lower pixel density. As mentioned earlier, in principle this allows for the benefits of high resolution rendering without all of the drawbacks of a full FOV high density display.

However, this is not to say that Varjo's approach is without its own challenges. Such a solution only works if A) the displays are visually seamless, B) the displays have synchronized high refresh rates, and C) eye-tracking ensures that the focused high density region is always where a user’s gaze is, all elements that Varjo is aiming for. These become especially important once video see-through based AR/MR is in the picture. The retrofitted Oculus highlighted some of these concerns, as some press observed jitter due to the disjointed refresh rates; for the Alpha Prototype, the context display still features the Oculus/Vive specifications of dual 1080 x 1200 at 90Hz. It also remains to be seen how well Varjo's eye tracking can keep up with rapid eye and head movement.
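To illustrate why mismatched refresh rates matter, the sketch below compares a 90Hz display against a 60Hz one, the two rates mentioned for the prototypes. It is a simplified model rather than a description of Varjo's pipeline: it assumes both displays start in phase and free-run, and the helper name is hypothetical.

```python
from fractions import Fraction
from math import floor

def offsets_ms(fast_hz: int, slow_hz: int, frames: int) -> list[float]:
    """For each refresh of the faster display, return how old (in ms)
    the most recent refresh of the slower display is at that moment."""
    result = []
    for k in range(frames):
        t = Fraction(k, fast_hz)  # time of the k-th fast refresh, in seconds
        last_slow = Fraction(floor(t * slow_hz), slow_hz)  # latest slow refresh at or before t
        result.append(float((t - last_slow) * 1000))
    return result

print([round(x, 2) for x in offsets_ms(90, 60, 6)])
# [0.0, 11.11, 5.56, 0.0, 11.11, 5.56]
```

In this model the slower image is fresh on one refresh, about 11ms old on the next, and about 6ms old on the third, repeating every 1/30 of a second. A varying offset of this kind between the two image layers is one plausible source of the jitter that some press observed.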

The other side benefit is that using off-the-shelf-ish Sony microdisplays significantly reduces costs and man-hours for rapid prototyping, as demonstrated by the retrofitted Oculus. So in the full context, the idea of mimicking human vision is deeply intertwined with the design and engineering choices, marketing appeal of human-eye resolution notwithstanding.

Another thing to note is that the Sony microdisplays are around $850, which by itself is more than many consumer HMDs already on the market. The Varjo headset is intended for the professional market and will be priced that way; Varjo described a typical user as using dual Quadro P6000s, which is not only an immense amount of graphical horsepower but also around $10000 for the graphics cards alone. Coincidentally, Varjo states that their first professional HMDs will be priced under $10000. For PCs intending to power the Alpha Prototype, Varjo is recommending AMD FX 9590 or Intel Core i7-6700-level CPU performance or better, AMD Radeon RX Vega or NVIDIA GeForce GTX 1080-level GPU performance or better, and at least 16 GB of DDR4 RAM.

Varjo headset prototype at GTC 2017 Europe, featuring NVIDIA CEO Jen-Hsun Huang and Varjo CEO Urho Konttori (Varjo Instagram)

In practice, positioning for the professional market requires a lot of collaboration with regard to workstation applications, certification, drivers, and the simple calculus involved in fitting a specialty technology into a workflow. This is why the second part of today’s announcement mentions a number of development partners; Varjo lists 20th Century Fox, Airbus, Audi, BMW, Technicolor, and Volkswagen as headliners. And on the GPU side, Varjo is involved with AMD and NVIDIA, though no further details were given. But even at a glance there are interesting avenues to pursue, such as LiquidVR on AMD’s side, and Holodeck or VRWorks on NVIDIA’s.

To be clear, details in general were limited, but this is to be expected. After all, formal prototypes are just now finding their way into development partners’ hands: the Alpha Prototype headset, shipping with Unreal and Unity plugins (or a C++ API if neither is applicable), is slated for select partners before 2018, while Beta Prototypes are specified to begin shipping in Q1 2018 to partners involved in design, engineering, simulation, and entertainment. Varjo is still a developing company, and by venture capital standards they certainly are, having received a round of Series A funding just two months ago.

It’s worth noting that Varjo positions itself as a VR hardware and software company, and is now describing their headsets more as a vehicle for Bionic display. And for the time being, their currently open positions reflect continued effort in software, and for Bionic display Varjo plans to publish API information and developer resources in early 2018, around the time when Beta Prototypes are planned to ship. Now that the “20|20” headsets have successfully courted a number of interested companies, Varjo can look more broadly in both productizing a headset and planning an ecosystem that it could be successful in.

Varjo expects to launch its first commercial headsets in 2018, with a public roadmap targeting Q4 2018 for launching "professional products." Interested parties may apply for early access on their website with the form at the bottom of their webpages; to note, headsets are only loaned for the duration of the early access testing period.

Source: Varjo

19 Comments


ZeDestructor - Thursday, November 30, 2017 - link

I'm liking this. If it's not too expensive while working with the Oculus/Vive SDKs, it would be up there for consideration in my book.

MamiyaOtaru - Tuesday, December 5, 2017 - link

you're in luck! it will be "under $10,000"

Valantar - Thursday, November 30, 2017 - link

"Taking a step back, what Varjo is going for is essentially removing the screen-door effect of current VR HMDs via by offering an area of very high pixel density. But the tradeoff with that pixel density is the limited size and FOV of the OLED microdisplays, and thus foveated projection, with Varjo filling in the rest of the FOV with a lower resolution. Executed correctly, this means that the high resolution display is always aligned with the center of the user's FOV – where a user's eyes can actually resolve a high level of detail – while the coarser peripheral vision is exposed to a lower pixel density. As mentioned earlier, in principle this allows for the benefits of high resolution rendering without all of the drawbacks of a full FOV high density display."How are they supposed to do this without mechanically moving the high-density display (or the entire display setup)? After all, eyes can move quite a lot without the head moving, and unless I'm misunderstanding this entirely, the high density display will only align with the center of the user's FOV when looking straight ahead. I get the value of this for specific focused tasks where the user needs to look at a specific place/object, but for anything dynamic this would seem inferior to a pure foveated rendering approach - at least that can follow the user's eye movement as quick as the GPU can render new frames (and the eye tracker can inform the GPU), and you won't have the "oh, you're looking a bit to the side? yeah, there's no detail there" effect.

SunnyNW - Thursday, November 30, 2017 - link

I had this same exact question. How is it that they are using eye-tracking?

jordanclock - Thursday, November 30, 2017 - link

That is answered in the very next paragraph: Eye-tracking to move the lens/display with your eyes.

Valantar - Thursday, November 30, 2017 - link

No, it isn't answered. Sure, there's eye tracking. Eye tracking does not move a display, it tracks eye movement. Do the displays actually physically move? Is there some optical trickery making this work? Or are they simply stalling for time, trying to hide that this has a fixed central "high detail" area while they figure out a flexible solution for the next revision? There's no substance at all in this claim. If eye tracking was all you needed, this would be a fixed problem, given how eye tracking HMD concepts have been shown off for years.

Diji1 - Friday, December 1, 2017 - link

As I understand it a mirror moves. It sounds gimmicky but I have read people saying it works very well after they tried it and works as described - you see an extremely clear image.

boeush - Thursday, November 30, 2017 - link

Most likely, the microdisplay itself won't move; instead they'll probably be moving a small mirror (via a couple of fast X-Y galvo motors) that repositions the microdisplay's projection into/within the optical combiner. Whereas the low-res backdrop would stay fixed, covering the full 100 degree FOV...

Valantar - Thursday, November 30, 2017 - link

How are they going to do that without getting some weird glitches when the mirror moves? Wouldn't you then run into issues of "double vision" (i.e. imperfect display edge overlapping), perspective shifts in the central image, or possibly focus issues from the mirror bringing the microdisplay out of the focal plane of the lens? Sounds immensely complicated to me.

Also, from how I read the descriptions in the article, the microdisplays and peripheral displays are physically overlapping/adjacent, making a solution like this very difficult. For both to be in focus, they'd have to be within a very short distance of each other. Of course, that problem is indeed solvable with a mirror setup.

extide - Thursday, November 30, 2017 - link

Yeah it seems like they are trying to go light on the details right now on purpose, BUT I would guess the displays are static and then the lens moves a bit in the X-Y directions which could keep the high res part in your FoV. Although, I'm not sure...