Cheap Supercomputers: LANL has 750-node Raspberry Pi Development Clusters

by Ian Cutress on November 14, 2017 2:30 PM EST

Posted in: Servers, HPC, Enterprise, Trade Shows, SC17, Supercomputing 17, Raspberry Pi, Pi, LANL

One of the more esoteric announcements to come out of Supercomputing 17, an annual conference on high-performance computing, is that one of the largest US scientific institutions is investing in Raspberry Pi-based clusters to aid in development work. The Los Alamos National Laboratory’s High Performance Computing Division now has access to 750-node Raspberry Pi clusters, the first step towards a development program to assist in programming much larger machines.

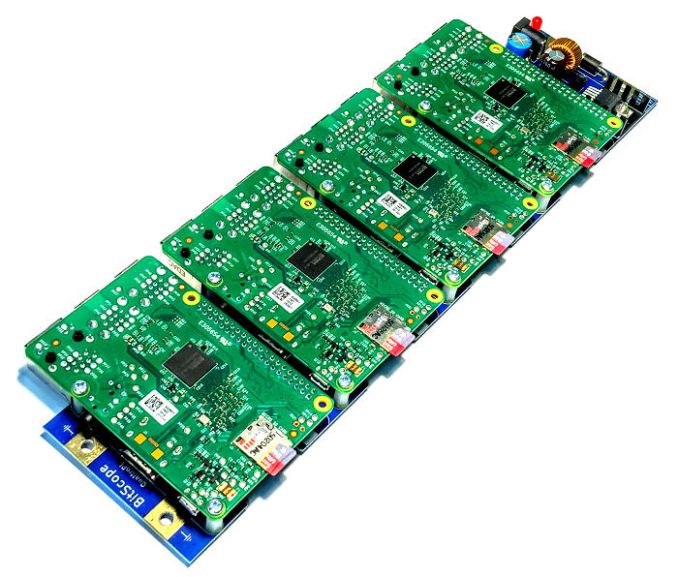

The platform at LANL uses a modular cluster design from BitScope Designs: five rack-mount BitScope Cluster Modules, each holding 150 Raspberry Pi boards with integrated network switches. With each of the 750 boards packing a quad-core processor, it offers a 3000-core highly parallelizable platform that emulates an ARM-based supercomputer, allowing researchers to test development code without requiring a power-hungry machine at significant cost to the taxpayer. The full 750-node cluster, at 2-3 W per processor, draws 1000 W at idle, 3000 W under typical load and 4000 W at peak (including the switches). It is substantially cheaper than a full-scale system, if also computationally a lot slower. After development on the Pi clusters, frameworks can then be ported to the larger-scale supercomputers available at LANL, such as Trinity and Crossroads.
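For readers who want to check the arithmetic, here is a minimal Python sketch that rebuilds the node and core counts from the figures quoted above and divides the whole-cluster power draw across the nodes. The variable names are illustrative, and the per-node wattage is our own back-of-envelope division (it includes the switches), not a number supplied by LANL or BitScope.

```python
# Figures quoted in the article; the variable names are illustrative only.
MODULES = 5
NODES_PER_MODULE = 150
CORES_PER_NODE = 4

# Whole-cluster power draw in watts, switches included.
CLUSTER_POWER_W = {"idle": 1000, "typical": 3000, "peak": 4000}

nodes = MODULES * NODES_PER_MODULE
cores = nodes * CORES_PER_NODE

# Assumption: power is spread evenly across nodes, so this is only an average.
watts_per_node = {state: watts / nodes for state, watts in CLUSTER_POWER_W.items()}

print(f"{nodes} nodes, {cores} cores")
for state, watts in watts_per_node.items():
    print(f"{state}: {watts:.2f} W per node (switches included)")
```

At typical load this works out to about 4 W per node, which is above the quoted 2-3 W per processor because it also counts the switches and any other overhead in the rack.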

“It’s not like you can keep a petascale machine around for R&D work in scalable systems software. The Raspberry Pi modules let developers figure out how to write this software and get it to work reliably without having a dedicated testbed of the same size, which would cost a quarter billion dollars and use 25 megawatts of electricity,” said Gary Grider, leader of the High Performance Computing Division at Los Alamos National Laboratory.

The collaboration between LANL and BitScope was formed after LANL was unable to find a suitable dense server that offered a platform for several-thousand-node networking and optimization: most solutions on the market were too expensive, and anyone offering something like the Pi in a dense form factor was ‘just people building clusters with Tinker Toys and Lego’. Following the collaboration, BitScope, the company behind the modular Raspberry Pi rack and blade designs, plans to sell the 150-node Cluster Modules at retail in the next few months. No prices have been given yet, although BitScope says that each node will be about $120 fully provisioned using the element14 version of the latest Raspberry Pi (normally $35 at retail). That means a 150-node Cluster Module should come in at around $18k-$20k.
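The module price estimate follows directly from the per-node figure, as this rough Python sketch shows. The names are illustrative, and the five-module total is purely our extrapolation from the per-node price; neither LANL nor BitScope has stated what the 750-node installation actually cost.

```python
# Figures quoted in the article; names are illustrative only.
NODE_PRICE_USD = 120     # BitScope's approximate fully provisioned price per node
PI_RETAIL_USD = 35       # usual retail price of the bare Raspberry Pi board
NODES_PER_MODULE = 150
MODULES = 5              # assumption: price out a LANL-sized, five-module setup

module_cost = NODE_PRICE_USD * NODES_PER_MODULE
cluster_cost = module_cost * MODULES          # extrapolation, not a quoted figure
overhead_per_node = NODE_PRICE_USD - PI_RETAIL_USD

print(f"One 150-node module: ${module_cost:,}")
print(f"Five modules (750 nodes): ${cluster_cost:,}")
print(f"Per-node cost beyond the bare board: ${overhead_per_node}")
```

The $18,000 result is the low end of the $18k-$20k range given above; the upper end presumably leaves room for the "about" in BitScope's per-node figure.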

The BitScope Cluster Module is on display this week at Supercomputing 17 in Denver, at the University of New Mexico stand.

Sources: BitScope, EurekAlert

26 Comments

ScottSoapbox - Tuesday, November 14, 2017

I'm pretty sure the supercomputer needs 1.21 gigawatts of electricity, Gary.

MrSpadge - Tuesday, November 14, 2017

You mean Jiggawatts, don't you?

LordConrad - Wednesday, November 15, 2017

Only for time travel, which wouldn't work anyways as there is no Flux Capacitor.

Lord of the Bored - Wednesday, November 15, 2017

No flux capacitor THAT YOU KNOW OF. This IS Los Alamos, who KNOWS what crazy stuff they get to behind the classified signage.

Elstar - Tuesday, November 14, 2017

Very cool. Although I wouldn't call this a "cheap supercomputer". It is surely too slow for any real world application. As the quotes in the article imply, the machine is a scale model of a supercomputer for training programmers. It helps them test for scalability and fix embarrassing bugs before they power up a real computer and start burning megawatts.

Mil0 - Tuesday, November 14, 2017

Depending on clock speed(/cooling) a Raspberry 3 achieves 3-6 GFlops. Assuming optimal performance&scaling it achieves 4.5 TFlops.

#500 of the top500 of supercomputers is about 600 TFlops. Assuming there are more than 500 supercomputers, I'd say this beautiful beast gets pretty friggin close.

MrSpadge - Tuesday, November 14, 2017

By that standard my desktop with a GTX1070 with 7.2 TFlops plus an i3 is also a supercomputer.

Elstar - Tuesday, November 14, 2017

And raw FLOPS aren't everything. The core-to-core bandwidth/latency on any GPU will run many circles around the node-to-node bandwidth/latency of this machine.

bananaforscale - Thursday, November 16, 2017

Going by the tech of yesteryear, it is.

Alexvrb - Wednesday, November 15, 2017

Basically, this thing is GREAT as a low-cost development testbed for massively parallel software designed to run on a real supercomputer... but that's about it. Such low performance would be matched by 4 Epyc 7551P boards, which would also eat less power and consume less space.