The NVIDIA GeForce GTX 1080 Ti Founder's Edition Review: Bigger Pascal for Better Performance

by Ryan Smith on March 9, 2017 9:00 AM EST

Rise of the Tomb Raider

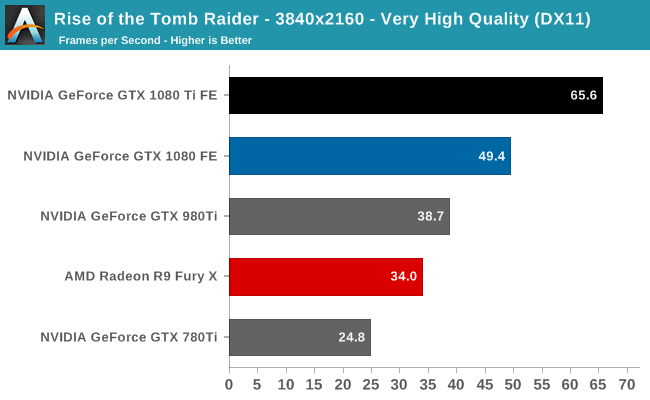

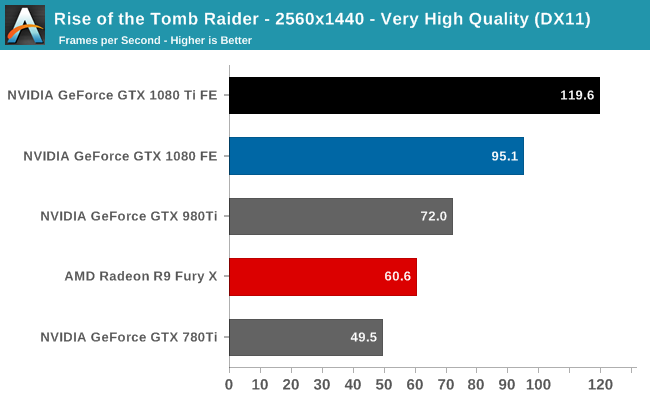

Starting things off in our benchmark suite is the built-in benchmark for Rise of the Tomb Raider, the latest iteration in the long-running action-adventure gaming series. One of the unique aspects of this benchmark is that it’s actually the average of 4 sub-benchmarks that fly through different environments, which keeps the benchmark from being too weighted towards a GPU’s performance characteristics under any one scene.

As we’re looking at the highest of high-end cards here, the performance comparisons are pretty straightforward. There’s the GTX 1080 Ti versus the GTX 1080, and then for owners with older cards looking for an upgrade, there’s the GTX 1080 Ti versus the GTX 980 Ti and GTX 780 Ti. It goes without saying that the GTX 1080 Ti is the fastest card that is (or tomorrow will be) on the market, so the only outstanding question is just how much faster NVIDIA’s latest card really is.

As you’d expect, the GTX 1080 Ti’s performance lead is dependent in part on the resolution tested. The higher the resolution the more GPU-bound a game is, and the more opportunity there is for the card to stretch its 3GB advantage in VRAM. In the case of Tomb Raider, the GTX 1080 Ti ends up being 33% faster than the GTX 1080 at 4K, and 26% faster at 1440p.

Otherwise, against the 28nm GTX 980 Ti and GTX 780 Ti, the performance gains become very large very quickly. The GTX 1080 Ti holds a 70% lead over the GTX 980 Ti here at 4K, and it’s a full 2.6x faster than the GTX 780 Ti. The end result is that whereas the GTX 980 Ti was the first card to crack 30fps at 4K on Tomb Raider, the GTX 1080 Ti is the first card that can actually average 60fps or better.
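The comparisons above mix two conventions: a percentage lead ("33% faster") and a multiplier ("2.6x faster"). As a minimal sketch of how the two relate, the Python below converts between them using only the figures quoted in the text. The function names are hypothetical, and it assumes "2.6x faster" means 2.6 times the frame rate of the older card, which is an interpretation rather than something the article spells out.

```python
def lead_to_ratio(lead_percent: float) -> float:
    """Convert an 'N% faster' lead into a frame rate ratio."""
    return 1.0 + lead_percent / 100.0


def ratio_to_lead(ratio: float) -> float:
    """Convert an 'N times the frame rate' ratio into a percentage lead."""
    return (ratio - 1.0) * 100.0


if __name__ == "__main__":
    # Leads quoted in the text for the GTX 1080 Ti at the stated resolutions.
    quoted_leads = {
        "vs GTX 1080 (4K)": 33.0,
        "vs GTX 1080 (1440p)": 26.0,
        "vs GTX 980 Ti (4K)": 70.0,
    }
    for label, lead in quoted_leads.items():
        print(f"{label}: {lead:.0f}% lead = {lead_to_ratio(lead):.2f}x")

    # Assumption: "2.6x faster" is read as 2.6 times the GTX 780 Ti's frame rate.
    print(f"vs GTX 780 Ti (4K): 2.60x = {ratio_to_lead(2.6):.0f}% lead")
```

Putting every comparison on one scale makes the generational gaps easier to line up, though the underlying frame rates still have to come from the charts.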

161 Comments

Jon Tseng - Thursday, March 9, 2017 - link

Launch day Anandtech review? My my wonders never cease! :-)

Ryan Smith - Thursday, March 9, 2017 - link

For my next trick, watch me pull a rabbit out of my hat.

blanarahul - Thursday, March 9, 2017 - link

Ooh.

YukaKun - Thursday, March 9, 2017 - link

/claps

Good article as usual.

Cheers!

Yaldabaoth - Thursday, March 9, 2017 - link

Rocky: "Again?"

Ryan Smith - Thursday, March 9, 2017 - link

No doubt about it. I gotta get another hat.

Anonymous Blowhard - Thursday, March 9, 2017 - link

And now here's something we hope you'll really like.

close - Friday, March 10, 2017 - link

Quick question: shouldn't the memory clock in the table on the first page be expressed in Hz instead of bps, being a clock and all? Or you could go with throughput, but that would be just shy of 500GBps I think...

Ryan Smith - Friday, March 10, 2017 - link

Good question. Because of the various clocks within GDDR5(X)*, memory manufacturers prefer that we list the speed as bandwidth per pin instead of frequency. The end result is that the unit is in bps rather than Hz.

* http://images.anandtech.com/doci/10325/GDDR5X_Cloc...

close - Friday, March 10, 2017 - link

Probably due to the QDR part that's not obvious from reading just the frequency. Thanks.
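The arithmetic behind this exchange can be sketched in a few lines of Python: total memory throughput is the per-pin data rate multiplied by the bus width, divided by 8 to convert bits to bytes. The function name is hypothetical, and the inputs (11Gbps per pin, 352-bit bus) are the card's published specifications rather than figures stated in this section, so treat them as assumptions.

```python
def bandwidth_gb_per_s(data_rate_gbps_per_pin: float, bus_width_bits: int) -> float:
    """Total memory bandwidth in GB/s from a per-pin data rate (Gbps) and bus width (bits)."""
    total_gbps = data_rate_gbps_per_pin * bus_width_bits  # gigabits per second across the whole bus
    return total_gbps / 8  # 8 bits per byte


if __name__ == "__main__":
    # Assumed inputs: GTX 1080 Ti published specs (11Gbps GDDR5X, 352-bit memory bus).
    total = bandwidth_gb_per_s(11.0, 352)
    print(f"{total:.0f} GB/s")  # 484 GB/s, i.e. "just shy of 500GBps"
```

Listing the per-pin rate keeps the figure independent of which internal GDDR5X clock is being quoted, which fits the reasoning given in the reply above.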