More Details on Broxton: Quad Core, ECC, Up to 18 EUs of Gen9

by Ian Cutress on August 18, 2016 7:00 AM EST

An interesting talk on the IoT aspects of Intel’s next-generation Atom core, Goldmont, and the Broxton SoCs for that market offered a good chunk of information about the implementation of the Broxton-M platform. Users may remember the Broxton name from the family of smartphone SoCs that was cancelled several months ago, but the core design and SoC integration are still mightily relevant for IoT and mini-PCs. Broxton is also at the heart of Intel’s new Joule IoT platform, which was announced at IDF this week.

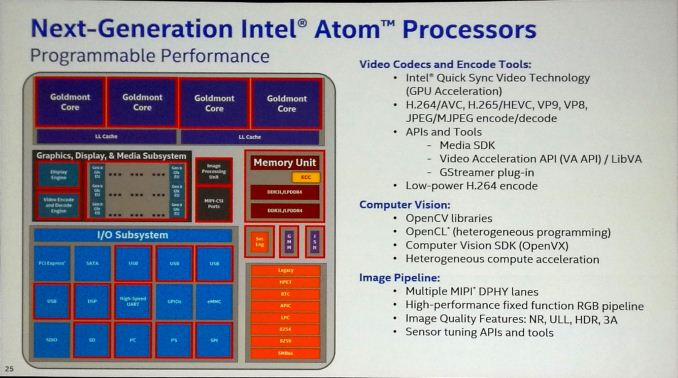

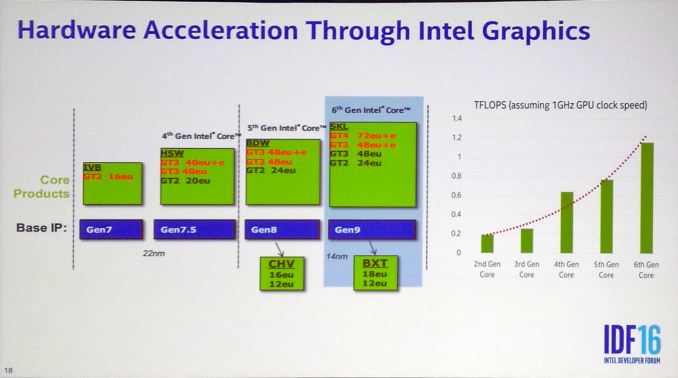

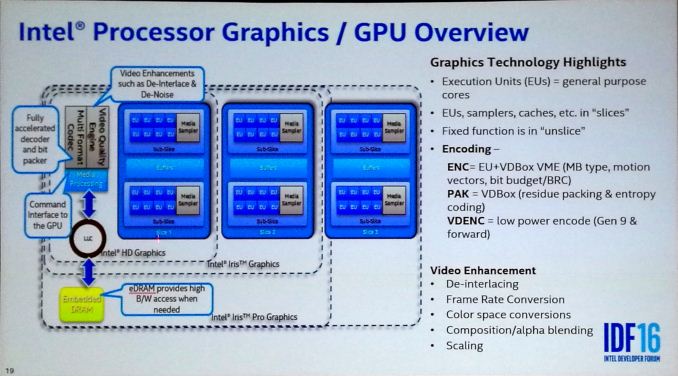

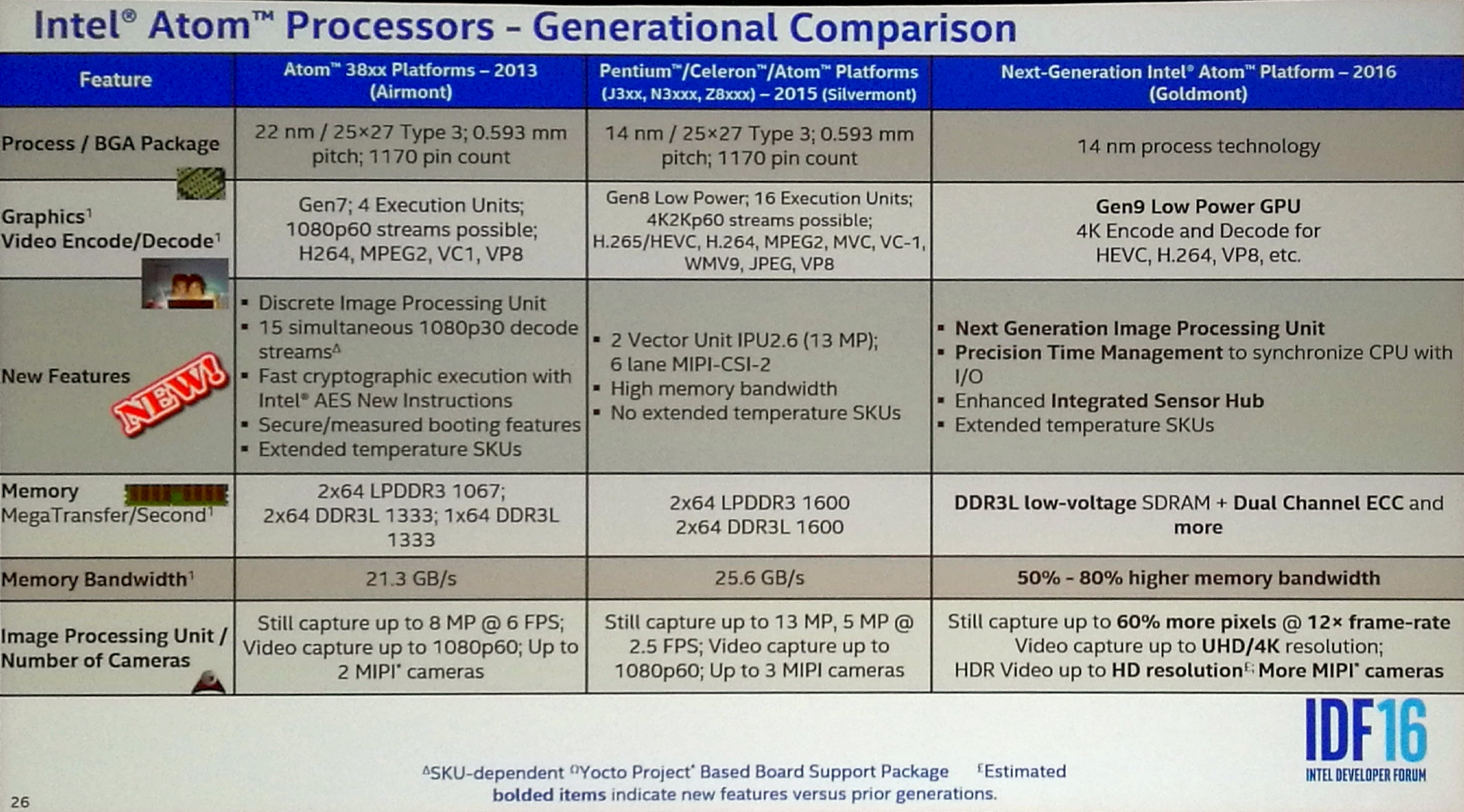

Broxton, in the form described to us, will follow the previous Braswell platform in that it runs in a quad-core configuration (for most SKUs) made up of two dual-core modules, each sharing an L2 cache, but it will be paired with Intel’s Gen9 graphics. The GPU will come in either a 12 execution unit (EU) or an 18 EU configuration. Since a full Gen9 slice holds 24 EUs across three sub-slices, this suggests that the chip has a single graphics slice and that Intel will use its binning strategy to determine which EUs are enabled in each sub-slice.

Broxton was listed as supporting VP9 and H.264 encode and decode. HEVC will also be supported, but Intel was hazy in clarifying the details, saying that ‘currently we have a hybrid situation but this will change in the future’. OpenCV libraries will also be available for computer vision workloads, optimized specifically for the graphics architecture.

It’s worth noting that the next slide lists the memory controller as supporting DDR3L and LPDDR4, while later in the presentation only DDR3L was stated. We asked about this and were told that LPDDR4 was in the initial design specification but may or may not be in a final product (or may appear only in certain SKUs). DDR3L, however, is guaranteed.

It was confirmed that Broxton will be produced on Intel’s 14nm process and will feature a Gen9 GPU with 4K encode and decode support for HEVC (though not whether this is hardware accelerated or hybrid). The graphics part will feature an upgraded image processing unit, different from other Gen9 implementations, and we will see the return of extended-temperature processors (-40C to 110C) for embedded applications.

One of the big plus points for Broxton is support for dual-channel ECC memory, which opens up a few more markets where ECC is a fundamental requirement. The slides also claim 50-80% higher memory bandwidth than Braswell, which is an interesting statement if the platform does not support LPDDR4 (unless the claim applies only to the SKUs where LPDDR4 is supported).

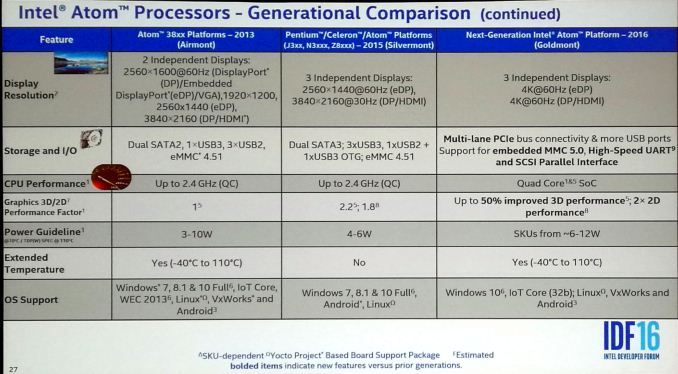

All display outputs from the Broxton SoC will support 4K60 on eDP and DP/HDMI, and the platform adds more USB ports and support for eMMC 5.0. The 4K support on HDMI might suggest full HDMI 2.0 support, but it is not clear whether this is limited to 4:2:0 chroma subsampling or goes higher. The Broxton SKUs in this presentation were described as having a 6-12W TDP, with support for a number of Linux distributions, Android, and Windows 10. We asked about Windows 7 support and were told that while Broxton will likely support it, given the limited timeframe it is not expected to be promoted as a feature. We also asked about frequencies and were told to expect quad-core parts at perhaps around 1.8-2.6 GHz. This would be in line with our expectations: a small bump over Braswell.

We are still waiting on more detailed information regarding Goldmont and Goldmont-based SoCs like Broxton, and will likely have to wait until they enter the market before the full ecosystem of products is announced.

19 Comments


colinisation - Thursday, August 18, 2016

I wonder if we will ever see Intel use Core and Atom on the same die a la big.LITTLE.

nandnandnand - Thursday, August 18, 2016

That could be a good way to get them to make mainstream 6+ core chips.

Cygni - Thursday, August 18, 2016

The question would be: why? Unless you are talking about Core coming to a mobile device, there really isn't much/any use case for an Atom core grafted onto a desktop or laptop CPU.

satai - Thursday, August 18, 2016

- a way to get more multithread power into laptop/tablet space?

- a way to get the lowest power possible when the load is small in laptop/tablet space?

- a way to get more multithread power in highend/pro for some kinds of load?

Samus - Friday, August 19, 2016

Why? Because it's a really good idea. A quick tweak of the scheduler and a branch monitor would properly thread weak and strong cores on the fly.

Colinisation is on to something that I suspect we will see from Intel within the next few years.

ianmills - Friday, August 19, 2016

Intel Atore!

jospoortvliet - Sunday, August 21, 2016

I already don't see how this SOC is at all relevant in the IoT market, with a TDP of 6-12 watts, while IoT devices are in the milliwatt range.

Throwing in the Core architecture would make it even less relevant.

I am not saying this isn't a nice SOC for some embedded usage, like in TVs or cars perhaps, but you won't be wearing it and it is overkill for your fridge... so this is not an IoT chip. I guess Intel just uses the term for marketing purposes, and it is sad Anandtech.com doesn't call them out on it.

BlueBlazer - Thursday, August 18, 2016

This could be the new best HTPC chip with low power and full hardware video processing.

nathanddrews - Thursday, August 18, 2016

Two things:

1. Price would have to be around $100.

2. HDR10 (must) and Dolby Vision (nice to have) output guaranteed

One potential third item:

3. PlayReady 3.0 hardware DRM (SL3000). We'll likely never see 4K Netflix via PC without it. I'm not sure what Amazon and Vudu use for authentication, but it could be something similar.

name99 - Thursday, August 18, 2016

"but the core design and SoC integration is still mightily relevant for IoT and mini-PCs"

That word, IoT, I do not think it means what you think it means...

IoT for the most part refers to compute moving seriously down in the stack --- smart scales, BT-enabled thermometers and blood pressure cuffs, room air temperature and quality monitors, etc. These are devices that require three months (at least) of life off one battery, not devices that need quad-core and 18EU GPUs.

MiniPCs are not the same thing as IoT, not even close.

Just because Intel PR throws out insane crap every IDF doesn't mean that you have to report it as though it actually makes sense... In the real world this looks like yet another Atom. Meaning a CPU that's relevant to people who actually NEED Windows and irrelevant to everyone else (i.e. the ACTUAL IoT) who will continue to use ARM just like before, with nothing about this chip making Atom any more compelling.