The Apple iPad Pro Review

by Ryan Smith, Joshua Ho & Brandon Chester on January 22, 2016 8:10 AM EST

SoC Analysis: On x86 vs ARMv8

Before we get to the benchmarks, I want to spend a bit of time talking about the impact of CPU architectures at a moderate level of technical depth. At a high level, there are a number of peripheral issues when it comes to comparing these two SoCs, such as the quality of their fixed-function blocks. But when you look at what consumes the vast majority of the power, it turns out that the CPU is one of the biggest consumers, competing with things like the modem/RF front-end and the GPU.

x86-64 ISA registers

Probably the easiest place to start when we’re comparing things like Skylake and Twister is the ISA (instruction set architecture). This subject alone is probably worthy of an article, but the short version for those who aren't really familiar with this topic is that an ISA defines how a processor should behave in response to certain instructions, and how these instructions should be encoded. For example, if you were to add two integers together in the EAX and EDX registers, one encoding that x86-32 defines for this is 01 d0 in hexadecimal. In response to this instruction, the CPU would add whatever value was in the EDX register to the value in the EAX register and leave the result in the EAX register.
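As a minimal sketch of what a defined encoding means, the Python below decodes the two bytes 01 d0 by hand. It is an illustration only. The function name `decode_add_rm32_r32` is hypothetical, and the function understands just opcode 0x01 (ADD r/m32, r32) with a register operand. A real x86 decoder also has to handle prefixes, memory operands, and hundreds of other opcodes.

```python
# Register numbers used by the 3-bit ModRM fields when the operand size is 32 bits.
REG32 = ["eax", "ecx", "edx", "ebx", "esp", "ebp", "esi", "edi"]


def decode_add_rm32_r32(code: bytes) -> str:
    """Decode a register-to-register ADD r/m32, r32 (opcode 0x01) into Intel syntax."""
    opcode, modrm = code[0], code[1]
    if opcode != 0x01:
        raise ValueError("this sketch only handles opcode 0x01")
    mod = modrm >> 6             # bits 7-6: addressing mode (0b11 = register operand)
    reg = (modrm >> 3) & 0b111   # bits 5-3: the r32 (source) register
    rm = modrm & 0b111           # bits 2-0: the r/m (destination) register
    if mod != 0b11:
        raise ValueError("memory operands are not handled in this sketch")
    # ADD r/m32, r32 computes r/m32 <- r/m32 + r32, so the r/m register receives the result.
    return f"add {REG32[rm]}, {REG32[reg]}"


print(decode_add_rm32_r32(bytes.fromhex("01d0")))  # add eax, edx
```

x86 often allows more than one encoding for the same operation. For example, 03 c2 (ADD r32, r/m32) also means `add eax, edx`. The bytes 01 d0 are simply one valid choice.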

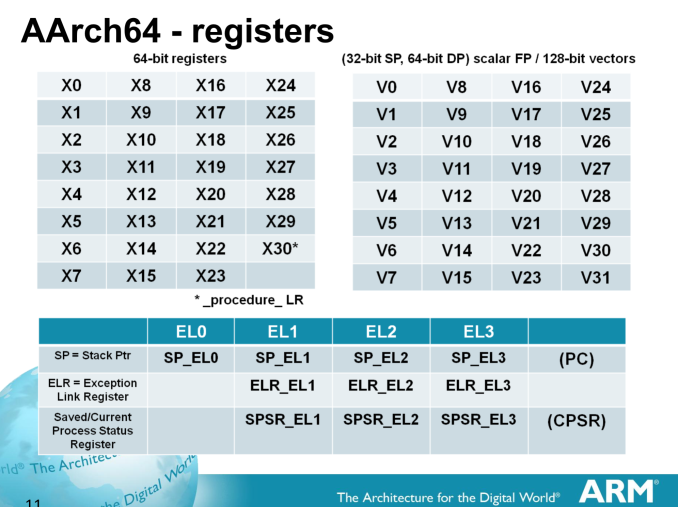

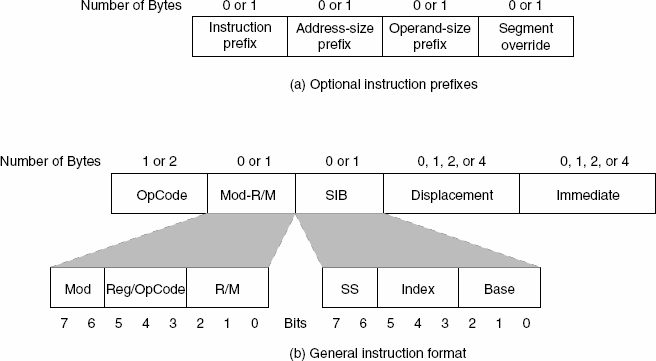

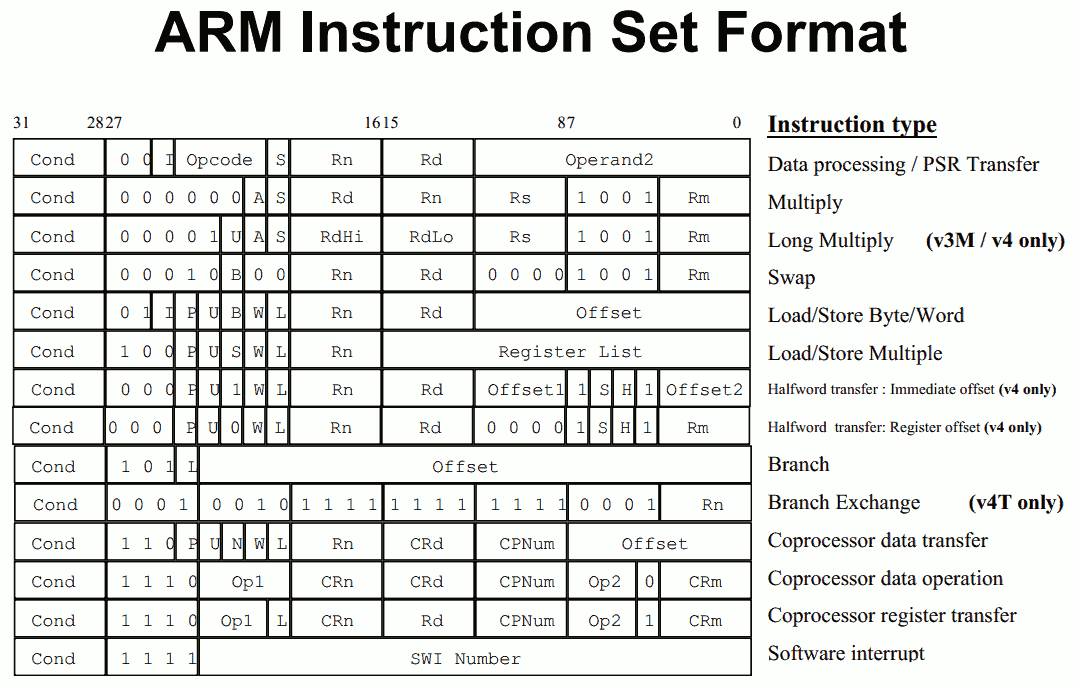

The fundamental difference between x86 and ARM is that x86 is a relatively complex ISA, while ARM is relatively simple by comparison. One key difference is that ARM dictates that every instruction is a fixed number of bits. In the case of ARMv8-A and ARMv7-A, all instructions are 32 bits long unless you're in Thumb mode (which on ARMv8-A exists only in the AArch32 state). The original Thumb instructions are 16 bits long, but the same sort of trade-offs that come from a fixed-length instruction encoding still apply. Thumb-2 mixes 16-bit and 32-bit instructions, so it is technically a variable-length ISA, but with only two possible lengths many of the same trade-offs apply. It’s important to make a distinction between instruction and data here, because even though AArch64 uses 32-bit instructions, the register width is 64 bits, which is what determines things like how much memory can be addressed and the range of values that a single register can hold. By comparison, Intel’s x86 ISA has variable-length instructions. In both x86-32 and x86-64/AMD64, each instruction can range anywhere from 8 to 120 bits (1 to 15 bytes) long depending upon how the instruction is encoded.
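To put numbers on the difference, the snippet below measures the byte lengths of a few hand-picked instructions whose encodings are well known. This is an illustrative sample, not a statistical one. The dictionary and function names are hypothetical, and the AArch64 words are written in little-endian byte order, as they would sit in memory.

```python
# Hand-picked x86 encodings, as the bytes appear in memory.
X86_SAMPLES = {
    "nop": "90",
    "add eax, edx": "01d0",
    "mov eax, 1": "b801000000",                              # opcode + imm32
    "mov rax, 0x1122334455667788": "48b88877665544332211",   # REX.W + opcode + imm64
}

# AArch64 encodings (nop = 0xD503201F, ret = 0xD65F03C0, add = 0x8B010000), little-endian.
A64_SAMPLES = {
    "nop": "1f2003d5",
    "ret": "c0035fd6",
    "add x0, x0, x1": "0000018b",
}


def lengths(samples: dict[str, str]) -> dict[str, int]:
    """Map each assembly string to the number of bytes its encoding occupies."""
    return {asm: len(bytes.fromhex(hexstr)) for asm, hexstr in samples.items()}


for isa, samples in (("x86", X86_SAMPLES), ("AArch64", A64_SAMPLES)):
    for asm, n in lengths(samples).items():
        print(f"{isa:<8} {asm:<30} {n:>2} bytes")
```

Even the 10-byte `mov rax, imm64` is well short of the 15-byte x86 maximum. Every AArch64 entry is exactly 4 bytes regardless of what it does.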

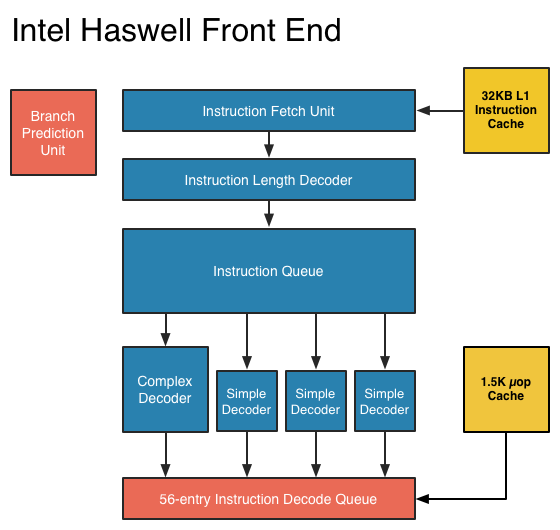

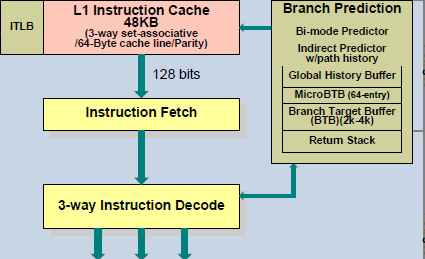

At this point, it might be evident that on the implementation side of things, a decoder for x86 instructions is going to be more complex. For a CPU implementing the ARM ISA, because the instructions are of a fixed length the decoder simply reads instructions 2 or 4 bytes at a time. On the other hand, a CPU implementing the x86 ISA would have to determine how many bytes to pull in at a time for an instruction based upon the preceding bytes.
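The difference in decoder work can be sketched in Python. `split_fixed` finds every instruction boundary with simple arithmetic. `split_variable` has to decode each instruction's length before it knows where the next one starts. Both functions are hypothetical. `x86_length` is a deliberately tiny length decoder that covers only a few opcodes and no prefixes. Real x86 length decoding also has to deal with prefixes, ModRM/SIB bytes, displacements, and immediates of several sizes.

```python
def split_fixed(code: bytes, width: int = 4) -> list[bytes]:
    """Fixed-length ISA: every boundary is known up front (offset = k * width)."""
    if len(code) % width:
        raise ValueError("stream is not a whole number of instructions")
    return [code[i:i + width] for i in range(0, len(code), width)]


def x86_length(code: bytes, pos: int) -> int:
    """Toy x86 length decoder for a handful of opcodes (no prefixes handled)."""
    op = code[pos]
    if op in (0x90, 0xC3):        # nop, ret: opcode byte only
        return 1
    if 0xB8 <= op <= 0xBF:        # mov r32, imm32: opcode + 4-byte immediate
        return 5
    if op == 0x01:                # add r/m32, r32: opcode + ModRM
        if code[pos + 1] >> 6 != 0b11:
            raise NotImplementedError("memory forms need SIB/displacement decoding")
        return 2
    raise NotImplementedError(f"opcode {op:#04x} is not covered by this sketch")


def split_variable(code: bytes) -> list[bytes]:
    """Variable-length ISA: each boundary depends on decoding the bytes before it."""
    out, pos = [], 0
    while pos < len(code):
        n = x86_length(code, pos)
        out.append(code[pos:pos + n])
        pos += n
    return out


# mov eax, 1 / add eax, edx / ret
print([c.hex() for c in split_variable(bytes.fromhex("b80100000001d0c3"))])
# AArch64 nop / ret
print([c.hex() for c in split_fixed(bytes.fromhex("1f2003d5c0035fd6"))])
```

The `while` loop in `split_variable` is inherently sequential, and that is the crux of the problem. Hardware typically works around this with extra predecode logic or by caching already-decoded instructions, but it cannot simply compute offset k × 4 the way a fixed-length decoder can.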

A57 front-end decode (note the lack of a uop cache)

While it might sound like the x86 ISA is clearly at a disadvantage here, it’s important to avoid oversimplifying the problem. Although the decoder of an ARM CPU already knows how many bytes it needs to pull in at a time, this inherently means that unless all 2 or 4 bytes of the instruction are needed, each instruction contains wasted bits. While it may not seem like a big deal to “waste” a byte here and there, this can actually become a significant bottleneck in how quickly instructions can get from the L1 instruction cache to the front-end instruction decoder of the CPU, because less dense code means fewer instructions fit in a cache of a given size. The major issue here is that due to RC delay in the metal wire interconnects of a chip, increasing the size of an instruction cache inherently increases the number of cycles that it takes for an instruction to get from the L1 cache to the instruction decoder on the CPU. If a cache doesn’t have the instruction that you need, it could take hundreds of cycles for it to arrive from main memory.
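The cache trade-off in this paragraph can be made concrete with the standard average-access-time formula: hit latency + miss rate × miss penalty. Every number below is hypothetical and chosen only to show the shape of the trade-off. Two further simplifications apply. Every miss is treated as a trip to main memory, and denser code is modeled simply as a lower miss rate for the same cache size. Both are assumptions, since real miss rates depend heavily on the workload and the cache hierarchy.

```python
def avg_fetch_cycles(hit_cycles: float, miss_rate: float, miss_penalty: float) -> float:
    """Average access time: hit latency plus the expected cost of a miss."""
    return hit_cycles + miss_rate * miss_penalty


# Hypothetical numbers chosen only to illustrate the trade-off; not measurements.
MISS_PENALTY = 200  # cycles to main memory ("hundreds of cycles")
CONFIGS = {
    "small, fast L1": (3, 0.03),         # quick to reach, misses more often
    "large, slow L1": (4, 0.02),         # longer wires add a cycle, misses less
    "small L1, denser code": (3, 0.02),  # same cache, more instructions fit in it
}

for name, (hit, miss_rate) in CONFIGS.items():
    print(f"{name:<22} {avg_fetch_cycles(hit, miss_rate, MISS_PENALTY):5.1f} cycles")
```

With these made-up inputs, the denser-code case gets the lower miss rate without paying the extra hit latency of a physically larger cache. That is the argument the paragraph makes for why "wasted" bits matter.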

Of course, there are other issues worth considering. For example, in the case of x86, the instructions themselves can be incredibly complex. One of the simplest examples is the add instruction, where either the source or the destination can be in memory, although not both at once. An example of this is addq (%rax,%rbx,2), %rdx (AT&T syntax, destination last), which loads 8 bytes from the address rax + 2 × rbx and adds them to rdx. This could take around 5 CPU cycles to complete on something like Skylake. Of course, pipelining and other tricks can make the throughput of such instructions much higher, but that's another topic that can't be properly addressed within the scope of this article.
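To spell out what that single x86 instruction does, here is a hypothetical Python model of `addq (%rax,%rbx,2), %rdx`. It forms the effective address rax + rbx × 2, loads 8 little-endian bytes from that address, and adds them to rdx. The function names and the `bytearray` memory are illustrative assumptions. Flags, which the real instruction also updates, are not modeled.

```python
MASK64 = (1 << 64) - 1  # 64-bit registers wrap around


def effective_address(regs: dict[str, int], base: str, index: str, scale: int, disp: int = 0) -> int:
    """x86 base + index*scale + displacement addressing."""
    if scale not in (1, 2, 4, 8):
        raise ValueError("x86 scale factors are 1, 2, 4 or 8")
    return (regs[base] + regs[index] * scale + disp) & MASK64


def addq_mem_to_reg(regs: dict[str, int], mem: bytearray, base: str, index: str, scale: int, dst: str) -> None:
    """Model of `addq (base,index,scale), dst`: dst <- dst + the 8-byte value at the address."""
    addr = effective_address(regs, base, index, scale)
    value = int.from_bytes(mem[addr:addr + 8], "little")  # the load hidden inside the add
    regs[dst] = (regs[dst] + value) & MASK64              # the add itself


regs = {"rax": 0x10, "rbx": 4, "rdx": 100}
mem = bytearray(64)
mem[0x18:0x20] = (42).to_bytes(8, "little")   # 0x10 + 4 * 2 = 0x18
addq_mem_to_reg(regs, mem, "rax", "rbx", 2, "rdx")
print(regs["rdx"])  # 142
```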

By comparison, the ARM ISA has no direct equivalent to this kind of memory-operand add. Looking at our example of an add instruction, ARM would require a load instruction before the add instruction. This has two notable implications. The first is that this once again is an advantage for an x86 CPU in terms of instruction density, because fewer bits are needed to express the same operation. The second is that for a “pure” CISC CPU you now have a barrier to a number of performance and power optimizations, as any instruction dependent upon the result of the current instruction can't be pipelined or executed in parallel with it.
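The density point can be checked with a little arithmetic. The x86-64 encoding of `addq (%rax,%rbx,2), %rdx` takes 4 bytes: a REX.W prefix, the opcode, a ModRM byte, and a SIB byte. A load/store ISA with fixed 32-bit instructions needs at least a separate load and an add, so at least 8 bytes. The field extraction below double-checks that the 4 bytes really name rdx, rax, rbx and a scale of 2. The helper name is hypothetical.

```python
# addq (%rax,%rbx,2), %rdx for x86-64: REX.W (48), ADD r64, r/m64 (03), ModRM (14), SIB (58).
X86_ADD_MEM = bytes.fromhex("48031458")

FIXED_INSN_BYTES = 4  # every AArch64 instruction is 32 bits


def modrm_sib_fields(code: bytes) -> tuple[int, int, int, int, int, int]:
    """Return (mod, reg, rm, scale, index, base) for REX + opcode + ModRM + SIB."""
    modrm, sib = code[2], code[3]
    return (
        modrm >> 6,        # 0b00: memory operand, no displacement
        (modrm >> 3) & 7,  # 2 = rdx, the destination register
        modrm & 7,         # 0b100: a SIB byte follows
        1 << (sib >> 6),   # scale factor
        (sib >> 3) & 7,    # 3 = rbx, the index register
        sib & 7,           # 0 = rax, the base register
    )


x86_size = len(X86_ADD_MEM)
load_store_min = 2 * FIXED_INSN_BYTES  # at least one load plus one add
print(modrm_sib_fields(X86_ADD_MEM))
print(f"x86-64: {x86_size} bytes; fixed 32-bit load/store ISA: at least {load_store_min} bytes")
```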

The final issue here is that x86 just has an enormous number of instructions that have to be supported due to backwards compatibility. Part of the reason why x86 became so dominant in the market was that code compiled for the original Intel 8086 would work with any future x86 CPU, but the original 8086 didn’t even have memory protection. As a result, all x86 CPUs made today still have to start in real mode and support the original 16-bit registers and instructions, in addition to 32-bit and 64-bit registers and instructions. Of course, actually running a program in 8086 mode today is a non-trivial task, but even in the x86-64 ISA it isn't unusual to see instructions that are identical to their x86-32 equivalents. By comparison, ARMv8 is designed such that you can only switch between AArch64 and AArch32 (ARMv7-compatible) code across exception boundaries, so in practice a program is only going to run one type of code or the other.
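The backward-compatibility point is easy to see in the encoding itself. In this hypothetical sketch, the same bytes 01 d0 mean `add ax, dx` in 16-bit real mode and `add eax, edx` in both 32-bit and 64-bit mode. Only a REX.W prefix (0x48), which exists only in 64-bit mode, widens the instruction to `add rax, rdx`. `decode_01d0` is not a real API and recognizes only this one instruction.

```python
# Names of register 0 (the r/m field) and register 2 (the reg field) at each operand size.
NAMES = {16: ("ax", "dx"), 32: ("eax", "edx"), 64: ("rax", "rdx")}


def decode_01d0(code: bytes, mode_bits: int) -> str:
    """Decode 01 d0 (optionally REX.W-prefixed) for a CPU in 16-, 32- or 64-bit mode."""
    rex_w = False
    # REX prefixes exist only in 64-bit mode; in 32-bit mode 0x48 is `dec eax` instead.
    if mode_bits == 64 and code[0] == 0x48:
        rex_w, code = True, code[1:]
    if code != bytes.fromhex("01d0"):
        raise ValueError("this sketch only understands 01 d0")
    # Default operand size: 16 bits in real mode, 32 bits in protected and long mode.
    size = 64 if rex_w else (16 if mode_bits == 16 else 32)
    dst, src = NAMES[size]
    return f"add {dst}, {src}"


for hexstr, mode in (("01d0", 16), ("01d0", 32), ("01d0", 64), ("4801d0", 64)):
    print(f"{mode}-bit mode, {hexstr}: {decode_01d0(bytes.fromhex(hexstr), mode)}")
```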

Back in the 1980s and into the 1990s, this complexity became one of the major reasons why RISC was rapidly becoming dominant, as CISC ISAs like x86 ended up producing CPUs that generally used more power and die area for the same performance. However, today the ISA is basically irrelevant to the discussion due to a number of factors. The first is that beginning with the Intel Pentium Pro and AMD K5, x86 CPUs have really been RISC-like cores with microcode or other decode logic that translates x86 instructions into internal RISC-like operations. The second is that instruction decoding has been increasingly optimized around the relatively small set of instructions that compilers commonly emit, which makes the x86 ISA less complex in practice than the standard might suggest. The final change here has been that ARM and other RISC ISAs have become increasingly complex as well, as it became necessary to add instructions that support floating-point math, SIMD operations, CPU virtualization, and cryptography. As a result, the RISC/CISC distinction is mostly irrelevant when it comes to discussions of power efficiency and performance, as microarchitecture is really the main factor at play now.
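The translation step described in this paragraph can be pictured as "cracking" one x86 instruction into simpler RISC-like operations. The model below is entirely hypothetical: the `Uop` type, the op names, and the `t0` temporary are invented for illustration, since Intel and AMD do not publish their internal formats. It shows a memory-operand add becoming a load followed by a dependent add, while a register-register add maps onto a single operation.

```python
from dataclasses import dataclass


@dataclass
class Uop:
    """A hypothetical RISC-like internal operation."""
    op: str
    dst: str
    srcs: tuple[str, ...]


def crack_add_mem(dst: str, base: str, index: str, scale: int) -> list[Uop]:
    """Split `add dst, [base + index*scale]` into a load uop and an ALU uop."""
    tmp = "t0"  # hypothetical internal temporary register
    return [
        Uop("load", tmp, (base, index, f"scale={scale}")),
        Uop("add", dst, (dst, tmp)),  # must wait for the load to produce t0
    ]


def crack_add_reg(dst: str, src: str) -> list[Uop]:
    """A register-register add already maps onto a single uop."""
    return [Uop("add", dst, (dst, src))]


for uop in crack_add_mem("rdx", "rax", "rbx", 2) + crack_add_reg("eax", "edx"):
    print(uop)
```

Real cores add further refinements. Intel's cores, for example, can keep such a load/add pair micro-fused through parts of the pipeline, so this is only the conceptual picture.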

408 Comments


MaxIT - Saturday, February 13, 2016

Reality check: the "nonplussed" product sold more than the well-established Surface Pro in the last quarter... So maybe you are just expressing YOUR opinion, and not "everyone's"...

lilmoe - Friday, January 22, 2016

The performance is "great" for iOS, FTFY. It would suffer on anything "Pro"...

ddriver - Friday, January 22, 2016

Performance is better than a high-end workstation from 10 years ago, a system which was capable of running professional tasks which are still nowhere to be found on mobile platforms. And it has nothing to do with performance. It has to do with forcing a shift in the market, from devices used by their owners to devices being used by their makers to exploit their owners commercially. And professional productivity just ain't it. Not content creation but content consumption. People flew in space using kilohertz computers with kilobytes of memory; today we have gigahertz and gigabytes in our pockets, and the best we can do with it is duck face photos. That's what Apple did to computing, and other companies are getting on that train as well; seeing how profitable it is to exploit society, it is in nobody's interest to empower it.

Phantom_Absolute - Friday, January 22, 2016

I just created an account here to say... well said, my friend

lilmoe - Friday, January 22, 2016

I was trying really hard to understand what you were trying to say.

Computers didn't get us to the moon. They sure helped, a LOT, but it was good ole rockets that did.

Anyway, point is (and I exaggerate), even if you shrink the latest 8-core Xeon E3 coupled with the fastest Nvidia Quadro into a 3-5W envelope and stick it in an iPad, it won't make it anything close to a "Pro" product. It's about overall FUNCTION.

An iPad "Pro" with a revamped version of iOS, more standard ports, and a SLOWER SoC would be a much better "Pro" product than what we have here.

Even Android sucks for Pro tablets. Only Microsoft has a thing here.

ddriver - Friday, January 22, 2016

What I am trying to say is mobile hardware IS INDEED capable of running professional workloads. Of course it won't be the bloated contemporary workstation software, but people have run workstation software on slower machines than that, and it was useful. So yes, this device has enough performance for professional tasks. There is no hardware lacking, only the software is.

I can assure you, no matter how many rockets you have, you will never reach the moon absent computer guidance. The rocket is merely power, but without control, power is never constructive and always destructive.

lilmoe - Friday, January 22, 2016

"There is no hardware lacking"

"The rocket is merely power, but without control, power never constructive and always destructive"

You seem convinced that you can be productive on a screen with only a fast SoC attached. I don't know where to start.

With all due respect, your analogies are ridiculously irrelevant (hence why I was having trouble understanding them). Workstations in the past had much more FUNCTION than any iPad today. IT'S NOT ONLY ABOUT THE COMPUTING POWER. These workstations, despite lacking power by today's standards, were built with certain function in mind, and were used for their intended tasks.

iPads are consumption devices, first and foremost. Apple did nothing for "computing", but they did a lot for consumerism. iDevices got popular because they addressed consumption needs that lots of consumers didn't even know they needed/wanted, I'll give them that. But Apple's *speed* of forcing "new technology" down people's throats, and turning perfectly functional products unusable, is unprecedented, and bad. Your "Pro" device is NO exception, and isn't going to last, nor function, as long as the _workstations from 10 years ago_...

The Pro moniker is being abused. What does it even mean now? Relative speed? Function? Value? Multiple products in one? I don't know anymore. But I'd like to believe that Microsoft's definition of a Pro product sounds easier on my ears.

You seem to be extremely sold on the marketed idea that disposable technology with a time bomb to obsolescence is a good thing. Technology that does nothing but harm the industry and its consumers. To each their own I guess.

ddriver - Friday, January 22, 2016

You are ridiculously ignorant. Both a workstation from 10 years ago and this product are, in terms of hardware, general-purpose computers. What specifies one as a workstation and another as a content consumption device is the software that runs on that general-purpose computer. A $10,000 contemporary workstation would only be good for content consumption without the workstation-grade software. Much the same way, this device can be good enough for workstation use with the proper software. Once again, clean up your ears: there is no limitation on a hardware level. It is all about the software.

Your problem with understanding my analogies stems from the fact you are a narrow-minded person, and this is not an insult but a sad fact; most people are, it is not your fault, it is something done to you, something you are yet to overcome. You are not capable of outside-the-box thinking; you are conceptually limited to only what is in the box. Which is why you perceive outside-the-box opinions as alien and hard to understand.

lilmoe - Friday, January 22, 2016

lol, I should really convert to the Apple religion just to stop being ignorant. Take care man.

ddriver - Friday, January 22, 2016

You should just sit down and carefully reevaluate your whole life, I mean if "Apple religion" is what you were able to take out of all the Apple bashing I went through :D Since you obviously missed that obvious thing, let me put it out directly: I am criticizing Apple for crippling good hardware into useless toys.