The Apple iPad Pro Review

by Ryan Smith, Joshua Ho & Brandon Chester on January 22, 2016 8:10 AM EST

SoC Analysis: On x86 vs ARMv8

Before we get to the benchmarks, I want to spend a bit of time talking about the impact of CPU architectures at a moderate level of technical depth. At a high level, there are a number of peripheral issues when it comes to comparing these two SoCs, such as the quality of their fixed-function blocks. But when you look at what consumes the vast majority of the power, it turns out that the CPU is competing with things like the modem/RF front-end and GPU.

x86-64 ISA registers

Probably the easiest place to start when we’re comparing things like Skylake and Twister is the ISA (instruction set architecture). This subject alone is probably worthy of an article, but the short version for those that aren't really familiar with this topic is that an ISA defines how a processor should behave in response to certain instructions, and how these instructions should be encoded. For example, if you were to add together two integers held in the EAX and EDX registers, x86-32 dictates that this would be encoded as 01 d0 in hexadecimal. In response to this instruction, the CPU would add whatever value was in the EDX register to the value in the EAX register and leave the result in the EAX register.
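To make the encoding example concrete, here is a minimal Python sketch that decodes the two bytes 01 d0 by hand. It covers only the register-to-register form of opcode 0x01 (ADD r/m32, r32); the function names `decode_add_rm32_r32` and `execute_add` are made up for illustration, and a real decoder handles far more cases.

```python
# 32-bit general-purpose registers in x86 encoding order (numbers 0-7).
REGS32 = ["eax", "ecx", "edx", "ebx", "esp", "ebp", "esi", "edi"]


def decode_add_rm32_r32(code: bytes) -> tuple:
    """Decode opcode 0x01 (ADD r/m32, r32) in its register-direct form.

    Returns (mnemonic, destination, source). Memory operands are out of
    scope for this sketch.
    """
    if len(code) != 2 or code[0] != 0x01:
        raise ValueError("expected opcode 0x01 followed by one ModRM byte")
    modrm = code[1]
    mod = modrm >> 6             # top two bits: addressing mode
    reg = (modrm >> 3) & 0b111   # middle three bits: source register
    rm = modrm & 0b111           # low three bits: destination register
    if mod != 0b11:
        raise ValueError("only register-direct operands are handled here")
    return ("add", REGS32[rm], REGS32[reg])


def execute_add(instruction: tuple, regs: dict) -> None:
    """Apply a decoded add to a dict of register values (32-bit wraparound)."""
    _, dest, src = instruction
    regs[dest] = (regs[dest] + regs[src]) & 0xFFFFFFFF


instruction = decode_add_rm32_r32(bytes.fromhex("01d0"))
registers = {"eax": 5, "edx": 7}
execute_add(instruction, registers)
print(instruction, registers)
```

The ModRM byte d0 splits into mod = 11, reg = 010 (EDX) and rm = 000 (EAX), so the instruction reads as add eax, edx: the sum lands in EAX and EDX is left unchanged.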

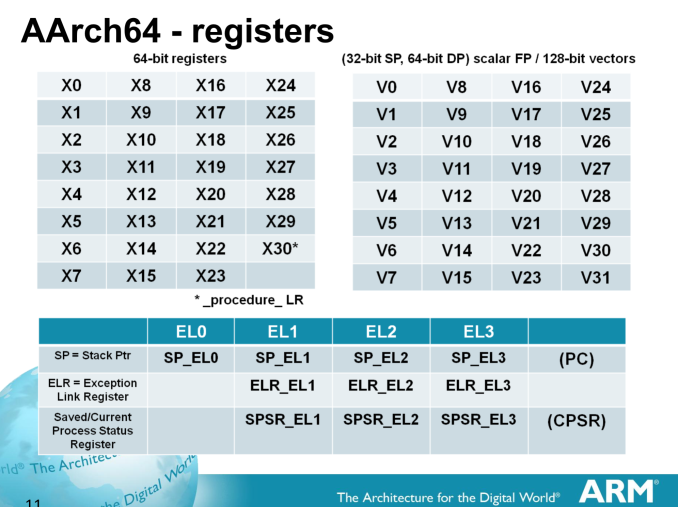

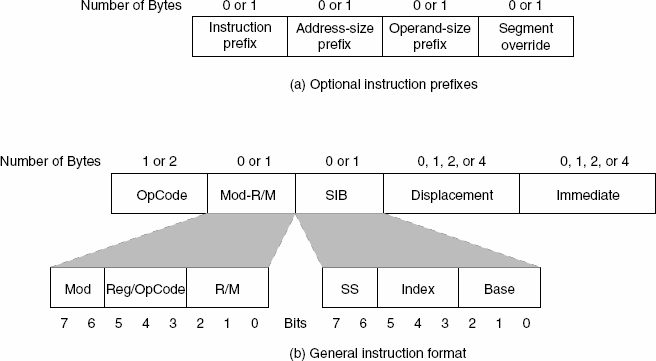

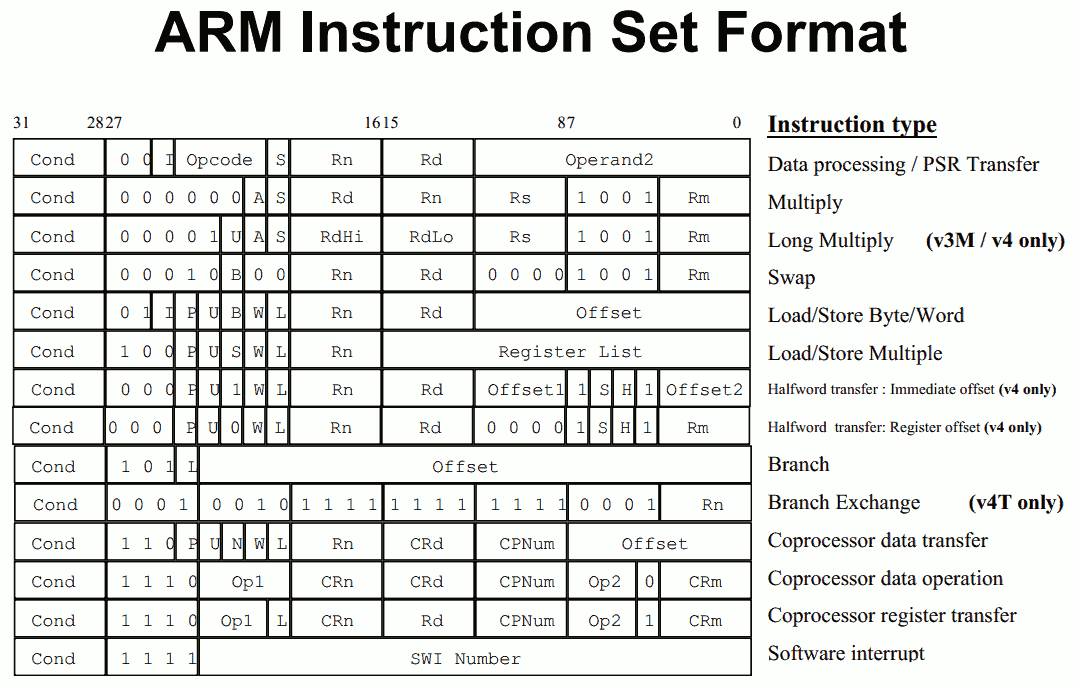

The fundamental difference between x86 and ARM is that x86 is a relatively complex ISA, while ARM is relatively simple by comparison. One key difference is that ARM dictates that every instruction is a fixed number of bits. In the case of ARMv8-A and ARMv7-A, all instructions are 32 bits long unless you're in Thumb mode, in which case all instructions are 16 bits long, but the same sort of trade-offs that come from a fixed-length instruction encoding still apply. Thumb-2 is a variable-length ISA, so in some sense the trade-offs of a variable-length encoding apply to it. It’s important to make a distinction between instructions and data here, because even though AArch64 uses 32-bit instructions the register width is 64 bits, which is what determines things like how much memory can be addressed and the range of values that a single register can hold. By comparison, Intel’s x86 ISA has variable-length instructions. In both x86-32 and x86-64/AMD64, each instruction can range anywhere from 8 to 120 bits long depending upon how the instruction is encoded.

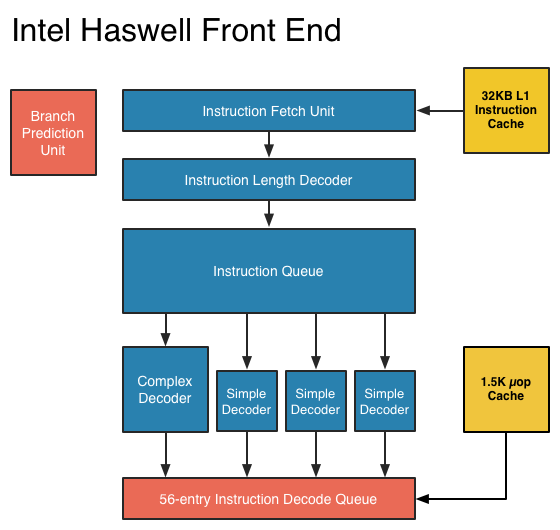

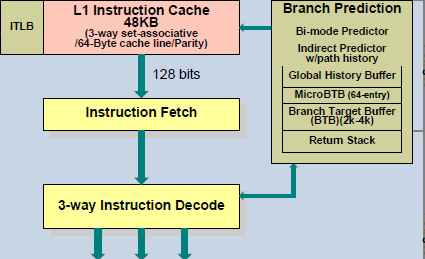

At this point, it might be evident that on the implementation side of things, a decoder for x86 instructions is going to be more complex. For a CPU implementing the ARM ISA, because the instructions are of a fixed length the decoder simply reads instructions 2 or 4 bytes at a time. On the other hand, a CPU implementing the x86 ISA would have to determine how many bytes to pull in at a time for an instruction based upon the preceding bytes.
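The difference in decoder work can be sketched in a few lines of Python. The variable-length format below is a toy invented for illustration and is not real x86: it assumes the low four bits of an instruction's first byte give its total length, whereas real x86 length depends on prefixes, opcode, ModRM, SIB and more. The point is only that a fixed-width decoder knows every start offset up front, while a variable-length decoder has to find each one from the instruction before it.

```python
def fixed_length_boundaries(code: bytes, width: int = 4) -> list:
    """Start offsets for a fixed-width encoding.

    No instruction bytes need to be inspected: offsets are 0, width, 2*width...
    """
    if len(code) % width:
        raise ValueError("code size is not a multiple of the instruction width")
    return list(range(0, len(code), width))


def variable_length_boundaries(code: bytes) -> list:
    """Start offsets for a TOY variable-length encoding (not real x86).

    Assumption: the low 4 bits of each instruction's first byte hold its
    total length in bytes (1-15). Each offset depends on the previous length,
    so the scan is inherently sequential.
    """
    offsets = []
    pos = 0
    while pos < len(code):
        length = code[pos] & 0x0F
        if length == 0 or pos + length > len(code):
            raise ValueError(f"bad length at offset {pos}")
        offsets.append(pos)
        pos += length
    return offsets


print(fixed_length_boundaries(bytes(12)))
print(variable_length_boundaries(bytes([0x02, 0xAA, 0x01, 0x03, 0xBB, 0xCC])))
```

In hardware, the sequential dependency in the second function is what makes decoding several variable-length instructions in the same cycle more expensive than slicing a fetch block at fixed offsets.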

A57 Front-End Decode, Note the lack of uop cache

While it might sound like the x86 ISA is just clearly at a disadvantage here, it’s important to avoid oversimplifying the problem. Although the decoder of an ARM CPU already knows how many bytes it needs to pull in at a time, this inherently means that unless all 2 or 4 bytes of the instruction are used, each instruction contains wasted bits. While it may not seem like a big deal to “waste” a byte here and there, this can actually become a significant bottleneck in how quickly instructions can get from the L1 instruction cache to the front-end instruction decoder of the CPU. The major issue here is that due to RC delay in the metal wire interconnects of a chip, increasing the size of an instruction cache inherently increases the number of cycles that it takes for an instruction to get from the L1 cache to the instruction decoder on the CPU. If a cache doesn’t have the instruction that you need, it could take hundreds of cycles for it to arrive from main memory.

Of course, there are other issues worth considering. For example, in the case of x86, the instructions themselves can be incredibly complex. One of the simplest examples is the add instruction, some forms of which allow either the source or the destination to be in memory, although not both at once. An example of this might be addq (%rax,%rbx,2), %rdx, which could take 5 CPU cycles to complete on something like Skylake. Of course, pipelining and other tricks can make the throughput of such instructions much higher, but that's another topic that can't be properly addressed within the scope of this article.

By comparison, the ARM ISA has no direct equivalent to this instruction. Looking at our example of an add instruction, ARM would require a load instruction before the add instruction. This has two notable implications. The first is that this once again is an advantage for an x86 CPU in terms of instruction density because fewer bits are needed to express a single instruction. The second is that for a “pure” CISC CPU you now have a barrier for a number of performance and power optimizations as any instruction dependent upon the result from the current instruction wouldn’t be able to be pipelined or executed in parallel.
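As a rough illustration of the two styles, the Python sketch below models addq (%rax,%rbx,2), %rdx as a single operation and then as a sequence of separate steps, the way a load/store ISA would express it. The register dictionary, the flat `memory` buffer, the scratch register `tmp` and the three-step split are all assumptions made for this example, not a claim about the exact ARM instruction sequence a compiler would emit.

```python
MASK64 = (1 << 64) - 1


def read_u64(memory: bytes, address: int) -> int:
    """Read a little-endian 64-bit value from a flat memory buffer."""
    return int.from_bytes(memory[address:address + 8], "little")


def x86_style_add(regs: dict, memory: bytes) -> None:
    """One instruction: addq (%rax,%rbx,2), %rdx.

    Address generation, the load and the add all happen inside it.
    """
    address = regs["rax"] + regs["rbx"] * 2
    regs["rdx"] = (regs["rdx"] + read_u64(memory, address)) & MASK64


def load_store_style_add(regs: dict, memory: bytes) -> None:
    """The same work as separate steps; 'tmp' is a hypothetical scratch register."""
    regs["tmp"] = regs["rax"] + regs["rbx"] * 2          # address generation
    regs["tmp"] = read_u64(memory, regs["tmp"])          # explicit load
    regs["rdx"] = (regs["rdx"] + regs["tmp"]) & MASK64   # register-only add


memory = bytearray(32)
memory[16:24] = (40).to_bytes(8, "little")   # value 40 stored at address 16

a = {"rax": 8, "rbx": 4, "rdx": 2}
b = dict(a)
x86_style_add(a, bytes(memory))
load_store_style_add(b, bytes(memory))
print(a["rdx"], b["rdx"])
```

Both paths leave the same value in rdx; what differs is how many instructions the program has to encode and how visible the intermediate load is to the rest of the machine.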

The final issue here is that x86 just has an enormous number of instructions that have to be supported due to backwards compatibility. Part of the reason why x86 became so dominant in the market was that code compiled for the original Intel 8086 would work with any future x86 CPU, but the original 8086 didn’t even have memory protection. As a result, all x86 CPUs made today still have to start in real mode and support the original 16-bit registers and instructions, in addition to 32-bit and 64-bit registers and instructions. Of course, running a program in 8086 mode is a non-trivial task, but even in the x86-64 ISA it isn't unusual to see instructions that are identical to the x86-32 equivalent. By comparison, ARMv8 is designed such that switching between AArch64 code and ARMv7-style AArch32 code can only happen across exception boundaries, so in practice programs are only going to run one type of code or the other.

Back in the 1980s up to the 1990s, this became one of the major reasons why RISC was rapidly becoming dominant as CISC ISAs like x86 ended up creating CPUs that generally used more power and die area for the same performance. However, today ISA is basically irrelevant to the discussion due to a number of factors. The first is that beginning with the Intel Pentium Pro and AMD K5, x86 CPUs were really RISC CPU cores with microcode or some other logic to translate x86 CPU instructions to the internal RISC CPU instructions. The second is that decoding of these instructions has been increasingly optimized around only a few instructions that are commonly used by compilers, which makes the x86 ISA practically less complex than what the standard might suggest. The final change here has been that ARM and other RISC ISAs have gotten increasingly complex as well, as it became necessary to enable instructions that support floating point math, SIMD operations, CPU virtualization, and cryptography. As a result, the RISC/CISC distinction is mostly irrelevant when it comes to discussions of power efficiency and performance as microarchitecture is really the main factor at play now.

408 Comments

Valantar - Tuesday, January 26, 2016 - link

I have to say I'm a bit confused by the large portion of this review comparing the iPad Pro to the Pixel C, all the while nearly neglecting the Surface Pro 4. You have a long section praising the pen experience with the Pencil, without a single comparison to the (included) Surface Pen? That's just weird. Sure, the SP4 runs a full desktop OS, but it's a far more natural comparison in terms of size, weight, power and compatible accessories. I get that not all of your reviewers can get access to every product, but for the sake of that part of the review, access to an SP4 would have been essential.

Constructor - Tuesday, January 26, 2016 - link

I'm not defending this review necessarily, which is a bit odd and lacking in some regards, but there are various interesting Youtube demonstrations and reviews which make exactly that comparison.

This is an interesting comparison of tracking accuracy and latency:

https://www.youtube.com/watch?v=niD1N1d4nTc

This is from a designer's perspective:

https://www.youtube.com/watch?v=YaO_ucAZ4dQ

And comparing iPad Pro, Surface Pro 3 and Wacom Cintiq:

https://www.youtube.com/watch?v=JlspvcF-DKs

glenn.tx - Wednesday, January 27, 2016 - link

I agree completely. It's quite disappointing. The comparisons seem to be cherry picked.

bebby - Tuesday, January 26, 2016 - link

What I miss in the discussion and review so far is the fact that google obviously does not yet support the higher resolution of the ipad pro for their apps. I wonder if there is intent behind that. It is very annoying as a user.

Google is getting more and more important as a software/app provider but so far they have not been successful with any of their hardware ventures (motorola, google glass, tablets, etc.).

ipad pro would be perfect with working google apps.

Constructor - Tuesday, January 26, 2016 - link

Looks like Google doesn't want to be seen boosting the competition's platform even though that's where they make most of their money on mobile, ironically.

(Can't say I'd miss any of their software, though. Apart from an occasional picture search I'm not using any of it.)

Not that Apple is falling all over themselves in making software for other platforms either (even if they sporadically do, for their own purposes).

Zingam - Tuesday, January 26, 2016 - link

A Chargeable Pen? Apple's sense of humor never fails to amaze me!

Constructor - Tuesday, January 26, 2016 - link

Which other pen with the same capabilities (zero-calibration pixel-precise resolution + pressure + tilt + orientation, near-zero latency, near-zero parallax) a) even exists and then b) does not need its own power supply?

phexac - Tuesday, January 26, 2016 - link

The only way I would call this device "Pro" is if I could actually use it at work. I drive a bulldozer and, though I've tried, digging ditches with iPad Pro is terribly inefficient, and that's a problem that I don't see software makers fixing any time soon for a touch only device. Furthermore, the charging port has compatibility issues and would not accept the hose I use to refuel the bulldozer. To add insult to injury, you cannot sit on the iPad while using it! I couldn't help chuckling at the expectation that Apple apparently has for its consumers to either stand or kneel while SUPPORTING THE IPAD'S weight and trying to use it to move a mound of gravel at the same time.

Finally, I have found in my experiments that even adding a keyboard to this device does not solve the problem. I have tried both the Apple iPad Pro keyboard and a Bluetooth one I could use wirelessly while sitting on a stack of cement bags. iPad lacks the basic ability to self-propel around the construction site and requires me to carry it from one task to another.

Better luck next time, Apple! I will stick with my Caterpillar earthmover!

Constructor - Tuesday, January 26, 2016 - link

Yep. The exact same argumentation as above in many cases! B-)

althaz - Tuesday, January 26, 2016 - link

What I don't understand is the constant comparisons to the Surface Pro 3 - particularly in terms of the keyboard which changed quite significantly with the Surface Pro 4 (the pen also changed significantly).