GeForce + Radeon: Previewing DirectX 12 Multi-Adapter with Ashes of the Singularity

by Ryan Smith on October 26, 2015 10:00 AM EST

Ashes of the Singularity: Unlinked Explicit Multi-Adapter with AFR

Built on Oxide’s Nitrous engine, Ashes of the Singularity will be the first full game released using the engine. Oxide had previously used the engine as part of their Star Swarm technical demo, showcasing the benefits of vastly improved draw call throughput under Mantle and DirectX 12. As one might expect then, for their first retail game Oxide is developing a game around Nitrous’s DX12 capabilities, with an eye towards putting a large number of draw calls to good use and developing something that might not have looked as good under DirectX 11.

That resulting game is Ashes of the Singularity, a massive-scale real time strategy game. Ashes is a spiritual successor of sorts to 2007’s Supreme Commander, a game with a reputation for its technical ambition. Similar to Supreme Commander, Oxide is aiming high with Ashes, and while the current alpha is far from optimized, they have made it clear that even the final version of the game will push CPUs and GPUs hard. Between a complex game simulation (including ballistic and line of sight checks for individual units) and the rendering resources needed to draw all of those units and their weapons effects in detail over a large playing field, I’m expecting that the final version of Ashes will be the most demanding RTS we’ve seen in some number of years.

Because of its high resource requirements Ashes is also a good candidate for multi-GPU scaling, and for this reason Oxide is working on implementing DirectX 12 explicit multi-adapter support into the game. For Ashes, Oxide has opted to start by implementing support for unlinked mode, both because this is a building block for implementing linked mode later on and because from a tech demo point of view this allows Oxide to demonstrate unlinked mode’s most nifty feature: the ability to utilize multiple dissimilar (non-homogeneous) GPUs within a single game. EMA with dissimilar GPUs has been shown off in bits and pieces at developer events like Microsoft’s BUILD, but this is the first time an in-game demo has been made available outside of those conferences.

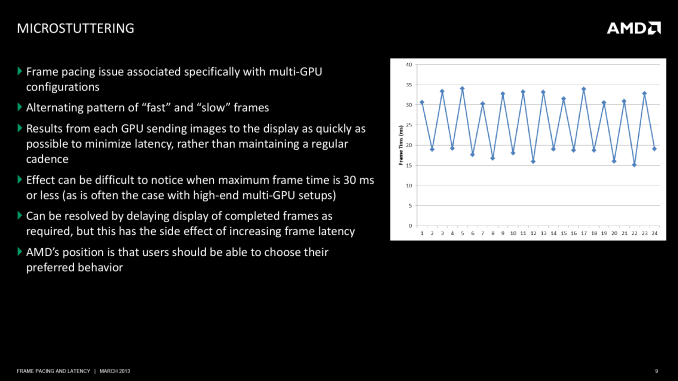

In order to demonstrate EMA and explicit synchronization in action, Oxide has started things off by using a basic alternate frame rendering implementation for the game. As we briefly mentioned in our technical overview of DX12 explicit multi-adapter, EMA puts developers in full control of the rendering process, which for Oxide meant implementing AFR from scratch. This includes assigning frames to each GPU, handling frame buffer transfers from the secondary GPU to the primary GPU, and most importantly of all controlling frame pacing, which is typically the hardest part of AFR to get right.
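To make those three responsibilities concrete, here is a minimal sketch in Python of a round-robin AFR schedule. This is not Oxide’s code and it makes no DirectX 12 calls; the names `FramePlan` and `plan_afr`, the 16 ms frame interval, and the choice of GPU 0 as the primary adapter are all assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class FramePlan:
    frame: int            # frame index
    gpu: int              # index of the GPU that renders this frame
    needs_copy: bool      # True if the finished frame must be copied to the primary GPU
    present_at_ms: float  # evenly spaced presentation target (frame pacing)


def plan_afr(num_frames, num_gpus=2, primary=0, interval_ms=16.0):
    """Assign frames to GPUs round-robin and give each an evenly paced present time.

    Hypothetical helper: a real implementation would issue GPU work and
    synchronize with fences rather than just build a list.
    """
    plans = []
    for frame in range(num_frames):
        gpu = frame % num_gpus  # alternate frames between the GPUs
        plans.append(
            FramePlan(
                frame=frame,
                gpu=gpu,
                needs_copy=(gpu != primary),       # only secondary GPUs transfer
                present_at_ms=frame * interval_ms,  # fixed cadence, not "as soon as done"
            )
        )
    return plans


if __name__ == "__main__":
    for plan in plan_afr(4):
        print(plan)
```

In this sketch the even frames stay on the primary GPU, the odd frames are flagged for a copy from the secondary GPU, and every frame is given a presentation target on a fixed cadence rather than being shown the moment it finishes, which is the essence of frame pacing.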

Because Oxide is using a DX12 EMA AFR implementation here, this gives Ashes quite a bit of flexibility as far as GPU compatibility goes. From a performance standpoint the basic limitations of AFR are still in place – due to the fact that each GPU is tasked with rendering a whole frame, all utilized GPUs need to be close in performance for best results – otherwise Oxide is able to support a wide variety of GPUs with one generic implementation. This includes not only AMD/AMD and NVIDIA/NVIDIA pairings, but GPU pairings that wouldn’t typically work for Crossfire and SLI (e.g. GTX Titan X + GTX 980 Ti). But most importantly of course, this allows Ashes to support using an AMD video card and an NVIDIA video card together as well. In fact beyond the aforementioned performance limitations, Ashes’ AFR mode should work on any two DX12 compliant GPUs.
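The reason mismatched GPUs scale poorly under AFR can be shown with some simple arithmetic. The sketch below assumes a simplified model: frames strictly alternate between GPUs, presentation is evenly paced, and transfer and CPU costs are ignored. Under those assumptions the slowest GPU sets the cadence. The function names and the frame times used are illustrative and are not measurements from this article.

```python
def afr_fps(frame_times_ms):
    """Frame rate of evenly paced AFR under the simplified model.

    Each of the N GPUs must deliver one frame every N presentation
    intervals, so the interval can be no shorter than max(frame time) / N.
    """
    n = len(frame_times_ms)
    return 1000.0 * n / max(frame_times_ms)


def scaling_vs_fastest(frame_times_ms):
    """AFR frame rate relative to running the fastest GPU on its own."""
    single_fps = 1000.0 / min(frame_times_ms)
    return afr_fps(frame_times_ms) / single_fps


if __name__ == "__main__":
    for pair in ([20.0, 20.0], [20.0, 30.0], [20.0, 40.0]):
        print(pair, round(scaling_vs_fastest(pair), 2))
```

In this model two GPUs that each need 20 ms per frame double the frame rate, a 20 ms and 30 ms pairing yields roughly 1.33x over the faster card alone, and a 20 ms and 40 ms pairing yields no gain at all.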

From a technical standpoint, Oxide tells us that they’ve had a bit of a learning curve in getting EMA working for Ashes – particularly since they’re the first – but that they’re happy with the results. Obviously the fact that this even works is itself a major accomplishment, and in our experience frame pacing with v-sync disabled and tearing enabled feels smooth on the latest generation of high-end cards. Otherwise Oxide is still experimenting with the limits of the hardware and the API; they’ve told us that so far they’ve found that there’s plenty of bandwidth over PCIe for shared textures, and meanwhile they’re incurring a roughly 2ms penalty in transferring data between GPUs.

With that said and to be very clear here, the game itself is still in its alpha state, and the multi-adapter support is not even at alpha (ed: nor is it in the public release at this time). So Ashes’ explicit multi-adapter support is a true tech demo, intended to first and foremost show off the capabilities of EMA rather than what performance will be like in the retail game. As it stands the AFR-enabled build of Ashes occasionally crashes at load time for no obvious reason. Furthermore there are stability/corruption issues with newer AMD and NVIDIA drivers, which have required us to use slightly older drivers that have been validated to work. Overall while AMD and NVIDIA have their DirectX 12 drivers up and running, as has been the case with past API launches it’s going to take some time for the two GPU firms to lock down every new feature of the API and driver model and to fully knock out all of their driver bugs.

Finally, Oxide tells us that going forward they will be developing support for additional EMA modes in Ashes. As the current unlinked EMA implementation is stabilized, the next thing on their list will be to add support for linked EMA for better performance on similar GPUs. Oxide is still exploring linked EMA, but somewhat surprisingly they tell us that unlinked EMA already unlocks much of the performance of their AFR implementation. A linked EMA implementation in turn may only improve multi-GPU scaling by a further 5-10%. Beyond that, they will also be looking into alternative implementations of multi-GPU rendering (e.g. work sharing of individual frames), though that is farther off and will likely hinge on other factors such as hardware capabilities and the state of DX12 drivers from each vendor.

The Test

For our look at Ashes’ multi-adapter performance, we’re using Windows 10 with the latest updates on our GPU testbed. This provides plenty of CPU power for the game, and we’ve selected sufficiently high settings to ensure that we’re GPU-bound at all times.

For GPUs we’re using NVIDIA’s GeForce GTX Titan X and GTX 980 Ti, along with AMD’s Radeon R9 Fury X and R9 Fury for the bulk of our testing. As roughly comparable cards in price and performance, the GTX 980 Ti and R9 Fury X are our core comparison cards, with the additional GTX and Fury cards to back them up. Meanwhile we’ve also done a limited amount of testing with the GeForce GTX 680 and Radeon HD 7970 to showcase how well Ashes’ multi-adapter support works on older cards.

Finally, on the driver side of matters we’re using the most recent drivers from AMD and NVIDIA that work correctly in multi-adapter mode with this build of Ashes. For AMD that’s Catalyst 15.8 and for NVIDIA that’s release 355.98. We’ve also thrown in single-GPU results with the latest drivers (15.10 and 358.50 respectively) to quickly showcase where single-GPU performance stands with these newest drivers.

| Component | Configuration |
|---|---|
| CPU | Intel Core i7-4960X @ 4.2GHz |
| Motherboard | ASRock Fatal1ty X79 Professional |
| Power Supply | Corsair AX1200i |
| Hard Disk | Samsung SSD 840 EVO (750GB) |
| Memory | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case | NZXT Phantom 630 Windowed Edition |
| Monitor | Asus PQ321 |
| Video Cards | AMD Radeon R9 Fury X, ASUS STRIX R9 Fury, AMD Radeon HD 7970, NVIDIA GeForce GTX Titan X, NVIDIA GeForce GTX 980 Ti, NVIDIA GeForce GTX 680 |
| Video Drivers | NVIDIA Release 355.98, NVIDIA Release 358.50, AMD Catalyst 15.8 Beta, AMD Catalyst 15.10 Beta |
| OS | Windows 10 Pro |

180 Comments

andrew_pz - Tuesday, October 27, 2015

Radeon placed in 16x slot, GeForce installed to 4x slot only. WHY? It's cheat!

silverblue - Tuesday, October 27, 2015

There isn't a 4x slot on that board. To quote the specs..."- 4 x PCI Express 3.0 x16 slots (PCIE1/PCIE2/PCIE4/PCIE5: x16/8/16/0 mode or x16/8/8/8 mode)"

Even if the GeForce was in an 8x slot, I really doubt it would've made a difference.

Ryan Smith - Wednesday, October 28, 2015

Aye. And just to be clear here, both cards are in x16 slots (we're not using tri-8 mode).

brucek2 - Tuesday, October 27, 2015

The vast majority of PCs, and 100% of consoles, are single GPU (or less). Therefore developers absolutely must ensure their game can run satisfactorily on one GPU, and have very little to gain from investing extra work in enabling multi GPU support. To me this suggests that once the burden of enabling multi-gpu support moves from hardware sellers (who can benefit from selling more cards) to game publishers (who basically have no real way to benefit at all), the only sane decision is to not invest any additional development or testing in multi gpu support, and that therefore multi GPU support will effectively be dead in the DX12 world.

What am I missing?

willgart - Tuesday, October 27, 2015

well... you no longer need to change your card to a big one, you can just upgrade your pc with a low or middle entry card to get a good boost! and you keep your old one. from a long term point of view we win, not the hardware resellers. imagine today you have a GTX970, in 4 years you can get a GTX 2970 and have a stronger system than a single 2980 card... especially the FPS / $ is very interesting.

and when you compare the setup HD7970+GTX680, maybe the cost is 100$ today(?) can be compared to a single GTX980 which cost nearly 700$...

brucek2 - Tuesday, October 27, 2015

I understand the benefit to the user. What I'm worried is missing is incentive to the game developer. For them the new arrangement sounds like nothing but extra cost and likely extra technical support hassle to make multi-gpu work. Why would they bother? To use your example of a user with 7970+680, the 680 alone would at least meet the console-equivalent setting, so they'd probably just tell you to use that.

prtskg - Wednesday, October 28, 2015

It would make their game run better and thus improve their brand name.

brucek2 - Wednesday, October 28, 2015

Making it run "better" implies it runs "worse" for the 95%+ of PC users (and 100% of console users) who do not have multi-GPU. That's a non-starter. The publisher has to make it a good experience for the overwhelmingly common case of single gpu or they're not going to be in business for very long. Once they've done that, what they are left with is the option to spend more of their own dollars so that a very tiny fraction of users can play the same game at higher graphics settings. Hard to see how that's going to improve their brand name more than virtually anything else they'd choose to spend that money on, and certainly not for the vast majority of users who will never see or know about it.

BrokenCrayons - Wednesday, October 28, 2015

You're not missing anything at all. Multi-GPU systems, at least in the case of there being more than one discrete GPU, represent a small number of halo desktop computers. Desktops, gaming desktops in particular, are already a shrinking market and even the large majority of such systems contain only a single graphics card. This means there's minimal incentive for a developer of a game to bother soaking up the additional cost of adding support for multi GPU systems. As developers are already cost-sensitive and working in a highly competitive business landscape, it seems highly unlikely that they'll be willing to invest the human resources in the additional code or soak up the risks associated with bugs and/or poor performance. In essence, DX12 seems poised to end multi GPU gaming UNLESS the dGPU + iGPU market is large enough in modern computers AND the performance benefits realized are worth the cost to the developers to write code for it. There are, after all, a lot more computers (even laptops and a very limited number of tablets) that contain an Intel graphics processor and an NV or more rarely an AMD dGPU. Though even then, I'd hazard a guess to say that the performance improvement is minimal and not worth the trouble. Plus most computers sold contain only whatever Intel happens to throw onto the CPU die so even that scenario is of limited benefit in a world of mostly integrated graphics processors.

mayankleoboy1 - Wednesday, October 28, 2015

Any idea what LucidLogix are doing these days? Last I remember, they had released some software solutions which reduced battery drain on Samsung devices (by dynamically decreasing the game rendering quality