The IBM POWER8 Review: Challenging the Intel Xeon

by Johan De Gelas on November 6, 2015 8:00 AM EST

Posted in: IT Computing, CPUs, Enterprise, Enterprise CPUs, IBM, POWER, POWER8

Inside the S822L: Hardware Components

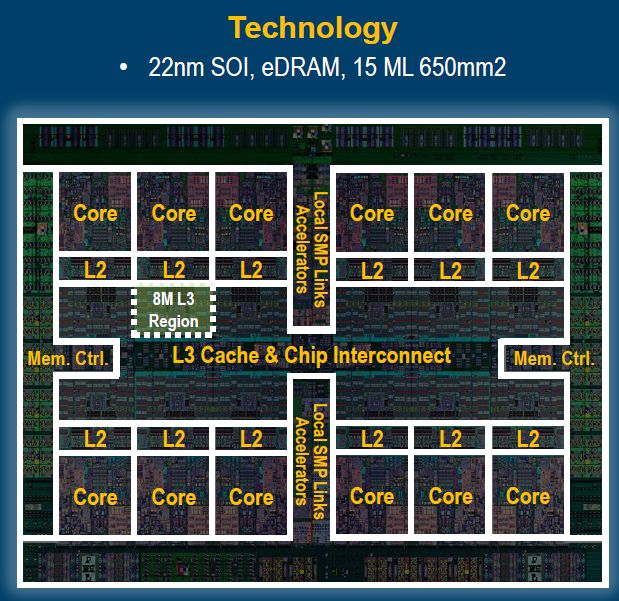

The 2U rack-mount S822L server contains two IBM POWER8 DCM (Dual-Chip Module) sockets. Each socket thus contains two dies connected by a 32 GB/s interconnect. The reason for using a multi-chip module (MCM) is pretty simple: smaller five- to six-core dies are a lot cheaper to produce than the massive 650 mm² monolithic 12-core dies. As a result, the latter are reserved for IBM's high-end servers (the E880 and the like). So while most POWER8 presentations and news posts on the net talk about the multi-core die below...

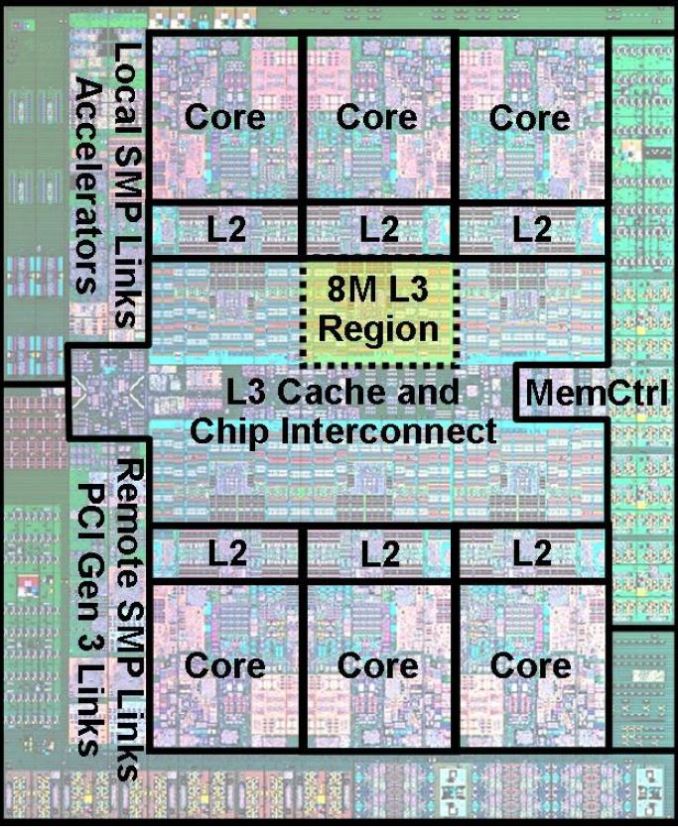

... it is actually an MCM with two six-core dies like the one below that is challenging the 10- to 18-core Xeons. The massive monolithic 10- to 12-core dies are in fact reserved for much more expensive IBM servers.

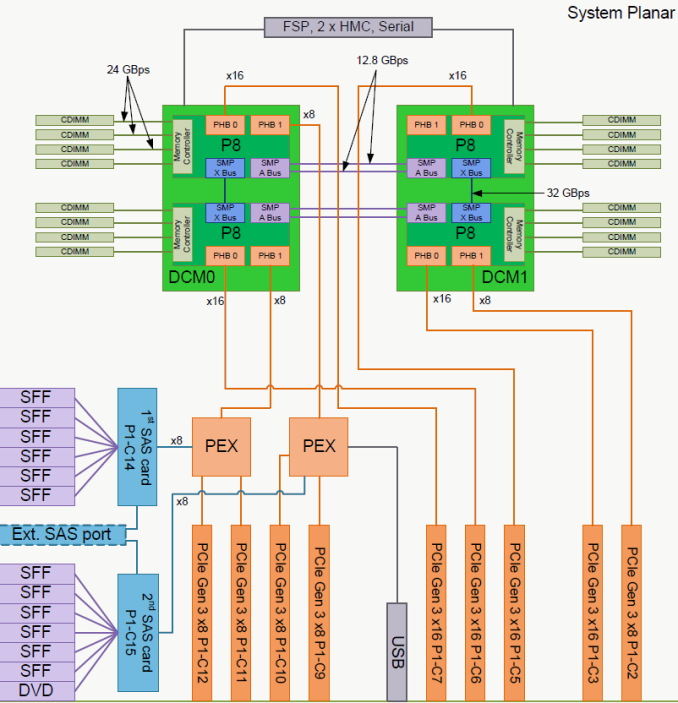

The layout of the S822L is well illustrated by the schematic in the manual.

Each DCM offers 48 PCIe Gen 3 lanes. 32 of those lanes are directly connected to the processor, while 16 connect to PCIe switches. The PCIe switches have "only" 8 lanes upstream to the DCM, but offer 24 lanes to "medium" speed devices downstream. As it is unlikely that both your SAS controllers and your network controllers will gobble up the full PCIe x8 bandwidth, this is a very elegant way to offer additional PCIe lanes.
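As a rough sanity check on the lane budget described above, the arithmetic can be sketched in a few lines of Python. The per-lane rate (8 GT/s with 128b/130b encoding) is the standard PCIe Gen 3 figure. Two things are assumptions inferred from the numbers in the text rather than taken from the manual: that the 16 switch-bound lanes are split over two switches with 8 upstream lanes each, and that the 24 downstream lanes are per switch. All names are illustrative.

```python
# PCIe Gen 3 signals at 8 GT/s per lane and uses 128b/130b encoding.
GEN3_GIGATRANSFERS_PER_S = 8.0
ENCODING_EFFICIENCY = 128 / 130


def lane_bandwidth_gbs() -> float:
    """Usable bandwidth of a single Gen 3 lane in GB/s, per direction."""
    return GEN3_GIGATRANSFERS_PER_S * ENCODING_EFFICIENCY / 8  # bits -> bytes


def link_bandwidth_gbs(lanes: int) -> float:
    """Usable bandwidth of a Gen 3 link with the given lane count."""
    return lanes * lane_bandwidth_gbs()


# Lane counts quoted in the article, per DCM.
TOTAL_LANES_PER_DCM = 48
DIRECT_LANES = 32
SWITCH_LANES = 16

# Assumption: each switch has 8 upstream and 24 downstream lanes.
SWITCH_UPSTREAM_LANES = 8
SWITCH_DOWNSTREAM_LANES = 24

# Assumption: the 16 switch-bound lanes feed 16 / 8 = 2 switches.
switches_per_dcm = SWITCH_LANES // SWITCH_UPSTREAM_LANES

# Downstream devices share the narrower upstream link.
oversubscription = SWITCH_DOWNSTREAM_LANES / SWITCH_UPSTREAM_LANES

if __name__ == "__main__":
    print(f"Per-lane bandwidth: {lane_bandwidth_gbs():.3f} GB/s")
    print(f"x8 upstream link:   {link_bandwidth_gbs(SWITCH_UPSTREAM_LANES):.2f} GB/s")
    print(f"Switches per DCM:   {switches_per_dcm}")
    print(f"Oversubscription:   {oversubscription:.0f}:1")
```

Under these assumptions, the devices behind each switch share a single x8 upstream link, which only becomes a bottleneck if several of them try to saturate their links at the same time.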

146 Comments

LemmingOverlord - Friday, November 6, 2015 - link

Mate... Bite your tongue! Johan is THE man when it comes to Datacenter-class hardware. Obviously he doesn't get the same exposure as the personal technology guys, but he is definitely one of the best reviewers out there (inside and outside AT).

joegee - Friday, November 6, 2015 - link

He's been doing class A work since Ace's Hardware (maybe before, I found him on Ace's though.) He is a cut above the rest.

nismotigerwvu - Friday, November 6, 2015 - link

Johan, I think you had a typo in the first sentence of the 3rd paragraph on page 1.

"After seeing the reader interestin POWER8 in that previous article..."

Nice read overall and if I hadn't just had my morning cup of coffee I would have missed it too.

Ryan Smith - Friday, November 6, 2015 - link

Good catch. Thanks!

Essence_of_War - Friday, November 6, 2015 - link

That performance per watt, it is REALLY hard to keep up with the Xeons there!

III-V - Friday, November 6, 2015 - link

IBM's L1 data cache has a 3-cycle access time, and is twice as large (64KB) as Intel's, and I think I remember it accounting for something like half the power consumption of the core.

Essence_of_War - Friday, November 6, 2015 - link

Whoa, neat bit of trivia!

JohanAnandtech - Saturday, November 7, 2015 - link

Interesting. Got a link/doc to back that up? I have not found such detailed architectural info.

Henriok - Friday, November 6, 2015 - link

Very nice to see tests of non-x86 hardware. It's interesting to see a test of the S822L when IBM just launched two even more price-competitive machines, designed and built by Wistron and Tyan, as pure OpenPOWER machines: the S812LC and S822LC. These can't run AIX, and are substantially cheaper than the IBM-designed machines. They might lack some features, but they would probably fit nicely in this test. And they are sporting the single-chip 12-core version of the POWER8 processor (with cores disabled).

DanNeely - Friday, November 6, 2015 - link

"The server is powered by two redundant high quality Emerson 1400W PSUs."

The sticker on the PSU is only 80+ (no color). Unless the hotswap support comes with a substantial penalty (and if so, why?), this design looks to be well behind the state of the art. With data centers often being power/HVAC limited these days, using a relatively low efficiency PSU in an otherwise very high end system seems bizarre to me.