The Intel 6th Gen Skylake Review: Core i7-6700K and i5-6600K Tested

by Ian Cutress on August 5, 2015 8:00 AM EST

Skylake's iGPU: Intel Gen9

Both the Skylake processors here use Intel’s HD 530 graphics solution. When I first heard the name, alarm bells went off in my head with questions: why is the name different, has the architecture changed, and what does this mean fundamentally?

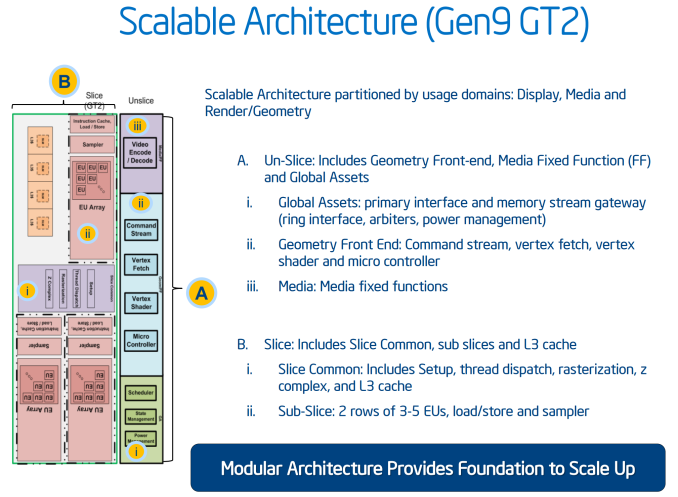

With few details forthcoming, we did the obvious thing and checked what information comes directly out of the processor. Querying HD 530 via Intel's OpenCL driver reports a 24 EU design running at 1150 MHz. This differs from what GPU-Z indicates, which points to a 48 EU design instead, although GPU-Z is often incorrect on newer graphics modules before launch day. We can confirm that this is a 24 EU design, and this most likely follows on from Intel’s 8th Generation graphics in the sense that we have a base GT2 design featuring three sub-slices of 8 EUs each.
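The EU count above is simple arithmetic on the slice layout. As a minimal sketch, the snippet below multiplies out the GT2 configuration described in the text (three sub-slices of 8 EUs) and estimates a theoretical peak FP32 rate at the reported 1150 MHz. The figure of 16 FP32 operations per EU per clock is an assumption taken from Intel's public descriptions of its Gen8/Gen9 execution units, not something measured in this review, and the function names are ours.

```python
def eu_count(slices: int, subslices_per_slice: int, eus_per_subslice: int) -> int:
    """Total execution units for a given slice layout."""
    return slices * subslices_per_slice * eus_per_subslice


def peak_gflops(eus: int, clock_mhz: float, flops_per_eu_per_clock: int = 16) -> float:
    """Theoretical peak FP32 GFLOPS.

    flops_per_eu_per_clock=16 is an ASSUMPTION (2 SIMD-4 FPUs, each doing
    a fused multiply-add per clock); it is not a figure from this article.
    """
    return eus * flops_per_eu_per_clock * clock_mhz / 1000.0


# GT2 as described in the text: one slice, three sub-slices, 8 EUs each.
hd530_eus = eu_count(slices=1, subslices_per_slice=3, eus_per_subslice=8)
print(hd530_eus)                     # 24
print(peak_gflops(hd530_eus, 1150))  # theoretical peak at 1150 MHz
```

This is an upper bound only; real workloads on an integrated GPU are usually limited by memory bandwidth and power long before they reach it.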

As far as we can tell, Intel calls the HD 530 graphics part of its 9th Generation (i.e. Gen9). We have been told directly by Intel that they have changed their graphics naming scheme from a four-digit (e.g. HD 4600) to a three-digit (e.g. HD 530) arrangement in order "to minimize confusion" (direct quote). Personally we find that it adds more confusion, because the HD 4600 naming is not directly linked to the HD 530 naming. You could argue that 5 is more than 4, but we already have HD 5200, HD 5500, Iris 6100 and others. So which is better, HD 530 or HD 5200? At this point it will create a miasma of uncertainty, probably exaggerated until we get a definitive explanation of the stack nomenclature.

Naming aside, Generation 9 graphics comes with some interesting enhancements. The slice and un-slice now have individual power and clock domains, allowing for a more efficient use of resources depending on the load (e.g. some un-slice not needed for some compute tasks). This lets the iGPU better balance power usage between fixed-function operation and programmable shaders.

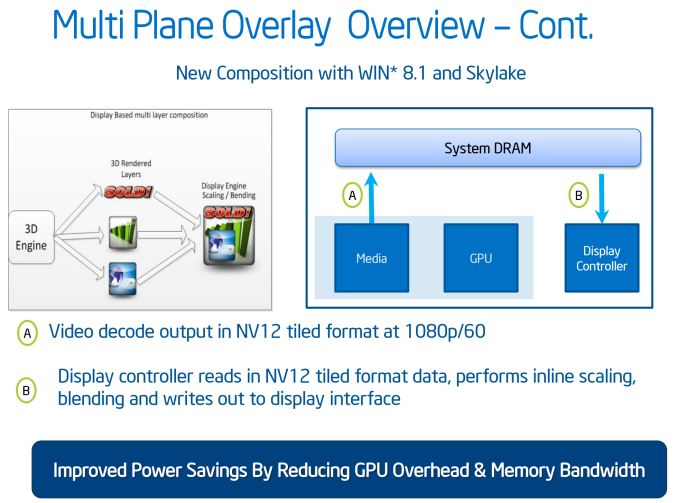

Generation 9 will support a feature called Multi Plane Overlay, which is similar to AMD’s video playback path adjustments in Carrizo. The principle here is that when a 3D engine has to perform certain operations on an image (blend, resize, scale), the data has to travel from the processor into DRAM then to the GPU to be worked on, then back out to DRAM before it hits the display controller, a small but potentially inefficient operation in mobile environments. What Multi Plane Overlay does is add fixed function hardware to the display controller to perform this without ever hitting the GPU, minimizing power consumption from the GPU and taking out a good portion of DRAM data transfers. This comes at a slight hit for die area overall due to the added fixed function units.

As shown above, this feature will be supported on Win 8.1 with Skylake’s integrated graphics. That being said, not all imaging can be moved in this way, but where possible the data will take the shorter path.
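To put rough numbers on the DRAM savings from Multi Plane Overlay, here is a toy model of per-frame memory traffic for the two paths described above. It is a sketch under loud assumptions: two full-screen 1080p planes at 4 bytes per pixel, no caching and no compression. The `Plane` type and both function names are hypothetical and do not correspond to any Intel or Windows API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plane:
    width: int
    height: int
    bytes_per_pixel: int

    @property
    def size_bytes(self) -> int:
        return self.width * self.height * self.bytes_per_pixel


def gpu_composition_traffic(sources, output) -> int:
    """GPU path: the GPU reads every source plane from DRAM, writes the
    composed frame back to DRAM, and the display controller reads it again."""
    read_sources = sum(p.size_bytes for p in sources)
    return read_sources + 2 * output.size_bytes


def overlay_traffic(sources) -> int:
    """Overlay path: the display controller reads the source planes directly
    and blends/scales them in fixed-function hardware."""
    return sum(p.size_bytes for p in sources)


# ASSUMED workload: a video plane and a UI plane, both 1920x1080 at 4 B/px.
video = Plane(1920, 1080, 4)
ui = Plane(1920, 1080, 4)
screen = Plane(1920, 1080, 4)

gpu_bytes = gpu_composition_traffic([video, ui], screen)
mpo_bytes = overlay_traffic([video, ui])
print(gpu_bytes, mpo_bytes, mpo_bytes / gpu_bytes)
```

Under these assumptions the overlay path moves half the bytes per frame. The real ratio depends on plane sizes, pixel formats and how many planes the hardware can actually accept.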

To go along with the reduced memory transfer, Gen9 has support for memory color stream compression. We have seen this technology come into play for other GPUs, where fixed function hardware and lossless algorithms mean that smaller quantities of image and texture data are transferred around the system, again saving power and reducing bandwidth constraints. The memory compression is also used with a scaler and format conversion pipe to reduce the encoding pressure on the execution units, reducing power further.
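The key property here is that the compression is lossless: fewer bytes move across the memory bus, but the decoded pixels are bit-identical. The sketch below illustrates that property with plain run-length encoding on a single row of pixels. It is emphatically not Intel's algorithm, which is not described here; RLE is swapped in only because it is the simplest lossless scheme to show.

```python
from itertools import groupby


def rle_encode(pixels):
    """Collapse runs of identical pixel values into (value, run_length) pairs."""
    return [(value, len(list(run))) for value, run in groupby(pixels)]


def rle_decode(runs):
    """Expand (value, run_length) pairs back into the original pixel list."""
    return [value for value, count in runs for _ in range(count)]


# A mostly flat row, as is common in UI and background regions (made-up data).
row = [0x202020] * 12 + [0xFFFFFF] * 3 + [0x202020] * 1

encoded = rle_encode(row)
assert rle_decode(encoded) == row  # lossless: exact round trip
print(len(row), "pixels ->", len(encoded), "runs")  # 16 pixels -> 3 runs
```

Flat regions compress well and noisy regions do not, which is why the savings from this kind of scheme vary with content.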

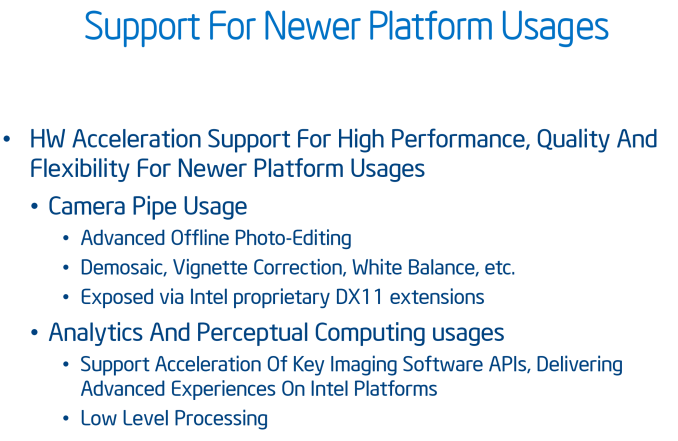

Adding into the mix, we have learned that Gen9 includes a feature called the ‘Camera Pipe’ for quick standard adjustments to images via hardware acceleration. This adjusts the programmable shaders to work in tandem for specific DX11 extensions on common image manipulation processes beyond resize/scale. The Camera Pipe is teamed with SDKs to help developers connect into optimized imaging APIs.

Media Encoding & Decoding

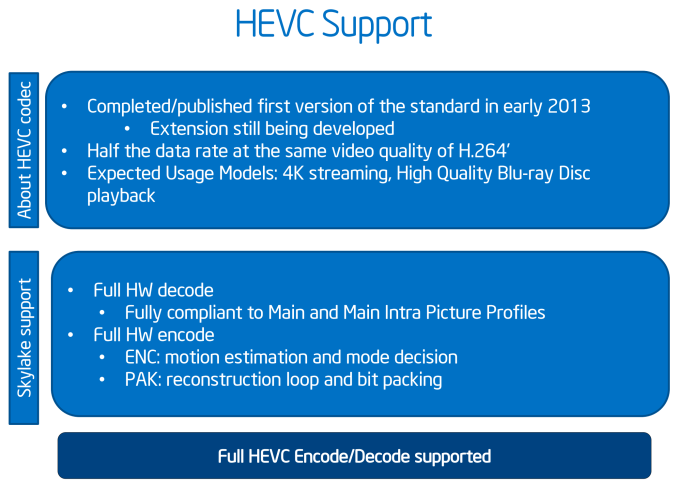

In the world of encode/decode, we get the following:

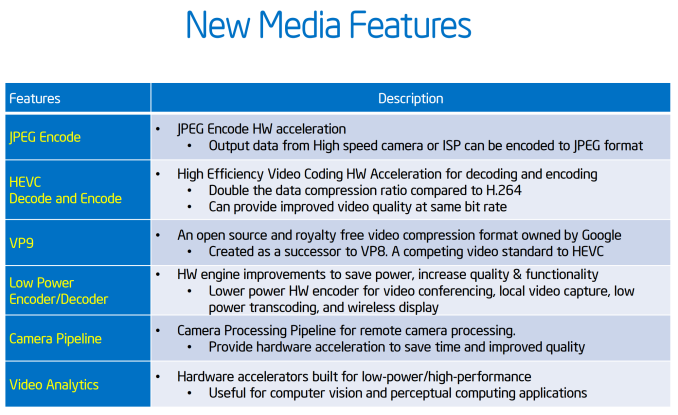

Whereas Broadwell implemented HEVC decoding in a "hybrid" fashion using a combination of CPU resources, GPU shaders, and existing GPU video decode blocks, Skylake gets a full, low power fixed function HEVC decoder. For desktop users this shouldn't impact things by too much - maybe improve compatibility a tad - but for mobile platforms this should significantly cut down on the amount of power consumed by HEVC decoding and increase the size and bitrate that the CPU can decode. Going hand-in-hand with HEVC decoding, HEVC encoding is now also an option with Intel's QuickSync encoder, allowing for quicker HEVC transcoding, or more likely real-time HEVC uses such as video conferencing.

Intel is also hedging its bets on HEVC by implementing a degree of VP9 support on Skylake. VP9 is Google's HEVC alternative codec, with the company pushing it as a royalty-free option. Intel calls VP9 support on Skylake "partial" for both encoding and decoding, indicating that VP9 is likely being handled in a hybrid manner similar to how HEVC was handled on Broadwell.

Finally, JPEG encoding is new for Skylake and set to support images up to 16K*16K.

Video Support

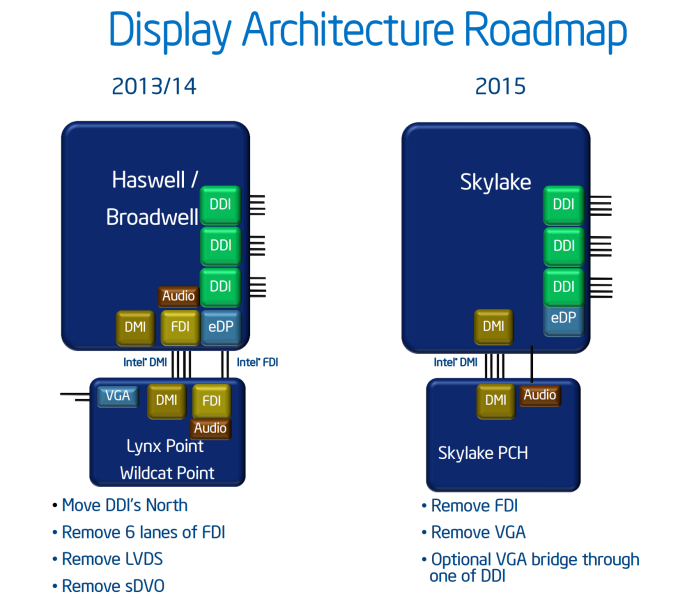

The analog (VGA) video connector has now been completely removed from the CPU/chipset combination, meaning that any VGA/D-Sub video connection has to be provided via an active digital/analog converter chip. This has been a long time coming, and is part of a commitment Intel made several years ago to remove VGA by 2015. Removing analog display functionality will mean added cost for legacy support in order to drive analog displays. Arguably this doesn’t mean much for Z170 as the high end platform is typically used with a discrete graphics card that has HDMI or DisplayPort, but we will see motherboards with VGA equipped in order to satisfy some regional markets with specific requirements.

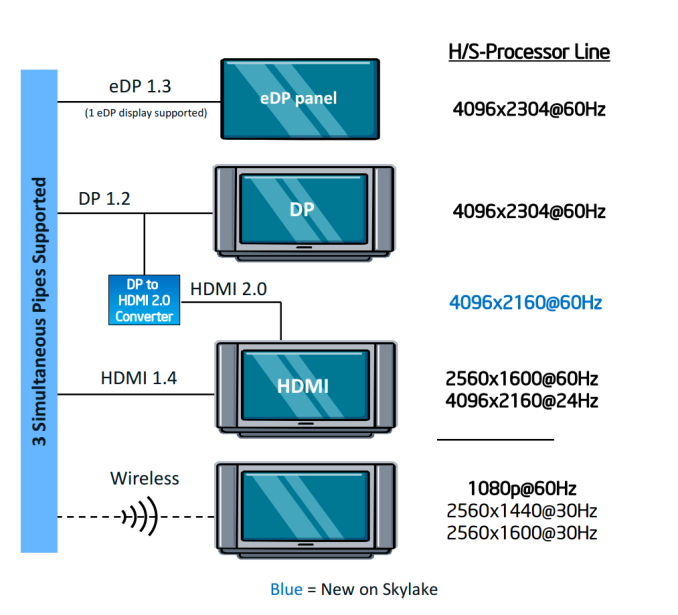

HDMI 2.0 is not supported by default, and only the following resolutions are possible on the three digital display controllers:

A DP to HDMI 2.0 converter, specifically an LS-Pcon, is required to do the adjustments, be it on the motherboard itself or as an external adapter. We suspect that there will not be many takers buying a controller to do this, given the capabilities and added benefits listed by the Alpine Ridge controller.
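For context on why HDMI 2.0 matters, the pixel clock arithmetic is straightforward. The sketch below uses the standard CTA-861 total timing for 3840x2160 (4400 x 2250 including blanking) and the published maximum TMDS clocks for HDMI 1.4 (340 MHz) and HDMI 2.0 (600 MHz). It assumes 8-bit color, where the TMDS clock equals the pixel clock. These figures come from the HDMI and CTA specifications rather than from this review, and the function names are ours.

```python
HDMI_1_4_MAX_TMDS_MHZ = 340.0  # from the HDMI 1.4 specification
HDMI_2_0_MAX_TMDS_MHZ = 600.0  # from the HDMI 2.0 specification


def pixel_clock_mhz(h_total: int, v_total: int, refresh_hz: int) -> float:
    """Pixel clock in MHz for a video timing, including blanking intervals."""
    return h_total * v_total * refresh_hz / 1e6


def fits_link(clock_mhz: float, max_tmds_mhz: float) -> bool:
    """True if the mode fits the link (assumes 8-bit color, TMDS == pixel clock)."""
    return clock_mhz <= max_tmds_mhz


uhd30 = pixel_clock_mhz(4400, 2250, 30)  # 3840x2160 at 30 Hz
uhd60 = pixel_clock_mhz(4400, 2250, 60)  # 3840x2160 at 60 Hz

print(uhd30, fits_link(uhd30, HDMI_1_4_MAX_TMDS_MHZ))  # 297.0 True
print(uhd60, fits_link(uhd60, HDMI_1_4_MAX_TMDS_MHZ))  # 594.0 False
print(uhd60, fits_link(uhd60, HDMI_2_0_MAX_TMDS_MHZ))  # 594.0 True
```

In other words, 4K at 60 Hz over HDMI needs the faster HDMI 2.0 link, which is the job the external converter performs here.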

477 Comments


xxxGODxxx - Saturday, October 31, 2015

Hi guys I would like to know whether I should buy the 6600k with a z170 mobo at $417 or should I buy a 3930k with a x79 mobo at $330? I'm not too sure if the extra IPC of the 6600k is enough to warrant the extra $87 over the 3930k especially since I will be overclocking the cpu and I will be gaming on a r9 390 (maybe I will add one more 390 in the future) at 1440p.

Toyevo - Wednesday, November 25, 2015

Even now I hesitate at updating a Phenom II X4 945. The Samsung 950 Pro pushed me over the line, and with it the need for PCIe M.2 only available in recent generations. There's no holy grail in CPUs, only what's relevant for each individual today. Of several other systems I have, none demand any change yet. On the Intel side my 2500K (and up) I wouldn't bother with even Skylake. With AMD my FX6300 (and up) are more power hungry but entirely adequate. And our E5-2xxx servers sit on Ivy Bridge until early 2017.

What does all this mean? Not a lot.. In the same way many of you see Skylake as a non event, I equally saw Broadwell and Haswell as non events. 20 years ago the jumps were staggering, overclocking wasn't nearly as trendy, nor as straight forward, but entirely necessary, the cost of new hardware prohibitively expensive. The generations were so definitive and fast back then.

i_will_eat_you - Saturday, December 12, 2015

This is a good review, especially the look at memory latency. The 4690K is left out however from a lot of benchmarks. If you include that then I don't see much of an attraction to skylake. There is also the concern about the new rootkit support skylake introduces with protected code execution. This is not something I see being used for the good of the consumer.

My one gripe is the lack of benchmarks for intense game engines (simulations, etc). Total war is there which is a step forward but I'm not sure if that benchmark really measures simulation engine performance.

If you take games such as Sins of a Solar Empire or Supreme Commander then they have a separate thread for graphics so tend to maintain a decent frame rate even when the game engine runs at a crawl. The more units you add to the map and the more that is going on the slower it goes. But this is not in FPS. It means that ordering a ship across the solar system might take 10 s when there are 1000 units in the game but 5 minutes when there are 100000 units in the game. I would love to see some benchmarks measuring engine performance of games such as this with the unit limits greatly increased. It is a bit of a niche but many sim games (RTS, etc) scale naturally which means you can increase the unit limit, map size, AI difficulty, number of AIs, etc as your hardware becomes more powerful.

This is especially relevant with CPUs such as the broadwell which might gain a big advantage each game loop processing the very large simulation engine dataset.

systemBuilder - Tuesday, July 19, 2016

Wow your review really sucked. Where are the benchmarks for the i5-6600k? Did you forget?

POPCORNS - Friday, August 19, 2016

To me, It doesn't matter if there's no IPC improvement over Sandy Bridge, Ivy Bridge or Haswell, because I've upgraded from a Wolfdale Celeron (E3300) to a Skylake (6700K), lol.

oranos - Thursday, December 29, 2016

This article seems to be confused. DDR4 brings more sustained framerates for higher resolutions (especially 4k). Really a waste of time doing a 1080p comparison.

oranos - Thursday, December 29, 2016

if you wanted to do a proper test for DDR4 gaming performance you should run a 6700K and GTX 1080 minimum and run multiple games in 4K for testing.