The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

Total War: Attila

The second strategy game in our benchmark suite, Total War: Attila is the latest game in the Total War franchise. Total War games have traditionally been a mix of CPU and GPU bottlenecks, so it takes a good system on both ends of the equation to do well here. In this case the game comes with a built-in benchmark that plays out over a large area with a fortress in the middle, making it a good GPU stress test.

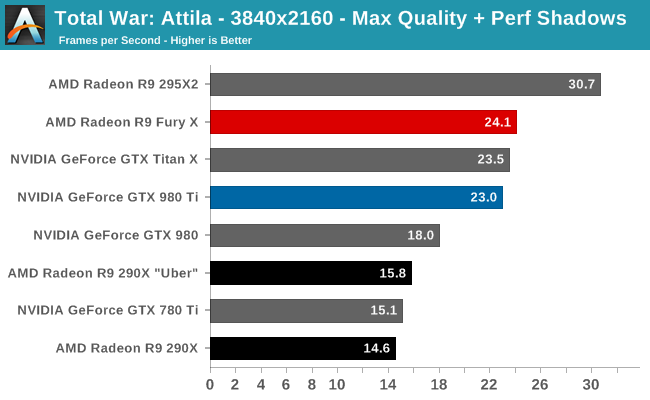

Attila is the third win in a row for AMD at 4K. Here the R9 Fury X beats the GTX 980 Ti by 5% at the Max quality setting. However, as this benchmark is very forward looking (read: ridiculously GPU intensive), the actual performance at 4K Max isn’t very good. No single-GPU card can average 30fps here, and framerates will easily dip below 20fps. Since this is a strategy game we don’t have the same high bar for performance requirements, but sub-30fps still won’t cut it.

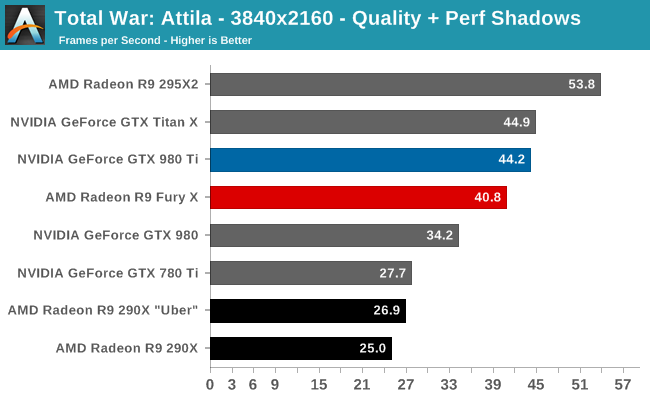

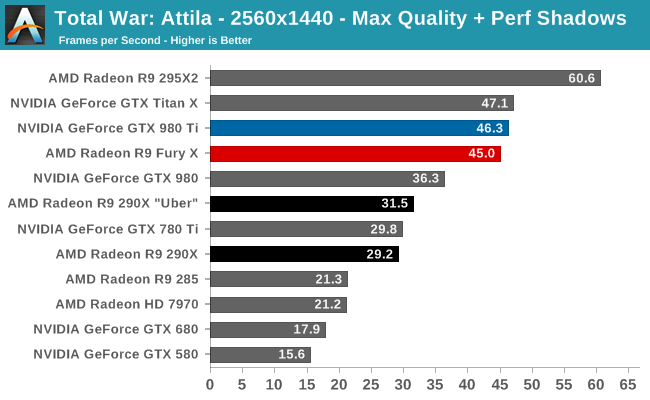

That means we have to compromise on either quality or resolution, and in either case AMD’s lead dissolves. At 4K Quality and 1440p Max, the R9 Fury X trails the GTX 980 Ti by 8% and 3% respectively. The 1440p results are actually still a good showing, but given AMD’s push for 4K, losing to the GTX 980 Ti by a larger margin at the resolution they favor is a bit embarrassing.

Meanwhile, Attila has always seemed to love pushing shaders more than anything else, so it comes as no great surprise that this game is a strong showing for the R9 Fury X relative to its predecessor. The performance gains at 4K are a consistent 52%, right at the top-end of our performance expectation window, and a bit smaller (but still impressive) 43% at 1440p.

458 Comments

bennyg - Saturday, July 4, 2015 - link

Marketing performance. Exactly.

Except efficiency was not good enough across the generations of 28nm GCN in an era where efficiency + thermal/power limits constrain performance, and look what Nvidia did over a similar era from Fermi (which was at market when GCN 1.0 was released) to Kepler to Maxwell. Plus efficiency is kind of the ultimate marketing buzzword in all areas of tech, and not having any ability to mention it (plus having generally inferior products) hamstrung their marketing all along.

xenol - Monday, July 6, 2015 - link

Efficiency is important because of three things:

1. If your TDP is through the roof, you'll have issues with your cooling setup. Any time you introduce a bigger cooling setup because your cards run that hot, you're going to be mocked for it and people are going to be wary of it. With 22nm or 20nm nowhere in sight for GPUs, efficiency had to be a priority, otherwise you're going to ship cards that take up three slots or ship with water coolers.

2. You also can't just play to the desktop market. Laptops are still the preferred computing platform, and even if people are going for a desktop, AIOs are looking much more appealing than a monitor/tower combo. So if you want to have any shot in either market, you have to build an efficient chip. And you have to convince people they "need" this chip, because Intel's iGPUs do what most people want just fine anyway.

3. Businesses and such with "always on" computers would like it if their computers ate less power. Even if you only save a handful of watts, multiply that by thousands and it adds up to an appreciable amount of savings.

xenol - Monday, July 6, 2015 - link

(Also by "computing platform" I mean the platform people choose when they want a computer)

medi03 - Sunday, July 5, 2015 - link

ATI is the reason both Microsoft and Sony use AMD's APUs to power their consoles.

It might be the reason why APUs even exist.

tipoo - Thursday, July 2, 2015 - link

That was then, this is now. Now AMD, together with the acquisition, has a lower market cap than Nvidia.

Murloc - Thursday, July 2, 2015 - link

yeah, no.

ddriver - Thursday, July 2, 2015 - link

ATI wasn't bigger, AMD just paid a preposterous and entirely unrealistic amount of money for it. Soon after the merger, AMD + ATI was worth less than what they paid for the latter, ultimately leading to the loss of its foundries, putting it in an even worse position. Let's face it, AMD was, and historically has always been, betrayed; its sole purpose is to create the illusion of competition so that the big boys don't look bad for running unopposed, even if this is what happens in practice.

Just when AMD got lucky with Athlon, a mole was sent to make sure AMD stays down.

testbug00 - Sunday, July 5, 2015 - link

Foundries didn't go because AMD bought ATI. That might have accelerated it by a few years, however.

The foundry issue and its cost to AMD date back to the 1990s and 2000-2001.

5150Joker - Thursday, July 2, 2015 - link

True, AMD was at a much better position in 2006 vs NVIDIA, they just got owned.

3DVagabond - Friday, July 3, 2015 - link

When was Intel the underdog? Because that's who's knocked them down (They aren't out yet.).