The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

Middle Earth: Shadow of Mordor

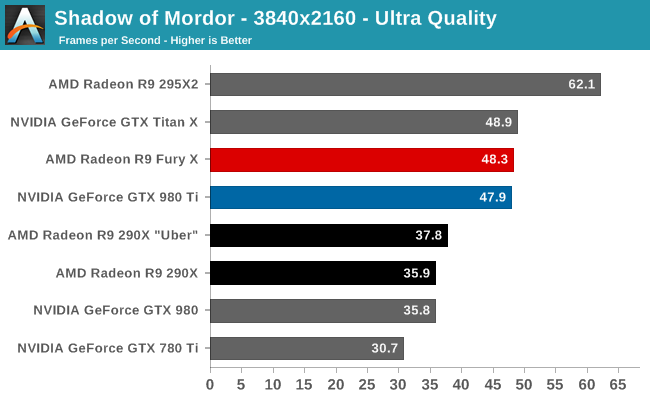

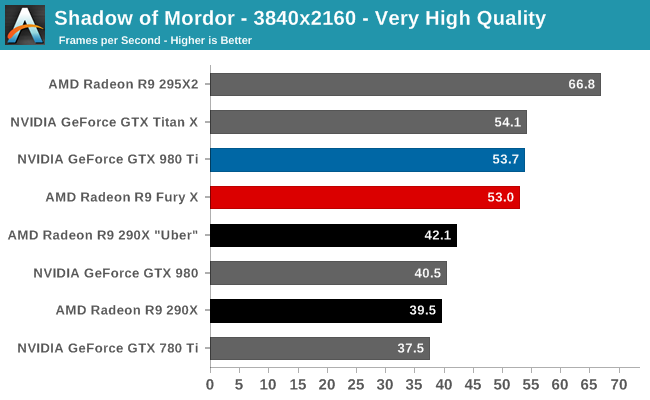

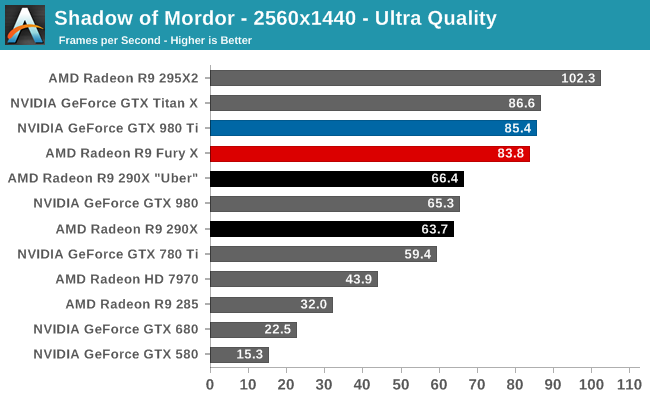

Our next benchmark is Monolith’s popular open-world action game, Middle Earth: Shadow of Mordor. One of our current-gen console multiplatform titles, Shadow of Mordor is plenty punishing on its own, and at Ultra settings it absolutely devours VRAM, showcasing the knock-on effect that current-gen consoles have on VRAM requirements.

With Shadow of Mordor things finally start looking up for AMD, as the R9 Fury X scores its first win. Okay, it’s more of a tie than a win, but it’s farther than the R9 Fury X has made it so far.

At 4K with Ultra settings the R9 Fury X manages an average of 48.3fps, a virtual tie with the GTX 980 Ti and its 47.9fps. Dropping down to Very High quality does see AMD pull back just a bit, but with a difference between the two cards of just 0.7fps, it’s hardly worth worrying about. Even 2560 looks good for AMD here, trailing the GTX 980 Ti by just over 1fps, at an average framerate of over 80fps. Overall the R9 Fury X delivers 98% to 101% of the performance of the GTX 980 Ti, more or less tying the direct competitor to AMD’s latest card.
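For readers who want to check the relative performance arithmetic, here is a minimal sketch using only the two 4K Ultra averages quoted above. The function name `relative_performance` is ours, chosen for illustration; the other resolutions are left out because their exact averages are not quoted in the text.

```python
def relative_performance(card_fps: float, baseline_fps: float) -> float:
    """Return card_fps expressed as a percentage of baseline_fps."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return 100.0 * card_fps / baseline_fps


# 4K Ultra average framerates as quoted in the text above.
fury_x_4k_ultra = 48.3
gtx_980_ti_4k_ultra = 47.9

ratio = relative_performance(fury_x_4k_ultra, gtx_980_ti_4k_ultra)
print(f"R9 Fury X at 4K Ultra: {ratio:.1f}% of the GTX 980 Ti")
# prints "R9 Fury X at 4K Ultra: 100.8% of the GTX 980 Ti"
```

Rounded to a whole number this is the 101% figure at the top of the quoted range.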

Meanwhile compared to the R9 290X, the R9 Fury X doesn’t see quite the same gains. Performance is a fairly consistent 26-28% ahead of the R9 290X, less than what we’ve seen elsewhere. Earlier we discussed how the R9 Fury X’s performance gains will depend on which part of the GPU is getting stressed the most; tasks that stress the shaders show the most gains, and tasks that stress geometry or the ROPs potentially show the lowest gains. In the case of Shadow of Mordor, I believe performance is at least partially limited by geometry or ROP throughput.

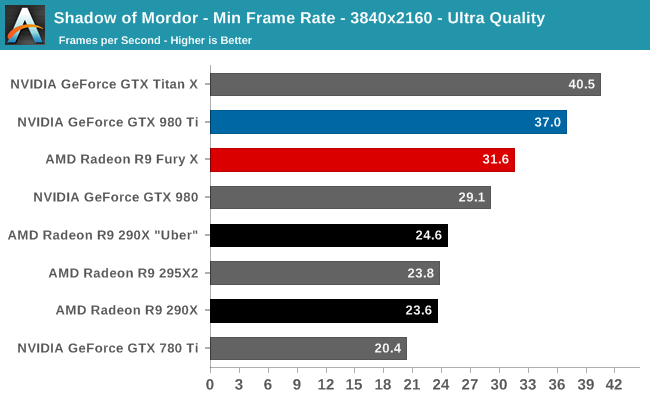

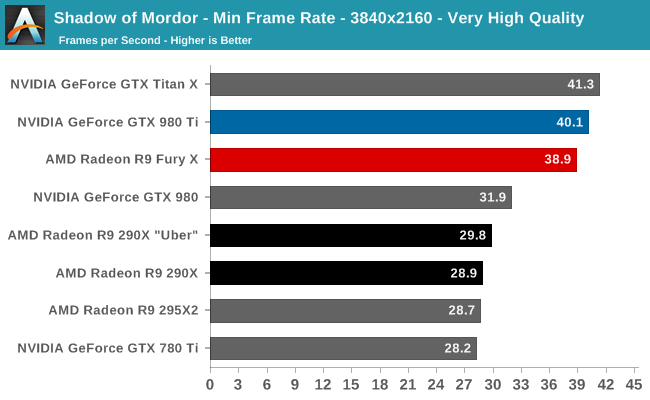

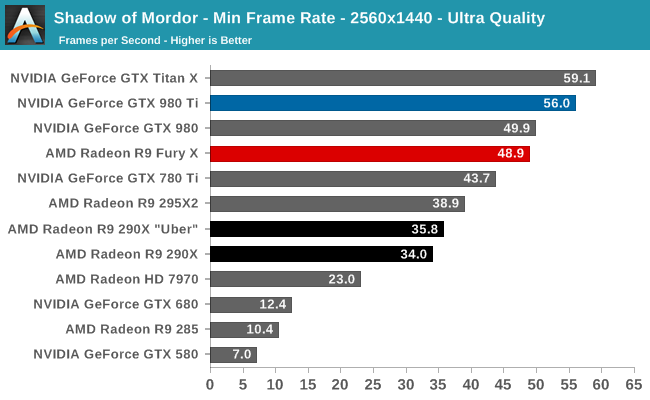

Unfortunately for AMD, the minimum framerate situation isn’t quite as good as the averages. These framerates aren’t bad – the R9 Fury X is always over 30fps – but even accounting for the higher variability of minimum framerates, they’re trailing the GTX 980 Ti by 13-15% with Ultra quality settings. Interestingly at 4K with Very High quality settings the minimum framerate gap is just 3%, in which case what we are most likely seeing is the impact of running Ultra settings with only 4GB of VRAM. The 4GB cards don’t get punished too much for it, but the R9 Fury X and its 4GB of HBM are beginning to crack under the pressure of what is admittedly one of our more VRAM-demanding games.

458 Comments

Scali - Tuesday, July 7, 2015 - link

Even better, there are various vendors that sell a short version of the GTX970 (including Asus and Gigabyte for example), so it can take on the Nano card directly, as a good choice for a mini-ITX based HTPC.

And unlike the Nano, the 970 DOES have HDMI 2.0, so you can get 4k 60 Hz on your TV.

Oxford Guy - Thursday, July 9, 2015 - link

28 GB/s + XOR contention is fast performance indeed, at half the speed of a midrange card from 2007.

Gothmoth - Monday, July 6, 2015 - link

so in short another BULLDOZER.... :-(

after all the hype not enough and too late.

i agree the card is not bad.. but after all the HYPE it IS a disappointment.

OC results are terrible... and AMD said it will be an overclockers dream.

add to that that i read many complains about the noisy watercooler (yes for retail versions not early preview versions).

iamserious - Monday, July 6, 2015 - link

It looks ugly. Lol

iamserious - Monday, July 6, 2015 - link

Also. I understand it's a little early but I thought this card was supposed to blow the GTX 980Ti out of the water with it's new memory. The performance to price ratio is decent but I was expecting a bit larger jump in performance increase. Perhaps with the driver updates things will change.

Scali - Tuesday, July 7, 2015 - link

Hum, unless I missed it, I didn't see any mention of the fact that this card only supports DX12 level 12_0, where nVidia's 9xx-series support 12_1.

That, combined with the lack of HDMI 2.0 and the 4 GB limit, makes the Fury X into a poor choice for the longer term. It is a dated architecture, pumped up to higher performance levels.

FMinus - Tuesday, July 7, 2015 - link

Whilst it's beyond me why they skimped on HDMI 2.0 - there's adapters if you really want to run this card on a TV. It's not such a huge drama tho, the cards will drive DP monitors in the vast majority, so, I'm much more sad at the missing DVI out.

Scali - Wednesday, July 8, 2015 - link

I think the reason why there's no HDMI 2.0 is simple: they re-used their dated architecture, and did not spend time on developing new features, such as HDMI 2.0 or 12_1 support.

With nVidia already having this technology on the market for more than half a year, AMD is starting to drop behind. They were losing sales to nVidia, and their new offerings don't seem compelling enough to regain their lost marketshare, hence their profits will be limited, hence their investment in R&D for the next generation will be limited. Which is a problem, since they need to invest more just to get where nVidia already is.

It looks like they may be going down the same downward spiral as their CPU division.

sa365 - Tuesday, July 7, 2015 - link

Well at least AMD aren't cheating by allowing the driver to remove AF despite what settings are selected in game. Just so they can win benchmarks.

How about some fair, like for like benchmarking and see where these cards really stand.

FourEyedGeek - Tuesday, July 7, 2015 - link

As for the consoles having 8 GB of RAM, not only is that shared, but the OS uses 3 GB to 3.5 GB, meaning there is only a max of 5 GB for the games on those consoles. A typical PC being used with this card will have 8 to 16 GB plus the 4 GB on the card. Giving a total of 12 GB to 20 GB.

In all honesty at 4K resolutions, how important is Anti-Aliasing on the eye? I can't imagine it being necessary at all, let alone 4xMSAA.