The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

HBM: The 4GB Question

Having taken a look at HBM from a technical perspective, there’s one final matter to address with Fiji’s implementation of HBM, and that is the matter of capacity.

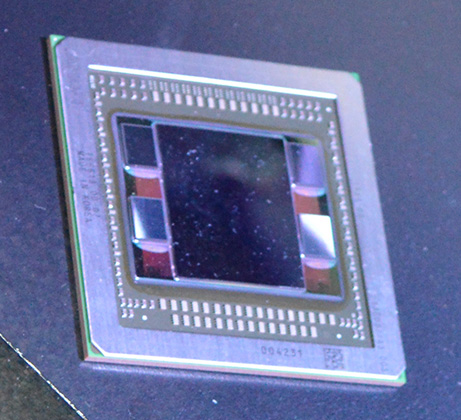

For HBM1, the maximum capacity of an HBM stack is 1GB, which in turn is made possible through the use of four 256MB (2Gb) memory dies. With a 1GB/stack limit, this means that AMD can only equip the R9 Fury X and its siblings with 4GB of VRAM when using 4 stacks. Larger stacks are not possible, and while in principle it would be possible to do an 8 stack HBM1 design, doing so would double the width of the memory bus and invite a whole slew of issues with it at the same time. Ultimately for reasons ranging from interposers to where to place the stacks, the most AMD can get out of HBM1 is 4GB of VRAM.
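The capacity arithmetic above can be written out as a short sketch. The constants come straight from the paragraph; the function names are illustrative only, and the 8 stack case is the hypothetical design the text rules out, not a real product.

```python
# Capacity arithmetic for HBM1 as described in the text.
DIE_CAPACITY_GBIT = 2   # each memory die is 2Gb
DIES_PER_STACK = 4      # four dies per HBM1 stack
STACKS_ON_FIJI = 4      # Fiji uses four stacks


def gbit_to_mb(gbit: int) -> int:
    """Convert gigabits to megabytes (8 bits per byte, 1024 Mb per Gb)."""
    return gbit * 1024 // 8


def total_vram_gb(die_gbit: int, dies_per_stack: int, stacks: int) -> float:
    """Total capacity in GB for a given die size, stack height, and stack count."""
    stack_mb = gbit_to_mb(die_gbit) * dies_per_stack
    return stack_mb * stacks / 1024


if __name__ == "__main__":
    print(gbit_to_mb(DIE_CAPACITY_GBIT))  # MB per die
    print(total_vram_gb(DIE_CAPACITY_GBIT, DIES_PER_STACK, STACKS_ON_FIJI))
    # Hypothetical 8 stack design: more capacity, but double the bus width.
    print(total_vram_gb(DIE_CAPACITY_GBIT, DIES_PER_STACK, 8))
```

With die density and stack height fixed by HBM1, the only remaining lever is the stack count, which is why capacity and bus width are tied together here.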

To address the elephant in the room then, the question arises of whether 4GB is going to be enough VRAM. 4GB is as much VRAM as was on the R9 290X in 2013, and it’s as much VRAM as was on the GTX 980 in 2014. But it’s also less VRAM than the 6GB that is on the GTX 980 Ti in 2015 (never mind the GTX Titan X at this point) and it’s less VRAM than the 8GB that is on the just-launched R9 390X. Even ignoring NVIDIA for a moment, R9 Fury X offers less VRAM than AMD’s next-lower tier of video cards.

This is quite a bit of a role reversal in the video card industry, as traditionally AMD has offered more VRAM than the competition. Thanks in large part to their favoring wider memory buses (which means more memory chips), AMD has offered greater memory capacities at similar prices than the traditionally stingy NVIDIA. Now however the shoe is on the other foot, and the timing is not all that great.

Console Memory Capacity

| Platform | Capacity |
| --- | --- |
| Xbox 360 | 512MB (Shared) |
| Playstation 3 | 256MB + 256MB |
| Xbox One | 8GB (Shared) |
| Playstation 4 | 8GB (Shared) |
| Fiji | 4GB (Dedicated VRAM) |

Perhaps the single biggest influence here over VRAM requirements right now is the current-generation consoles, which launched back in 2013 with 8GB of RAM each. To be fair to AMD and to be technically correct these are shared memory devices, so that 8GB gets split between GPU resources and CPU resources, and even this comes after Microsoft and Sony set aside a significant amount of memory for their OSes and background tasks. Still, when using the current-gen consoles as a baseline, the current situation makes it possible to build a game that requires over 4GB of VRAM (if only just over), and if that game is naïvely ported over to the PC, there could be issues.

Throwing an extra wrench into things is that PCs have more going on than just console games. PC gamers buying high-end cards like the R9 Fury X will be running at resolutions greater than 1080p and likely with higher quality settings than the console equivalent, driving up the VRAM requirements. The Windows Desktop Window Manager responsible for rendering and compositing the different windows together in 3D space consumes its own VRAM as well. So the current PC situation pushes VRAM requirements higher still.
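To put a rough number on how resolution and anti-aliasing drive memory use, the sketch below estimates the size of a single render target. It assumes 4 bytes per sample (for example a 32-bit color format) and a simple width × height × samples model. Real games allocate many such targets, and textures typically dominate VRAM use, so this is an illustration of the scaling rather than a measurement of any particular game.

```python
def render_target_mib(width: int, height: int,
                      bytes_per_sample: int = 4,
                      msaa_samples: int = 1) -> float:
    """Approximate size of one render target in MiB.

    Assumes every pixel stores `msaa_samples` samples of
    `bytes_per_sample` bytes each, with no compression or padding.
    """
    return width * height * bytes_per_sample * msaa_samples / (1024 ** 2)


if __name__ == "__main__":
    for label, (w, h) in {"1080p": (1920, 1080), "4K": (3840, 2160)}.items():
        for samples in (1, 4):
            size = render_target_mib(w, h, msaa_samples=samples)
            print(f"{label} @ {samples}x MSAA: {size:.1f} MiB per target")
```

Under these assumptions a 4K target is four times the size of a 1080p one, and 4x MSAA multiplies that by four again.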

The reality of the situation is that AMD knows where they stand. 4GB is the most they can equip Fiji with, so it’s what they will have to make do with until HBM2 comes along with greater densities. In the meantime the marketing side of AMD needs to convince potential buyers that 4GB is enough, and the technical side of AMD needs to come up with other solutions to help mitigate the problem.

On the latter point, while AMD can’t do anything about the amount of VRAM they have, they can and are working on doing a better job of using it. AMD has been rather straightforward in admitting that up until now they’ve never seriously dedicated resources to VRAM management on their cards, as they’ve always had enough VRAM that they have never considered it an issue. Until Fiji there was always enough VRAM.

Which is why for Fiji, AMD tells us they have dedicated two engineers to the task of VRAM optimizations. To be clear here, there’s little AMD can do to reduce VRAM consumption, but what they can do is better manage what resources are placed in VRAM and what resources are paged out to system RAM. Even this optimization can’t completely resolve the 4GB issue, but it can help up to a point. So long as a game isn’t actively trying to use all 4GB of resources at once, then intelligent paging can help ensure that only the resources that are actively in use reside in VRAM and therefore are immediately available to the GPU when requested.
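The paging idea can be illustrated with a toy model. The sketch below is not AMD's driver logic, which is not described in any detail here. It is a minimal least-recently-used (LRU) residency manager, with a hypothetical class name and made-up resource sizes, showing how a working set smaller than VRAM stays resident while idle resources are paged out to system RAM.

```python
from collections import OrderedDict


class VramResidencyManager:
    """Toy LRU model: recently used resources stay in VRAM, the rest page out."""

    def __init__(self, capacity_mb: int):
        self.capacity_mb = capacity_mb
        self.resident = OrderedDict()  # name -> size in MB, oldest first
        self.paged_out = {}            # name -> size in MB, held in system RAM
        self.page_ins = 0              # transfers back into VRAM

    def used_mb(self) -> int:
        return sum(self.resident.values())

    def touch(self, name: str, size_mb: int) -> None:
        """Mark a resource as in use, making it resident if it is not already."""
        if size_mb > self.capacity_mb:
            raise ValueError(f"{name} can never fit in VRAM")
        if name in self.resident:
            self.resident.move_to_end(name)  # now the most recently used
            return
        if name in self.paged_out:
            del self.paged_out[name]
            self.page_ins += 1               # costs a trip over the PCIe bus
        # Evict least recently used resources until the new one fits.
        while self.used_mb() + size_mb > self.capacity_mb:
            victim, victim_size = self.resident.popitem(last=False)
            self.paged_out[victim] = victim_size
        self.resident[name] = size_mb


if __name__ == "__main__":
    vram = VramResidencyManager(capacity_mb=4096)
    for name, size in [("level_a", 2048), ("level_b", 1536),
                       ("ui", 1024), ("level_a", 2048)]:
        vram.touch(name, size)
    print("resident:", list(vram.resident), "page-ins:", vram.page_ins)
```

The model also shows the limit the paragraph describes: if everything is touched every frame, each access evicts something that is about to be needed again, and the page-in count climbs with no benefit.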

As for the overall utility of this kind of optimization, it’s going to depend on a number of factors, including the OS, the game’s own resource management, and ultimately the real working set needs of a game. The situation AMD faces right now is one where they have to simultaneously fight an OS/driver paradigm that wastes memory, and the games that will be running on their GPUs traditionally treat VRAM like it’s going out of style. The limitations of DirectX 11/WDDM 1.x prevent full reuse of certain types of assets by developers, and all the while it’s extremely common for games to claim much (if not all) available VRAM for their own use with the intent of ensuring they have enough VRAM for future use, and otherwise caching as many resources as possible for better performance.

The good news here is that the current situation leaves overhead that AMD can optimize around. AMD has been creating both generic and game-specific memory optimizations in order to better manage VRAM usage and what resources are held in local VRAM versus paging out to system memory. By controlling duplicate resources and clamping down on overzealous caching by games, it is possible to get more mileage out of the 4GB of VRAM AMD has.

Longer term, AMD is looking at the launch of Windows 10 and DirectX 12 to change the situation for the better. The low-level API will allow careful developers to avoid duplicate assets in the first place, and WDDM 2.0 overall is said to be a bit nicer about how it handles VRAM consumption. Nonetheless the first DirectX 12 games aren’t launching for a few more months, and it will be longer still until those games are in the majority. As a result the situation AMD faces is one where they need to do well with Windows 8.1 and DirectX 11 games, as those games aren’t going anywhere right away and they will be the games that stress Fiji the most.

So with that in mind, let’s attempt to answer the question at hand: is 4GB enough VRAM for R9 Fury X? Is it enough for a $650 card?

The short answer is yes, at the moment it’s enough, if just barely.

To be clear, we can without fail “break” the R9 Fury X and place it in situations where performance nosedives because it has run out of VRAM. However of the tests we’ve put together, those cases are essentially edge cases; any scenario we come up with that breaks the R9 Fury X also results in average framerates that are too low to be playable in the first place. So it is very difficult (though I do not believe impossible) to come up with a scenario where the R9 Fury X would produce playable framerates if only it had more VRAM.

Case in point, in our current gaming test suite Shadow of Mordor and Grand Theft Auto V are the two most VRAM-hungry games. Attempting to break the R9 Fury X with Shadow of Mordor is ineffective at best; even with the HD texture pack installed (which is not the default for our test suite) the game’s built-in benchmark hardly registers a difference. Both the average and minimum framerates are virtually unchanged from our results without the HD texture pack. Meanwhile playing the game is much the same, though it’s entirely possible there are scenarios in the game not covered by our play testing or the benchmark where more than 4GB of VRAM is truly required.

Breaking Fiji: VRAM Usage Testing

| Test | R9 Fury X | GTX 980 Ti |
| --- | --- | --- |
| Shadow of Mordor Ultra, Avg | 47.7 fps | 49 fps |
| Shadow of Mordor Ultra, Min | 31.6 fps | 38 fps |
| GTA V, "Breaker", Avg | 21.7 fps | 26.2 fps |
| GTA V, "Breaker", 99th Perc. | 6 fps | 17.8 fps |

Meanwhile with GTA V we can break the R9 Fury X, but only at unplayable settings. The card already teeters on the brink with our standard 4K “Very High” settings, which include 4x MSAA but no “advanced” draw distance enhancements, with minimum framerates well below the GTX 980 Ti. Turning up the draw distance in turn further halves those minimums, driving the minimum framerate to 6fps as the R9 Fury X is forced to swap between VRAM and system RAM over the very slow PCIe bus.

But in both of these cases the average framerate is below 30fps (never mind 60fps), and not just for the R9 Fury X, but for the GTX 980 Ti as well. No scenario we’ve tried that breaks the R9 Fury X leaves it or the GTX 980 Ti running a game at 30fps or better, typically because in order to break the R9 Fury X we have to run with MSAA, which is itself a performance killer.
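The reasoning here can be made explicit with the figures from the VRAM usage testing table. The sketch below treats a card as "broken" when its low framerate (minimum or 99th percentile) falls below half of its average, and treats a break as meaningful only if some card in the comparison still averages 30fps or better. The 0.5 ratio is an assumption chosen for illustration, not a threshold used in our testing. The 30fps floor is the playability bar discussed above.

```python
PLAYABLE_FPS = 30.0  # playability floor discussed in the text
BREAK_RATIO = 0.5    # assumption: low/avg below this counts as "broken"

# (average fps, low fps) per card, from the VRAM usage testing table.
# "Low" is the minimum for Shadow of Mordor and the 99th percentile for GTA V.
RESULTS = {
    "Shadow of Mordor Ultra": {
        "R9 Fury X": (47.7, 31.6),
        "GTX 980 Ti": (49.0, 38.0),
    },
    'GTA V "Breaker"': {
        "R9 Fury X": (21.7, 6.0),
        "GTX 980 Ti": (26.2, 17.8),
    },
}


def is_broken(avg: float, low: float, ratio: float = BREAK_RATIO) -> bool:
    """True if the low framerate collapses relative to the average."""
    return low / avg < ratio


def meaningful_break(test: str, card: str = "R9 Fury X") -> bool:
    """True if `card` breaks in a test where some card is still playable."""
    cards = RESULTS[test]
    avg, low = cards[card]
    best_avg = max(a for a, _ in cards.values())
    return is_broken(avg, low) and best_avg >= PLAYABLE_FPS


if __name__ == "__main__":
    for test in RESULTS:
        print(f"{test}: meaningful break = {meaningful_break(test)}")
```

Under these assumptions neither test counts as a meaningful break: Shadow of Mordor never collapses on the R9 Fury X, and the GTA V "Breaker" settings leave both cards averaging under 30fps.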

Unfortunately for AMD they are pushing the R9 Fury X as a 4K gaming card, and for a good reason. AMD’s performance traditionally scales better with resolution (i.e. deteriorates more slowly), so AMD’s best chance of catching up to NVIDIA is at 4K. However this also stresses R9 Fury X’s 4GB of VRAM all the more, which puts them in VRAM-limited situations all the sooner. It’s not quite a catch-22 situation, but it’s also not a situation AMD is going to want to be in.

Ultimately even at 4K AMD is okay for the moment, but only just. If VRAM requirements increase any more than they already have – if games start requiring 6-8GB at the very high end – then the R9 Fury X (and every other 4GB card for that matter) is going to be in trouble. And in the meantime anything more demanding than 4K, be it multi-monitor setups or 5K displays, is going to exacerbate the problem.

AMD believes their situation will get better with Windows 10 and DirectX 12, but until DX12 games actually come out in large numbers, all we can do is look at the kind of games we have today. And right now what we’re seeing are signs that the 4GB era is soon to come to an end. 4GB is enough right now, but I suspect 4GB cards now have less than 2 years to go until they’re undersized, which is a difficult situation to be in for a $650 video card.

458 Comments

chizow - Friday, July 3, 2015 - link

Pretty much, AMD supporters/fans/apologists love to parrot the meme that Intel hasn't innovated since original i7 or whatever, and while development there has certainly slowed, we have a number of 18 core e5-2699v3 servers in my data center at work, Broadwell Iris Pro iGPs that handily beat AMD APU and approach low-end dGPU perf, and ultrabooks and tablets that run on fanless 5W Core M CPUs. Oh, and I've also managed to find meaningful desktop upgrades every few years for no more than $300 since Core 2 put me back in Intel's camp for the first time in nearly a decade.

looncraz - Friday, July 3, 2015 - link

None of what you stated is innovation, merely minor evolution. The core design is the same, gaining only ~5% or so IPC per generation, same basic layouts, same basic tech. Are you sure you know what "innovation" means?

Bulldozer modules were an innovative design. A failure, but still very innovative. Pentium Pro and Pentium 4 were both innovative designs, both seeking performance in very different ways.

Multi-core CPUs were innovative (AMD), HBM is innovative (AMD+Hynix), multi-GPU was innovative (3dfx), SMT was innovative (IBM, Alpha), CPU+GPU was innovative (Cyrix, IIRC)... you get the idea.

Doing the exact same thing, more or less the exact same way, but slightly better, is not innovation.

chizow - Sunday, July 5, 2015 - link

Huh? So putting Core level performance in a passive design that is as thin as a legal pad and has 10 hours of battery life isn't innovation?

Increasing iGPU performance to the point it not only provides top-end CPU performance, and close to dGPU performance, while convincingly beating AMD's entire reason for buying ATI, their Fusion APUs isn't innovation?

And how about the data center where Intel's *18* core CPUs are using the same TDP and sockets, in the same U rack units as their 4 and 6 core equivalents of just a few years ago?

Intel is still innovating in different ways, that may not directly impact the desktop CPU market but it would be extremely ignorant to claim they aren't addressing their core growth and risk areas with new and innovative products.

I've bought more Intel products in recent years vs. prior strictly because of these new innovations that are allowing me to have high performance computing in different form factors and use cases, beyond being tethered to my desktop PC.

looncraz - Friday, July 3, 2015 - link

Show me intel CPU innovations since after the pentium 4.

Mind you, innovations can be failures, they can be great successes, or they can be ho-hum.

P6->Core->Nehalem->Sandy Bridge->Haswell->Skylake

The only changes are evolutionary or as a result of process changes (which I don't consider CPU innovations).

This is not to say that they aren't fantastic products - I'm rocking an i7-2600k for a reason - they just aren't innovative products. Indeed, nVidia's Maxwell is a wonderfully designed and engineered GPU, and products based on it are of the highest quality and performance. That doesn't make them innovative in any way. Nothing technically wrong with that, but I wonder how long before someone else came up with a suitable RAM just for GPUs if AMD hadn't done it?

chizow - Sunday, July 5, 2015 - link

I've listed them above and despite slowing the pace of improvements on the desktop CPU side you are still looking at 30-45% improvement clock for clock between Nehalem and Haswell, along with pretty massive improvements in stock clock speed. Not bad given they've had literally zero pressure from AMD. If anything, Intel dominating in a virtual monopoly has afforded me much cheaper and consistent CPU upgrades, all of which provided significant improvements over the previous platform:

E6600 $284

Q6600 $299

i7 920 $199!

i7 4770K $229

i7 5820K $299

All cheaper than the $450 AMD wanted for their ENTRY level Athlon 64 when they finally got the lead over Intel, which made it an easy choice to go to Intel for the first time in nearly a decade after AMD got Conroe'd in 2006.

silverblue - Monday, July 6, 2015 - link

I could swear that you've posted this before.

I think the drop in prices were more of an attempt to strangle AMD than anything else. Intel can afford it, after all.

chizow - Monday, July 6, 2015 - link

Of course I've posted it elsewhere because it bears repeating, the nonsensical meme AMD fanboys love to parrot about AMD being necessary for low prices and strong competition is a farce. I've enjoyed unparalleled stability at a similar or higher level of relative performance in the years that AMD has become UNCOMPETITIVE in the CPU market. There is no reason to expect otherwise in the dGPU market.

zoglike@yahoo.com - Monday, July 6, 2015 - link

Really? Intel hasn't innovated? I really hope you are trolling because if you believe that I fear for you.

chizow - Thursday, July 2, 2015 - link

Let's not also discount the fact that's just stock comparisons, once you overclock the cards as many are interested in doing in this $650 bracket, especially with AMD's claims Fury X is an "Overclocker's Dream", we quickly see the 980Ti cannot be touched by Fury X, water cooler or not.

Fury X wouldn't have been the failure it is today if not for AMD setting unrealistic and ultimately, unattained expectations. 390X WCE at $550-$600 and it's a solid alternative. $650 new "Premium" Brand that doesn't OC at all, has only 4GB, has pump whine issues and is slower than Nvidia's same priced $650 980Ti that launched 3 weeks before it just doesn't get the job done after AMD hyped it from the top brass down.

andychow - Thursday, July 2, 2015 - link

Yeah, "Overclocker's dream", only overclocks by 75 MHz. Just by that statement, AMD has totally lost me.