The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

Compute

Shifting gears, we have our look at compute performance. On the FP64 side, the R9 Fury X offers only the bare minimum FP64 rate for a GCN product, so we won’t see anything great there. On the other hand, with a theoretical FP32 performance of 8.6 TFLOPS, AMD could really clean house on our more regular FP32 workloads.

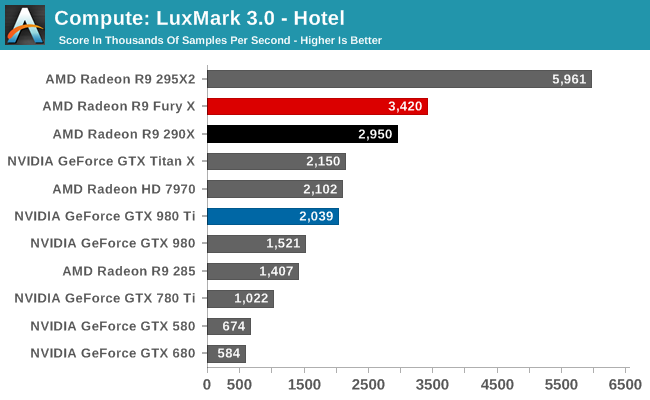

Starting us off for our look at compute is LuxMark 3.0, the latest version of the official benchmark of LuxRender 2.0. LuxRender’s GPU-accelerated rendering mode is an OpenCL-based ray tracer that forms a part of the larger LuxRender suite. Ray tracing has become a stronghold for GPUs in recent years because it maps well to GPU pipelines, allowing artists to render scenes much more quickly than with CPUs alone.

The results with LuxMark ended up being quite a surprise, and not for a good reason. Compute workloads are shader workloads, and these are the workloads that should best illustrate the performance improvements of the R9 Fury X over the R9 290X. And yet while the R9 Fury X is the fastest single-GPU AMD card, it’s only some 16% faster, a far cry from the 50%+ that it should be able to attain.

Right now I have no reason to doubt that the R9 Fury X is capable of utilizing all of its shaders. It just can’t do so very well with LuxMark. Given the fact that the R9 Fury X is first and foremost a gaming card, and OpenCL 1.x traction continues to be low, I am wondering whether we’re seeing a lack of OpenCL driver optimizations for Fiji.
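As a rough check on where the 50%+ expectation comes from, theoretical FP32 throughput can be sketched from shader count and clock speed. The shader counts and clocks below are assumptions taken from AMD's public specifications, not from this page, and the function name is purely illustrative.

```python
def peak_fp32_tflops(shaders: int, clock_mhz: float) -> float:
    """Peak FP32 rate, assuming one fused multiply-add (2 FLOPs) per shader per clock."""
    return shaders * 2 * clock_mhz * 1e6 / 1e12

# Assumed specs (public AMD spec sheets, not stated in this article):
# R9 Fury X: 4096 shaders at 1050 MHz; R9 290X: 2816 shaders at 1000 MHz.
fury_x = peak_fp32_tflops(4096, 1050)
r9_290x = peak_fp32_tflops(2816, 1000)

# Theoretical uplift of the Fury X over the 290X, as a fraction.
uplift = fury_x / r9_290x - 1

print(f"R9 Fury X: {fury_x:.2f} TFLOPS")
print(f"R9 290X:   {r9_290x:.2f} TFLOPS")
print(f"Theoretical uplift: {uplift:.0%}")
```

Under these assumptions the paper uplift lands a little above 50%, which is the yardstick the 16% LuxMark gain is being measured against. Peak figures ignore memory bandwidth, occupancy, and driver overhead, so real workloads rarely reach them.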

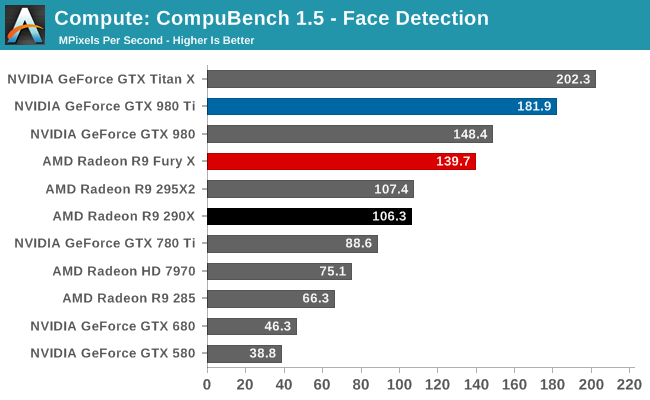

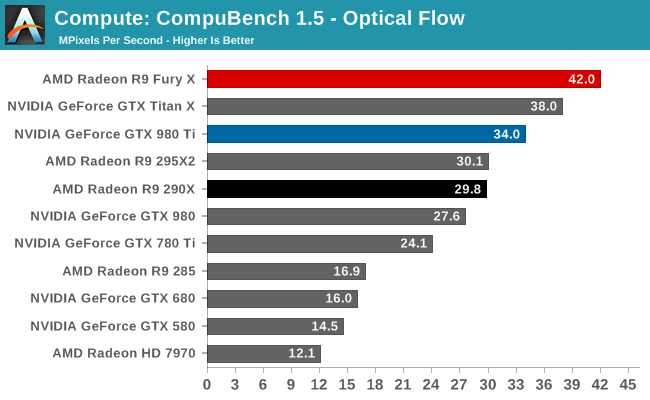

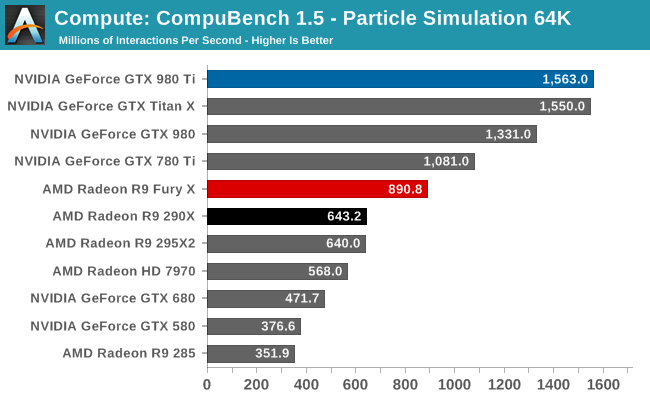

For our second set of compute benchmarks we have CompuBench 1.5, the successor to CLBenchmark. CompuBench offers a wide array of different practical compute workloads, and we’ve decided to focus on face detection, optical flow modeling, and particle simulations.

Quickly taking some of the air out of our driver theory, the R9 Fury X’s performance on CompuBench is quite a bit better, and much closer to what we’d expect given its hardware. The Fury X only wins overall at Optical Flow, a somewhat memory-bandwidth-heavy test that unsurprisingly favors AMD’s addition of HBM, but otherwise the performance gains across all of these tests are 40-50%. Overall, then, who wins is heavily test dependent, though this is nothing new.

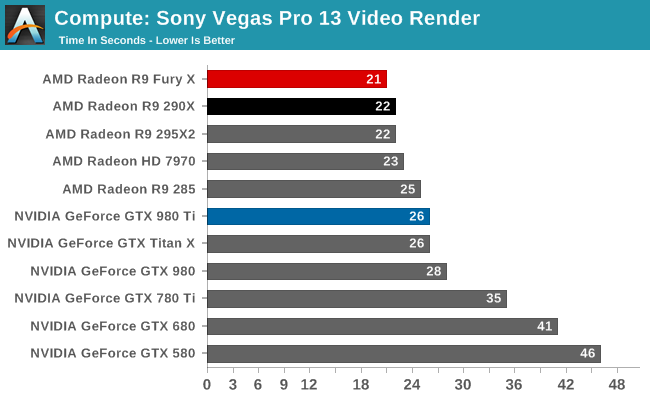

Our third compute benchmark is Sony Vegas Pro 13, an OpenGL and OpenCL video editing and authoring package. Vegas can use GPUs in a few different ways, the primary uses being to accelerate the video effects and compositing process itself, and the video encoding step. With video encoding being increasingly offloaded to dedicated DSPs these days, we’re focusing on the editing and compositing process, rendering to a low CPU overhead format (XDCAM EX). This specific test comes from Sony, and measures how long it takes to render a video.

At this point Vegas is becoming increasingly CPU-bound and will be due for replacement. The Fury X nonetheless shaves off an additional second of rendering time, bringing it down to 21 seconds.

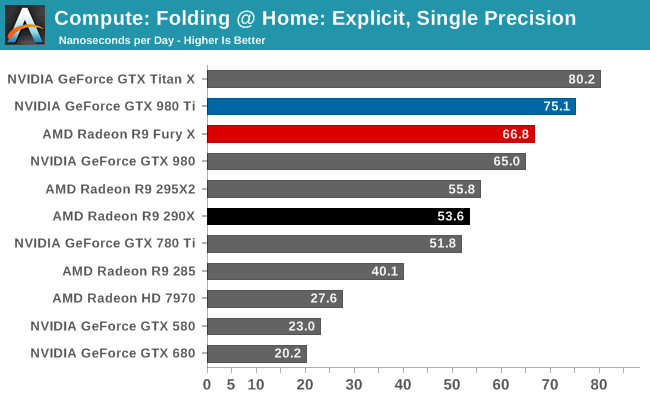

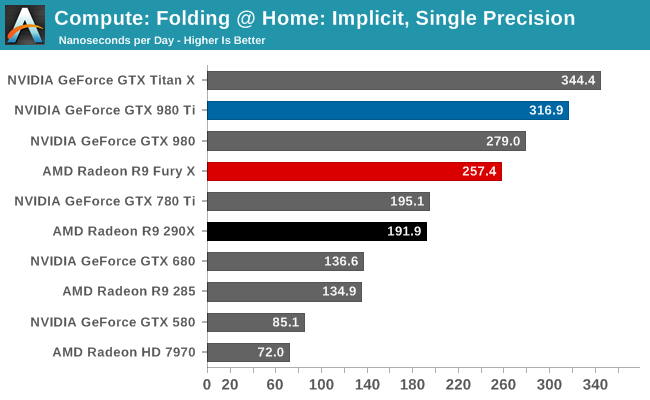

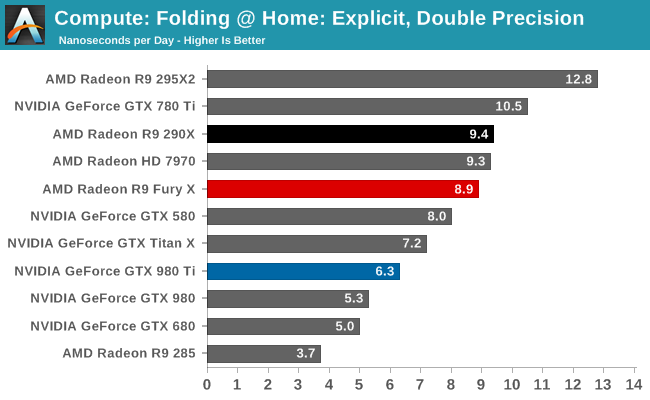

Moving on, our fourth compute benchmark is FAHBench, the official Folding@Home benchmark. Folding@Home is the popular Stanford-backed research and distributed computing initiative that has work distributed to millions of volunteer computers over the internet, each of which is responsible for a tiny slice of a protein folding simulation. FAHBench can test both single precision and double precision floating point performance, with single precision being the most useful metric for most consumer cards due to their low double precision performance. Each precision has two modes, explicit and implicit, the difference being whether water atoms are included in the simulation, which adds quite a bit of work and overhead. This is another OpenCL test, utilizing the OpenCL path for FAHCore 17.

Both of the FP32 tests for FAHBench show smaller than expected performance gains given the fact that the R9 Fury X has such a significant increase in compute resources and memory bandwidth. 25% and 34% respectively are still decent gains, but they’re smaller gains than anything we saw on CompuBench. This does lend a bit more support to our theory about driver optimizations, though FAHBench has not always scaled well with compute resources to begin with.

Meanwhile FP64 performance dives as expected. With a 1/16 rate it’s not nearly as bad as the GTX 900 series, but even the Radeon HD 7970 is beating the R9 Fury X here.
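The FP64 ordering described here can be illustrated with the same kind of back-of-the-envelope arithmetic. The 1/16 rate for the Fury X comes from the text; the shader counts, clocks, the 1/4 rate for the HD 7970, and the 1/32 rate for the GTX 980 Ti (standing in for the GTX 900 series) are assumptions drawn from public vendor specifications, not from this page.

```python
def peak_tflops(shaders: int, clock_mhz: float, rate: float = 1.0) -> float:
    """Peak throughput: 2 FLOPs per shader per clock, scaled by the FP64:FP32 rate."""
    return shaders * 2 * clock_mhz * 1e6 * rate / 1e12

# (shaders, base clock in MHz, FP64 rate relative to FP32); assumed values.
cards = {
    "R9 Fury X": (4096, 1050, 1 / 16),
    "Radeon HD 7970": (2048, 925, 1 / 4),
    "GTX 980 Ti": (2816, 1000, 1 / 32),
}

# Theoretical FP64 throughput per card, in TFLOPS.
fp64 = {name: peak_tflops(*spec) for name, spec in cards.items()}

# Print from fastest to slowest theoretical FP64 rate.
for name, tflops in sorted(fp64.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {tflops:.3f} TFLOPS FP64")
```

On paper, the older HD 7970's higher FP64 rate more than offsets its smaller shader array, which is consistent with it beating the R9 Fury X in the FP64 test, while a 1/32-rate card falls well behind both.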

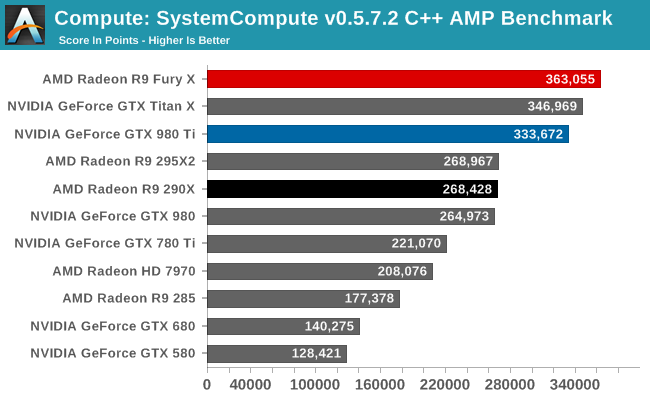

Wrapping things up, our final compute benchmark is an in-house project developed by our very own Dr. Ian Cutress. SystemCompute is our first C++ AMP benchmark, utilizing Microsoft’s simple C++ extensions to allow the easy use of GPU computing in C++ programs. SystemCompute in turn is a collection of benchmarks for several different fundamental compute algorithms, with the final score represented in points. DirectCompute is the compute backend for C++ AMP on Windows, so this forms our other DirectCompute test.

Our C++ AMP benchmark is another case of decent, though not amazing, GPU compute performance gains. The R9 Fury X picks up 35% over the R9 290X. And in fact this is enough to vault it over NVIDIA’s cards to retake the top spot here, though not by a great amount.

458 Comments


testbug00 - Sunday, July 5, 2015 - link

You don't need architecture improvements to use DX12/Vulkan/etc. The APIs merely allow you to implement them over DX11 if you choose to. You can write a DX12 game without optimizing for any GPUs (although, not doing so for GCN given consoles are GCN would be a tad silly).

If developers are aiming to put low level stuff in whenever they can, then the issue becomes that due to AMD's "GCN everywhere" approach developers may just start coding for PS4, porting that code to Xbox DX12 and then porting that to PC with higher textures/better shadows/effects. In which case Nvidia could take massive performance deficits to AMD due to not getting the same amount of extra performance from DX12.

Don't see that happening in the next 5 years. At least, not with most games that are console+PC and need huge performance. You may see it in a lot of Indie/small studio cross platform games however.

RG1975 - Thursday, July 2, 2015 - link

AMD is getting there but, they still have a little bit to go to bring us a new "9700 Pro". That card devastated all Nvidia cards back then. That's what I'm waiting for to come from AMD before I switch back.

Thatguy97 - Thursday, July 2, 2015 - link

would you say amd is now the "geforce fx 5800"

piroroadkill - Thursday, July 2, 2015 - link

Everyone who bought a Geforce FX card should feel bad, because the AMD offerings were massively better. But now AMD is close to NVIDIA, it's still time to rag on AMD, huh?

That said, of course if I had $650 to spend, you bet your ass I'd buy a 980 Ti.

Thatguy97 - Thursday, July 2, 2015 - link

oh believe me i remember they felt bad lol but im not ragging on amd but nvidia stole their thunder with the 980 ti

KateH - Thursday, July 2, 2015 - link

C'mon, Fury isn't even close to the Geforce FX level of fail. It's really hard to overstate how bad the FX5800 was, compared to the Radeon 9700 and even the Geforce 4600Ti.

The Fury X wins some 4K benchmarks, the 980Ti wins some. The 980Ti uses a bit less power but the Fury X is cooler and quieter.

Geforce FX level of fail would be if the Fury X was released 3 months from now to go up against the 980Ti with 390X levels of performance and an air cooler.

Thatguy97 - Thursday, July 2, 2015 - link

To be fair the 5950 ultra was actually decent

Morawka - Thursday, July 2, 2015 - link

you're understating nvidia's scores.. they won 90% of all benchmarks, not just "some". a full 120W more power under furmark load and they are using HBM!!

looncraz - Thursday, July 2, 2015 - link

Furmark power load means nothing, it is just a good way to stress test and see how much power the GPU is capable of pulling in a worst-case scenario and how it behaves in that scenario.

While gaming, the difference is miniscule and no one will care one bit.

Also, they didn't win 90% of the benchmarks at 4K, though they certainly did at 1440. However, the real world isn't that simple. A 10% performance difference in GPUs may as well be zero difference, there are pretty much no game features which only require a 10% higher performance GPU to use... or even 15%.

As for the value argument, I'd say they are about even. The Fury X will run cooler and quieter, take up less space, and will undoubtedly improve to parity or beyond the 980Ti in performance with driver updates. For a number of reasons, the Fury X should actually age better, as well. But that really only matters for people who keep their cards for three years or more (which most people usually do). The 980Ti has a RAM capacity advantage and an excellent - and known - overclocking capacity and currently performs unnoticeably better.

I'd also expect two Fury X cards to outperform two 980Ti cards with XFire currently having better scaling than SLI.

chizow - Thursday, July 2, 2015 - link

The differences in minimums aren't miniscule at all, and you also seem to be discounting the fact 980Ti overclocks much better than Fury X. Sure XDMA CF scales better when it works, but AMD has shown time and again, they're completely unreliable for timely CF fixes for popular games to the point CF is clearly a negative for them right now.