AMD Launches Carrizo: The Laptop Leap of Efficiency and Architecture Updates

by Ian Cutress on June 2, 2015 9:00 PM EST

The Platform

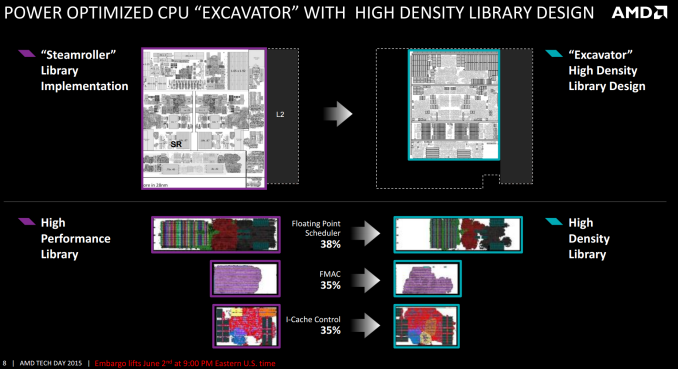

From a design perspective, Carrizo is the biggest departure for AMD’s APU line since the introduction of Bulldozer cores. While the underlying principle of two INT pipes and a shared FP pipe between dual schedulers is still present, the fundamental design behind the cores, the caches and the libraries has changed. Part of this was covered at ISSCC, which we will also revisit here.

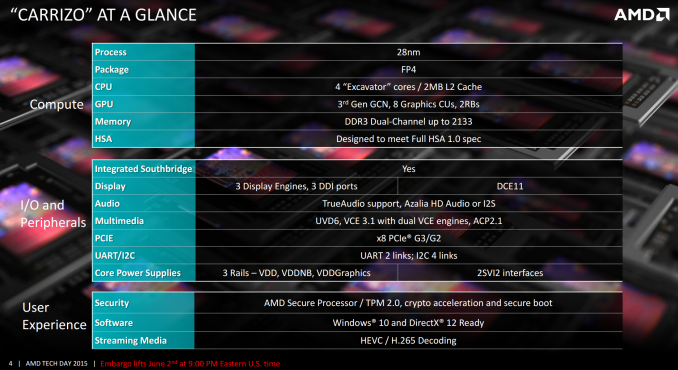

On a high level, Carrizo will be made at the 28nm node using a similar silicon tapered metal stack more akin to a GPU design than a CPU design. The new FP4 package will be used, but this will be shared with Carrizo-L, the new but currently unreleased lower-powered ‘Cat’ core based platform that will play in similar markets for lower cost systems. The two FP4 models are designed to be almost plug-and-play, simplifying designs for OEMs. All Carrizo APUs currently have four Excavator cores, more commonly referred to as a dual module design, and as a result each APU will have 2MB of L2 cache.

Each Carrizo APU will feature AMD’s Graphics Core Next 1.2 architecture, listed above as 3rd Gen GCN, with up to 512 streaming processors in the top end design. Memory will still be dual channel, but at up to DDR3-2133. As noted in the previous slides, AMD tested on DDR3-1600, so probing the memory power draw and seeing what OEMs decide to use is an important aspect we wish to test. In terms of compute, AMD states that Carrizo is designed to meet the full HSA 1.0 specification as released earlier this year. Barring any significant deviations in the specification, AMD expects Carrizo to be certified when the final version is ratified.
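To put the DDR3-2133 versus DDR3-1600 gap in context, the peak theoretical bandwidth of each configuration can be estimated with some back-of-the-envelope arithmetic. This is only a sketch, not an AMD figure: it assumes the standard 64-bit DDR3 channel width (not stated in the article), and the function name is purely illustrative.

```python
# Peak theoretical DRAM bandwidth = transfers/s x bytes per transfer x channels.
# Assumption (not from the article): each DDR3 channel is 64 bits wide.

def peak_bandwidth_gb_s(mega_transfers_per_s, channels=2, channel_width_bits=64):
    """Return peak theoretical bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = channel_width_bits / 8
    return mega_transfers_per_s * 1e6 * bytes_per_transfer * channels / 1e9

ddr3_2133 = peak_bandwidth_gb_s(2133)  # the platform's rated memory speed
ddr3_1600 = peak_bandwidth_gb_s(1600)  # the speed AMD used in its own testing

print(f"DDR3-2133 dual channel: {ddr3_2133:.1f} GB/s")
print(f"DDR3-1600 dual channel: {ddr3_1600:.1f} GB/s")
print(f"Shortfall at DDR3-1600: {100 * (1 - ddr3_1600 / ddr3_2133):.0f}%")
```

On paper that is roughly 34.1 GB/s against 25.6 GB/s, about a quarter less for the CPU and integrated GPU to share if an OEM opts for the slower memory. Real-world throughput will be lower than either peak.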

Carrizo integrates the southbridge/IO hub into the silicon design of the die itself, rather than a separate on-package design. This brings the southbridge down from 40nm+ to 28nm, saving power and reducing long distance wires between the processor and the IO hub. This also allows the CPU to control the voltage and frequency of the southbridge more than before, offering further potential power saving improvements. Carrizo will also support three displays, allowing for potentially interesting combinations when it comes to more office-oriented products and docks. TrueAudio is also present, although few titles support it and the quality of both audio codecs and laptop speakers leaves a lot to be desired. Hopefully we will see the TrueAudio DSP opened up in an SDK at some point, allowing more than just specific developers to work with it.

External graphics is supported by a PCIe 3.0 x8 interface, and the system relies on three main voltage rails across the SoC, which allows for separate voltage binning of each of the parts. AMD’s Secure Processor is also in the mix, with cryptography acceleration, secure boot and BitLocker support.
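For a sense of what a PCIe 3.0 x8 link offers a discrete GPU, here is a rough sketch of the theoretical per-direction bandwidth. The 8 GT/s per-lane rate and 128b/130b encoding come from the PCIe 3.0 specification rather than from AMD's materials, and the helper name is hypothetical.

```python
# Assumptions taken from the PCIe 3.0 specification, not from the article:
GT_PER_S_PER_LANE = 8.0          # raw transfer rate per lane, in GT/s
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding overhead

def pcie3_bandwidth_gb_s(lanes):
    """Theoretical one-direction bandwidth in GB/s for a PCIe 3.0 link."""
    usable_bits_per_s = GT_PER_S_PER_LANE * 1e9 * ENCODING_EFFICIENCY * lanes
    return usable_bits_per_s / 8 / 1e9

x8 = pcie3_bandwidth_gb_s(8)    # the link width Carrizo exposes for graphics
x16 = pcie3_bandwidth_gb_s(16)  # a full-width desktop slot, for comparison

print(f"PCIe 3.0 x8:  {x8:.2f} GB/s per direction")
print(f"PCIe 3.0 x16: {x16:.2f} GB/s per direction")
```

The x8 link works out to a little under 8 GB/s each way, half of a full x16 slot, before protocol overheads are taken into account.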

137 Comments

renegade800x - Thursday, June 4, 2015 - link

Although viewable it's far from being "perfectly" fine. 15.6 should be FHD.

albert89 - Tuesday, June 23, 2015 - link

You don't need a strong CPU since Win8 because most laptops use Atom, Celeron or Pentium processors. AMD APUs are the natural choice!

mabsark - Wednesday, June 3, 2015 - link

AMD should make Steam Boxes. They already do APUs, chipsets (which are going on die) and memory. It would be pretty simple for AMD to partner with a motherboard maker. Imagine a Steam Box about the size of a router, with a nano-ITX motherboard, a 14 nm APU with HBM, wifi, a few USB ports and an HDMI port to connect to a TV.

An AMD/Valve partnership could potentially revolutionise the console market, providing cheap yet powerful and efficient console-type PCs.

Refuge - Wednesday, June 3, 2015 - link

HBM isn't coming to APUs anytime soon.

Cryio - Saturday, June 6, 2015 - link

Probably the first APU after Carrizo

coder111 - Wednesday, June 3, 2015 - link

Aren't Steamboxes supposed to run Linux?

AMD drivers for Linux are a bit weird. Catalyst is the officially supported driver but it's buggy.

Open source drivers are quite good but they are slower than Catalyst and don't support the latest OpenGL spec. There is no Mantle/Vulkan/HSA/Crossfire support with open source drivers either. OpenCL is in alpha stage.

So AMD would need to man up and do the Linux drivers properly. They are working on it and making good progress but I doubt it is ready to be used at the moment as it is...

Besides, lots of games these days get developed with Nvidia's "help" to ensure they run well on Nvidia GPUs and run like crap on AMD GPUs. And if the games are built using Intel Compiler, they'll run like crap on AMD CPUs as well. All of these tactics are anticompetitive and should be illegal IMO but who said the world is fair...

And don't get me wrong, I love AMD, I use Linux + AMD dGPU + APU, but I don't think it's ready for the masses yet.

AS118 - Wednesday, June 3, 2015 - link

I agree. I'm a double AMD Linux gamer and I've run into the exact same problems as you have, and I wish they'd be more serious about Linux. Sure they have Microsoft's support, but I feel that they should take Linux more seriously outside of the enterprise (where they do take Linux more seriously).

yankeeDDL - Wednesday, June 3, 2015 - link

I disagree.

For casual gaming on laptops, 1366x768 is just fine. You'll need a lot more horsepower to drive a fullHD screen and battery life will suffer.

I won't say that there's no benefit gaming at fullHD vs 1366x768: obviously, the visuals are better, but if you want an "all rounder" laptop which does not weigh one ton (like "real" gaming laptops) and that is below $500, it's not bad at all.

BrokenCrayons - Wednesday, June 3, 2015 - link

I personally would rather have a cheap 1366x768 panel. I don't care about color accuracy much, light bleed, panel responsiveness or much of anything else and haven't since we transitioned from passive to active matrix screens in the 486 to original Pentium era of notebook computers. In fact, I see higher resolutions as an unnecessary (because I have to scale things anyway to easily read text and interact with UI elements and because native resolution gaming on higher res screens demands more otherwise unnecessary GPU power) drain on battery life that invariably drives up the cost of the system to get otherwise identical performance. The drive for progressively smaller, higher pixel density displays is a pointless struggle to fill in comparable checkboxes between competitors to appease a consumer audience that has been swept up in the artificially fabricated frenzy over an irrelevant device specification.

yankeeDDL - Wednesday, June 3, 2015 - link

I think it depends on the use, ultimately.

For office work (i.e.: much reading/writing emails), a reasonably high resolution helps make the text sharp and easier on the eyes.

For home use (web browsing, watching videos, casual gaming) though, I find it a lot less relevant.

Personally, at home, I'd rather have a <$400 laptop always ready to be used for anything, to be moved around, even in the kitchen, than a $1000 laptop which I would need to treat with gloves for fear of damaging it. Since Kaveri I also started recommending AMD again to my friends and family: they are much cheaper than Intel, and the decent GPU makes them a lot more versatile. Again, my opinion, based on my use. As they say: to each his own...