The NVIDIA GeForce GTX 980 Ti Review

by Ryan Smith on May 31, 2015 6:00 PM EST

Grand Theft Auto V

The final game in our review of the GTX 980 Ti is our most recent addition, Grand Theft Auto V. The latest edition of Rockstar’s venerable series of open world action games, Grand Theft Auto V was originally released on the last-gen consoles back in 2013. However, thanks to a rather significant facelift for the current-gen consoles and PCs, along with the ability to greatly turn up rendering distances and add other features like MSAA and more realistic shadows, the end result is a game that is still among the most stressful of our benchmarks when all of its features are turned up. Furthermore, in a move rather uncharacteristic of most open world action games, Grand Theft Auto V also includes a very comprehensive benchmark mode, giving us a great chance to look into the performance of an open world action game.

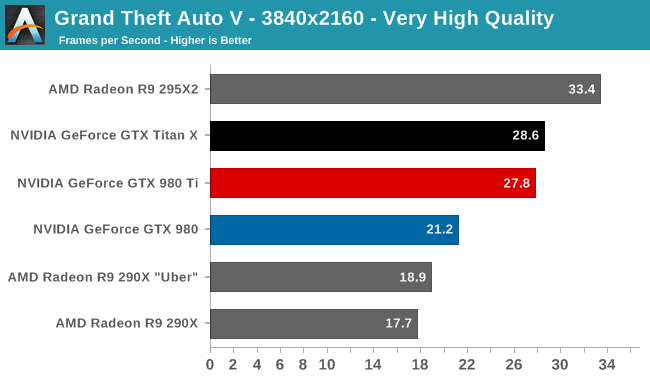

On a quick note about settings: as Grand Theft Auto V doesn't have pre-defined settings tiers, I want to spell out what settings we're using. For "Very High" quality we have all of the primary graphics settings turned up to their highest setting, with the exception of grass, which is at its own very high setting. Meanwhile 4x MSAA is enabled for direct views and reflections. This setting also involves turning on some of the advanced rendering features (the game's long shadows, high resolution shadows, and high definition flight streaming), but does not increase the view distance any further.

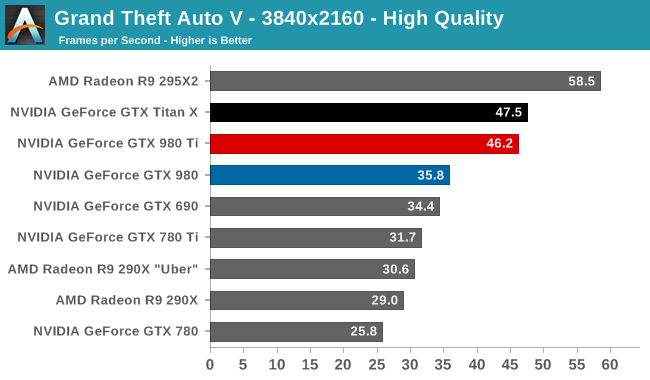

Otherwise for "High" quality we take the same basic settings but turn off all MSAA, which significantly reduces the GPU rendering and VRAM requirements.

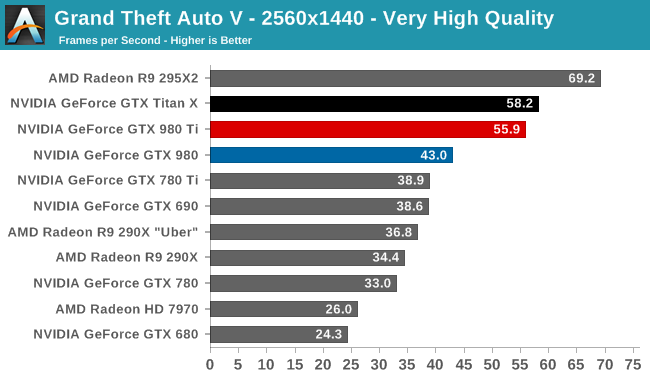

We initially expected Grand Theft Auto V to be a walk in the park performance-wise, but the PC version of the game has instead turned out to be a very demanding game for our GPUs. Even at 1440p we can’t have very high quality with MSAA and still crack 60fps, though we can get very close.

Ultimately GTA doesn’t do any better than any other game at setting apart our GM200 cards. The GTX 980 Ti trails the GTX Titan X by 4% or less, essentially the average outcome at this point. Also average is the GTX 980 Ti’s lead over the GTX 980, with the newer card beating the GTX 980 by 29-31% across our three settings. Finally, against the GTX 780 the GTX 980 Ti has another strong showing, with a 69-79% lead.

On an absolute basis, we can see that at 4K we can’t enable 4x MSAA and still crack 30fps on a single-GPU card, with the GTX 980 Ti topping out at 27.8fps. Taking out MSAA brings us up to 46.2fps, which is still well off 60fps, but also well over the 30fps cap that this game was originally designed around on the last-generation consoles.
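To put the cost of MSAA at 4K in concrete terms, here is a small sketch using the two GTX 980 Ti figures quoted above (27.8fps with 4x MSAA, 46.2fps without). It assumes that a "lead" is computed as the faster result divided by the slower one, minus one; the helper names are hypothetical and not part of any benchmark tool.

```python
def percent_lead(fps_a: float, fps_b: float) -> float:
    """Percentage by which fps_a exceeds fps_b (assumed convention: a/b - 1)."""
    return (fps_a / fps_b - 1.0) * 100.0


def frame_time_ms(fps: float) -> float:
    """Average time spent on each frame, in milliseconds, at a given framerate."""
    return 1000.0 / fps


# GTX 980 Ti at 4K, figures taken from the results above
with_msaa = 27.8     # "Very High" quality, 4x MSAA
without_msaa = 46.2  # "High" quality, MSAA off

gain = percent_lead(without_msaa, with_msaa)
print(f"Dropping MSAA: +{gain:.1f}% fps")
print(f"Frame time: {frame_time_ms(with_msaa):.1f} ms -> {frame_time_ms(without_msaa):.1f} ms")
```

Turning off MSAA works out to roughly a 66% higher framerate, or about 36.0ms versus 21.6ms per frame on average. The same helper applies to the card-versus-card leads quoted above, under the same assumed convention.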

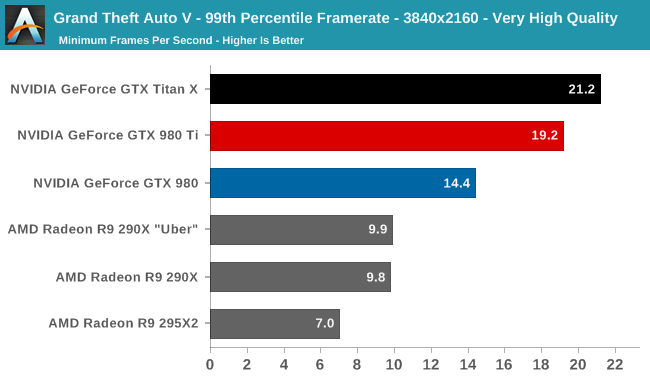

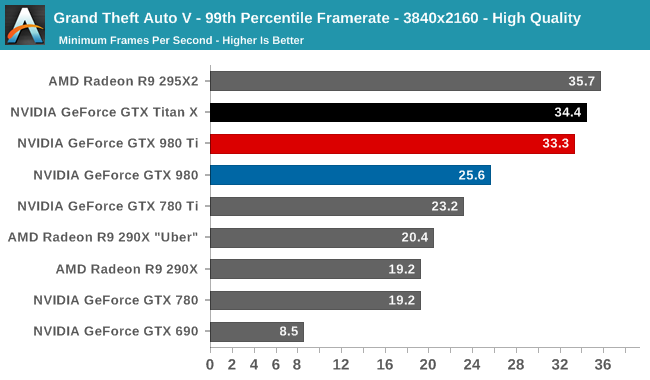

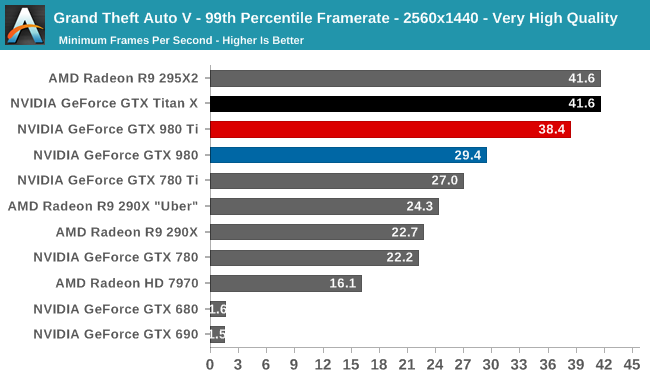

Along with an all-around solid benchmark scene, the other interesting benchmarking feature of GTA is that it also generates frame percentiles on its own, allowing us to see the percentiles without going back and recording the game with FRAPS. Taking a look at the 99th percentile in this case, what we find is that at each setting GTA crushes some group of cards due to a lack of VRAM.
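For readers unfamiliar with the metric, a 99th percentile framerate is derived from the distribution of individual frame times rather than from the run's average. The sketch below assumes the simple nearest-rank percentile method; how GTA V itself computes its percentiles is not documented here. The frame-time data is made up purely to illustrate how a handful of slow frames (for example, from VRAM thrashing) can drag the 99th percentile far below the average.

```python
import math


def percentile_nearest_rank(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples <= it."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank into the sorted list
    return ordered[rank - 1]


def percentile_fps(frame_times_ms, pct=99):
    """Framerate implied by the pct-th percentile frame time (the slow tail)."""
    return 1000.0 / percentile_nearest_rank(frame_times_ms, pct)


def average_fps(frame_times_ms):
    """Average framerate: total frames divided by total elapsed time."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


# Hypothetical run: 95 smooth 20 ms frames plus 5 stutters at 50 ms
frame_times = [20.0] * 95 + [50.0] * 5
print(f"average: {average_fps(frame_times):.1f} fps")
print(f"99th percentile: {percentile_fps(frame_times):.1f} fps")
```

Here the average is about 46.5fps, yet the 99th percentile is only 20fps, because just 5% of frames are slow. That gap is why the percentile charts expose VRAM shortfalls that averages can hide.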

At 4K very high quality, 4GB cards have just enough VRAM to stay alive, with the multi-GPU R9 295X2 getting crushed because the additional VRAM requirements of AFR push it over the edge. Not plotted here are the 3GB cards, which saw their framerates plummet to the low single digits, essentially struggling to complete this benchmark. Meanwhile 1440p at high quality crushes our 2GB cards, with any card that has less VRAM than a Radeon HD 7970 falling off the cliff.

As for what this means for the GTX 980 Ti, the situation finds the GTX 980 Ti trailing the GTX Titan X in 99th percentile framerates by anywhere between 3% and 10%. This test is not designed to push more than 6GB of VRAM, so I’m not entirely convinced this isn’t a wider than normal variance (especially at the low framerates for 4K), though the significant and rapid asset streaming this benchmark requires may be taking its toll on the GTX 980 Ti, which has less VRAM for additional caching.

290 Comments


chizow - Monday, June 1, 2015

Yes, it's unprecedented to launch a full stack of rebrands with just 1 new ASIC, as AMD has done not once, not 2x, not even 3x, but 4 times with GCN (7000 to Boost/GE, 8000 OEM, R9 200, and now R9 300). Generally it is only the low-end, or a gap product to fill a niche. The G92/b isn't even close to this, as it was rebranded numerous times over a short 9-month span (Nov 2007 to July 2008), while we are bracing ourselves for AMD rebrands going back to 2011 and Pitcairn.

Gigaplex - Monday, June 1, 2015

If it's the 4th time as you claim, then by definition, it's most definitely not unprecedented.

chizow - Monday, June 1, 2015

The first 3 rebrands were still technically within that same product cycle/generation. This rebrand certainly isn't, so rebranding an entire stack with last-gen parts is certainly unprecedented. At least, relative to Nvidia's full next-gen product stack. Hard to say though given AMD just calls everything GCN 1.x; like inbred siblings they have some similarities, but certainly aren't the same "family" of chips.

Refuge - Monday, June 1, 2015

Thanks Gigaplex, you beat me to it... lol

chizow - Monday, June 1, 2015

Cool, maybe you can beat each other and show us the precedent where a GPU maker went to market with a full stack of rebrands against the competition's next generation line-up. :)

FlushedBubblyJock - Wednesday, June 10, 2015

Nothing like total fanboy denial.

Kevin G - Monday, June 1, 2015

The G92 got its last rebrand in 2009 and was formally replaced in 2010 by the GTX 460. It had a full three-year life span on the market.

The GTS/GTX 200 series was mostly rebranded. There was the GT200 chip on the high end that was used for the GTX 260 and up. The low end silently got the GT216 for the GeForce 210 a year after the GTX 260/280 launch. At this time, AMD was busy launching the Radeon 4000 series, which brought a range of new chips to market as a new generation.

Pitcairn came out in 2012, not 2011. This would mimic the life span of the G92 as well as the number of rebrands. (It never had a vanilla edition; it started with the GHz Edition as the 7870.)

chizow - Monday, June 1, 2015

@Kevin G, nice try at revisionist history, but that's not quite how it went down. G92 was rebranded numerous times over the course of a year or so, but it did actually get a refresh from 65nm to 55nm. Indeed, G92 was even more advanced than the newer GT200 in some ways, with more advanced hardware encoding/decoding that was on-die, rather than on a complementary ASIC like G80/GT200.

Also, prices were much more compressed at the time due to the economic recession, so the high-end was really just a glorified performance mid-range due to the price wars started by the 4870 and the economics of the time.

Nvidia found it was easier to simply manipulate the cores on their big chip than to come out with a number of different ASICs, which is how we ended up with GTX 260 core 192, core 216 and the GTX 275:

Low End: GT205, 210, GT 220, GT 230

Mid-range: GT 240, GTS 250

High-end: GTX 260, GTX 275

Enthusiast: GTX 280, GTX 285, GTX 295

The only rebranded chip in that entire stack is the G92, so again, certainly not the precedent for AMD's entire stack of Rebrandeon chips.

Kevin G - Wednesday, June 3, 2015

@chizow Out of that list of GTS/GTX 200 series cards, the new chips in that lineup were the GT200 in 2008 and the GT218, which was introduced over a year later in late 2009. For 9 months on the market, the three chips used in the 200 series were rebrands of the G94, rebrands of the G92, and the new GT200. The ultra low end at this time was filled in by cards still carrying the 9000 series branding.

The G92 did have a very long life, as it was introduced as the 8800 GTS 512MB in late 2007. In 2008 it was rebranded as the 9800 GTX roughly six months after it was first introduced. A year later in 2009 the G92 got a die shrink and was rebranded as both the GTS 150 for OEMs and the GTS 250 for consumers.

So yeah, AMD's R9 300 series launch really does mimic what nVidia did with the GTS/GTX 200 series.

FlushedBubblyJock - Wednesday, June 10, 2015

G80 was not G92, nor G92b, nor G94, Mr. Kevin G.