The NVIDIA GeForce GTX Titan X Review

by Ryan Smith on March 17, 2015 3:00 PM EST

Meet The GeForce GTX Titan X

Now that we’ve had a chance to look at the GM200 GPU at the heart of GTX Titan X, let’s take a look at the card itself.

From a design standpoint NVIDIA put together a very strong card with the original GTX Titan, combining a revised, magnesium-less version of their all-metal shroud with a high performance blower and vapor chamber assembly. The end result was a high performance 250W card that was quieter than some open-air cards, much quieter than a bunch of other blowers, and shiny to look at to boot. This design was further carried forward for the reference GTX 780 series, its stylings copied for the GTX Titan Z, and used with a cheaper cooling apparatus for the reference GTX 980.

For GTX Titan X, NVIDIA has opted to leave well enough alone, having made virtually no changes to the shroud or cooling apparatus. And truth be told it’s hard to fault NVIDIA right now, as this design remains the gold (well, aluminum) standard for a blower. Looks aside, after years of blowers that rattled, or were too loud, or didn’t cool discrete components very well, NVIDIA is sitting on a very solid design that I’m not really sure how anyone would top (but I’d love to see them try).

In any case, our favorite metal shroud is back once again. Composed of a cast aluminum housing and held together using a combination of rivets and screws, it’s as physically solid a shroud as we’ve ever seen. Meanwhile having already done a partial black dye job for GTX Titan Black and GTX 780 Ti – using black lettering and a black-tinted polycarbonate window – NVIDIA has more or less completed the dye job by making the metal shroud itself almost completely black. What remains unpainted are the aluminum accents and the Titan lettering (Titan, not Titan X, curiously enough). The card measures 10.5” long overall, which at this point is NVIDIA’s standard size for high-end GTX cards.

Drilling down we have the card’s primary cooling apparatus, composed of a nickel-tipped wedge-shaped heatsink and ringed radial fan. The heatsink itself is attached to the GPU via a copper vapor chamber, something that has been exclusive to GTX 780/Titan cards and provides the best possible heat transfer between the GPU and heatsink. Meanwhile the rest of the card is covered with a black aluminum baseplate, providing basic heatsink functionality for the VRMs and other components while also protecting them.

Finally at the bottom of the stack we have the card itself, complete with the GM200 GPU, VRAM chips, and various discrete components. Unlike the shroud and cooler, GM200’s PCB isn’t a complete carry-over from GK110, but it is nonetheless very similar, with only a handful of changes made. This means we’re looking at the GPU and VRAM chips towards the front of the card, while the VRMs and other discrete components occupy the back. New specifically to GTX Titan X, NVIDIA has done some minor reworking to improve airflow to the discrete components and reduce temperatures, along with employing molded inductors.

As with GK110, NVIDIA still employs a 6+2 phase VRM design, with 6 phases for the GPU and another 2 for the VRAM. This means that GTX Titan X has a bit of power delivery headroom – NVIDIA allows the power limit to be increased by 10% to 275W – but hardcore overclockers will find that there isn’t an extreme amount of additional headroom to play with. Based on our sample the actual shipping voltage at the max boost clock is fairly low at 1.162V, so in non-TDP constrained scenarios there is some additional headroom through overvolting, up to 1.237V in the case of our sample.

In terms of overall design, the need to house 24 VRAM chips to get 12GB of VRAM means that the GTX Titan X has chips on the front as well as the back. For this reason, and unlike with the GTX 980, NVIDIA is once again skipping the backplate, leaving the back side of the card bare just as with the previous GTX Titan cards.
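The chip count is easy to sanity check. The short sketch below assumes the 4Gb (gigabit) chip density mentioned later in this article for other Maxwell cards also applies here; the function name is purely illustrative.

```python
# Figures taken from the article: 24 VRAM chips, assumed 4Gb (gigabit) each.
CHIP_COUNT = 24
CHIP_DENSITY_GBIT = 4  # assumption: same density as other Maxwell cards


def total_vram_gb(chip_count: int, density_gbit: int) -> float:
    """Total capacity in gigabytes: total gigabits divided by 8 bits per byte."""
    return chip_count * density_gbit / 8


print(total_vram_gb(CHIP_COUNT, CHIP_DENSITY_GBIT))  # 12.0
```

Under that assumption each chip holds 512MB, which is why reaching 12GB takes 24 of them and forces chips onto both sides of the board.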

Moving on, in accordance with GTX Titan X’s 250W TDP and the reuse of the GTX Titan cooler, power delivery for the GTX Titan X is identical to its predecessors. This means a 6-pin and an 8-pin power connector at the top of the card, to provide up to 225W, with the final 75W coming from the PCIe slot. Interestingly the board does have another 8-pin PCIe connector position facing the rear of the card, but that goes unused for this specific GM200 card.
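As a rough illustration of that power budget, the sketch below adds up the delivery paths. The per-connector ratings (75W for a 6-pin, 150W for an 8-pin, 75W for the slot) are the standard PCIe figures, the 10% power limit increase is the one noted earlier, and all of the names are illustrative.

```python
# Standard PCIe power ratings, in watts.
SLOT_W = 75        # PCIe x16 slot
SIX_PIN_W = 75     # 6-pin auxiliary connector
EIGHT_PIN_W = 150  # 8-pin auxiliary connector

TDP_W = 250                # GTX Titan X TDP, per the article
POWER_LIMIT_INCREASE = 10  # percent, per the article


def aux_connector_power() -> int:
    """Power available from the 6-pin and 8-pin connectors combined."""
    return SIX_PIN_W + EIGHT_PIN_W


def total_deliverable_power() -> int:
    """Auxiliary connectors plus the PCIe slot."""
    return aux_connector_power() + SLOT_W


def raised_power_limit() -> int:
    """TDP with the maximum power limit increase applied (integer math)."""
    return TDP_W * (100 + POWER_LIMIT_INCREASE) // 100


print(aux_connector_power())      # 225
print(total_deliverable_power())  # 300
print(raised_power_limit())       # 275
print(total_deliverable_power() - raised_power_limit())  # 25
```

In other words, even with the power limit raised to 275W the card stays within the 300W that the slot and the two connectors are rated for, though only by 25W on paper.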

Meanwhile display I/O follows the same configuration we saw on GTX 980. This is 1x DL-DVI-I, 3x DisplayPort 1.2, and 1x HDMI 2.0, with a total limit of 4 displays. In the case of GTX Titan X the DVI port is somewhat antiquated at this point – the card is generally overpowered for the relatively low maximum resolutions of DL-DVI – but on the other hand the HDMI 2.0 port is actually going to be of some value here since it means GTX Titan X can drive a 4K TV. Meanwhile if you have money to spare and need to drive more than a single 4K display, GTX Titan X also features a pair of SLI connectors for even more power.

In fact 4K will be a repeating theme for GTX Titan X, as this is one of the primary markets/use cases NVIDIA will be going after with the card. With GTX 980 generally good up to 2560x1440, the even more powerful GTX Titan X is best suited for 4K and VR, the two areas where GTX 980 came up short. In the case of 4K even a single GTX Titan X is going to struggle at times – we’re not at 60fps at 4K with a single GPU quite yet – but GTX Titan X should be good enough for framerates between 30fps and 60fps at high quality settings. To fill the rest of the gap NVIDIA is also going to be promoting 4Kp60 G-Sync monitors alongside the GTX Titan X, as the 30-60fps range is where G-Sync excels. And while G-Sync can’t make up for lost frames it can take some of the bite out of sub-60fps framerates, making it a smoother/cleaner experience than it would otherwise be.
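To put the 30-60fps range in frame time terms, here is a deliberately simplified sketch. It assumes a 60Hz panel and models only two cases: a fixed refresh display with v-sync, where a finished frame waits for the next refresh tick, and a variable refresh display that refreshes when the frame is ready. It ignores buffering, the panel's minimum refresh rate, and everything else a real display pipeline does, and the function names are illustrative.

```python
import math


def frame_time_ms(fps: float) -> float:
    """Time budget per frame, in milliseconds, at a given framerate."""
    return 1000.0 / fps


def fixed_refresh_interval_ms(render_ms: float, refresh_hz: float = 60.0) -> float:
    """Simplified v-sync model: a finished frame waits for the next refresh tick."""
    period = 1000.0 / refresh_hz
    # The small epsilon keeps a frame that exactly fills a period from rounding up.
    ticks = max(1, math.ceil(render_ms / period - 1e-9))
    return ticks * period


def variable_refresh_interval_ms(render_ms: float, max_refresh_hz: float = 60.0) -> float:
    """Simplified variable refresh model: the panel refreshes when the frame is
    ready, but no faster than its maximum refresh rate."""
    return max(render_ms, 1000.0 / max_refresh_hz)


for fps in (60, 45, 30):
    render = frame_time_ms(fps)
    print(f"{fps} fps: render {render:.1f} ms, "
          f"fixed 60Hz {fixed_refresh_interval_ms(render):.1f} ms, "
          f"variable {variable_refresh_interval_ms(render):.1f} ms")
```

In this model a steady 45fps frame takes about 22ms to render but is held until the 33ms tick on the fixed refresh display, whereas the variable refresh display shows it as soon as it is done. No frames are recovered either way; the difference is only in how evenly the frames that do exist are presented.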

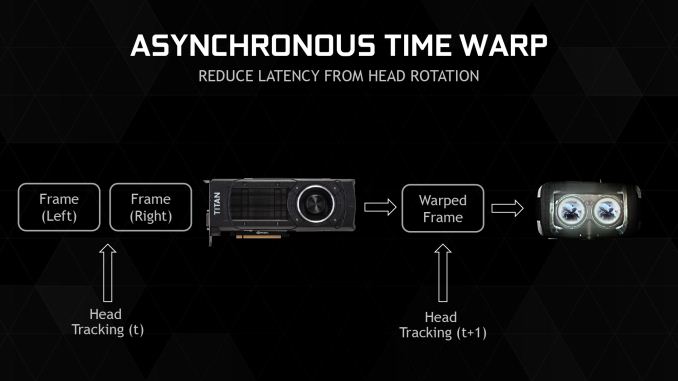

Longer term NVIDIA also sees the GTX Titan X as their most potent card for VR headsets, and they made sure that GTX Titan X was on the show floor at GDC to drive a few of the major VR demos. Certainly VR will take just about whatever rendering power you can throw at it, if only in the name of reducing rendering latency. But overall we’re still very early in the game, especially with commercial VR headsets still being in development.

Finally, speaking of the long term, I wanted to hit upon the subject of the GTX Titan X’s 12GB of VRAM. With most other Maxwell cards already using 4Gb VRAM chips, the inclusion of 12GB of VRAM in NVIDIA’s flagship card was practically a given, especially since it doubles the 6GB of VRAM the original GTX Titan came with. At the same time however I’m curious to see just how long it takes for games to grow into this space. The original GTX Titan was fortunate enough to come out with 6GB right before the current-generation consoles launched, and with them their 8GB memory configurations, leading to a rather sudden jump in VRAM requirements that the GTX Titan was well positioned to handle. Much like 6GB in 2013, 12GB is overkill in 2015, but unlike the original GTX Titan I suspect 12GB will remain overkill for a much longer period of time, especially without a significant technology bump like the consoles to drive up VRAM requirements.

276 Comments

looncraz - Tuesday, March 17, 2015 - link

If the most recent slides (allegedly leaked from AMD) hold true, the 390x will be at least as fast as the Titan X, though with only 8GB of RAM (but HBM!).

A straight 4096SP GCN 1.2/3 GPU would be a close match-up already, but any other improvements made along the way will potentially give the 390X a fairly healthy launch-day lead.

I think nVidia wanted to keep AMD in the dark as much as possible so that they could not position themselves to take more advantage of this, but AMD decided to hold out, apparently, until May/June (even though they apparently already have some inventory on hand) rather than give nVidia a chance to revise the Titan X before launch.

nVidia blinked, it seems, after it became apparent AMD was just going to wait out the clock with their current inventory.

zepi - Wednesday, March 18, 2015 - link

Unless AMD has achieved considerable increase in perf/w, they are going to have really hard time tuning those 4k shaders to a reasonable frequency without being a 450W card.

Not that being a 500W is necessarily a deal breaker for everyone, but in practice cooling a 450W card without causing ear shattering level of noise is very difficult compared to cooling a 250W card.

Let us wait and hope, since AMD really would need to get a break and make some money on this one...

looncraz - Wednesday, March 18, 2015 - link

Very true. We know that with HBM there should already be a fairly beefy power savings (~20-30W vs 290X it seems).

That doesn't buy them room for 1,280 more SPs, of course, but it should get them a healthy 256 of them. Then, GCN 1.3 vs 1.1 should have power advantages as well. GCN 1.2 vs 1.0 (R9 285 vs R9 280) with 1792 SPs showed a 60W improvement, if we assume GCN 1.1 to GCN 1.3 shows a similar trend the 390X should be pulling only about 15W more than the 290X with the rumored specs without any other improvements.

Of course, the same math says the 290X should be drawing 350W, but that's because it assumes all the power is in the SPs... But I do think it reveals that AMD could possibly do it without drawing much, if any, more power without making any unprecedented improvements.

Braincruser - Wednesday, March 18, 2015 - link

Yeah, but the question is, How well will the memory survive on top of a 300W GPU?

Because the first part in a graphic card to die from high temperatures is the VRAM.

looncraz - Thursday, March 19, 2015 - link

It will be to the side, on a 2.5d interposer, I believe.

GPU thermal energy will move through the path of least resistance (technically, to the area with the greatest deltaT, but regulated by the material thermal conductivity coefficient), which should be into the heatsink or water block. I'm not sure, but I'd think the chips could operate in the same temperature range as the GPU, but maybe not. It may be necessary to keep them thermally isolated. Which shouldn't be too difficult, maybe as simple as not using thermal pads at all for the memory and allowing them to passively dissipate heat (or through interposer mounted heatsinks).

It will be interesting to see what they have done to solve the potential issues, that's for sure.

Xenonite - Thursday, March 19, 2015 - link

Yes, I agree that AMD would be able to absolutely destroy NVIDIA on the performance front if they designed a 500W GPU and left the PCB and waterblock design to their AIB partners.

I would also absolutely love to see what kind of performance a 500W or even a 1kW graphics card would be able to muster; however, since a relatively constant 60fps presented with less than about 100ms of total system latency has been deemed sufficient for a "smooth and responsive" gaming experience, I simply can't imagine such a card ever seeing the light of day.

And while I can understand everyone likes to pretend that they are saving the planet with their <150W GPUs, the argument that such a TDP would be very difficult to cool does not really hold much water IMHO.

If, for instance, the card was designed from the ground up to dissipate its heat load over multiple 200W~300W GPUs, connected via a very-high-speed, N-directional data interconnect bus, the card could easily and (most importantly) quietly be cooled with chilled-watercooling dissipating into a few "quad-fan" radiators. Practically, 4 GM200-size GPUs could be placed back-to-back on the PCB, with each one rendering a quarter of the current frame via shared, high-speed frame buffers (thereby eliminating SLI-induced microstutter and "frame-pacing" lag). Cooling would then be as simple as installing 4 standard gpu-watercooling loops with each loop's radiator only having to dissipate the TDP of a single GPU module.

naxeem - Tuesday, March 24, 2015 - link

They did solve that problem with a water-cooling solution. 390X WCE is probably what we'll get.

ShieTar - Wednesday, March 18, 2015 - link

Who says they don't allow it? EVGA have already announced two special models, a superclocked one and one with a watercooling-block: http://eu.evga.com/articles/00918/EVGA-GeForce-GTX...

Wreckage - Tuesday, March 17, 2015 - link

If by fast you mean June or July. I'm more interested in a 980ti so I don't need a new power supply.

ArmedandDangerous - Saturday, March 21, 2015 - link

There won't ever be a 980 Ti if you understand Nvidia's naming schemes. Ti's are for unlocked parts, there's nothing to further unlock on the 980 GM204.