The NVIDIA GeForce GTX Titan X Review

by Ryan Smith on March 17, 2015 3:00 PM EST

Compute

Shifting gears, we have our look at compute performance.

As we outlined earlier, GTX Titan X is not the same kind of compute powerhouse that the original GTX Titan was. Make no mistake, at single precision (FP32) compute tasks it is still a very potent card, which for consumer level workloads is generally all that will matter. But for pro-level double precision (FP64) workloads the new Titan lacks the high FP64 performance of the old one.

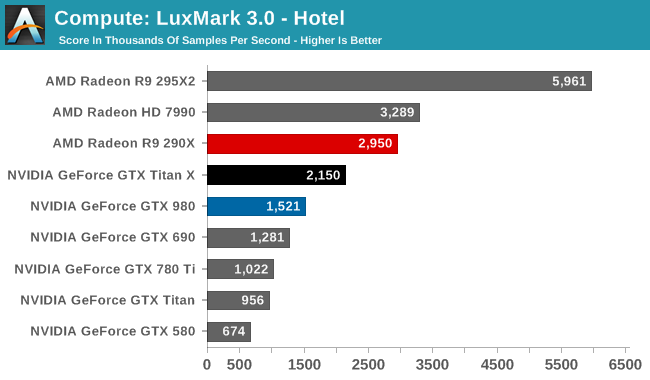

Starting us off for our look at compute is LuxMark 3.0, the latest version of the official benchmark of LuxRender 2.0. LuxRender’s GPU-accelerated rendering mode is an OpenCL-based ray tracer that forms a part of the larger LuxRender suite. Ray tracing has become a stronghold for GPUs in recent years as ray tracing maps well to GPU pipelines, allowing artists to render scenes much more quickly than with CPUs alone.

While AMD and NVIDIA were fairly close in LuxMark 2.0 post-Maxwell, the recently released LuxMark 3.0 finds NVIDIA trailing AMD once more. GTX Titan X sees a better-than-average 41% performance increase over the GTX 980 (owing to its ability to stay at its max boost clock on this benchmark), but it’s not enough to dethrone the Radeon R9 290X. Even though GTX Titan X packs a lot of performance on paper, and can more than deliver it in graphics workloads, as we can see, compute workloads are still highly variable.

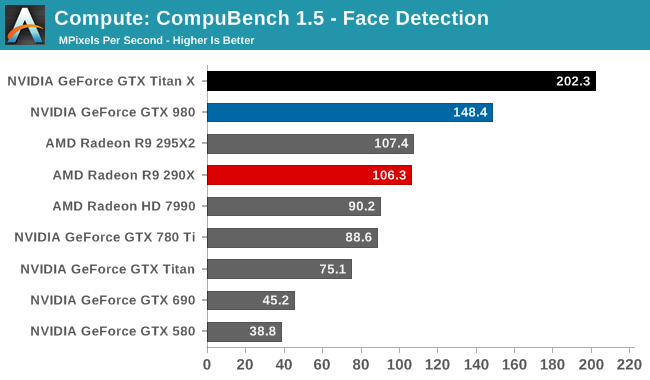

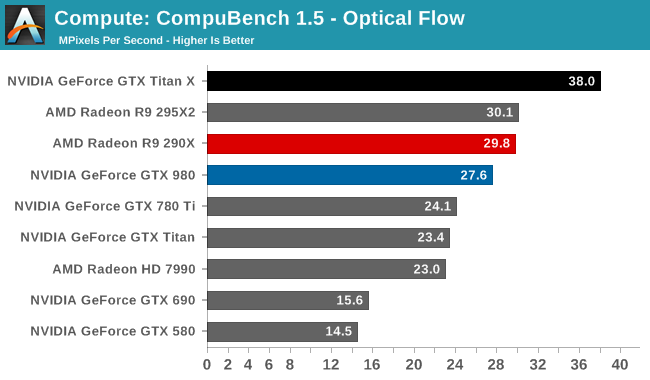

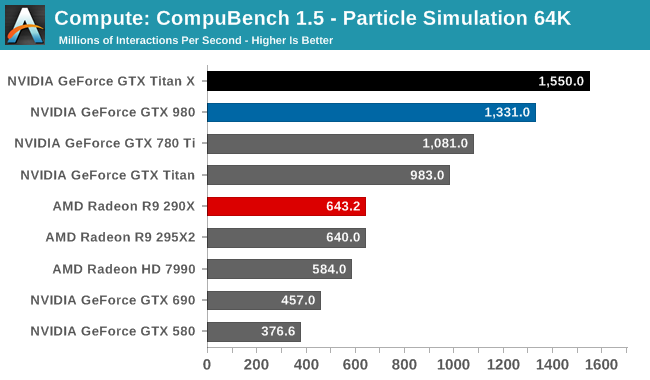

For our second set of compute benchmarks we have CompuBench 1.5, the successor to CLBenchmark. CompuBench offers a wide array of different practical compute workloads, and we’ve decided to focus on face detection, optical flow modeling, and particle simulations.

Although GTX Titan X struggled at LuxMark, the same cannot be said for CompuBench. Though the lead varies with the specific sub-benchmark, in every case the latest Titan comes out on top. Face detection in particular shows some massive gains, with GTX Titan X more than doubling the GK110-based GTX 780 Ti's performance.

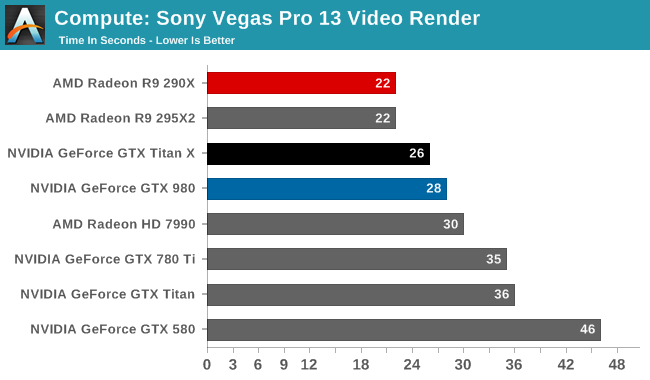

Our 3rd compute benchmark is Sony Vegas Pro 13, an OpenGL and OpenCL video editing and authoring package. Vegas can use GPUs in a few different ways, the primary uses being to accelerate the video effects and compositing process itself, and in the video encoding step. With video encoding being increasingly offloaded to dedicated DSPs these days, we’re focusing on the editing and compositing process, rendering to a low CPU overhead format (XDCAM EX). This specific test comes from Sony, and measures how long it takes to render a video.

Vegas has traditionally been a benchmark that favors AMD, and while GTX Titan X closes the gap somewhat, it's still not enough to surpass the R9 290X.

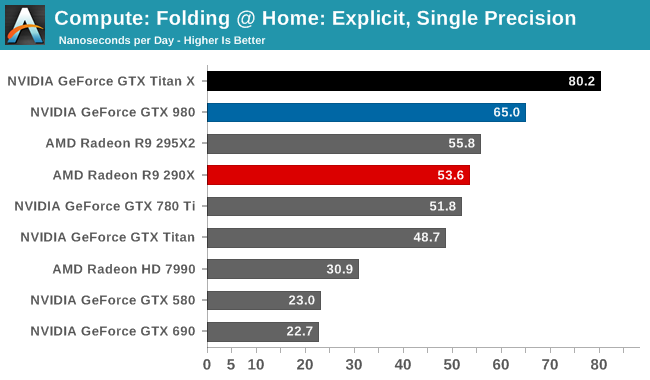

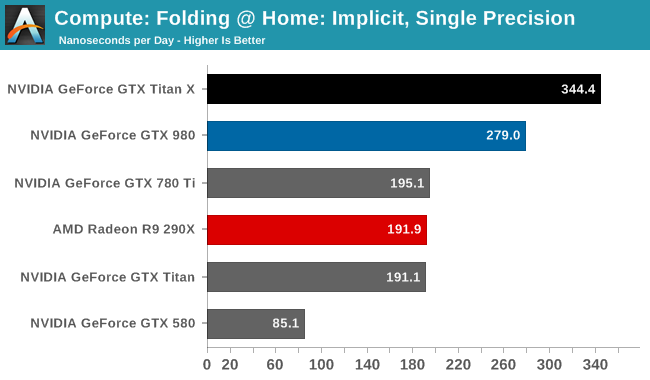

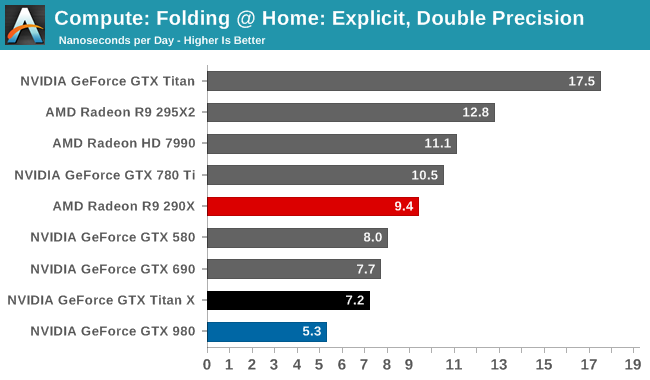

Moving on, our 4th compute benchmark is FAHBench, the official Folding @ Home benchmark. Folding @ Home is the popular Stanford-backed research and distributed computing initiative that has work distributed to millions of volunteer computers over the internet, each of which is responsible for a tiny slice of a protein folding simulation. FAHBench can test both single precision and double precision floating point performance, with single precision being the most useful metric for most consumer cards due to their low double precision performance. Each precision has two modes, explicit and implicit, the difference being whether water atoms are included in the simulation, which adds quite a bit of work and overhead. This is another OpenCL test, utilizing the OpenCL path for FAHCore 17.

Folding @ Home’s single precision tests reiterate just how powerful GTX Titan X can be at FP32 workloads, even if it’s ostensibly a graphics GPU. With a 50-75% lead over the GTX 780 Ti, the GTX Titan X showcases some of the remarkable efficiency improvements that the Maxwell GPU architecture can offer in compute scenarios, and in the process shoots well past the AMD Radeon cards.

On the other hand, with a native FP64 rate of 1/32, the GTX Titan X flounders at double precision. There is no better example of just how much the GTX Titan X and the original GTX Titan differ in their FP64 capabilities than this graph; the GTX Titan X can’t beat the GTX 580, never mind the chart-topping original GTX Titan. FP64 users looking for an entry level FP64 card would be well advised to stick with the GTX Titan Black for now. The new Titan is not the prosumer compute card that the old Titan was.
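To put the 1/32 rate in perspective, here is a rough sketch of the arithmetic behind theoretical peak throughput. The core count (3072) and base clock (1000 MHz) used below are assumptions brought in for illustration and are not stated on this page; the helper name `peak_gflops` is likewise hypothetical. Treat the result as a paper figure under those assumptions, not a measured number.

```python
def peak_gflops(cores: int, clock_mhz: float, fp64_ratio: float = 1.0) -> float:
    """Theoretical peak in GFLOPS.

    Counts 2 floating point operations per core per clock (one fused
    multiply-add), then scales by the FP64:FP32 rate ratio.
    """
    return cores * 2 * clock_mhz / 1000.0 * fp64_ratio


# Assumed GTX Titan X figures (not given in this section of the review).
TITAN_X_CORES = 3072
TITAN_X_BASE_MHZ = 1000.0

fp32 = peak_gflops(TITAN_X_CORES, TITAN_X_BASE_MHZ)           # full-rate FP32
fp64 = peak_gflops(TITAN_X_CORES, TITAN_X_BASE_MHZ, 1 / 32)   # 1/32 native FP64 rate

print(f"FP32 peak: {fp32:.0f} GFLOPS")
print(f"FP64 peak: {fp64:.0f} GFLOPS")
```

Under these assumptions the FP64 peak works out to 1/32 of the FP32 figure, which is the gap the double precision results above reflect.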

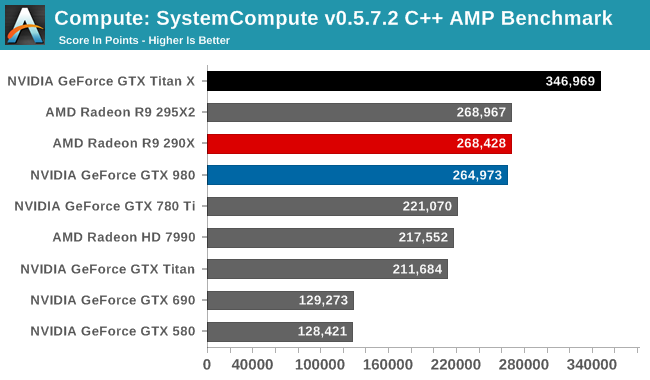

Wrapping things up, our final compute benchmark is an in-house project developed by our very own Dr. Ian Cutress. SystemCompute is our first C++ AMP benchmark, utilizing Microsoft’s simple C++ extensions to allow the easy use of GPU computing in C++ programs. SystemCompute in turn is a collection of benchmarks for several different fundamental compute algorithms, with the final score represented in points. DirectCompute is the compute backend for C++ AMP on Windows, so this forms our other DirectCompute test.

With the GTX 980 already performing well here, the GTX Titan X takes it home, improving on the GTX 980 by 31%. Whereas GTX 980 could only hold even with the Radeon R9 290X, the GTX Titan X takes a clear lead.

Overall, then, the new GTX Titan X can still be a force to be reckoned with in compute scenarios, but only when the workloads are FP32. Users accustomed to the original GTX Titan’s FP64 performance, on the other hand, will find that this is a very different card, one that doesn’t live up to the same standards.

276 Comments

Jdubo - Thursday, March 19, 2015 - link

290x was the original Titan killer. Not only did it kill the original release but killed its over-inflated price as well. I suspect the next reiteration of AMD flagship card will be Titan X killer as well. History usually repeats itself over and over again.

jay401 - Thursday, March 19, 2015 - link

You say this is not the same type of pro-sumer card as the previous Titan yet the price is the same. No thanks.

Ballist1x - Thursday, March 19, 2015 - link

No gtx970/970 sli in the review;) Anand you let the consumers down...

H3ld3r - Thursday, March 19, 2015 - link

R9 290x only haves 4Gb at 5ghz and does a awsome job at 4k. the 295 only operates with 4Gb the other 4 are mirrored and shines in 4k. So i can't understand everybody concerns with 4k gaming with upcoming fiji. This Titan X has 12GB at 7Ghz and only shows how gddr5 is obsolete.

oranos - Friday, March 20, 2015 - link

The ratio of potential buyers to comments on this article is atronomical.

leignheart - Friday, March 20, 2015 - link

Hello everyone, I would like you to read the final words on the Titan X. It says the performance increase over a single gtx 980 is 33%, except the price is 100% over the gtx 980. If you are lucky enough to pay just 1000$ for the Titan X. Please people do not waste your money on this card. If you do then Nvidia will keep releasing Extremely overpriced cards. DO NOT BUY THIS CARD. Please instead wait for the gtx 980 TI if you want dx12. I will certainly pay 1 grand and more for a card, but this card is a particular rip off at that price point. Don't just throw your money away. Read the performance chart yourself, it is in no way shape or form worth 1000$.

Dug - Monday, March 30, 2015 - link

I suppose we can't buy a Rolex, Tesla, a vacation condo, or even a pony? Paying for the best available is always more money. Get a job where another $500 doesn't affect you when you purchase something. Plus price is only perception on worth. People could say $20 is too much for a video card and they would be right.

themac79 - Friday, March 20, 2015 - link

I wish they would have thrown in 780sli, which is what I run. I would like to have more VRAM, but I'm running all the new games pretty much maxed out. I made the mistake of buying them when they first came out and payed over $600 a piece. I will definitely wait for price drops this time.

H3ld3r - Friday, March 20, 2015 - link

You need is more transistors, memory speed, stream processors, bus, rops, tmu's not memory amount

Archetype - Friday, March 20, 2015 - link

4K gaming not quite there yet. Not going to pay $500+ for it. And in the mean time still jamming Full HD games like a baws using my old 280X "on my Full HD monitor".