The NVIDIA GeForce GTX Titan X Review

by Ryan Smith on March 17, 2015 3:00 PM EST

Civilization: Beyond Earth

Shifting gears from action to strategy, we have Civilization: Beyond Earth, the latest in the Civilization series of strategy games. Civilization is not quite as GPU-demanding as some of our action games, but at Ultra quality it can still pose a challenge for even high-end video cards. Meanwhile, as the first Mantle-enabled strategy title, Civilization gives us an interesting look into low-level API performance on larger scale games, along with a look at developer Firaxis’s use of split frame rendering with Mantle to reduce latency rather than improve framerates.

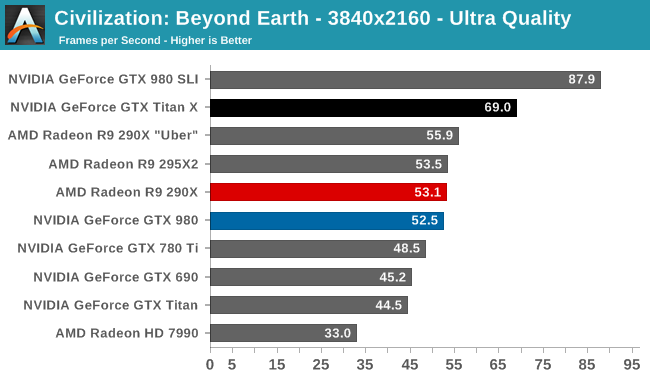

Though not as intricate as Crysis 3 or Shadow of Mordor, Civilization still requires a very powerful GPU to run it at 4K if you want to hit 60fps. In fact, of our single-GPU configurations, the GTX Titan X is the only card to crack 60fps, delivering 69fps at the game’s most extreme setting. This is once again well ahead of the GTX 980, which it beats by 31% at 4K, and 40%+ ahead of the GK110 cards. On the other hand, this is the closest AMD’s R9 290XU will get, with the GTX Titan X only beating it by 23% at 4K.
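For readers who want to check the percentages, here is a minimal Python sketch of the arithmetic behind a "beats it by N%" figure. Only the 69fps result and the 31% and 23% leads come from the text above; the function names are hypothetical, and the framerates it backs out for the other cards are implied by those rounded percentages rather than measured values from the charts.

```python
def percent_lead(fps_a: float, fps_b: float) -> float:
    """Return how far fps_a is ahead of fps_b, in percent."""
    return (fps_a / fps_b - 1.0) * 100.0


def implied_baseline(fps_a: float, lead_percent: float) -> float:
    """Back out the slower card's framerate from a quoted percentage lead."""
    return fps_a / (1.0 + lead_percent / 100.0)


# Quoted in the text: GTX Titan X at 4K, most extreme setting.
titan_x_4k = 69.0

# Implied by the quoted leads (assumption: not read off the charts,
# and only approximate because the percentages are rounded).
gtx_980_4k = implied_baseline(titan_x_4k, 31.0)
r9_290xu_4k = implied_baseline(titan_x_4k, 23.0)

print(f"Implied GTX 980:   {gtx_980_4k:.1f} fps")
print(f"Implied R9 290XU:  {r9_290xu_4k:.1f} fps")
print(f"Round trip check:  {percent_lead(titan_x_4k, gtx_980_4k):.1f}%")
```

The lead is computed relative to the slower card, so a 31% lead means the Titan X delivers 1.31 times the GTX 980's framerate, not that the GTX 980 is 31% slower.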

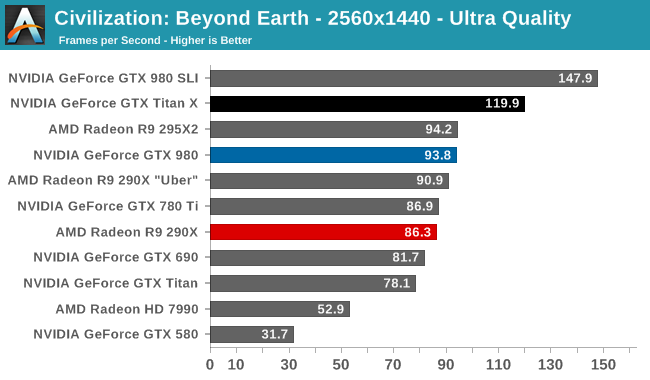

Meanwhile at 1440p it’s entirely possible to play Civilization at 120fps, making it one of a few games where the GTX Titan X can keep up with high refresh rate 1440p monitors.

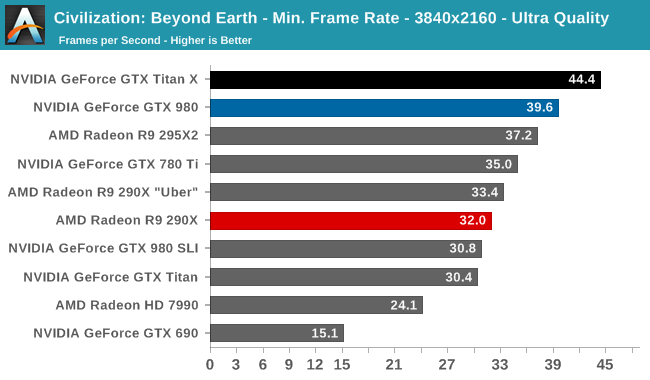

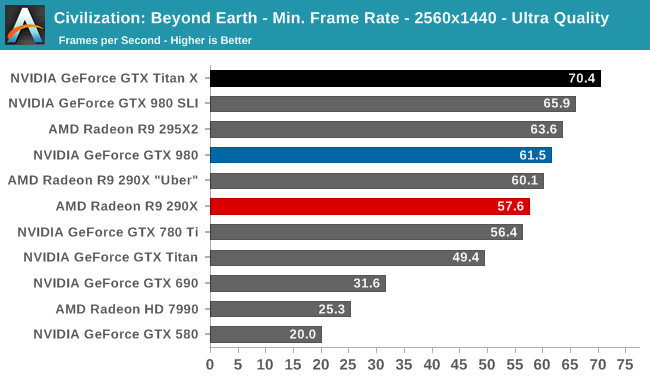

When it comes to minimum framerates the GTX Titan X doesn’t dominate quite like it does at average framerates, but it still handily takes the top spot. Even at its worst, the GTX Titan X can still deliver 44fps at 4K under Civilization.

276 Comments

chizow - Wednesday, March 18, 2015 - link

And custom-cooled, higher clocked cards should? It took months for AMD to bring those to market and many of them cost more than the original reference cards and are also overclocked.

http://www.newegg.com/Product/ProductList.aspx?Sub...

Like I said, AMD fanboys made this bed, time to lie in it.

Witchunter - Wednesday, March 18, 2015 - link

I hope you do realize calling out AMD fanboys in each and every one of your comments essentially paints you as Nvidia fanboy in the eyes of other readers. I'm here to read some constructive comments and all I see is you bitching about fanboys and being one yourself.

chizow - Wednesday, March 18, 2015 - link

@Witchunter, the difference is, I'm not afraid to admit I'm a fan of the best, but I'm going to at least be consistent on my views and opinions. Whereas these AMD fanboys are crying foul for the same thing they threw a tantrum over a few years ago, ultimately leading to this policy to begin with. You don't find that ironic, that what they were crying about 4 years ago is suddenly a problem when the shoe is on the other foot? Maybe that tells you something about yourself and where your own biases reside? :)

Crunchy005 - Wednesday, March 18, 2015 - link

@chizow either way you don't really offer constructive criticism and you call people dishonest without proving them wrong in any way and offering facts. You are one of the biggest fanboys out there and it kind of makes you lose credibility.

Crunchy005 - Wednesday, March 18, 2015 - link

Ok wanted to add to this, I do like some of the comments you make but you are so fan boyish I am unable to take much stock in what you say. If you could offer more facts and stop just bashing AMD and praising the all powerful Nvidia is better in every way, despite the fact that AMD has advantages and has outperformed Nvidia in many ways, so has Nvidia outperformed AMD, they leap frog...if you did that we might all like to hear what you have to say.

FlushedBubblyJock - Thursday, April 2, 2015 - link

I know what the truth is so I greatly enjoy what he says. If you can't handle the truth, that should be your problem, not everyone else's, obviously.

chizow - Monday, March 23, 2015 - link

Like I said, I'm not here to sugarcoat things or keep it constructive, I'm here to set the record straight and keep the discussion honest. If that involves bruising some fragile AMD fanboy egos and sensibilities, so be it.

I'm completely comfortable in my own skin knowing I'm a fan of the best, and that just happens to be Nvidia for graphics cards for the last near-decade since G80, and I'm certainly not afraid to tell you why that's the case backed with my usual facts, references etc. etc. You're free to verify my sources and references if you like to come to your own conclusion, but at the end of the day, that's the whole point of the internet, isn't it? Lay out the facts, let informed people make their own conclusions?

In any case, the entire discussion and you can be the judge of whether my take on the topic is fair, you can clearly see, AMD fanboys caused this dilemma for themselves, many of which are the ones you see crying in this thread. Cue that Alanis Morissette song....

http://anandtech.com/comments/3987/amds-radeon-687...

http://anandtech.com/show/3988/the-use-of-evgas-ge...

Phartindust - Wednesday, March 18, 2015 - link

Um, AMD doesn't manufacture after market cards.

dragonsqrrl - Tuesday, March 17, 2015 - link

"use less power"...right, and why would these non reference cards consume less power? Just hypothetically speaking, ignoring for a moment all the benchmarks out there that suggest otherwise.

squngy - Tuesday, March 17, 2015 - link

Undervolting?