NVIDIA Mobile Overclocking - There and Back Again

by Jarred Walton on February 20, 2015 2:25 PM EST - Posted in Laptops, Gaming, NVIDIA, Overclocking, Notebooks

The past few months have been a bit interesting on the mobile side of the fence for NVIDIA. Starting with the R346 drivers (347.09 beta and later), NVIDIA apparently decided to lock down overclocking of their mobile GPUs. While this is basically a non-issue for the vast majority of users, for the vocal minority of enthusiasts the reaction has been understandably harsh. Accusations have ranged from “bait and switch” (e.g. selling a laptop GPU that could be overclocked and then removing that “feature”) to “this is what happens when there’s no competition”, and everything in between.

NVIDIA for their part has had a few questionable posts as well, at one point stating that overclocking was just “a bug introduced into our drivers” – a bug that has apparently been around for how many years now? And with a 135MHz overclocking limit no less…. But there’s light at the end of the tunnel, as NVIDIA has now posted that they will be re-enabling overclocking with their next driver update, due in March. So that’s the brief history, but let’s talk about a few other aspects of what all of this means.

First and foremost, anyone who claims enabling/disabling overclocking of mobile GPUs is going to have a huge impact on NVIDIA’s bottom line is, in my view, spouting hyperbole and trying to create a lot of drama. I understand there are people who want this feature, and that’s fine, but for every person who looks at overclocking a mobile GPU there are going to be 100 more (1000 more?) who never give overclocking a first thought, let alone a second one. And for many of those people, disabling overclocking entirely isn't really a bad idea – a way to protect the user from themselves, basically. I also don’t think that removing overclocking was ever done due to the lack of competition, though it might have had a small role to play. At most, I think NVIDIA might have disabled overclocking because it’s a way to keep people from effectively turning a GTX 780M into a GTX 880M, or the current GTX 980M into a… GTX 1080M (or whatever they call the next version).

NVIDIA’s story carries plenty of weight with me, as I’ve been reviewing and helping people with laptops for over a decade. Their initial comment was, “Overclocking is by no means a trivial feature, and depends on thoughtful design of thermal, electrical, and other considerations. By overclocking a notebook, a user risks serious damage to the system that could result in non-functional systems, reduced notebook life, or many other effects.” This is absolutely true, and I’ve seen plenty of laptops where the GPU has failed after 2-3 years of use, and that’s without overclocking. I've also seen a few GTX 780M notebooks where running at stock speeds isn't 100% stable, especially for prolonged periods of time. Sometimes it’s possible to fix the problem; many people simply end up buying a new laptop and moving on, disgruntled at the OEM for building a shoddy notebook.

For users taking something like a Razer Blade and trying to overclock the GPU, I also think pushing the limits of the hardware beyond what the OEM certified is just asking for trouble. Gaming GPUs and “thin and light” are generally at opposite ends of the laptop spectrum, and in our experience the laptops can already get pretty toasty while gaming. So if you have a laptop that is already nearing the throttling point, overclocking the GPU is going to increase the likelihood of throttling and could even damage the hardware. Again, I’ve seen enough failed laptops that there’s definitely an element of risk – many laptops seem to struggle to run reliably for more than 2-3 years under frequent gaming workloads, so increasing the cooling demands is just going to exacerbate the problem.

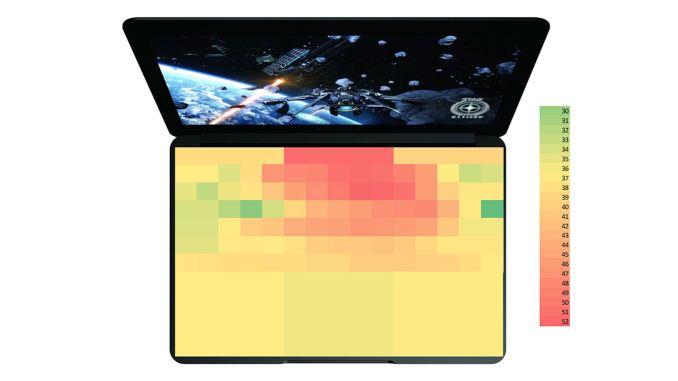

On the other hand, if you have a large gaming notebook with a lot of cooling potential and the system generally doesn't get hot, sure, it’s nice to be able to push the hardware a bit further if you want. Built-in throttling features should also protect the hardware from any immediate damage. We don’t normally investigate overclocking potential on notebooks as it can vary even between units of the same model, and in many cases it voids the warranty, but enthusiasts are a breed apart. My personal opinion is that for a gaming laptop, you should try to keep GPU temperatures under 85C to be safe (which is what most OEMs tend to target); when laptops exceed that “safe zone” (with or without overclocking), I worry about the long-term reliability prospects. If you have a GPU that’s running at 70C under load, however, you can probably reliably run the clocks at the maximum +135MHz that NVIDIA allows.
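The rule of thumb above can be turned into a rough calculation. The sketch below is only an illustration: the 85C ceiling, the 70C "cool" case, and the +135MHz cap come from the article, but the straight-line scaling between 70C and 85C and the function name `suggested_offset_mhz` are assumptions made for this example, not anything NVIDIA or an OEM publishes.

```python
# Hypothetical helper: maps a stock-clock load temperature to a cautious
# starting point for a GPU core offset. The linear ramp is an assumption.

MAX_OFFSET_MHZ = 135  # maximum offset NVIDIA's mobile drivers allow (per the article)
SAFE_LIMIT_C = 85     # author's suggested long-term temperature ceiling
COOL_LOAD_C = 70      # load temperature at which the full offset is probably fine


def suggested_offset_mhz(load_temp_c: float) -> int:
    """Return a conservative core clock offset in MHz for a given load temperature."""
    if load_temp_c <= COOL_LOAD_C:
        # Plenty of thermal headroom: the full allowed offset is a reasonable target.
        return MAX_OFFSET_MHZ
    if load_temp_c >= SAFE_LIMIT_C:
        # Already at or past the "safe zone": do not overclock at all.
        return 0
    # Assumed: scale the offset linearly with the remaining headroom.
    headroom = (SAFE_LIMIT_C - load_temp_c) / (SAFE_LIMIT_C - COOL_LOAD_C)
    return round(MAX_OFFSET_MHZ * headroom)


if __name__ == "__main__":
    for temp in (65, 70, 78, 85, 90):
        print(f"{temp}C under load -> start at or below +{suggested_offset_mhz(temp)}MHz")
```

Treat the result as a starting point at most. The input is the temperature measured at stock clocks, and any overclock will raise it, so the temperature should be checked again under load after each change.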

We’re currently in the process of testing a couple of gaming notebooks, and given the timing, we’re going to use it as an opportunity to try some overclocking – with the older 344.75 drivers for now. We’ll have a separate article digging into the overclocking results later, but again I’d urge users to err on the side of caution rather than trying to redline their mobile GPUs. What that means in practice is that mobile GPU overclocking is mostly going to be of use for people with larger gaming notebooks – generally the high-end Alienware, ASUS, Clevo, and MSI models. There may be other laptops where you can squeeze out some extra performance (e.g. some models with GTX 850M/860M, or maybe even some older GT 750M laptops), but keep an eye on the thermals if you want to go that route.

36 Comments

Khenglish - Friday, February 20, 2015

That's the way it used to work, and I hope they revert to that model.

Cakefish - Saturday, February 21, 2015

I agree!

ExarKun333 - Friday, February 20, 2015

This is really an uninformed post. Lots of people happily game on a laptop, and GPU matters there just as it does on a desktop machine. An extra 10-20% is just that, more performance. That may mean enabling MSAA or hitting more acceptable frame-rates on newer games. For laptops that can be configured with dual GPUs, overclocking a single GPU (or lesser SKU) is essentially no problem at all. Jarred's comments on not attempting this on a thin and light laptop make a lot of sense. In the end, thermal throttling pretty much prevents failures or the like. So why not try, assuming you have a non-compact model?

Meaker10 - Saturday, February 21, 2015

980M SLI tweaked goes toe to toe with a 970 desktop SLI system, except there is no 3.5GB issue and you actually have 8GB of VRAM.

Cakefish - Saturday, February 21, 2015

There's a reason why laptops exist. Some people literally cannot get a desktop (like me) because of space and portability requirements. However, those people also desire the highest performance possible.

whyso - Friday, February 20, 2015

Overclocking without touching the voltage is pretty much harmless. Considering you cannot touch the voltage... should have no problem.

HighTech4US - Friday, February 20, 2015

Harmless you say!!! Where exactly does the extra (maybe even excessive) heat go? You do know that upping the frequency does up the heat.

And excessive heat will kill GPUs and CPUs over time.

Alexvrb - Friday, February 20, 2015

If they didn't have power and thermal throttling, you'd be right. But if you're not allowed to tamper with power targets, thermal targets, and voltage (like you can on a desktop)? There's really not too much harm in a slight overclock. You might have less consistent performance though, if you have it banging against the limit a lot. So you should only overclock as much as your cooling can handle (which likely means a very mild overclock).

Cakefish - Saturday, February 21, 2015

That's why throttling exists. They will throttle before they reach danger levels.

JarredWalton - Saturday, February 21, 2015

Maybe. In three years, a GPU that routinely hits 85C (and then throttles a bit) is more likely to have problems than a GPU that stays at 75C and never throttles. Is it enough of a difference to matter? Probably not, as in three years the GPU will be getting old regardless.