Samsung SM951 (512GB) PCIe SSD Review

by Kristian Vättö on February 24, 2015 8:00 AM EST

AnandTech Storage Bench - The Destroyer

The Destroyer has been an essential part of our SSD test suite for nearly two years now. It was crafted to provide a benchmark for very IO intensive workloads, which is where you most often notice the difference between drives. It's not necessarily the most relevant test to an average user, but for anyone with a heavier IO workload The Destroyer should do a good job at characterizing performance.

AnandTech Storage Bench - The Destroyer

| Workload | Description | Applications Used |
| --- | --- | --- |
| Photo Sync/Editing | Import images, edit, export | Adobe Photoshop CS6, Adobe Lightroom 4, Dropbox |
| Gaming | Download/install games, play games | Steam, Deus Ex, Skyrim, Starcraft 2, BioShock Infinite |
| Virtualization | Run/manage VM, use general apps inside VM | VirtualBox |
| General Productivity | Browse the web, manage local email, copy files, encrypt/decrypt files, backup system, download content, virus/malware scan | Chrome, IE10, Outlook, Windows 8, AxCrypt, uTorrent, AdAware |
| Video Playback | Copy and watch movies | Windows 8 |
| Application Development | Compile projects, check out code, download code samples | Visual Studio 2012 |

The table above describes the workloads of The Destroyer in a bit more detail. Most of the workloads are run independently in the trace, but obviously there are various operations (such as backups) in the background.

AnandTech Storage Bench - The Destroyer - Specs

| Specification | Value |
| --- | --- |
| Reads | 38.83 million |
| Writes | 10.98 million |
| Total IO Operations | 49.8 million |
| Total GB Read | 1583.02 GB |
| Total GB Written | 875.62 GB |
| Average Queue Depth | ~5.5 |
| Focus | Worst case multitasking, IO consistency |

The name Destroyer comes from the sheer fact that the trace contains nearly 50 million IO operations. That's enough IO operations to effectively put the drive into steady-state and give an idea of the performance in worst case multitasking scenarios. About 67% of the IOs are sequential in nature with the rest ranging from pseudo-random to fully random.
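The totals in the specs table also imply an average transfer size for reads and writes. The sketch below is only a back-of-the-envelope calculation: it assumes that "GB" means decimal gigabytes (10^9 bytes) and reports sizes in binary kilobytes, neither of which the article states, and the helper name `average_io_kb` is made up for illustration.

```python
# Figures taken from the specs table above.
READS = 38.83e6        # number of read IOs
WRITES = 10.98e6       # number of write IOs
GB_READ = 1583.02      # total data read
GB_WRITTEN = 875.62    # total data written

BYTES_PER_GB = 10**9   # assumption: decimal gigabytes (not stated in the article)
BYTES_PER_KB = 1024    # assumption: binary kilobytes, as in "4KB" IO sizes


def average_io_kb(total_gb, io_count):
    """Average transfer size in KB for a given data total and IO count."""
    return total_gb * BYTES_PER_GB / io_count / BYTES_PER_KB


avg_read_kb = average_io_kb(GB_READ, READS)
avg_write_kb = average_io_kb(GB_WRITTEN, WRITES)
read_share = READS / (READS + WRITES)

print(f"average read size:  {avg_read_kb:.1f} KB")
print(f"average write size: {avg_write_kb:.1f} KB")
print(f"reads as share of all IOs: {read_share:.1%}")
```

Under those assumptions the average read works out to roughly 40KB and the average write to roughly 78KB, with reads making up a little under four fifths of all IOs.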

AnandTech Storage Bench - The Destroyer - IO Breakdown

| IO Size | <4KB | 4KB | 8KB | 16KB | 32KB | 64KB | 128KB |
| --- | --- | --- | --- | --- | --- | --- | --- |
| % of Total | 6.0% | 26.2% | 3.1% | 2.4% | 1.7% | 38.4% | 18.0% |

I've included a breakdown of the IOs in the table above, which accounts for 95.8% of the total IOs in the trace. The leftover IOs are relatively rare in-between sizes that don't have a significant (>1%) share on their own. Over half of the transfers are large IOs (64KB and 128KB), while about one fourth are 4KB in size.
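The shares quoted in this paragraph can be checked directly against the table. This is a minimal sketch; treating 64KB and 128KB as the "large" IOs is an assumption, since the article does not define the cutoff.

```python
# Percentages from the IO breakdown table above.
io_share = {
    "<4KB": 6.0,
    "4KB": 26.2,
    "8KB": 3.1,
    "16KB": 2.4,
    "32KB": 1.7,
    "64KB": 38.4,
    "128KB": 18.0,
}

listed = sum(io_share.values())                  # share covered by the table
unlisted = 100.0 - listed                        # rare in-between sizes
large = io_share["64KB"] + io_share["128KB"]     # assumption: "large" means 64KB and up
small = io_share["<4KB"] + io_share["4KB"]       # 4KB and smaller

print(f"listed sizes:   {listed:.1f}%")
print(f"unlisted sizes: {unlisted:.1f}%")
print(f"64KB + 128KB:   {large:.1f}%")
print(f"4KB and below:  {small:.1f}%")
```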

AnandTech Storage Bench - The Destroyer - QD Breakdown

| Queue Depth | 1 | 2 | 3 | 4-5 | 6-10 | 11-20 | 21-32 | >32 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| % of Total | 50.0% | 21.9% | 4.1% | 5.7% | 8.8% | 6.0% | 2.1% | 1.4% |

Despite the average queue depth of 5.5, half of the IOs happen at a queue depth of one, and scenarios where the queue depth is higher than 10 are rather infrequent.
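To put numbers on "rather infrequent", the queue depth table can be turned into a running total. This is a small sketch using only the table's percentages; the final bucket is read as 1.4%, which makes the row sum to 100%.

```python
from itertools import accumulate

# (queue depth bucket, % of total IOs) from the QD breakdown table above.
qd_share = [
    ("1", 50.0),
    ("2", 21.9),
    ("3", 4.1),
    ("4-5", 5.7),
    ("6-10", 8.8),
    ("11-20", 6.0),
    ("21-32", 2.1),
    (">32", 1.4),   # assumption: the table's last entry is a percentage
]

labels = [label for label, _ in qd_share]
cumulative = list(accumulate(share for _, share in qd_share))

# Running share of IOs issued at or below each bucket.
for label, total in zip(labels, cumulative):
    print(f"QD {label:>5}: cumulative {total:5.1f}%")

# Buckets 11-20, 21-32 and >32 together form the "higher than 10" tail.
above_10 = sum(share for _, share in qd_share[5:])
print(f"IOs at QD > 10: {above_10:.1f}%")
```

Read this way, a bit over 70% of the IOs are issued at a queue depth of one or two, and a little under 10% at a queue depth above 10.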

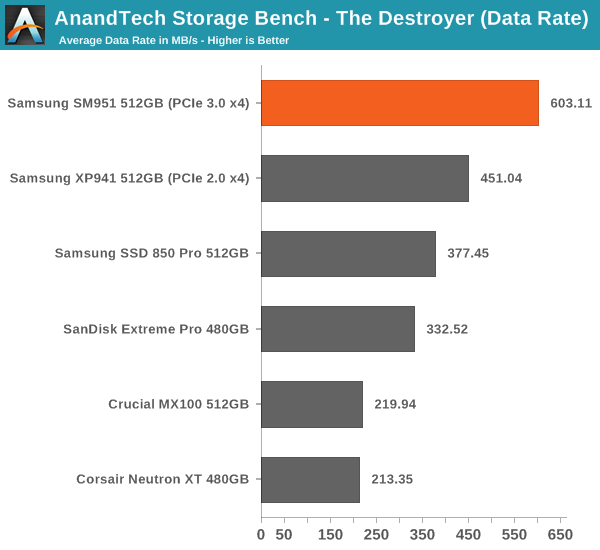

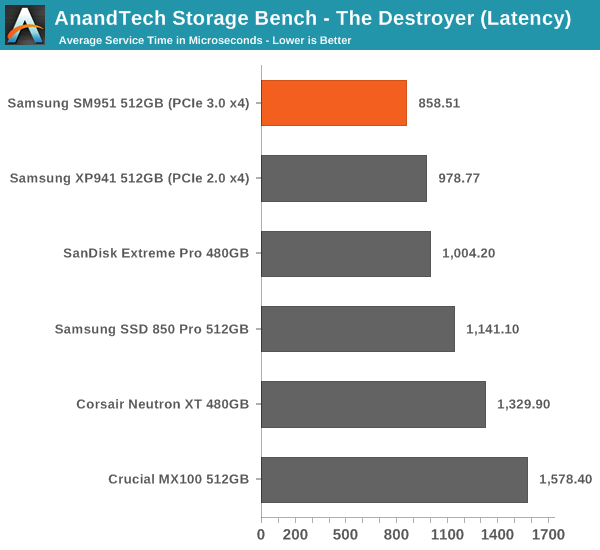

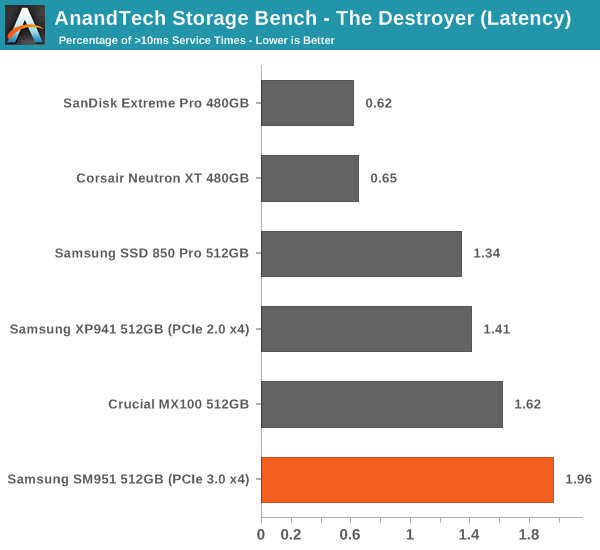

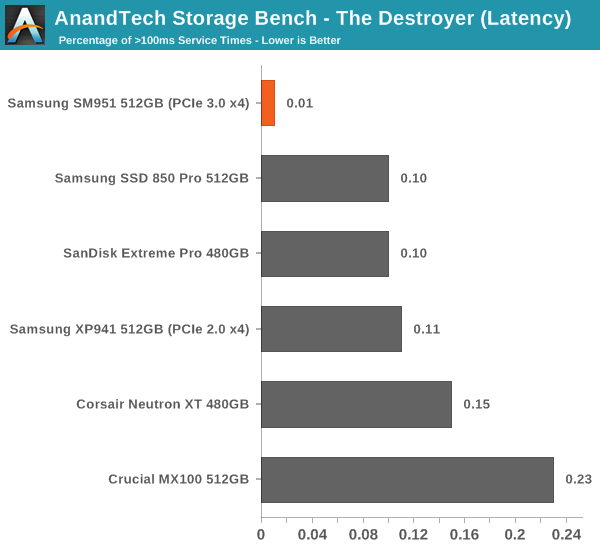

The two key metrics I'm reporting haven't changed: I'll continue to report both data rate and latency because the two have slightly different focuses. Data rate measures the speed of the data transfer, so it emphasizes large IOs, which simply account for a much larger share of the total amount of data. Latency, on the other hand, ignores the IO size, so all IOs are given the same weight in the calculation. Both metrics are useful, although in terms of system responsiveness I think latency is more critical. As a result, I'm also reporting two new stats that give good insight into high-latency IOs: the share of >10ms and >100ms IOs as a percentage of the total.
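The difference in weighting between the metrics is easy to see on a toy trace. The sketch below is not how the benchmark itself computes its results, which the article does not describe; it assumes IOs are serviced back to back so that data rate is total bytes over total service time, and the `IO` record, the function names, and the trace values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class IO:
    size_bytes: int
    latency_ms: float


def average_data_rate_mbps(ios):
    """Total bytes over total service time, in MB/s (assumed definition)."""
    total_bytes = sum(io.size_bytes for io in ios)
    total_seconds = sum(io.latency_ms for io in ios) / 1000
    return total_bytes / total_seconds / 1e6


def average_latency_ms(ios):
    """Mean service time; every IO counts equally regardless of size."""
    return sum(io.latency_ms for io in ios) / len(ios)


def share_above(ios, threshold_ms):
    """Percentage of IOs whose latency exceeds the threshold."""
    slow = sum(1 for io in ios if io.latency_ms > threshold_ms)
    return 100 * slow / len(ios)


# Hypothetical trace: many fast 4KB IOs, a few 128KB IOs, two slow outliers.
trace = (
    [IO(4096, 0.1)] * 90
    + [IO(131072, 1.0)] * 8
    + [IO(4096, 15.0), IO(4096, 150.0)]
)

print(f"data rate:       {average_data_rate_mbps(trace):.2f} MB/s")
print(f"average latency: {average_latency_ms(trace):.2f} ms")
print(f">10ms IOs:       {share_above(trace, 10):.1f}%")
print(f">100ms IOs:      {share_above(trace, 100):.1f}%")
```

In this made-up trace the eight 128KB IOs carry most of the bytes, so they dominate the data rate, while the two slow 4KB IOs barely affect the byte total but dominate the average latency and are exactly what the >10ms and >100ms shares pick up.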

The SM951 takes the lead easily and provides a ~34% increase in data rate over the XP941. The advantage over some of the slower SATA 6Gbps drives is nearly threefold, which speaks for the performance benefit that PCIe and especially PCIe 3.0 provide.

The latency benefit isn't as significant, which suggests that the SM951 provides a substantial boost in large IO performance, but the performance at small IO sizes isn't dramatically better.

Despite the lowest average latency, the SM951 actually has the most >10ms IOs, with nearly 2% of the IOs having a latency higher than 10ms. I did some thermal throttling testing (see the dedicated page for full results) and the SM951 seems to throttle fairly aggressively, so my hypothesis is that the high number is due to throttling, which limits the drive's throughput momentarily (and hence increases the latency) to cool down the drive.

However, the SM951 has the fewest >100ms IOs, which means that despite the possible throttling the maximum service times stay between 10ms and 100ms.

128 Comments


Kevin G - Tuesday, February 24, 2015 - link

"I also verified that the SM951 is bootable in tower Mac Pros (2012 and earlier)."

Excellent. The old 2010/2012 towers continue to show that being expandable provides long term benefit. I'm glad that I picked up my tower Mac Pro when I did.

Now to find a carrier that'll convert the 4x PCIe 3.0 link of the M.2 connector to an 8x PCIe 2.0 link for a Mac Pro. (Two M.2s to a single 16x PCIe 2.0 link.)

extide - Tuesday, February 24, 2015 - link

You will need a PLX chip to do that, you can't just put 2 x4 devices into an x8 slot...

jimjamjamie - Wednesday, February 25, 2015 - link

It's pretty hilarious how many people drink the shiny plastic trash bin kool-aid.

Tunnah - Tuesday, February 24, 2015 - link

I'm not super knowledgeable on the whole thing, but isn't NVMe really only a big deal for enterprise, as it's more a benefit for multi drive setups?

Kristian Vättö - Tuesday, February 24, 2015 - link

It's of course a bigger deal for enterprises because the need for performance is higher. However, NVMe isn't just a buzzword for the client space because it reduces the protocol latency, which in turn results in higher performance at low queue depths that are common for client workloads.

knweiss - Sunday, March 1, 2015 - link

Kristian, did you ever test how much influence the filesystem has? I would love to see a filesystem comparison on the various platforms with NVMe drivers (Windows, Linux, FreeBSD, etc).

The_Assimilator - Tuesday, February 24, 2015 - link

Hopefully NVMe will be standard on SSDs by the time Skylake and 100-series chipsets arrive.

sna1970 - Tuesday, February 24, 2015 - link

What is the point of this expensive drive when you can have the same numbers using 2 SSD in Raid 0?

and please no one says to me risk of Data Loss .. SSD are not mechanical and the chance of losing 1 SSD is the same of 2 of them.

Kristian Vättö - Tuesday, February 24, 2015 - link

RAID only tends to increase high QD and large IO transfers where the IO load can easily be distributed between two or more drives. Low QD performance at small IO sizes can actually be worse due to additional overhead from the RAID drivers.

dzezik - Tuesday, February 24, 2015 - link

Hi sna1970. You missed Bernoulli's "introduced the principle of the maximum product of the probabilities of a system of concurrent errors". It is quite old (1782) but it is still valid. Have you ever been in school? Did you have mathematics classes?