The DirectX 12 Performance Preview: AMD, NVIDIA, & Star Swarm

by Ryan Smith on February 6, 2015 2:00 PM EST

Posted in: GPUs, AMD, Microsoft, NVIDIA, DirectX 12

GPU Scaling

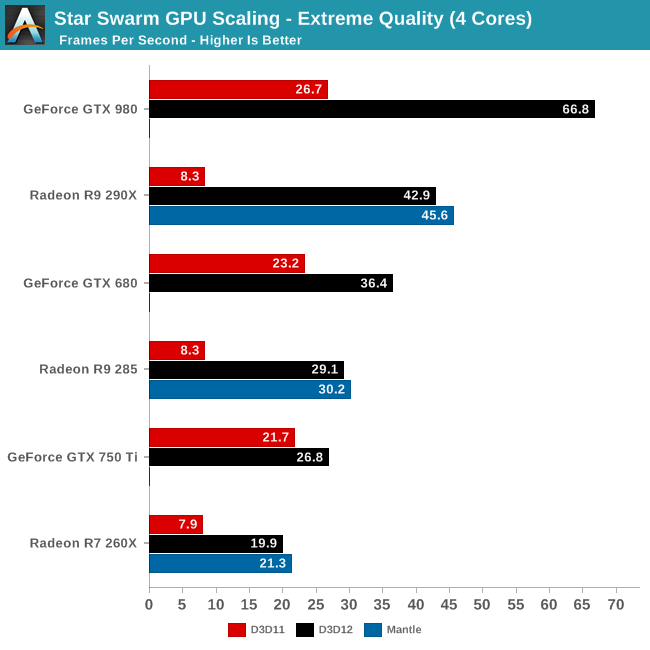

Switching gears, let’s take a look at performance from a GPU standpoint, including how well Star Swarm performance scales with more powerful GPUs now that we have eliminated the CPU bottleneck. Until now Star Swarm has never been GPU bottlenecked on high-end NVIDIA cards, so this is our first time seeing just how much faster Star Swarm can get until it runs into the limits of the GPU itself.

As it stands, with the CPU bottleneck swapped out for a GPU bottleneck, Star Swarm starts to favor NVIDIA GPUs right now. Even accounting for performance differences, NVIDIA ends up coming out well ahead here, with the GTX 980 beating the R9 290X by over 50%, and the GTX 680 some 25% ahead of the R9 285, both values well ahead of their average lead in real-world games. With virtually every aspect of this test still being under development – OS, drivers, and Star Swarm – we would advise not reading into this too much right now, but it will be interesting to see if this trend holds with the final release of DirectX 12.

Meanwhile it’s interesting to note that largely due to their poor DirectX 11 performance in this benchmark, AMD sees the greatest gains from DirectX 12 on a relative basis and comes close to seeing the greatest gains on an absolute basis as well. The GTX 980’s performance improves by 150% and 40.1fps when switching APIs; the R9 290X improves by 416% and 34.6fps. As for AMD’s Mantle, we’ll get back to that in a bit.
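To make the relative-versus-absolute distinction concrete, the sketch below backs out the approximate DirectX 11 and DirectX 12 frame rates implied by the gains quoted above. The function name and structure are illustrative assumptions, and because the quoted percentages are rounded, the derived figures are only approximate.

```python
def baseline_and_final(relative_gain_pct, absolute_gain_fps):
    """Recover approximate before/after frame rates from a quoted gain.

    If performance improves by P% and by A fps, then
    baseline * (P / 100) = A, so baseline = A / (P / 100).
    """
    baseline = absolute_gain_fps / (relative_gain_pct / 100.0)
    return baseline, baseline + absolute_gain_fps


# Gains quoted in the text above (4 core configuration).
gtx980_dx11, gtx980_dx12 = baseline_and_final(150, 40.1)
r9290x_dx11, r9290x_dx12 = baseline_and_final(416, 34.6)

print(f"GTX 980: {gtx980_dx11:.1f} fps -> {gtx980_dx12:.1f} fps")
print(f"R9 290X: {r9290x_dx11:.1f} fps -> {r9290x_dx12:.1f} fps")

# Implied DirectX 12 lead of the GTX 980 over the R9 290X.
dx12_lead = gtx980_dx12 / r9290x_dx12 - 1.0
print(f"GTX 980 lead under DirectX 12: {dx12_lead:.0%}")
```

The much lower implied DirectX 11 starting point for the R9 290X is what produces its larger percentage gain despite a smaller gain in absolute frames per second, and the implied DirectX 12 lead is consistent with the "over 50%" figure cited earlier.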

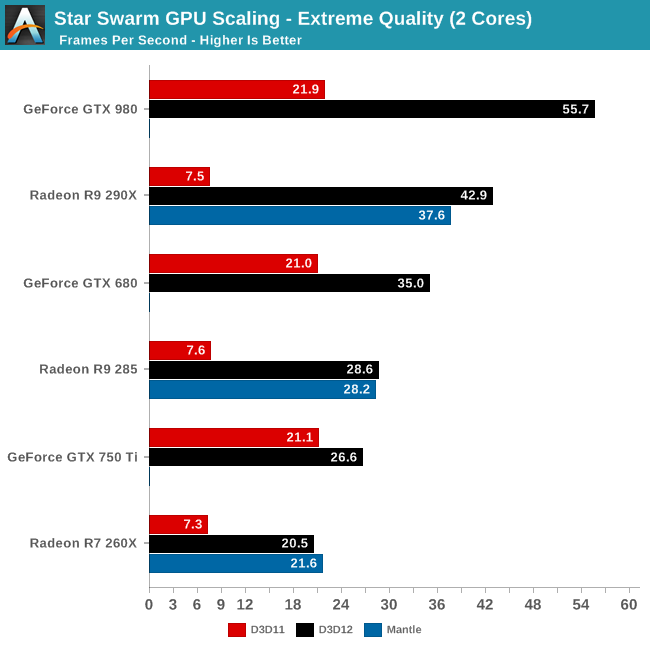

Having already established that even 2 CPU cores is enough to keep Star Swarm fed on anything less than a GTX 980, the results are much the same here for our 2 core configuration. Other than the GTX 980 being CPU limited, the gains from enabling DirectX 12 are consistent with what we saw for the 4 core configuration. Which is to say that even a relatively weak CPU can benefit from DirectX 12, at least when paired with a strong GPU.

However the GTX 750 Ti result in particular also highlights the fact that until a powerful GPU comes into play, the benefits today from DirectX 12 aren’t nearly as great. Though the GTX 750 Ti does improve in performance by 26%, this is a far cry from the 150% of the GTX 980, or even the gains for the GTX 680. While AMD is terminally CPU limited here, NVIDIA can get just enough out of DirectX 11 that a 2 core configuration can almost feed the GTX 750 Ti. Consequently in the NVIDIA case, a weak CPU paired with a weak GPU does not currently see the same benefits that we get elsewhere. However as DirectX 12 is meant to be forward looking – to be out before it’s too late – as GPU performance gains continue to outstrip CPU performance gains, the benefits even for low-end configurations will continue to increase.

245 Comments

james.jwb - Saturday, February 7, 2015 - link

Every single paragraph is completely misinformed, how embarrassing.

Alexey291 - Saturday, February 7, 2015 - link

Yeah ofc it is ofc it is.

inighthawki - Saturday, February 7, 2015 - link

>> There's no benefit for me who only uses a Windows desktop as a gaming machine.

Wrong. Game engines that utilize DX12 or Mantle, and are actually optimized for it, can issue far more draw calls, the limit that usually cripples current generation graphics APIs. This allows a much larger number of objects in game. This is the exact purpose of the Star Swarm demo in the article. If you cannot see the advantage of this, then you are too ignorant about the technology you're talking about, and I suggest you go learn a thing or two before pretending to be an expert in the field. Because yes, even high end gaming rigs can benefit from DX12.

You also very clearly missed the pages of the article discussing the increased consistency of performance. DX12 will have better frame to frame deltas making the framerate more consistent and predictable. Let's not even start with discussions on microstutter and the like.

>> Dx12 is not interesting either because my current build is actually limited by vsync. Nothing else but 60fps vsync (fake fps are for kids). And it's only a mid range build.

If you have a mid range build and are limited by vsync, you are clearly not playing quite a few games out there that would bring your rig to its knees. 'Fake fps' is not a term, but I assume you are referring to an unbounded framerate from not synchronizing with vsync. Vsync has its own disadvantages: increased input lag, and framerate halving when a frame misses the vsync period. Now if only DirectX supported proper triple buffering to allow reduced input latency with vsync on. It's also funny how you insult others as 'kids' as if you are playing in a superior mode, yet you are still on a 60Hz display...

>> So why should I bother if all I do in Windows at home is launch steam (or a game from an icon on the desktop) aaaand that's it?

Because any rational person would want better, more consistent performance in games capable of rendering more content, especially when they don't even have a high end rig. The fact that you don't realize how absurdly stupid your comment is makes the whole situation hilarious. Have fun with your high overhead games on your mid range rig.

ymcpa - Wednesday, February 11, 2015 - link

Question is what do you lose from installing it? There might not be much gain initially as developers learn to take full advantage and they will be making software optimized for windows 7. However, as more people switch, you will start seeing games optimized for dx12. If you wait for that point, you will be paying for the upgrade. If you do it now you get it for free and I don't see how you will lose anything, other than a day to install the OS and possibly reinstall apps.

Morawka - Friday, February 6, 2015 - link

Historically new DX releases have seen a 2-3 year adoption lag by game developers. Now, some AAA game companies always throw in a couple of the new features at launch, but the core of these engines will use DX 11 for the next few years.

However with DX 12, the benefits are probably going to be too huge to ignore. Previous DX releases were about new effects and rendering technologies. DX 12 is effectively cutting the minimum system requirements by 20-30% on the CPU side and probably 5%-10% on the GPU side.

So DX12 adoption should be much faster IMHO. But it's no biggie if it's not.

Friendly0Fire - Saturday, February 7, 2015 - link

DX12 also has another positive: backwards compatibility. Most new DX API versions introduce new features which will only work on either the very latest generation, or on the future generation of GPUs. DX12 will work on cards released in 2010!

That alone means devs will be an awful lot less reluctant to use it, since a significant proportion of their userbase can already use it.

dragonsqrrl - Saturday, February 7, 2015 - link

"DX12 will work on cards released in 2010"

Well, at least if you have an Nvidia card.

Pottuvoi - Saturday, February 7, 2015 - link

DX11 supported 'DX9 SM3' cards as well.

DX12 will be similar: it will have a layer which works on old cards, but the truly new features will not be available as the hardware just is not there.

dragonsqrrl - Sunday, February 8, 2015 - link

Yes but you still need the drivers to enable that API level support. Every DX12 article I've read, including this one, has specifically stated that AMD will not provide DX12 driver support for GPUs prior to GCN 1.0 (HD7000 series).

Murloc - Saturday, February 7, 2015 - link

I don't think so given that they're giving out free upgrades and the money-spending gamers who benefit from this the most will mostly upgrade, or if they're not interested in computers besides gaming, will be upgraded when they change their computer.