The DirectX 12 Performance Preview: AMD, NVIDIA, & Star Swarm

by Ryan Smith on February 6, 2015 2:00 PM EST

Posted in: GPUs, AMD, Microsoft, NVIDIA, DirectX 12

Mid Quality Performance

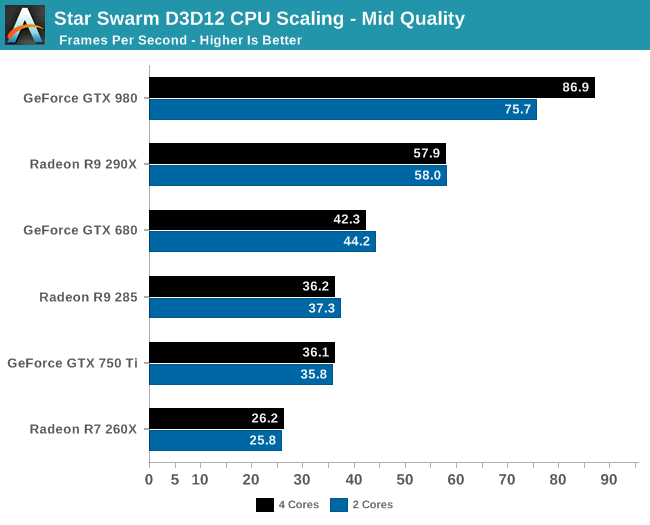

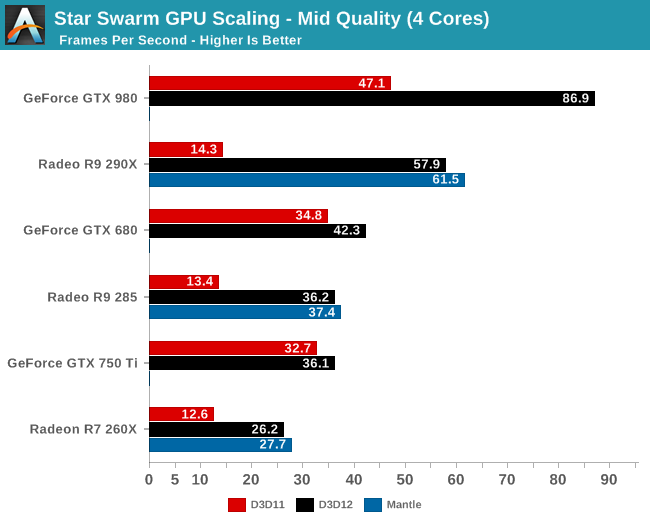

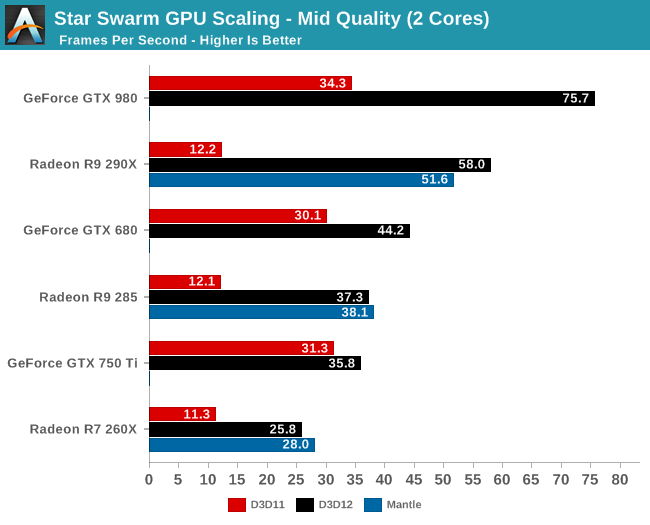

Since our evaluation so far has been focused on performance with Star Swarm’s most resource intensive Extreme setting, we wanted to shake things up by trying a lower quality setting.

In this case Star Swarm’s various quality levels adjust both the CPU and GPU workload, with the Mid quality setting reducing both the number of draw calls generated and the amount of work generated per frame for the GPU. As a result we’re not adjusting just the CPU or the GPU workload, but it can give us an idea of what to expect from DirectX 12 and Star Swarm at lower settings more suitable for weaker systems.

Even with this lower quality setting, the CPU results tell us that only the GTX 980 is truly CPU bottlenecked with 2 cores. Everything else from the 290X on down can reach its GPU limit with a relatively weak CPU.

Overall the numbers are different, but the lineup is the same whether it’s Extreme quality or Mid quality. Every vendor still sees massive gains from enabling DirectX 12, though the overall gains aren’t quite as great as with Extreme quality. Meanwhile the GTX 750 Ti in particular continues to see the weakest gains from DirectX 12, at only 14% for a 2 core configuration, thanks to a combination of NVIDIA’s lower CPU consumption and an earlier GPU bottleneck.

245 Comments


Alexey291 - Friday, February 6, 2015 - link

It can be as free as it likes. In fact for all I care they can pay me to install it... Still not going to bother. And you know why? There's no benefit for me who only uses a Windows desktop as a gaming machine. Not a single one. Dx12 is not interesting either because my current build is actually limited by vsync. Nothing else but 60fps vsync (fake fps are for kids). And it's only a mid range build.

So why should I bother if all I do in Windows at home is launch steam (or a game from an icon on the desktop) aaaand that's it?

Nuno Simões - Friday, February 6, 2015 - link

Clearly, you need to read the article again.

Alexey291 - Saturday, February 7, 2015 - link

There's a difference in a benchmark. Well surprise surprise. On the other hand games are likely to be optimised before release. Even games by Ubisoft MIGHT be optimised somewhat.

So the difference between dx11 and 12 will be imperceptible as usual. Just like the difference between ten and eleven was even though benchmarks have always shown that 11 is more efficient and generally faster.

Nuno Simões - Saturday, February 7, 2015 - link

So, it's faster and more efficient, but that's worthless?

What happened between 10 and 11 is that developers used those improvements to add to substance, not speed, and that is probably going to happen again. But any way you see it, there is a gain in something. And, besides, just the gains from frametimes and lower buffers are worth it. Less stutter is always a good thing.

And the bad optimisation from some developers is hardly DX's fault, or any other API's for that matter. Having a better API doesn't suddenly turn all other APIs into s*it.

inighthawki - Saturday, February 7, 2015 - link

What are you blabbering on about? No benefit? Fake fps? Do you even know anything about PCs or gaming?

Alexey291 - Saturday, February 7, 2015 - link

You don't actually understand that screen tearing is a bad thing do you? :)

Murloc - Saturday, February 7, 2015 - link

I've never seen screen tearing in my life and I don't use vsync despite having a 60 Hz monitor.

Fact is, you and I have not much to gain from DX12, apart from the new features (e.g. I have a dx10 card and I can't play games with the dx11 settings which do add substance to games, so it does have a benefit, you can't say there is no difference).

So whether you upgrade or not will not influence the choices of game engine developers.

The CPU bottlenecked people are using 144Hz monitors and willing to spend money to get the best so they do gain something. Not everybody is you.

Besides, I will be upgrading to windows 10 because the new features without the horrible w8 stuff are really an improvement, e.g. the decent copy/paste dialog that is already in w8.

Add the fact that it's free for w8/8.1 owners and it will see immediate adoption.

Some people stayed on XP for years instead of going to Vista before 7, but they eventually upgraded anyway because the difference in usability is quite significant. Not being adopted as fast as you, some guy on the internet, think would be fast enough is no excuse to stop development, otherwise someone else will catch up.

inighthawki - Saturday, February 7, 2015 - link

I well understand what screen tearing is, but not locking to vsync doesn't suddenly make it 'fake FPS.' You literally just made up a term that means nothing. You're also ignoring the common downside of vsync: input lag. For many people it is extremely noticeable and makes games unplayable unless they invest in low latency displays with fast refresh rates.

Margalus - Saturday, February 7, 2015 - link

it does make it "fake fps" in a way. It doesn't matter if fraps is telling you that you are getting 150fps, your monitor is only capable of showing 60fps. So that 150fps is a fallacy, you are only seeing 60fps. And without vsync enabled, each of those 60 frames that your monitor is showing is made up of pieces of more than one rendered frame, hence the tearing.

but other than that, I can disagree with most everything that poster said..

inighthawki - Saturday, February 7, 2015 - link

Correct, I realize that. But it's still not really fake. Just because you cannot see every frame it renders does not mean that it doesn't render them, or that there isn't an advantage to doing so.

By enabling vsync, you are imposing a presentation limit on the application. It must wait until the vsync to present the frame, which means the application must block until it does. The faster the GPU is at rendering frames, the larger the impact this has on input latency. By default, DirectX allows you to queue three frames ahead. This means if your GPU can render all three frames within one refresh period of your monitor, you will have a 3 frame latency (plus display latency) between when a frame is rendered and when you see it on screen, since each frame needs to be displayed in the order it is rendered.

With vsync off, you get tearing because there is no wait on presents. The moment you finish is the moment you can swap the backbuffer and begin the next frame. You always have (nearly) the minimal amount of latency possible. The extra latency of vsync is avoidable with proper implementations of triple buffering that allow the developer to discard old frames. In all cases, the fps still means something. Rendering at 120fps and only seeing 60 of them doesn't make it useless to do so.
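
To put rough numbers on the queued-frame argument above, here is a minimal sketch of a toy model, not DirectX's actual presentation logic. It assumes one queued frame is shown per refresh, in render order, and that the renderer blocks until a queue slot frees up. The function name `steady_state_latency_ms` and its parameters are hypothetical, chosen only for this illustration.

```python
import math


def steady_state_latency_ms(render_ms, refresh_hz=60.0, queue_depth=3, frames=200):
    """Average wait between a frame finishing rendering and appearing on screen.

    Toy model (an assumption, not real DirectX behaviour): with vsync on, one
    queued frame is shown per refresh, in order, and the renderer may only
    start frame i once frame i - queue_depth has been shown.
    """
    period = 1000.0 / refresh_hz      # time between refreshes, in ms
    shown_at = []                     # refresh index at which each frame is shown
    waits = []                        # per-frame wait from "rendered" to "shown"
    gpu_free = 0.0                    # time the renderer finished its last frame
    for i in range(frames):
        start = gpu_free
        if i >= queue_depth:
            # Block until a slot in the render-ahead queue frees up.
            start = max(start, shown_at[i - queue_depth] * period)
        done = start + render_ms
        # First refresh at or after completion, and after the previous frame.
        tick = math.ceil(done / period)
        if shown_at:
            tick = max(tick, shown_at[-1] + 1)
        shown_at.append(tick)
        waits.append(tick * period - done)
        gpu_free = done
    tail = waits[frames // 2:]        # skip the warm-up while the queue fills
    return sum(tail) / len(tail)


if __name__ == "__main__":
    # Compare queue depths for a GPU that renders a frame in 1 ms at 60 Hz.
    for depth in (1, 2, 3):
        print(depth, round(steady_state_latency_ms(1.0, queue_depth=depth), 2))
```

Under these assumptions, a GPU that needs 1 ms per frame on a 60 Hz display settles at a wait of roughly three refresh periods with a queue depth of 3, and roughly one refresh period with a depth of 1. The model ignores display latency and the vsync-off case entirely.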