The DirectX 12 Performance Preview: AMD, NVIDIA, & Star Swarm

by Ryan Smith on February 6, 2015 2:00 PM EST - Posted in GPUs, AMD, Microsoft, NVIDIA, DirectX 12

Mid Quality Performance

Since our evaluation so far has been focused on performance with Star Swarm’s most resource intensive Extreme setting, we wanted to shake things up by trying a lower quality setting.

In this case Star Swarm’s various quality levels adjust both the CPU and GPU workload, with the Mid quality setting reducing both the number of draw calls generated and the amount of work generated per frame for the GPU. As a result we’re not adjusting just the CPU or the GPU workload, but it can give us an idea of what to expect from DirectX 12 and Star Swarm at lower settings more suitable for weaker systems.

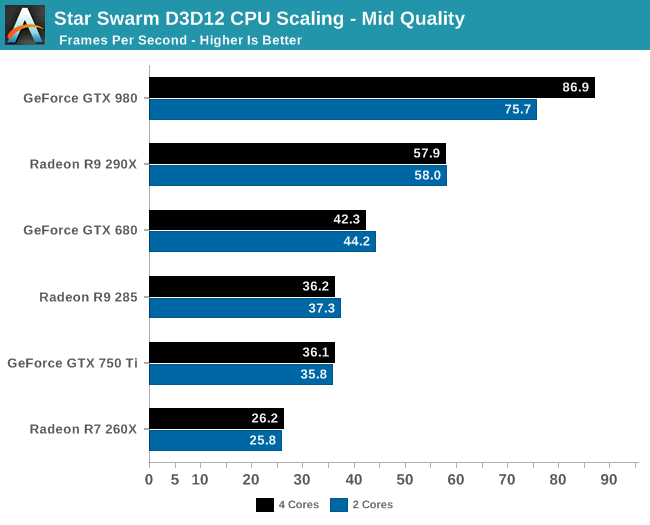

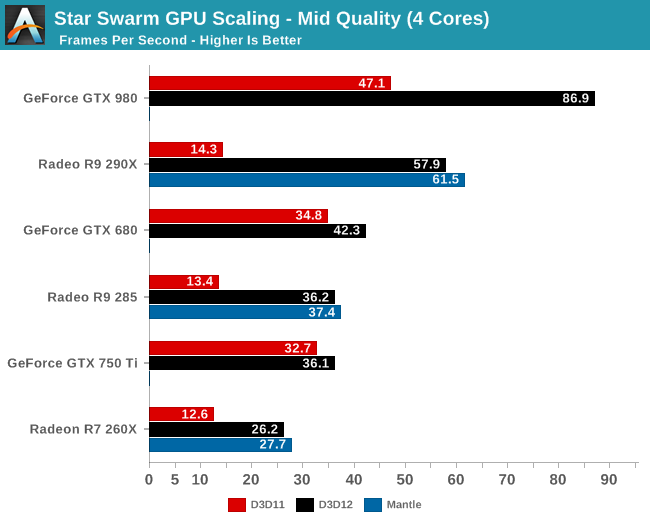

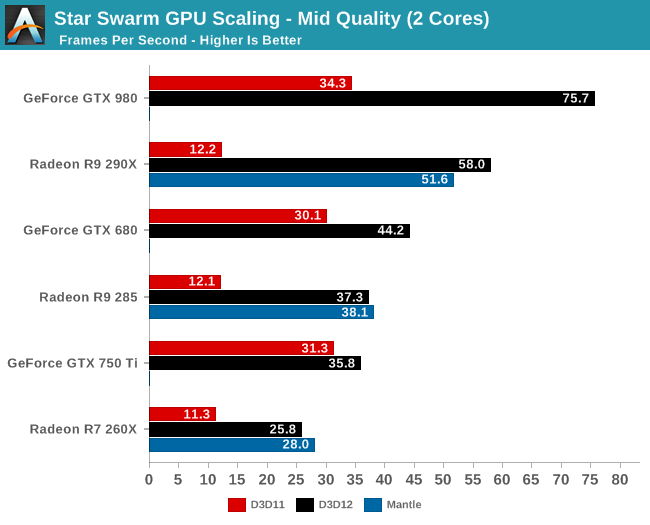

Even with this lower quality setting, the CPU results tell us that only the GTX 980 is truly CPU bottlenecked with 2 cores. Everything else from the 290X on down can reach its GPU limit with a relatively weak CPU.

Overall the numbers are different, but the lineup is the same whether it’s Extreme quality or Mid quality. Every vendor still sees massive gains from enabling DirectX 12, though the overall gains aren’t quite as great as with Extreme quality. Meanwhile the GTX 750 Ti in particular continues to see the weakest gains from DirectX 12, at only 14% for a 2 core configuration, thanks to a combination of NVIDIA’s lower CPU consumption and an earlier GPU bottleneck.
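To make the bottleneck reasoning concrete, here is a minimal sketch of a simplified model in which each frame takes as long as the slower of the CPU and the GPU. The function names and all of the millisecond figures are hypothetical, chosen only for illustration; they are not taken from the charts above, and real pipelines overlap CPU and GPU work in more complicated ways.

```python
def frame_rate(cpu_ms: float, gpu_ms: float) -> float:
    """Frames per second when the slower of CPU and GPU sets the frame time."""
    return 1000.0 / max(cpu_ms, gpu_ms)


def dx12_gain_percent(cpu_ms_dx11: float, cpu_ms_dx12: float, gpu_ms: float) -> float:
    """Percentage frame rate gain when only the CPU cost per frame changes."""
    before = frame_rate(cpu_ms_dx11, gpu_ms)
    after = frame_rate(cpu_ms_dx12, gpu_ms)
    return (after / before - 1.0) * 100.0


# Hypothetical per-frame costs in milliseconds (assumptions, not measurements).
# Both cards see the same drop in CPU cost; only the GPU time differs.
fast_gpu_gain = dx12_gain_percent(cpu_ms_dx11=40.0, cpu_ms_dx12=10.0, gpu_ms=12.5)
slow_gpu_gain = dx12_gain_percent(cpu_ms_dx11=40.0, cpu_ms_dx12=10.0, gpu_ms=35.0)

print(f"fast GPU gain: {fast_gpu_gain:.0f}%")
print(f"slow GPU gain: {slow_gpu_gain:.0f}%")
```

Under these assumed numbers the faster GPU gains far more, because the slower GPU becomes the limit almost as soon as the CPU cost drops. A card whose DirectX 11 CPU cost is already low would see its gain shrink further for the same reason.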

245 Comments


junky77 - Friday, February 6, 2015 - link

Looking at the CPU scaling graphs and CPU/GPU usage, it doesn't look like the situation in other games where CPU can be maxed out. It does seem like this engine and test might be really tailored for this specific case of DX12 and Mantle in a specific way.

The interesting thing is to understand whether the DX11 performance shown here is optimal. The CPU usage is way below max, even for the one core supposedly taking all the load. Something is bottlenecking the performance and it's not the number of cores, threads or clocks.

eRacer1 - Friday, February 6, 2015 - link

So the GTX 980 is using less power than the 290X while performing ~50% better, and somehow NVIDIA is the one with the problem here? The data is clear. The GTX 980 has a massive DX12 (and DX11) performance lead and performance/watt lead over 290X.

The_Countess666 - Thursday, February 19, 2015 - link

it also costs twice as much.

and this is the first time in roughly 4 generations that nvidia's managed to release a new generation first. it would be shocking if there wasn't a huge performance difference between AMD and nvidia at the moment.

bebimbap - Friday, February 6, 2015 - link

TDP and power consumption are not the same thing, but are related.

if i had to write a simple equation it would be something to the effect of

TDP(wasted heat) = (Power Consumption) X (process node coeff) X (temperature of silicon coeff) X (Architecture coeff)

so basically TDP or "wasted heat" is related to power consumption but not the same thing

Since they are on the same process node by the same foundry, the difference in TDP vs power consumed would be because Nvidia currently has the more efficient architecture, and that also leads to their chips being cooler, both of which lead to less "wasted heat"

A perfect conductor would have 0 TDP and infinite power consumption.

Mr Perfect - Saturday, February 7, 2015 - link

Erm, I don't think you've got the right term there with TDP. TDP is not defined as "wasted heat", but as the typical power draw of the board. So if TDP for the GTX 980 is 165 watts, that just means that in normal gaming use it's drawing 165 watts.

Besides, if a card is drawing 165 watts, it's all going to become heat somewhere along the line. I'm not sure you can really decide how many of those watts are "wasted" and how many are actually doing "work".

Wwhat - Saturday, February 7, 2015 - link

No, he's right. TDP means Thermal Design Power and defines the cooling a system needs to run at full power.

Strunf - Saturday, February 7, 2015 - link

It's the same... if a GC draws 165W it needs a 165W cooler... do you see anything moving on your card except the fans? no, so all power will be transformed into heat.

wetwareinterface - Saturday, February 7, 2015 - link

no it's not the same. 165w tdp means the cooler has to dump 165w worth of heat.

165w power draw means the card needs to have 165w of power available to it.

if the card draws 300w of power and has 200w of heat output that means the card is dumping 200w of that 300w into the cooler.

Strunf - Sunday, February 8, 2015 - link

It's impossible for the card to draw 300W and only output 200W of heat... unless of course now GC defy the laws of physics.

grogi - Sunday, April 5, 2015 - link

What is it doing with the remaining 100W?