The DirectX 12 Performance Preview: AMD, NVIDIA, & Star Swarm

by Ryan Smith on February 6, 2015 2:00 PM EST. Posted in: GPUs, AMD, Microsoft, NVIDIA, DirectX 12

GPU Scaling

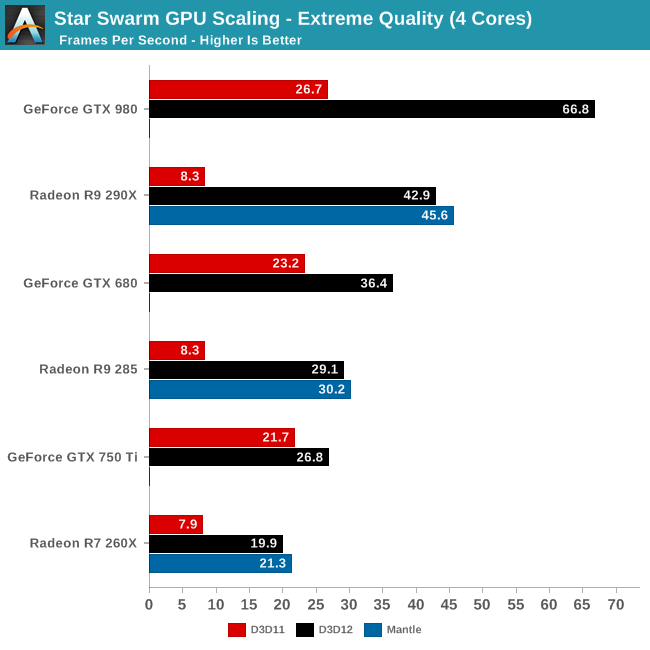

Switching gears, let’s take a look at performance from a GPU standpoint, including how well Star Swarm performance scales with more powerful GPUs now that we have eliminated the CPU bottleneck. Until now Star Swarm has never been GPU bottlenecked on high-end NVIDIA cards, so this is our first time seeing just how much faster Star Swarm can get before it runs into the limits of the GPU itself.

As it stands, with the CPU bottleneck swapped out for a GPU bottleneck, Star Swarm currently favors NVIDIA GPUs. Even accounting for the usual performance differences between these cards, NVIDIA comes out well ahead here, with the GTX 980 beating the R9 290X by over 50%, and the GTX 680 some 25% ahead of the R9 285, both values well ahead of their average lead in real-world games. With virtually every aspect of this test still under development (OS, drivers, and Star Swarm itself), we would advise not reading into this too much right now, but it will be interesting to see if this trend holds with the final release of DirectX 12.

Meanwhile it’s interesting to note that largely due to their poor DirectX 11 performance in this benchmark, AMD sees the greatest gains from DirectX 12 on a relative basis and comes close to seeing the greatest gains on an absolute basis as well. The GTX 980’s performance improves by 150% and 40.1fps when switching APIs; the R9 290X improves by 416% and 34.6fps. As for AMD’s Mantle, we’ll get back to that in a bit.
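As a quick sanity check on those figures, the relative and absolute gains quoted above are enough to back out the approximate frame rates under each API. The sketch below assumes the percentage is measured against the DirectX 11 result and that both quoted numbers are rounded, so the outputs are estimates rather than the measured values from the chart; the function name is ours, purely for illustration.

```python
def implied_frame_rates(relative_gain_pct, absolute_gain_fps):
    """Return (dx11_fps, dx12_fps) implied by a relative and an absolute gain.

    Assumes relative_gain_pct is expressed against the DirectX 11 baseline,
    i.e. absolute_gain = baseline * relative_gain_pct / 100.
    """
    baseline = absolute_gain_fps / (relative_gain_pct / 100.0)
    return baseline, baseline + absolute_gain_fps


# (relative gain in %, absolute gain in fps) as quoted in the text above
gains = {
    "GTX 980": (150, 40.1),
    "R9 290X": (416, 34.6),
}

implied = {gpu: implied_frame_rates(*g) for gpu, g in gains.items()}

for gpu, (dx11, dx12) in implied.items():
    print(f"{gpu}: ~{dx11:.1f} fps under DirectX 11, ~{dx12:.1f} fps under DirectX 12")

# Cross-check against the "over 50%" lead quoted for the GTX 980
lead = implied["GTX 980"][1] / implied["R9 290X"][1] - 1
print(f"Implied GTX 980 lead under DirectX 12: ~{lead:.0%}")
```

The much larger percentage for the R9 290X follows directly from its much lower implied DirectX 11 baseline, which is why AMD can lead on relative gains while trailing slightly on absolute gains.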

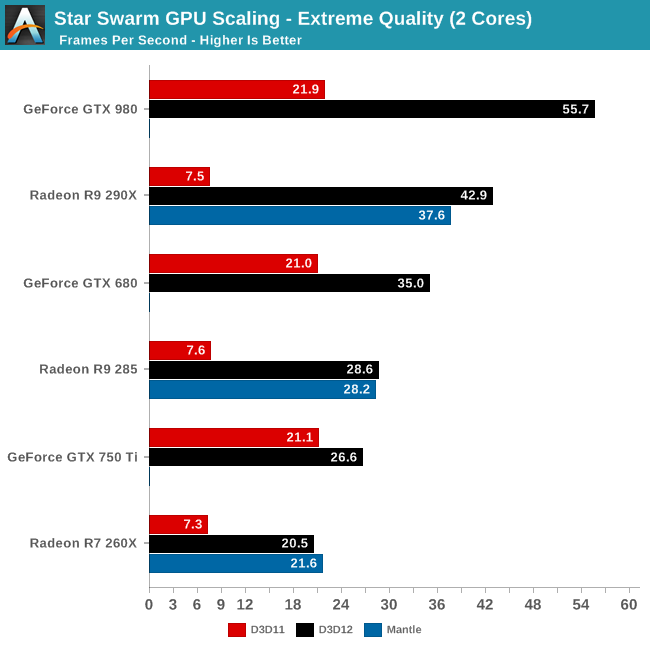

Having already established that even 2 CPU cores are enough to keep Star Swarm fed on anything less than a GTX 980, the results are much the same here for our 2 core configuration. Other than the GTX 980 being CPU limited, the gains from enabling DirectX 12 are consistent with what we saw for the 4 core configuration, which is to say that even a relatively weak CPU can benefit from DirectX 12, at least when paired with a strong GPU.

However the GTX 750 Ti result in particular also highlights the fact that until a powerful GPU comes into play, the benefits today from DirectX 12 aren’t nearly as great. Though the GTX 750 Ti does improve in performance by 26%, this is a far cry from the 150% of the GTX 980, or even the gains for the GTX 680. While AMD is terminally CPU limited here, NVIDIA can get just enough out of DirectX 11 that a 2 core configuration can almost feed the GTX 750 Ti. Consequently in the NVIDIA case, a weak CPU paired with a weak GPU does not currently see the same benefits that we get elsewhere. However, as DirectX 12 is meant to be forward looking (to be out before it’s too late), the benefits even for low-end configurations will continue to increase as GPU performance gains continue to outstrip CPU performance gains.
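The reasoning here can be sketched with a deliberately simple bottleneck model: the delivered frame rate is capped by whichever of the CPU side (draw call submission) or the GPU side (rendering) is slower. This is a toy approximation, not how Star Swarm or any driver actually behaves, and every number in it is hypothetical, chosen only to illustrate the shape of the effect rather than taken from our results.

```python
def frame_rate(cpu_fps, gpu_fps):
    """Delivered frame rate is capped by the slower of the two stages."""
    return min(cpu_fps, gpu_fps)


def api_speedup(cpu_fps_old, cpu_fps_new, gpu_fps):
    """Fractional gain from raising only the CPU-side ceiling."""
    old = frame_rate(cpu_fps_old, gpu_fps)
    new = frame_rate(cpu_fps_new, gpu_fps)
    return new / old - 1


# Hypothetical ceilings, NOT measured data:
CPU_FPS_OLD_API = 20.0   # frames/s the CPU can submit under the older API
CPU_FPS_NEW_API = 100.0  # frames/s the CPU can submit under the newer API

for name, gpu_fps in [("weak GPU", 25.0), ("strong GPU", 60.0)]:
    gain = api_speedup(CPU_FPS_OLD_API, CPU_FPS_NEW_API, gpu_fps)
    print(f"{name}: gain from lifting the CPU bottleneck = {gain:.0%}")
```

With these made-up inputs the weak GPU gains only 25% because it hits its own ceiling almost immediately, while the strong GPU gains 200%. As GPU ceilings rise faster than CPU ceilings, more configurations end up in the second situation.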

245 Comments

akamateau - Thursday, February 26, 2015

I think that Anand has uncovered something unexpected in the data sets they have collected. The graphs showing frame time and consistency show something else besides how fast Dx12 and Mantle generate frames. Dx12 and Mantle frame times are essentially the same. The variations are only due to run-time variations inside the game simulation. When the game simulation begins there are far more AI objects and draw calls compared to later in the game, when many of these objects have been destroyed in the game simulation. That is the reason the frame times get faster and smooth out.

Can ANYBODY now say with a straight face that Dx12 is NOT Mantle?

Since Mantle is an AMD-derived instruction set it acts as a performance benchmark between both nVidia and AMD cards and shows fairly comparable and consistent results.

However when we look at Dx11 we see that the R9-290 is seriously degraded by Dx11 in violation of the AMD vs Intel settlement agreement.

How can both nVidia and AMD cards be essentially equivalent running Dx12 and Mantle and so far apart running Dx11? That is the question that Intel needs to answer to the FTC and the Justice Department.

This Dx11 benchmark is the smoking gun that could cost Intel BILLIONS.

Thermalzeal - Wednesday, March 4, 2015

Looks like my hunch was correct. Microsoft reporting that DX12 will bump performance on Xbox One ~20%.

Game_5abi - Monday, March 9, 2015

so if i have to build a pc should i go for fx series due to their more cores or there is no benefit of having more than 4 cores (and should buy a i5 processor)?

peevee - Friday, March 13, 2015

"The bright side of all of this is that with Microsoft’s plans to offer Windows 10 as a free upgrade for Windows 7/8/8.1 users, the issue is largely rendered moot. Though DirectX 12 isn’t being backported, Windows users will instead be able to jump forward for free, so unlike Windows 8 this will not require spending money on a new OS just to gain access to the latest version of DirectX."

But this is not true, Win10 is only going to be free for a year or so and then you'll have to pay EVERY year.

Dorek - Wednesday, March 18, 2015

"But this is not true, Win10 is only going to be free for a year or so and then you'll have to pay EVERY year."

Wrong. Windows 10 will be free for one year, and after that you pay once to upgrade. If you get it during that one-year period, you pay 0 times.