The DirectX 12 Performance Preview: AMD, NVIDIA, & Star Swarm

by Ryan Smith on February 6, 2015 2:00 PM EST

Posted in: GPUs, AMD, Microsoft, NVIDIA, DirectX 12

GPU Scaling

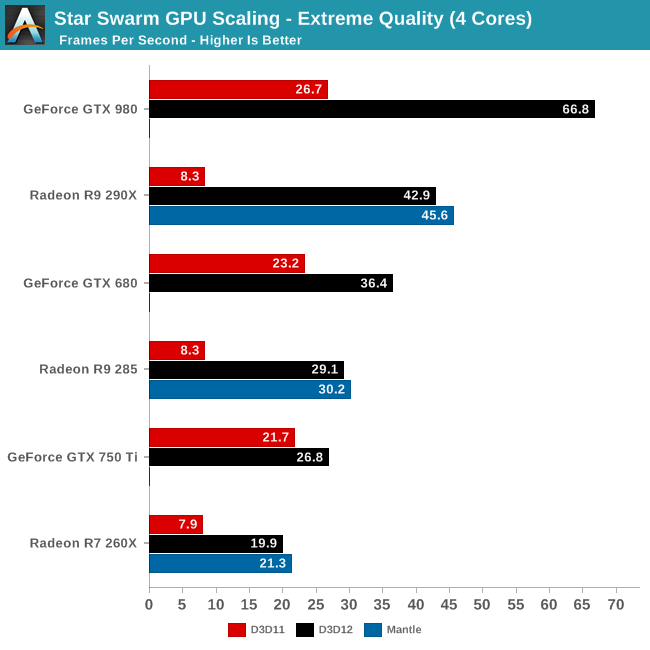

Switching gears, let’s take a look at performance from a GPU standpoint, including how well Star Swarm performance scales with more powerful GPUs now that we have eliminated the CPU bottleneck. Until now Star Swarm has never been GPU bottlenecked on high-end NVIDIA cards, so this is our first time seeing just how much faster Star Swarm can get until it runs into the limits of the GPU itself.

As it stands, with the CPU bottleneck swapped out for a GPU bottleneck, Star Swarm starts to favor NVIDIA GPUs right now. Even accounting for performance differences, NVIDIA ends up coming out well ahead here, with the GTX 980 beating the R9 290X by over 50%, and the GTX 680 some 25% ahead of the R9 285, both values well ahead of their average lead in real-world games. With virtually every aspect of this test still being under development – OS, drivers, and Star Swarm – we would advise not reading into this too much right now, but it will be interesting to see if this trend holds with the final release of DirectX 12.

Meanwhile it’s interesting to note that largely due to their poor DirectX 11 performance in this benchmark, AMD sees the greatest gains from DirectX 12 on a relative basis and comes close to seeing the greatest gains on an absolute basis as well. The GTX 980’s performance improves by 150% and 40.1fps when switching APIs; the R9 290X improves by 416% and 34.6fps. As for AMD’s Mantle, we’ll get back to that in a bit.
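As a rough cross-check of the figures above, the quoted relative and absolute gains can be combined to back out the implied frame rates under each API. This is only a sketch: the function and variable names are hypothetical, and because the quoted percentages are rounded, the results are approximate. It suggests the GTX 980 goes from roughly 27fps to 67fps and the R9 290X from roughly 8fps to 43fps, which lines up with the GTX 980's lead of over 50% under DirectX 12.

```python
def implied_frame_rates(relative_gain_pct, absolute_gain_fps):
    """Recover (dx11_fps, dx12_fps) from a relative and an absolute gain.

    If DX12 = DX11 * (1 + p) and DX12 - DX11 = g, then DX11 = g / p.
    """
    p = relative_gain_pct / 100.0
    dx11_fps = absolute_gain_fps / p
    dx12_fps = dx11_fps + absolute_gain_fps
    return dx11_fps, dx12_fps


# Gains quoted in the text (percentages are rounded, so results are approximate).
gtx980_dx11, gtx980_dx12 = implied_frame_rates(150, 40.1)
r9_290x_dx11, r9_290x_dx12 = implied_frame_rates(416, 34.6)

# Relative lead of the GTX 980 over the R9 290X under DirectX 12.
gtx980_lead = gtx980_dx12 / r9_290x_dx12 - 1.0

print(f"GTX 980: {gtx980_dx11:.1f} -> {gtx980_dx12:.1f} fps")
print(f"R9 290X: {r9_290x_dx11:.1f} -> {r9_290x_dx12:.1f} fps")
print(f"GTX 980 lead under DX12: {gtx980_lead:.0%}")
```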

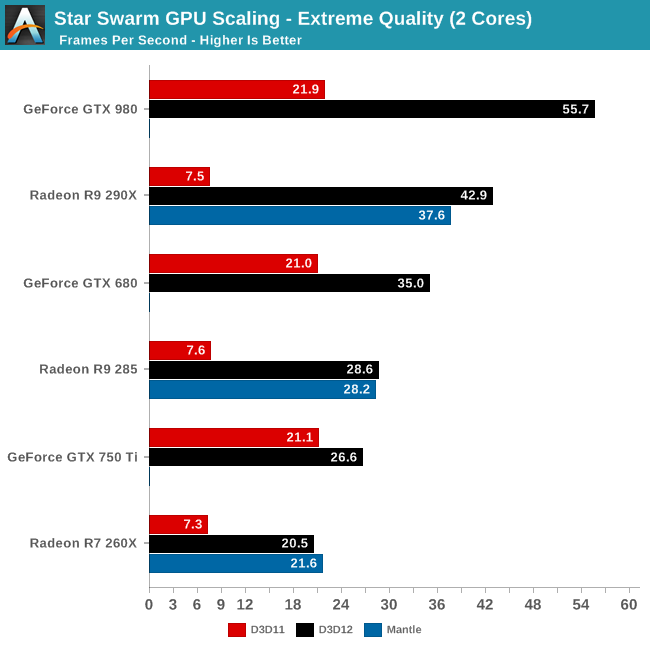

Having already established that even 2 CPU cores are enough to keep Star Swarm fed on anything less than a GTX 980, the results are much the same here for our 2 core configuration. Other than the GTX 980 being CPU limited, the gains from enabling DirectX 12 are consistent with what we saw for the 4 core configuration. Which is to say that even a relatively weak CPU can benefit from DirectX 12, at least when paired with a strong GPU.

However, the GTX 750 Ti result in particular also highlights the fact that until a powerful GPU comes into play, the benefits today from DirectX 12 aren’t nearly as great. Though the GTX 750 Ti does improve in performance by 26%, this is a far cry from the 150% of the GTX 980, or even the gains for the GTX 680. While AMD is terminally CPU limited here, NVIDIA can get just enough out of DirectX 11 that a 2 core configuration can almost feed the GTX 750 Ti. Consequently, in the NVIDIA case, a weak CPU paired with a weak GPU does not currently see the same benefits that we get elsewhere. However, as DirectX 12 is meant to be forward looking – to be out before it’s too late – the benefits even for low-end configurations will continue to increase as GPU performance gains continue to outstrip CPU performance gains.
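One way to picture why a weak GPU sees smaller gains is a simple bottleneck model, in which the delivered frame rate is capped by whichever of the CPU or GPU is slower. The sketch below is a deliberate simplification, and every number in it is a made-up placeholder rather than a measured result; the function names are hypothetical as well.

```python
def frame_rate(cpu_limit_fps, gpu_limit_fps):
    """Delivered frame rate under a simple 'slowest stage wins' model."""
    return min(cpu_limit_fps, gpu_limit_fps)


def api_gain(cpu_limit_old, cpu_limit_new, gpu_limit_fps):
    """Relative gain from raising only the CPU-side limit (e.g. a lower-overhead API)."""
    before = frame_rate(cpu_limit_old, gpu_limit_fps)
    after = frame_rate(cpu_limit_new, gpu_limit_fps)
    return after / before - 1.0


# Hypothetical placeholder limits, NOT benchmark data.
CPU_LIMIT_OLD_API = 25.0   # fps the CPU can feed with a high-overhead API
CPU_LIMIT_NEW_API = 100.0  # fps the CPU can feed with a low-overhead API
WEAK_GPU_LIMIT = 30.0      # fps a low-end GPU can render
STRONG_GPU_LIMIT = 65.0    # fps a high-end GPU can render

weak_gpu_gain = api_gain(CPU_LIMIT_OLD_API, CPU_LIMIT_NEW_API, WEAK_GPU_LIMIT)
strong_gpu_gain = api_gain(CPU_LIMIT_OLD_API, CPU_LIMIT_NEW_API, STRONG_GPU_LIMIT)

print(f"Weak GPU gain:   {weak_gpu_gain:.0%}")
print(f"Strong GPU gain: {strong_gpu_gain:.0%}")
```

In this model the weak GPU hits its own ceiling almost as soon as the CPU limit is lifted, so most of the extra CPU headroom goes unused, while the strong GPU can convert far more of it into frames.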

245 Comments

alaricljs - Friday, February 6, 2015 - link

It takes time to devise such tests and more time to validate that the test is really doing what you want and yet more time to DO the testing... and meanwhile I'm pretty sure they're not going to just drop everything else that's in the pipeline.

monstercameron - Friday, February 6, 2015 - link

and amd knows that well but maybe nvidia should also...maybe?

JarredWalton - Friday, February 6, 2015 - link

As an owner -- an owner that actually BOUGHT my 970, even though I could have asked for one -- of a GTX 970, I can honestly say that the memory segmentation issue isn't much of a concern. The reality is that when you're running settings that are coming close to 3.5GB of VRAM use, you're also coming close to the point where the performance is too low to really matter in most games.

Case in point: Far Cry 4, or Assassin's Creed Unity, or Dying Light, or Dragon Age: Inquisition, or pretty much any other game I've played/tested, the GTX 980 is consistently coming in around 20-25% faster than the GTX 970. In cases where we actually come close to the 4GB VRAM on those cards (e.g. Assassin's Creed Unity at 4K High or QHD Ultra), both cards struggle to deliver acceptable performance. And there are dozens of other games that won't come near 4GB VRAM that are still providing unacceptable performance with these GPUs at QHD Ultra settings (Metro: Last Light, Crysis 3, Company of Heroes 2 -- though that uses SSAA so it really kills performance at higher quality settings).

Basically, with current games finding a situation where GTX 980 performs fine but the GTX 970 performance tanks is difficult at best, and in most cases it's a purely artificial scenario. Most games really don't need 4GB of textures to look good, and when you drop texture quality from Ultra to Very High (or even High), the loss in quality is frequently negligible while the performance gains are substantial.

Finally, I think it's worth noting again that NVIDIA has had memory segmentation on other GPUs, though perhaps not quite at this level. The GTX 660 Ti has a 192-bit memory interface with 2GB VRAM, which means there's 512MB of "slower" VRAM on one of the channels. That's one fourth of the total VRAM and yet no one really found cases where it mattered, and here we're talking about 1/8 of the total VRAM. Perhaps games in the future will make use of precisely 3.75GB of VRAM at some popular settings and show more of an impact, but the solution will still be the same: twiddle a few settings to get back to 80% of the GTX 980 performance rather than worrying about the difference between 10 FPS and 20 FPS, since neither one is playable.

shing3232 - Friday, February 6, 2015 - link

Those people who own two 970 will not agree with you.

JarredWalton - Friday, February 6, 2015 - link

I did get a second one, thanks to Zotac (I didn't pay for that one, though). So sorry to disappoint you. Of course, there are issues at times, but that's just the way of multiple GPUs, whether it be SLI or CrossFire. I knew that going into the second GPU acquisition.

At present, I can say that Far Cry 4 and Dying Light are not working entirely properly with SLI, and neither are Wasteland 2 or The Talos Principle. Assassin's Creed: Unity seems okay to me, though there is a bit of flicker perhaps on occasion. All the other games I've tried work fine, though by no means have I tried "all" the current games.

For CrossFire, the list is mostly the same with a few minor additions. Assassin's Creed: Unity, Company of Heroes 2, Dying Light, Far Cry 4, Lords of the Fallen, and Wasteland 2 all have problems, and scaling is pretty poor on at least a couple other games (Lichdom: Battlemage and Middle-Earth: Shadow of Mordor scale, but more like by 25-35% instead of 75% or more).

Overall, GTX 970 SLI and R9 290X CF are basically tied at both 4K and QHD testing in my results across quite a few games, with NVIDIA taking a slight lead at 1080p and lower. In fact for single GPUs, 290X wins on average by 10% at 4K (but neither card is typically playable except at lower quality settings), while the difference is 1% or less at QHD Ultra.

Cryio - Saturday, February 7, 2015 - link

"Overall, GTX 970 SLI and R9 290X CF are basically tied at both 4K and QHD testing in my results across quite a few games, with NVIDIA taking a slight lead at 1080p and lower."

By virtue of *every* benchmark I've seen on the internets, on literally every game, 4K, maxed out settings, CrossFire of 290Xs are faster than both SLI 970s *and* 980s.

In 1080p and 1440p, for all intents and purposes, 290Xs trade blows with 970s and the 980s reign supreme. But at 4K, the situation completely shifts and the 290Xs come out on top.

JarredWalton - Saturday, February 7, 2015 - link

Note that my list of games is all relatively recent stuff, so the fact that CF fails completely in a few titles certainly hurts -- and that's reflected in my averages. If we toss out ACU, CoH2, DyLi, FC4, LotF... then yes, it would do better, but then I'm cherry picking results to show the potential rather than the reality of CrossFire.

Kjella - Saturday, February 7, 2015 - link

Owner of 2x970s here, reviews show that 2x780 Ti generally wins current games at 3840x2160 with only 3GB of memory so it doesn't seem to matter much today, I've seen no non-synthetic benchmarks at playable resolutions/frame rates to indicate otherwise. Nobody knows what future games will bring but I would have bought them as a "3.5 GB" card too, though of course I feel a little cheated that they're worse than the 980 GTX in a way I didn't expect.

JarredWalton - Saturday, February 7, 2015 - link

I don't have 780 Ti (or 780 SLI for that matter), but interestingly GTX 780 just barely ends up ahead of a single GTX 970 at QHD Ultra and 4K High/Ultra settings. There are times where 970 leads, but the times when 780 leads are by slightly higher margins. Effectively, GTX 970 is equal to GTX 780 but at a lower price point and with less power.

mapesdhs - Tuesday, February 10, 2015 - link

That's the best summary I've read on all this IMO, ie. situations which would demonstrate the 970's RAM issue are where performance isn't good enough anyway, typically 4K gaming, so who cares? Right now, if one wants better performance at that level, then buy one or more 980, 290X, whatever, because two of any lesser card aren't going to be quick enough by definition.

I bought two 980s, first all-new GPU purchase since I bought two of EVGA's infamous GTX 460 FTW cards when they first came out. Very pleased with the 980s, they're excellent cards. Bought a 3rd for benchmarking, etc., the three combined give 8731 for Fire Strike Ultra (result no. 4024577), I believe the highest S1155 result atm, but the fps numbers still aren't really that high.

Truth is, by the time a significant number of people will be concerned about a typical game using more than 3.5GB RAM, GPU performance needs to be a heck of a lot quicker than a 970. It's a non-issue. None of the NVIDIA-hate I've seen changes the fact that the 970 is a very nice card, and nothing changes how well it performs as shown in initial reviews. I'll probably get one for my brother's bday PC I'm building, to go with a 3930K setup.

Most of those complaining about all this are people who IMO have chosen to believe that NVIDIA did all of this deliberately, because they want that to be the case, irrespective of what actually happened, and no amount of evidence to the contrary will change their minds. The 1st Rule gets broken again...

As I posted elsewhere, all those complaining about the specs discrepancy do however seem perfectly happy for AMD (and indeed NVIDIA) to market dual-GPU cards as having double RAM numbers which is completely wrong, not just misleading. Incredible hypocrisy here.

Ian.