GeForce GTX 970: Correcting The Specs & Exploring Memory Allocation

by Ryan Smith on January 26, 2015 1:00 PM EST

Segmented Memory Allocation in Software

So far we’ve talked about the hardware, and having finally explained the hardware basis of segmented memory we can begin to understand the role software plays, and how software allocates memory between the two segments.

From a low-level perspective, video memory management under Windows is handled jointly by the operating system and the video drivers. Strictly speaking, Windows controls video memory management (this being one of the big changes introduced with Windows Vista and the Windows Display Driver Model), while the video drivers have a significant amount of input by hinting at how things should be laid out.

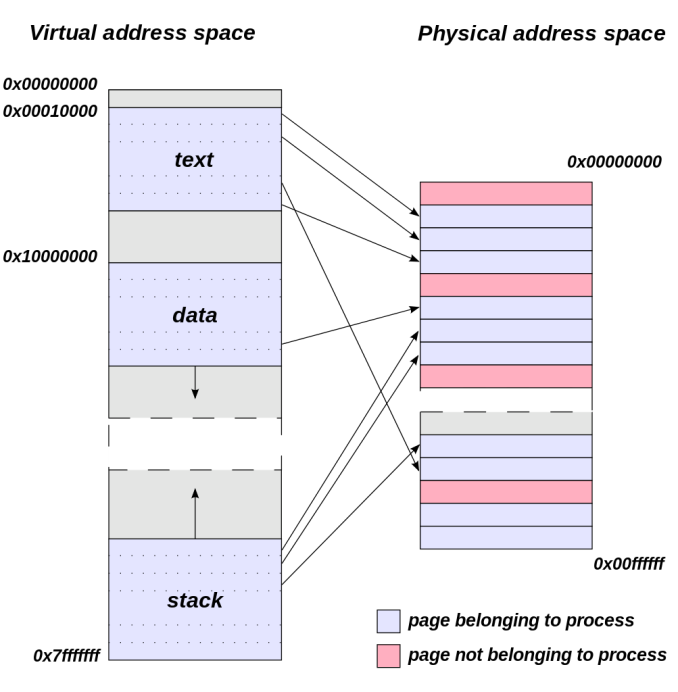

Meanwhile from an application’s perspective all video memory and its address space is virtual. This means that applications are writing to their own private space, blissfully unaware of what else is in video memory and where it may be, or for that matter where in memory (or even which memory) they are writing. As a result of this memory virtualization it falls to the OS and video drivers to decide where in physical VRAM to allocate memory requests, and for the GTX 970 in particular, whether to put a request in the 3.5GB segment, the 512MB segment, or in the worst case scenario system memory over PCIe.

Virtual Address Space (Image Courtesy Dysprosia)

Without going so far as to rehash the entire theory of memory management and caching, the goal of memory management in the case of the GTX 970 is to allocate resources over the entire 4GB of VRAM such that high-priority items end up in the fast segment and low-priority items end up in the slow segment. To do this, NVIDIA directs the first 3.5GB of memory allocations to the faster 3.5GB segment, and only for memory allocations beyond 3.5GB does it turn to the 512MB segment, as there’s no benefit to using the slower segment so long as there’s available space in the faster segment.
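To make the fill order concrete, here is a minimal sketch of a "fastest segment first" placement policy. It is a toy model, not NVIDIA's driver logic: the `Segment` class, the `place` function, and the whole-request first-fit rule are all assumptions made for illustration, and only the segment sizes and the fallback order come from the description above.

```python
from dataclasses import dataclass

MB = 1024 * 1024


@dataclass
class Segment:
    """A hypothetical pool of physical VRAM (not a real driver structure)."""
    name: str
    capacity: int  # bytes
    used: int = 0

    def free(self) -> int:
        return self.capacity - self.used


def place(request_bytes: int, segments: list) -> str:
    """Place a request in the first segment with enough free space.

    Segments are ordered fastest first, so the slow segment is only touched
    once the fast one cannot hold the request. If nothing fits, the request
    falls back to system memory over PCIe.
    """
    for seg in segments:
        if seg.free() >= request_bytes:
            seg.used += request_bytes
            return seg.name
    return "system memory (PCIe)"


segments = [Segment("fast 3.5GB", 3584 * MB), Segment("slow 512MB", 512 * MB)]

placements = [place(1024 * MB, segments) for _ in range(3)]  # 3GB so far
placements.append(place(512 * MB, segments))  # fills the fast segment exactly
placements.append(place(256 * MB, segments))  # first spill into the slow segment
placements.append(place(512 * MB, segments))  # slow segment has only 256MB left

for where in placements:
    print(where)
```

In this model the first four requests land in the fast segment, the fifth spills to the slow segment, and the sixth no longer fits in VRAM at all. A real driver can also move resources between segments after the fact, which this sketch does not attempt.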

The complex part of this process occurs once both memory segments are in use, at which point NVIDIA’s heuristics come into play to try to best determine which resources to allocate to which segments. How NVIDIA does this is very much a “secret sauce” scenario for the company, but from a high level identifying the type of resource and when it was last used are good ways to figure out where to send a resource. Frame buffers, render targets, UAVs, and other intermediate buffers, for example, are the last things you want to send to the slow segment; meanwhile textures, resources not in active use (e.g. cached), and resources belonging to inactive applications would be great candidates to send off to the slower segment. Given the way NVIDIA describes the process, we suspect there are even per-application optimizations in use, though NVIDIA can clearly handle generic cases as well.
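Since NVIDIA's actual heuristics are not public, the following is purely a hypothetical sketch of how "resource type plus last use" could be turned into a ranking. Every name here (`Resource`, `demotion_score`, `pick_for_slow_segment`), the scoring weights, and the sample resources are invented for illustration; the only things taken from the discussion above are which kinds of resources should stay fast and which are good candidates for the slow segment.

```python
from dataclasses import dataclass

# Hypothetical set of resource kinds that should never be demoted while in use.
LATENCY_SENSITIVE = {"frame_buffer", "render_target", "uav"}


@dataclass
class Resource:
    name: str
    kind: str               # e.g. "render_target", "texture"
    size_mb: int
    last_used_frame: int
    app_active: bool = True


def demotion_score(res: Resource, current_frame: int) -> float:
    """Higher score means a better candidate for the slow segment."""
    if res.kind in LATENCY_SENSITIVE and res.app_active:
        return 0.0
    score = float(current_frame - res.last_used_frame)  # frames spent idle
    if not res.app_active:
        score += 1000.0  # arbitrary large bonus for inactive applications
    return score


def pick_for_slow_segment(resources, current_frame, slow_capacity_mb=512):
    """Greedily fill the slow segment with the highest-scoring resources."""
    ranked = sorted(resources,
                    key=lambda r: demotion_score(r, current_frame),
                    reverse=True)
    chosen, used_mb = [], 0
    for res in ranked:
        fits = used_mb + res.size_mb <= slow_capacity_mb
        if demotion_score(res, current_frame) > 0 and fits:
            chosen.append(res.name)
            used_mb += res.size_mb
    return chosen


resources = [
    Resource("back_buffer", "frame_buffer", 32, last_used_frame=100),
    Resource("shadow_map", "render_target", 64, last_used_frame=100),
    Resource("cached_level_textures", "texture", 300, last_used_frame=10),
    Resource("hud_texture", "texture", 16, last_used_frame=100),
    Resource("browser_surface", "texture", 200, last_used_frame=90,
             app_active=False),
]
demoted = pick_for_slow_segment(resources, current_frame=100)
print(demoted)
```

With these made-up inputs, the inactive application's surface and the long-idle cached textures are sent to the slow segment, while the frame buffer, the render target, and the texture used this frame stay in the fast segment.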

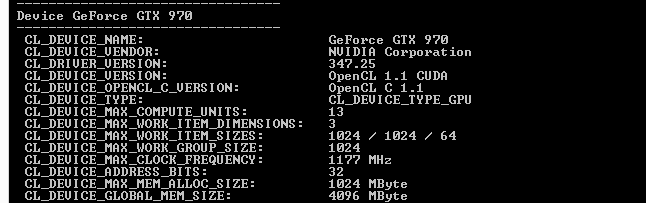

From an API perspective this applies to both graphics and compute, though it’s a safe bet that graphics is the more easily and accurately handled of the two thanks to the rigid nature of graphics rendering. Direct3D, OpenGL, CUDA, and OpenCL all see and have access to the full 4GB of memory available on the GTX 970, and from the perspective of the applications using these APIs the 4GB of memory is identical, the segments being abstracted. This is also why applications attempting to benchmark the memory in a piecemeal fashion will not find slow memory areas until the end of their run, as their earlier allocations will be in the fast segment and only finally spill over to the slow segment once the fast segment is full.
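The benchmark behavior can be illustrated with a little arithmetic. The sketch below assumes a strictly sequential fill (fast segment first, then slow) and a hypothetical benchmark that allocates fixed 128MB chunks; the chunk size and the `segment_of_chunk` helper are our own choices, not something any particular tool is known to use.

```python
MB = 1024 * 1024
FAST_BYTES = 3584 * MB  # 3.5GB segment
SLOW_BYTES = 512 * MB   # 512MB segment


def segment_of_chunk(index: int, chunk_bytes: int) -> str:
    """Label where a chunk lands, assuming strictly sequential fill."""
    start = index * chunk_bytes
    end = start + chunk_bytes
    if end <= FAST_BYTES:
        return "fast"
    if start >= FAST_BYTES:
        return "slow"
    return "straddles"  # chunk crosses the segment boundary


chunk = 128 * MB
n_chunks = (FAST_BYTES + SLOW_BYTES) // chunk
labels = [segment_of_chunk(i, chunk) for i in range(n_chunks)]
first_slow = labels.index("slow")

print(n_chunks, first_slow, labels.count("slow"))  # prints: 32 28 4
```

Under these assumptions, 28 of the 32 chunks sit entirely in the fast segment and only the last four are slow, which matches the pattern of a benchmark that looks normal until the very end of its run.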

GeForce GTX 970 Addressable VRAM

| API | Memory |
| --- | --- |
| Direct3D | 4GB |
| OpenGL | 4GB |
| CUDA | 4GB |
| OpenCL | 4GB |

The one remaining unknown element here (and something NVIDIA is still investigating) is why some users have been seeing total VRAM allocation top out at 3.5GB on a GTX 970, but go to 4GB on a GTX 980. Again from a high-level perspective all of this segmentation is abstracted, so games should not be aware of what’s going on under the hood.

Overall then the role of software in memory allocation is relatively straightforward since it’s layered on top of the segments. Applications have access to the full 4GB, and due to the fact that application memory space is virtualized the existence and usage of the memory segments is abstracted from the application, with the physical memory allocation handled by the OS and driver. Only after 3.5GB is requested – enough to fill the entire 3.5GB segment – does the 512MB segment get used, at which point NVIDIA attempts to place the least sensitive/important data in the slower segment.

398 Comments

vegemeister - Saturday, January 31, 2015 - link

They do *not* stand. Anandtech's own 970 review did not present frame interval statistics. FPS measurements are only useful if you are comparing trials in a controlled experiment. Overclocking, changing AA levels, and the like.

FlushedBubblyJock - Friday, January 30, 2015 - link

Oh blindly accepting NO EXCUSE looks to be the standard here: " The incorrect number, provided to me (and other reviewers) by AMD PR around 3 months ago was 2 billion transistors. The actual transistor count for Bulldozer is apparently 1.2 billion transistors. I don't have an explanation as to why the original number was wrong, just that the new number has been triple checked by my contact and is indeed right. "

LOL - it's okay man, AMD did it huge and never gave a reason for the giant PR lie, and we all were required to pretend it didn't matter, as we were told in the write up, here.

http://www.anandtech.com/show/5176/amd-revises-bul...

Jon Tseng - Monday, January 26, 2015 - link

Hey man if you really think this is a terrible card I'd be happy to buy yours off you (I assume from your righteously aggrieved tone you /must/ be a betrayed GTX 970 owner right?).

How about you sell it to me for $200? I can do PayPal! After all given its /such/ a PoS card I'm sure you can't argue its worth any more than that right?

Then I can go and muck around SLI-ing AC:Unity at stupid resolutions (plus I hear it might be able to actually run Crysis), and you can go off and sue NVidia for the extra $150. Then we're both happy! :-) :-)

tuxRoller - Monday, January 26, 2015 - link

Hey, given the card is so fantastic, why not $400?

cuex - Tuesday, January 27, 2015 - link

http://www.newegg.com/Product/ProductList.aspx?Sub...

how about a kg of shit?

Jon Tseng - Tuesday, January 27, 2015 - link

Ummm... Because I can buy one new for $350?

Oxford Guy - Tuesday, January 27, 2015 - link

"Hey man if you really think this is a terrible card I'd be happy to buy yours off you..."

This is a red herring.

Jon Tseng - Tuesday, January 27, 2015 - link

Not really. I'm actually making two serious underlying points:

1) A lot of the people who are raising up a sh*tstorm on this thread ("OMG NVDA ARE EVIL THIS IS AN EVIL MASTER PLAN JEN HSUN IS THE ANTICHRIST") likely don't own a GTX 970. Note that comment from GTX 970 owners (myself included) is largely positive.

By calling out people to put up and sell out I am highlighting this fact - most critics can't put up and sell out because they are not GTX 970 owners with first hand experience of the product.

2) The underlying logic of critics is that based on the news we heard yesterday this card is "gimped" - i.e. it's suddenly worth less than we thought it was.

However, despite the fact that the real-world performance of the card (which we all have seen in independent benchmarks) has not changed, and the price of the card has not changed, this card has suddenly become bad overnight. Remember there were plenty of UHD benchmarks on real world games conducted at time of launch. Those numbers have not changed.

By posing rhetorical question "has the card suddenly changed overnight so that its real world value has fallen from $350 to $200" I am trying to bring out the incongruity between the two positions.

Sushisamurai - Tuesday, January 27, 2015 - link

I think ur getting trolled...

Anyways, this just reminds me of the more in-depth analysis of Apple's A8X chip - If only the chip got "faster" overnight....

neilbrysonmc - Wednesday, January 28, 2015 - link

I'm a new GTX 970 owner as well and I'm loving it. As long as I can run almost every game on ultra @ 1080p, I'm fine with it.