Acer XB280HK 4K G-SYNC Monitor Review

by Chris Heinonen & Jarred Walton on January 28, 2015 10:00 AM EST

A Brief Overview of G-SYNC

While the other performance characteristics of a monitor are usually going to be the primary concern, with a G-SYNC display the raison d'etre is gaming. As such, we’re going to start by going over the gaming aspects of the XB280HK. If you don’t care about gaming, there’s really not much point in paying the price premium for a G-SYNC enabled display; you could get very much the same experience and quality at a lower price. It should go without saying, but let’s make this clear: you’ll want an NVIDIA GTX level graphics card to take advantage of G-SYNC. With that out of the way, let’s talk briefly about what G-SYNC does and why it’s useful for gaming. We’ve covered a lot of this before, but for those less familiar with why technologies like G-SYNC are beneficial, we’ll cover the highlights.

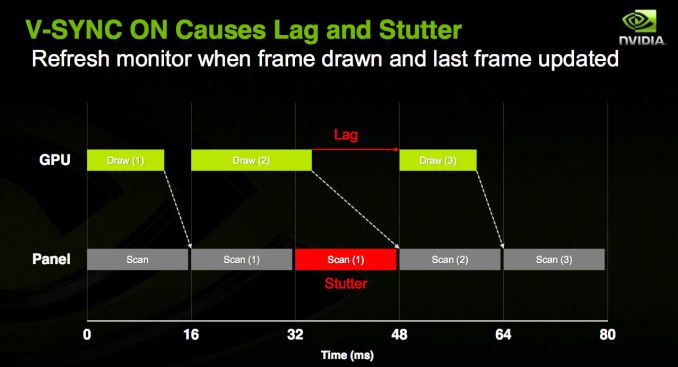

While games render their content internally, we view the final output on a display. The problem with this approach is that the display normally updates the content being shown at fixed intervals, and in the era of LCDs that usually means a refresh rate of 60Hz (though there are LCDs that can be run at higher rates). If a game renders faster than 60 FPS (Frames Per Second), there’s extra work being done that doesn’t provide much benefit, whereas rendering slower than 60 FPS creates problems. As the display content is updated 60 times per second, there are several options available.

- Show the same content on the screen for two consecutive updates (VSYNC On).

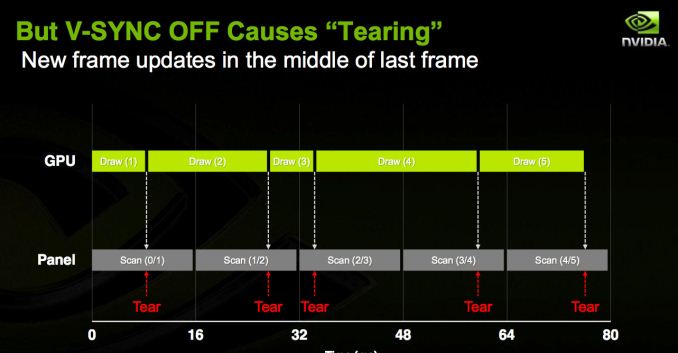

- Show the latest frame as soon as possible, even if the change occurs during a screen update (VSYNC Off).

- Create additional rendering buffers so that internal rendering rate isn’t limited by the refresh rate (Triple Buffering).

As you might have guessed, there are problems with each of these approaches. In the case of enabling VSYNC, this potentially limits your frame rate to half (or one-third, one-fourth, etc.) of your refresh rate. In the worst case, if you have a PC capable of rendering a game at 59 FPS, it will always take just a bit too long to finish the frame and the display will wait for updates, effectively giving you 30 FPS. Perhaps even worse, if a game runs between 55-65 FPS, you’ll get a bunch of frames at 60 FPS and a bunch more at 30 FPS, which can result in the game stuttering or feeling choppy.
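To make the 59 FPS example concrete, here is a minimal Python sketch of the VSYNC arithmetic. It assumes a simplified model that is not from any driver or API: every frame takes exactly the same time to render, buffering is plain double buffering, and a finished frame is shown at the next refresh boundary. The function name `vsync_effective_fps` is hypothetical.

```python
import math

def vsync_effective_fps(render_fps: float, refresh_hz: float = 60.0) -> float:
    """Steady-state displayed frame rate with double-buffered VSYNC.

    Simplified model (an assumption for illustration): every frame takes
    exactly 1/render_fps seconds, and a finished frame is shown at the
    next refresh boundary.
    """
    # Number of refresh intervals each frame occupies, rounded up.
    # The small epsilon guards against floating-point error at exact multiples.
    intervals = max(1, math.ceil(refresh_hz / render_fps - 1e-9))
    return refresh_hz / intervals

for fps in (120, 60, 59, 45, 30, 29):
    print(f"render {fps:>3} FPS -> displayed {vsync_effective_fps(fps):.0f} FPS")
```

Under these assumptions, anything from 30 up to just under 60 FPS is displayed at 30 FPS, and falling just short of 30 drops the displayed rate to 20 FPS. Real games have variable frame times, which is where the mix of 60 FPS and 30 FPS frames comes from.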

Turning VSYNC off only partially addresses the problem, as now the display will get parts of two or more frames each update, leading to image tearing. Triple buffering tries to get around the issue by using two off-screen buffers in addition to the on-screen buffer, allowing the game to always keep rendering as fast as possible (one of the back buffers always holds a complete screen update). However, it can result in multiple frames being drawn but never displayed, it requires even more VRAM (which can be particularly problematic with 4K content), and it can potentially introduce an extra frame of lag between input sent to the PC and when that input shows up on the screen.
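For a rough sense of the VRAM cost, the sketch below estimates the size of the color buffers alone. It assumes 32-bit color (4 bytes per pixel) and ignores depth buffers, anti-aliasing, and any driver overhead, so real usage will be higher. The helper `framebuffer_mib` is hypothetical.

```python
def framebuffer_mib(width: int, height: int,
                    bytes_per_pixel: int = 4, buffers: int = 1) -> float:
    """Approximate color-buffer memory in MiB.

    Assumes uncompressed 32-bit color by default and nothing else
    (no depth/stencil, no MSAA); purely illustrative.
    """
    return width * height * bytes_per_pixel * buffers / (1024 ** 2)

for name, (w, h) in {"1080p": (1920, 1080),
                     "QHD": (2560, 1440),
                     "4K": (3840, 2160)}.items():
    double = framebuffer_mib(w, h, buffers=2)
    triple = framebuffer_mib(w, h, buffers=3)
    print(f"{name}: double {double:.1f} MiB, triple {triple:.1f} MiB")
```

At 4K a single buffer works out to about 31.6 MiB under these assumptions, four times the 1080p figure, so the third buffer costs as much as four 1080p buffers would.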

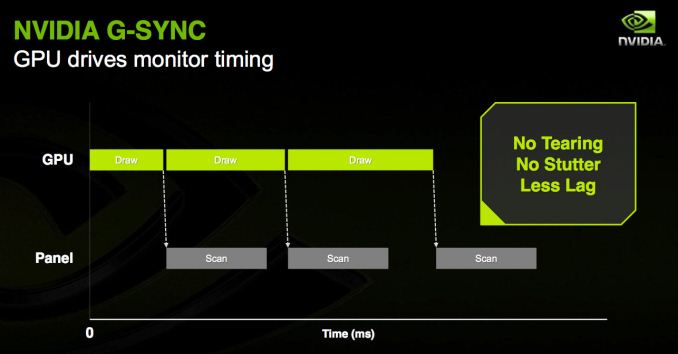

G-SYNC is thus a solution to the above problems, allowing for adaptive refresh rates. Your PC can render frames as fast as it’s able, and the display will swap to the latest frame as soon as it’s available. The result can be a rather dramatic difference in the smoothness of a game, particularly when you’re only able to hit 40-50 FPS, and this is all done with no image tearing. It’s a win-win scenario...with a few drawbacks.

First, as noted above, G-SYNC is for NVIDIA GPUs only. (AMD’s FreeSync alternative should start showing up in displays later this month, as working products were demoed at CES.) The second is that the cost of licensing G-SYNC technology from NVIDIA, along with some additional hardware requirements, means that G-SYNC displays carry a price premium compared to otherwise identical but non-G-SYNC hardware.

4K G-SYNC in Practice

We’ve had G-SYNC displays for most of the past year, though the earliest option was a DIY kit where you had to basically mod your monitor, but the Acer XB280HK is something new: a 4Kp60 G-SYNC display. That’s potentially important because where high-end GPUs might easily run most games at frame rates above 60 FPS at 1920x1080 and even 2560x1440, even a couple of GTX 980 GPUs will struggle to break 60 FPS at 4K with a lot of recent releases. My personal feeling is that G-SYNC with 60Hz displays makes the most sense when you can reach 40-55 FPS; if you’re running slower than that, you need to lower the quality settings or resolution, while if you’re running faster than that it’s close enough to 60 FPS that a few minor tweaks to settings (or a slight overclock of the GPU) can make up the difference.

To get straight to the point, G-SYNC on the XB280HK works just as you would expect. For those times when frame rates are hovering in the “optimal” 40+ FPS range, it’s great to get the improved smoothness and lack of tearing. In fact, in many cases even when you’re able to average more than 60 FPS, G-SYNC is beneficial as it keeps the minimum frame rates still feeling as smooth as possible – so if you’re getting occasional dips to 50 FPS but mostly staying at or above 60, you won’t notice the stutter much if at all. Without G-SYNC (and with VSYNC enabled), those dips end up dropping to 30 FPS, and that’s a big enough difference that you can see and feel it.
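The dip from 60 to 50 FPS can be expressed as per-frame time on screen. The sketch below is a simplified model, not a description of how the G-SYNC module actually works: with VSYNC, a frame's time on screen is rounded up to a whole number of 60Hz refreshes, while with adaptive refresh the display waits exactly as long as the frame took, limited only by the panel's maximum rate. Behavior below the panel's minimum refresh rate is not modeled, and the function names are hypothetical.

```python
import math

REFRESH_HZ = 60.0
REFRESH_MS = 1000.0 / REFRESH_HZ  # about 16.7 ms per refresh at 60Hz

def vsync_display_ms(render_ms: float) -> float:
    """Time on screen with double-buffered VSYNC: whole refreshes, rounded up."""
    return math.ceil(render_ms / REFRESH_MS - 1e-9) * REFRESH_MS

def adaptive_display_ms(render_ms: float) -> float:
    """Time on screen with adaptive refresh: the frame's own render time,
    but never shorter than one refresh at the panel's maximum rate."""
    return max(render_ms, REFRESH_MS)

for fps in (60, 50, 45, 40):
    render_ms = 1000.0 / fps
    print(f"{fps} FPS: VSYNC {vsync_display_ms(render_ms):.1f} ms, "
          f"adaptive {adaptive_display_ms(render_ms):.1f} ms")
```

In this model a 50 FPS frame (20 ms) stays on screen for 33.3 ms with VSYNC but only 20 ms with adaptive refresh, which is the jump from 16.7 ms to 33.3 ms that you can see and feel.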

There are problems, however, and the biggest is that the native resolution of 3840x2160 still isn’t really ready for prime time (i.e. mainstream users). If you’re running a single GPU, you’re definitely going to fall short of 40 FPS in plenty of games, so you’ll need to further reduce the image quality or lower the resolution – and in fact, there are plenty of times where I’ve run the XB280HK at QHD or even 1080p to improve frame rates (depending on the game and GPU I was using). But why buy a 4K screen to run it at QHD or 1080p? Along with this, while G-SYNC can refresh the panel at rates as low as 30Hz, I find that at anything below 40Hz the pixels on the screen start to decay between refreshes, resulting in a slight flicker; hence, the desire to stay above 40 FPS.

The high resolution also means working in normal applications at 100% scaling can be a bit of an eyestrain (please, no comments from the young bucks with eagle eyes; it’s a real concern and I speak from personal experience), and running at 125% or 150% scaling doesn’t always work properly. Before anyone starts to talk about how DPI scaling has improved, let me quickly point out that during the holiday season, at least three major games I know of shipped in a state where they would break if your Windows DPI was set to something other than 100%. Oops. I keep hoping things will improve, but the software support for HiDPI still lags behind where it ought to be.

The other problem with 4Kp60 is that… well, 60Hz just isn’t the greatest experience in the world. I have an older 1080p 3D Vision display that would run the Windows desktop at 120Hz, and while it’s not the sort of night and day difference of some technologies, I definitely think 75-85 Hz would be a much better “default” than 60Hz. There’s also something to be said for tearing being less noticeable at higher refresh rates. And there’s an alternative to 4Kp60 G-SYNC: 1440p144 (QHD with 144Hz) G-SYNC also exists.

Without getting too far off the subject, we have a review of the ASUS ROG Swift PG278Q in the works. Personally, I find the experience of QHD with G-SYNC and refresh rates of 30-144 Hz to be superior for the vast majority of use cases to 4K 30-60Hz G-SYNC. Others will likely disagree and that’s fine, but on a 27” or 28” panel I just feel QHD is a better option – not to mention gaming at acceptable frame rates at QHD is much easier to achieve than 4K gaming.

There are some other aspects of using this display that I noticed, and while they're probably not a huge issue as most people will be using the XB280HK with NVIDIA GPUs, it’s worth noting that the behavior of my XB280HK with AMD GPUs has at times been quirky. For example, I purchased a longer DisplayPort cable because the included 2m cable can be a bit of a tight reach for my work area. The 3m cable I bought worked fine on all the NVIDIA GPUs I tested, but when I switched to an AMD GPU… no such luck. I had to drop to 4K @ 24Hz to get a stable image, so I ended up moving my PC around and going back to the original cable.

I’ve also noticed that a very large number of games with AMD GPUs don’t properly scale the resolution to fill the whole screen, so QHD with AMD GPUs often results in a lot of black borders. Perhaps even worse, every game with Mantle support that I’ve tried fails to scale the resolution to the full screen when using Mantle. So Dragon Age Inquisition at 1080p with Mantle fills the middle fourth of the display area and everything else is black. The problem would seem to lie with the drivers and/or Mantle, but it’s odd nonetheless – odd and undesirable; let's hope this gets fixed.

69 Comments

inighthawki - Friday, January 30, 2015 - link

In what way is it incorrect?

perpetualdark - Wednesday, February 4, 2015 - link

Hertz refers to cycles per second, and with G-Sync the display matches the number of cycles per second to the frames per second the graphics card is able to send to the display, so in actuality, Hertz is indeed the correct term and it is being used correctly. At 45fps, the monitor is also at 45hz refresh rate.

edzieba - Wednesday, January 28, 2015 - link

"We are still using DisplayPort 1.2 which means utilizing MST for 60Hz refresh rates." Huh-what? DP1.2 has the bandwidth to carry 4k60 with a single stream. Previous display controllers could not do so unless paired, but that was a problem at the sink end. There are several 4k60 SST monitors available now (e.g. P2415Q).

TallestJon96 - Wednesday, January 28, 2015 - link

Sync is a great way to make 4k more stable and usable. However, this is proprietary, costs more, and 4k scaling is just ok. Anyone interested in this is better off waiting for a better, cheaper solution that isn't stuck with NVIDIA.

As mentioned before, the SWIFT is simply a better option, better performance at 1440p, better UI scaling, higher maximum FPS. Only downside is lower Res, but 1440p certainly isn't bad.

A very niche product with a premium, but all that being said I bet Crisis at 4k with G-Sync is amazing.

Tunnah - Wednesday, January 28, 2015 - link

"Other 4K 28” IPS displays cost at least as much and lack G-SYNC, making them a much worse choice for gaming than the Acer."

But you leave out the fact that 4K 28" TN panels are a helluva lot cheaper. Gamers typically look for TN panels anyway because of refresh issues, so the comparison should be to other TN panels, not to IPS, and that comparison is G-SYNC is extremely expensive. It's a neat feature and all, but I would argue it's much better to spend the extra on competent graphics cards that could sustain 60fps rather than a monitor that handles the framerate drop better.

Tunnah - Wednesday, January 28, 2015 - link

Response time issues even

Midwayman - Wednesday, January 28, 2015 - link

If it ran 1080 @ 144hz as well as 4k @ 60hz this would be a winning combo. Getting stuck with 60hz really sucks for FPS games. I wouldn't mind playing my RPGs at 40-60fps with gsync though.

DanNeely - Wednesday, January 28, 2015 - link

"Like most G-SYNC displays, the Acer has but a single DisplayPort input. G-SYNC only works with DisplayPort, and if you didn’t care about G-SYNC you would have bought a different monitor."

Running a second or third cable and hitting the switch input button on your monitor if you occasionally need to put a real screen on a second box is a lot easier than swapping the cable behind the monitor and a lot cheaper than a non-VGA KVM (and the only 4k capable options on the market are crazy expensive).

The real reason is probably that nVidia was trying to limit the price premium from getting any higher than it already is, and avoiding a second input helped simplify the chip design. (In addition to the time element for a bigger design, big FPGAs aren't cheap.)

JarredWalton - Wednesday, January 28, 2015 - link

Well, you're not going to do 60Hz at 4K with dual-link DVI, and HDMI 2.0 wasn't available when this was being developed. A second input might have been nice, but that's just an added expense and not likely to be used a lot IMO. You're right on keeping the cost down, though -- $800 is already a lot to ask, and if you had to charge $900 to get additional inputs I don't think most people would bite.

Mustalainen - Wednesday, January 28, 2015 - link

I was waiting for the DELL P2715Q but decided to get this monitor instead (about 2 weeks ago). Before I got this I borrowed an ASUS ROG SWIFT PG278Q that I used for a couple of weeks. The SWIFT was probably the best monitor that I had used until that point in time. But to be completely honest, I like the XB280HK better. The colors, viewing angles (and so on) are pretty much the same (in my opinion) as I did my "noob" comparison. My monitor has some minor blb in the bottom, barely notable while the SWIFT seems "flawless". The SWIFT felt as if it was built better and has better materials. Still, the 4k was a deal breaker for me. The picture just looks so much better compared to 1440p. The difference between 1440p and 4k? Well after using the XB280HK I started to think that my old 24" 1200p was broken. It just looked as if it had these huge pixels. This never happened with the SWIFT. And the hertz? Well I'm not a gamer. I play some RPGs now and then but most of the time my screen is filled with text and code. The 60hz seems to be sufficient in these cases. I got the XB280HK for 599 euro and compared to other monitors in that price range it felt like a good option. I'm very happy with it and dare to recommend this to anyone thinking about getting a 4k monitor. If IPS is your thing, wait for the DELL. This is probably the only regret I have (not having patience to wait for the DELL).

I would also like to point out that the hype of running a 4k monitor seems to be exaggerated. I manage to run my games at medium settings with a single 660 gtx. Considering I run 3 monitors with different resolutions and still have playable fps just shows that you don't need a 980 or 295 to power one of these things (maybe if the settings are maxed out and you want max fps).