NVIDIA Tegra X1 Preview & Architecture Analysis

by Joshua Ho & Ryan Smith on January 5, 2015 1:00 AM EST

Posted in: SoCs, Arm, Project Denver, Mobile, 20nm, GPUs, Tablets, NVIDIA, Cortex A57, Tegra X1

GPU Performance Benchmarks

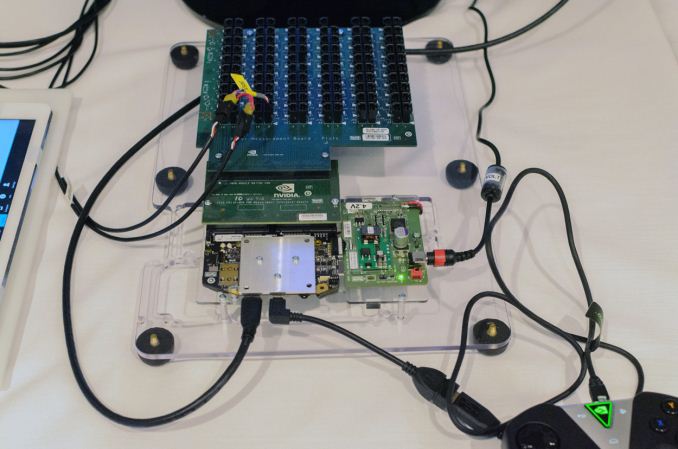

As part of today’s announcement of the Tegra X1, NVIDIA also gave us a short opportunity to benchmark the X1 reference platform under controlled circumstances. In this case NVIDIA had several reference platforms plugged in and running, pre-loaded with various benchmark applications. The reference platforms themselves had a simple heatspreader mounted on them, intended to replicate the ~5W heat dissipation capabilities of a tablet.

The purpose of this demonstration was two-fold: first, to show that X1 was up and running and capable of NVIDIA’s promised features, and second, to showcase the strong GPU performance of the platform. NVIDIA also had an iPad Air 2 on hand for power testing, running Apple’s latest and greatest SoC, the A8X. NVIDIA has made it clear that they consider Apple the SoC manufacturer to beat right now, as A8X’s PowerVR GX6850 GPU is the fastest among currently shipping SoCs.

It goes without saying that the results should be taken with an appropriate grain of salt until we can get Tegra X1 back to our labs. However, we have seen all of the testing first-hand, and as best as we can tell NVIDIA’s tests were sincere.

NVIDIA Tegra X1 Controlled Benchmarks

| Benchmark | A8X (AT) | K1 (AT) | X1 (NV) |
|---|---|---|---|
| BaseMark X 1.1 Dunes (Offscreen) | 40.2fps | 36.3fps | 56.9fps |
| 3DMark 1.2 Unlimited (Graphics Score) | 31781 | 36688 | 58448 |
| GFXBench 3.0 Manhattan 1080p (Offscreen) | 32.6fps | 31.7fps | 63.6fps |

For benchmarking, NVIDIA had BaseMark X 1.1, 3DMark Unlimited 1.2 and GFXBench 3.0 up and running. Our X1 numbers come from the benchmarks we ran as part of NVIDIA’s controlled test, while the A8X and K1 numbers come from our Mobile Bench.

NVIDIA’s stated goal with X1 is to (roughly) double K1’s GPU performance, and while these controlled benchmarks for the most part don’t make it quite that far, X1 is still a significant improvement over K1. NVIDIA does meet their goal under Manhattan, where performance is almost exactly doubled, while 3DMark and BaseMark X increased by 59% and 57% respectively.
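The percentages above can be checked directly against the table. The following is a minimal sketch rather than anything NVIDIA or the benchmark vendors provide; the dictionary layout and the `percent_gain` helper are our own illustrative names, and the scores are simply copied from the K1 (AT) and X1 (NV) columns.

```python
# Scores copied from the controlled-benchmark table: (K1, X1) per test.
# K1 figures are from AnandTech's own testing, X1 figures from NVIDIA's
# controlled test. The structure and names here are illustrative only.
scores = {
    "BaseMark X 1.1 Dunes (Offscreen)": (36.3, 56.9),
    "3DMark 1.2 Unlimited (Graphics Score)": (36688, 58448),
    "GFXBench 3.0 Manhattan 1080p (Offscreen)": (31.7, 63.6),
}


def percent_gain(k1, x1):
    """Return X1's improvement over K1 as a percentage."""
    return (x1 / k1 - 1.0) * 100.0


gains = {name: percent_gain(k1, x1) for name, (k1, x1) in scores.items()}

for name, gain in gains.items():
    print(f"{name}: +{gain:.1f}%")
```

Run as written, this gives roughly +56.7% for BaseMark X, +59.3% for 3DMark and +100.6% for Manhattan, so only Manhattan reaches the 2x target.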

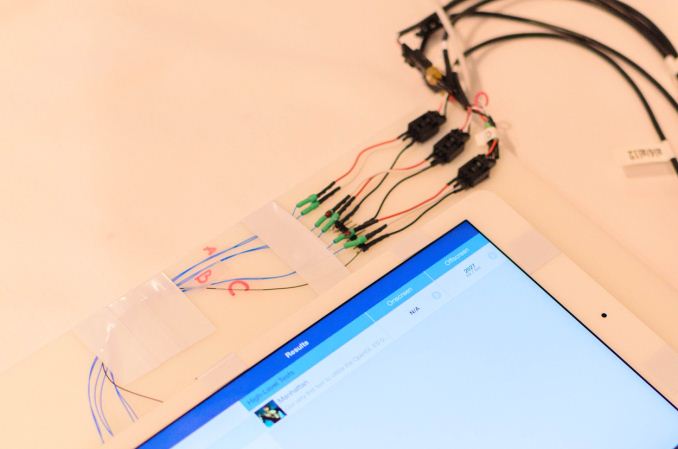

Finally, for power testing NVIDIA had an X1 reference platform and an iPad Air 2 rigged to measure the power consumption from the devices’ respective GPU power rails. The purpose of this test was to showcase that, thanks to X1’s energy optimizations, X1 is capable of delivering the same GPU performance as the A8X GPU while drawing significantly less power; in other words, that X1’s GPU is more efficient than A8X’s GX6850. To be clear, these are just GPU power measurements and not total platform power measurements, so this won’t account for CPU differences (e.g. A57 versus Enhanced Cyclone) or the power impact of LPDDR4.

Top: Tegra X1 Reference Platform. Bottom: iPad Air 2

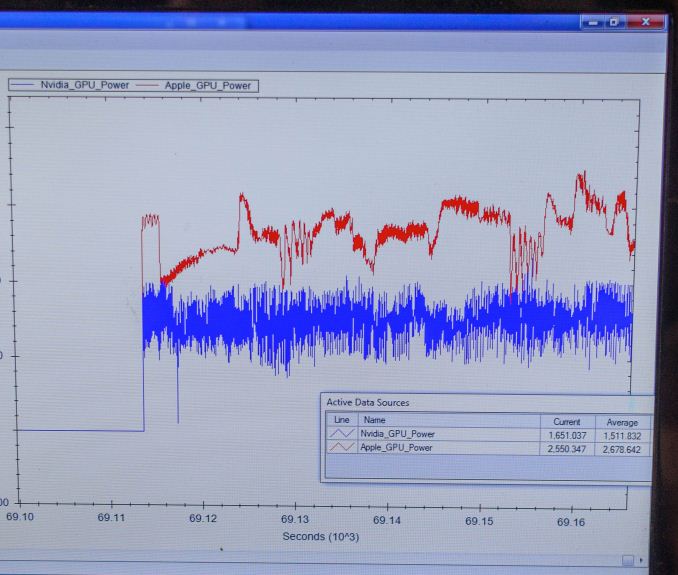

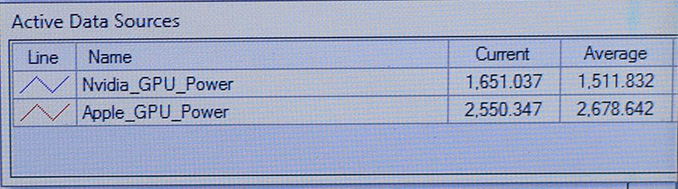

For power testing, NVIDIA ran Manhattan 1080p (offscreen) with X1’s GPU underclocked to match the performance of the A8X at roughly 33fps. Pictured are the average power consumption figures (in watts) for the X1 and A8X respectively.

NVIDIA’s tools show the X1’s GPU averages 1.51W over the run of Manhattan. Meanwhile the A8X’s GPU averages 2.67W, over a watt more for otherwise equal performance. This test is especially notable since both SoCs are manufactured on the same TSMC 20nm SoC process, which means that the power difference between the two devices comes down to the energy efficiency of the GPU designs rather than a manufacturing process advantage.
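To put the two power figures in efficiency terms, here is a small worked calculation. It is a sketch under stated assumptions: both GPUs are treated as running at exactly 33fps (the article only says "roughly 33fps"), and the variable names are ours. Only the 1.51W and 2.67W averages come from NVIDIA's measurements.

```python
# GPU-rail averages reported by NVIDIA's tools for the Manhattan 1080p run.
x1_watts = 1.51
a8x_watts = 2.67

# Assumption: both GPUs deliver the same frame rate, taken here as 33fps.
fps = 33.0

# Frames per second delivered per watt of GPU power.
x1_fps_per_watt = fps / x1_watts
a8x_fps_per_watt = fps / a8x_watts

# At equal performance, the efficiency ratio is just the inverse power ratio.
efficiency_ratio = a8x_watts / x1_watts
power_saving = 1.0 - x1_watts / a8x_watts

print(f"X1:  {x1_fps_per_watt:.1f} fps/W")
print(f"A8X: {a8x_fps_per_watt:.1f} fps/W")
print(f"X1 GPU efficiency advantage: {efficiency_ratio:.2f}x")
print(f"X1 GPU power saving: {power_saving:.0%}")
```

Under those assumptions X1 works out to about 21.9 fps/W against 12.4 fps/W for A8X, a roughly 1.77x advantage or about 43% less GPU power. As noted, this covers the GPU rails only, not total platform power.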

There are a number of other variables we’ll ultimately need to take into account here, including clockspeeds, relative die area of the GPU, and total platform power consumption. But assuming NVIDIA’s numbers hold up in final devices, X1’s GPU is looking very good out of the gate – at least when tuned for power over performance.

194 Comments


stacey94 - Monday, January 5, 2015

There is a massive memory bandwidth deficiency to overcome. It might have the raw processing power but it won't perform anywhere near as well.

kron123456789 - Monday, January 5, 2015

Well, memory bandwidth is a different story)))

texasti89 - Saturday, January 10, 2015

3D stacked memory and technologies like NVLINK, which are expected to arrive in 2016, will solve the memory bandwidth limitations. We might very well soon see a massive 1 TB/s bandwidth on mobile SoCs. I didn't think bandwidth is the hurdle but rather the power wall, which we can overcome by scaling the manufacturing process.

Someguyperson - Monday, January 5, 2015

If you actually read the chart, the 1 TFLOP number was reached with FP16 operations and not FP32 operations, like literally EVERYONE ELSE uses. The quoted FP32 number is 0.5 TFLOPs, so it wouldn't be until 2017-18 that Tegra could actually reach the Xbox One performance without cheating the numbers.

Jumangi - Monday, January 5, 2015

It doesn't need more than that for the GPU. More GPU power for a Core M is wasted for the type of products it's used for. You build the chip that is balanced for the market you're selling to. Why is this so beyond people who always look at every chip on the same level?

LocutusEstBorg - Monday, January 5, 2015

As long as it's only on the unprofitable inconsistent disaster that is Android, it's completely useless to the end user. Not a single game will be optimised for it and every game on the Play Store will continue to run like crap and crash on half the devices.

They need to adopt a well managed OS like Windows Phone with proper drivers and release optimised apps on the Windows Store.

kron123456789 - Monday, January 5, 2015

If they could get an x86 license it would be much better.

darkich - Monday, January 5, 2015

Lol, what an Android hating troll.

pSupaNova - Monday, January 5, 2015

If games run well on half of Android devices that's still 20x the installed user base of Windows Phone based devices.

Also how many OEMs ever made a profit using Windows Phone?

tipoo - Monday, January 5, 2015

Is this impression first hand? What device? Because my low end Moto G never crashes and the play store is completely smooth, more so than my iPad Mini in fact. This is a low end Android device with only Cortex A7 cores and 1GB memory backing them up.