Benchmarked - Assassin's Creed: Unity

by Jarred Walton on November 20, 2014 8:30 AM EST

Test System and Benchmarks

With that introduction out of the way, let's just get straight to the benchmarks, and then I'll follow up with a discussion of image quality and other aspects at the end. As usual, the test system is what I personally use, which is a relatively high-end Haswell configuration. Most of the hardware was purchased at retail over the past year or so, and that means I don't have access to every GPU configuration available, but I did just get a second ZOTAC GTX 970 so I can at least finally provide some SLI numbers (which I'll add to the previous Benchmarked articles in the near future).

| Gaming Benchmarks Test Systems | |
| --- | --- |
| CPU | Intel Core i7-4770K (4x 3.5-3.9GHz, 8MB L3); overclocked to 4.1GHz; underclocked to 3.5GHz with two cores ("i3-4330") |
| Motherboard | Gigabyte G1.Sniper M5 Z87 |
| Memory | 2x8GB Corsair Vengeance Pro DDR3-1866 CL9 |
| GPUs | Desktop GPUs: Sapphire Radeon R9 280, Sapphire Radeon R9 280X, Gigabyte Radeon R9 290X, EVGA GeForce GTX 770, EVGA GeForce GTX 780, Zotac GeForce GTX 970, Reference GeForce GTX 980. Laptops: GeForce GTX 980M (MSI GT72 Dominator Pro), GeForce GTX 880M (MSI GT70 Dominator Pro), GeForce GTX 870M (MSI GS60 Ghost 3K Pro), GeForce GTX 860M (MSI GE60 Apache Pro) |
| Storage | Corsair Neutron GTX 480GB |
| Power Supply | Rosewill Capstone 1000M |
| Case | Corsair Obsidian 350D |
| Operating System | Windows 7 64-bit |

We're testing with NVIDIA's 344.65 drivers, which are "Game Ready" for Assassin's Creed: Unity. (I also ran a couple sanity checks with the latest 344.75 drivers and found no difference in performance.) On the AMD side, testing was done with the Catalyst 14.11.2 driver that was released to better support ACU. AMD also released a new beta driver for Far Cry 4 and Dragon Age: Inquisition (14.11.2B), but I have not had a chance to check performance with that yet. No mention is made of improvements for ACU with the driver, so it should be the same as the 14.11.2 driver we used.

One final note is that thanks to the unlocked nature of the i7-4770K and the Gigabyte motherboard BIOS, I'm able to at least mostly simulate lower performance Haswell CPUs. I didn't run a full suite of tests with a second "virtual" CPU, but I did configure the i7-4770K to run similar to a Core i3-4330 (3.5GHz, 2C/4T) – the main difference being the CPU still has 8MB L3 cache where the i3-4330 only has 4MB L3. I tested just one GPU with the slower CPU configuration, the GeForce GTX 980, but this should be the best-case result for what you could get from a Core i3-4330.

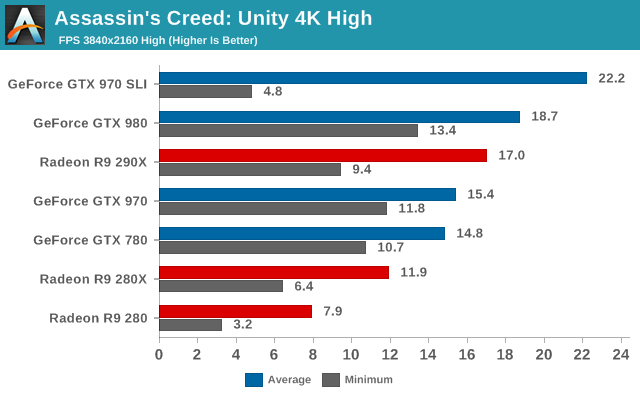

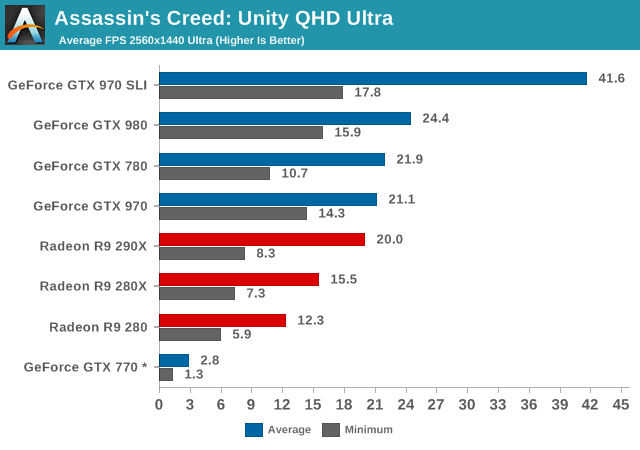

Did I mention that Assassin's Creed: Unity is a beast to run? Yeah, OUCH! 4K gaming is basically out of the question on current hardware, and even QHD is too much at the default Ultra settings. Also notice how badly the GTX 770 does at the Ultra settings, which appears to be due to the 2GB of VRAM; I logged system usage for the GTX 770 at QHD Ultra and found that the game was trying to allocate nearly 3GB of VRAM, which on a 2GB card means there's going to be a lot of texture thrashing. (4K with High quality also uses around 3GB of VRAM, if you're wondering.) The asterisk is there because I couldn't actually run the benchmark, so I used a "Synchronize" from the top of a tower instead, which is typically slightly less demanding than our actual benchmark run.

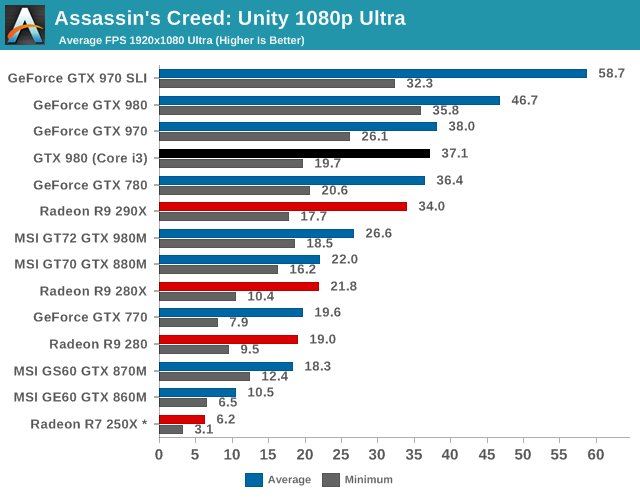

Anyway, all of the single GPUs are basically unplayable at QHD Ultra settings, and a big part of that looks to be the higher resolution textures. Dropping the texture quality to High can help, but really the game needs a ton of GPU horsepower to make QHD playable. GTX 970 SLI basically gets there, though again I'd suggest dropping the texture quality to High in order to keep minimum frame rates closer to 30. Even at 1080p, I'd suggest avoiding the Ultra setting – or at least Ultra texture quality – as there's just a lot of stutter. Sadly, the GTX 980M and 880M both have 8GB GDDR5, but their performance with Ultra settings is too low to really be viable, though they do show a bit better minimums relative to the other GPUs.

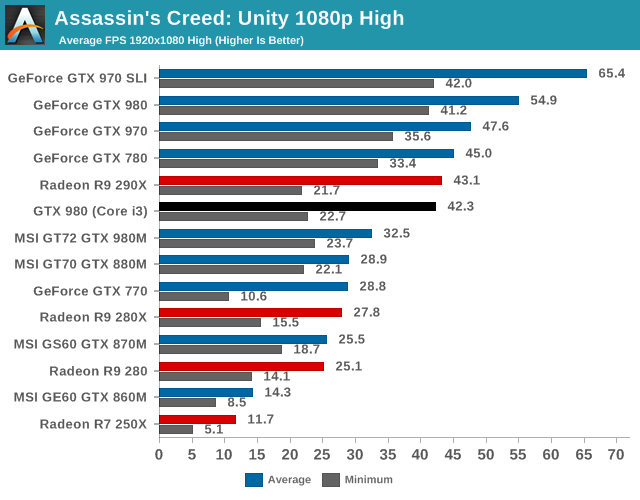

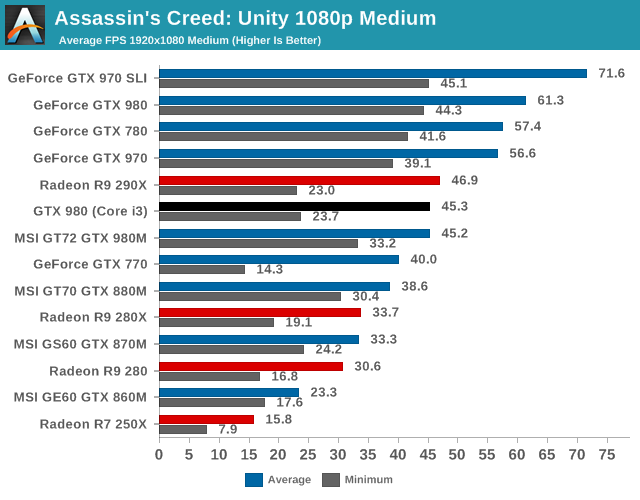

As we continue down the charts, NVIDIA's GTX 780 and 970 (and faster) cards finally reach the point where performance is totally acceptable at 1080p High (and you can tweak a few settings like turning on HBAO+ and Soft Shadows without too much trouble). What's scary is that looking at the minimum frame rates along with the average FPS, the vast majority of GPUs are still struggling at 1080p High, and it's really only 1080p Medium where most midrange and above GPUs reach the point of playability.
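For readers who want to reproduce this kind of average/minimum comparison from their own frame-time logs, here is a minimal sketch. It assumes a list of per-frame render times in milliseconds and treats "minimum FPS" as the rate of the single slowest frame; the article does not state how its minimums were derived, so both the function name `fps_summary` and that definition are assumptions for illustration.

```python
def fps_summary(frame_times_ms):
    """Return (average FPS, minimum FPS) for per-frame render times in ms.

    Assumption: minimum FPS is the instantaneous rate of the slowest frame.
    Other definitions (e.g. percentile-based) would give different numbers.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    total_seconds = sum(frame_times_ms) / 1000.0
    # Average FPS is frames rendered divided by total elapsed time.
    average_fps = len(frame_times_ms) / total_seconds
    # The slowest frame sets the lowest instantaneous frame rate.
    minimum_fps = 1000.0 / max(frame_times_ms)
    return average_fps, minimum_fps


# Hypothetical sample: nine 20 ms frames and one 80 ms stutter.
sample = [20.0] * 9 + [80.0]
avg, low = fps_summary(sample)
print(f"average {avg:.1f} FPS, minimum {low:.1f} FPS")
```

A single long frame barely moves the average but drags the minimum down sharply, which is why a GPU can post a reasonable average and still feel choppy.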

There's a secondary aspect to the charts that you've probably noticed as well. Sadly, AMD's GPUs really don't do well right now with Assassin's Creed: Unity. Some of it is almost certainly drivers, and some of it may be due to the way things like GameWorks come into play. Whatever the cause, ACU is not going to be a great experience on any of the Radeon GPUs right now.

I did some testing of CrossFire R9 290X as well, and while it didn't fail to run, performance was not better than a single 290X – and minimum frame rates were down – so CrossFire (without any attempt to create a custom profile) isn't viable yet. Also note that while SLI "works", there are also rendering issues at times. Entering/exiting the menu/map, or basically any time there's a full screen post processing filter, you get severe flicker (a good example is when you jump off a tower into a hay cart, you'll notice flicker on the periphery as well as on Arno's clothing). I believe these issues happen on all the multi-GPU rigs, so it might be more of a game issue than a driver issue.

I even went all the way down to 1600x900 Medium to see if that would help any of AMD's GPUs; average frame rates on the R9 290X basically top out at 48FPS with minimums still at 25 or so. I did similar testing on NVIDIA and found that with the overclocked i7-4770K ACU maxes out at just over 75 FPS with minimums of 50+ FPS. We'll have to see if AMD and/or Ubisoft Montreal can get things working better on Radeon GPUs, but for now it's pretty rough. That's not to say the game is unplayable on an R9 290X, as you can certainly run 1080p High, but there are going to be occasional stutters. Anything less than the R9 290/290X and you'll basically want to use Low or Medium quality (with some tweaking).

Finally, I mentioned how 2GB GPUs are really going to have problems, especially at higher texture quality settings. The GeForce GTX 770 is a prime example of this; even at 1080p High, minimum frame rates are consistently dropping into the low teens and occasionally even single digits, and Medium quality still has very poor minimum frame rates. Interestingly, at 1600x900 Medium the minimum FPS basically triples compared to 1080p Medium, so if the game is using more than 2GB VRAM at 1080p Medium it's not by much. This also affects the GTX 860M (1366x768 Low is pretty much what you need to run on that GPU), and the 1GB R7 250X can't even handle that. And it probably goes without saying, but Intel's HD 4600 completely chokes with ACU – 3-7 FPS at 1366x768 is all it can manage.
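As a rough way to put the resolution steps in perspective, the sketch below compares raw pixel counts against 1080p. The resolutions are the ones discussed in the text (with QHD taken as 2560x1440 and 4K as 3840x2160); the helper name `pixel_ratio` is just for illustration, and pixel count is only a crude proxy for GPU load.

```python
# Resolutions mentioned in the article, as (width, height).
RESOLUTIONS = {
    "1366x768": (1366, 768),
    "1600x900": (1600, 900),
    "1920x1080": (1920, 1080),
    "2560x1440": (2560, 1440),   # QHD
    "3840x2160": (3840, 2160),   # 4K
}


def pixel_ratio(name, baseline="1920x1080"):
    """Return the pixel count of `name` relative to `baseline`."""
    width, height = RESOLUTIONS[name]
    base_width, base_height = RESOLUTIONS[baseline]
    return (width * height) / (base_width * base_height)


for name in RESOLUTIONS:
    print(f"{name}: {pixel_ratio(name):.2f}x the pixels of 1080p")
```

Going from 1080p to 1600x900 removes only about 30% of the pixels, so a tripling of minimum frame rates on the GTX 770 is far more than the pixel count alone would predict, which fits the idea that the card is right at the edge of its VRAM at 1080p Medium.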

What About the CPU?

I mentioned earlier that I also underclocked the Core i7-4770K and disabled a couple CPU cores to simulate a Core i3-4330. It's not a fully accurate simulation, but just by way of reference the multi-threaded Cinebench 11.5 score went from 8.08 down to 3.73, which looks about right give or take a few percent. I only tested the GTX 980 with the slower CPU, but this is basically the "best case" for what a Core i3 could do.
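As a quick sanity check on those Cinebench numbers, here is a naive estimate that scales the score linearly with core count and clock speed. It assumes the 8.08 result came from the 4-core configuration at the 4.1GHz overclock listed in the test system table, which the text does not state explicitly; the function name `scaled_score` is hypothetical, and real scaling is rarely perfectly linear.

```python
def scaled_score(base_score, base_cores, base_ghz, new_cores, new_ghz):
    """Naive estimate: score scales linearly with cores and with clock speed."""
    return base_score * (new_cores / base_cores) * (new_ghz / base_ghz)


# Assumption: 8.08 was measured at 4 cores / 4.1GHz (the overclocked setup).
estimate = scaled_score(8.08, base_cores=4, base_ghz=4.1, new_cores=2, new_ghz=3.5)
measured = 3.73  # score reported for the simulated "i3-4330"

gap_percent = (measured / estimate - 1) * 100
print(f"linear estimate: {estimate:.2f}, measured: {measured:.2f}")
print(f"measured is {gap_percent:.1f}% above the linear estimate")
```

Under these assumptions the measured score lands within roughly ten percent of the linear estimate, which suggests the simulated configuration behaves about as expected for a two-core part at 3.5GHz.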

Looking at the above 1080p charts, you can see that with the slower CPU the GTX 980 takes quite the hit to performance. In fact, the GTX 980 with a "Core i3" Haswell CPU starts looking an awful lot like the R9 290X: it's playable in a pinch, but the minimum frame rates will definitely create some choppiness at times. I don't have an AMD rig handy to do any testing, unfortunately, but I'd be surprised if the APUs are much faster than the Core i3.

In short, not only do you need a fast GPU, but you also need a fast CPU. And the "just get a $300 console" argument doesn't really work, as frame rates on the consoles aren't particularly stellar either from what I've read. At least one site has found that both the PS4 and Xbox One fail to maintain a consistent 30FPS or higher frame rate.

122 Comments

Cellar Door - Thursday, November 20, 2014

The amount of less-than-intelligent comments in here is simply appalling! Do you realize how many PS4 and Xbox One games are locked at 30fps? In reality, if you were put in front of a TV with one of those games, and not told about the 30fps, you wouldn't even realize what is happening.

Stable 30fps vs. stutter: now there is a difference. Why people don't understand this is beyond me....

Death666Angel - Thursday, November 20, 2014

You forget the abysmal controllers console gamers have to use. Using a controller and having low FPS is much different to using a mouse and having low FPS.

TheSlamma - Friday, November 21, 2014

There is nothing 'abysmal' about it.. man it bothers me people are latching onto that word now and using it like candy. The 360 and Xbone controllers are wonderful controllers, you just don't have the skill to use them it sounds like. Good gamers can use all input types. After a 6 month break I hopped into BF4 the other day and still had a 2:1 KDR on my first match, never even played the map, so yes I'm good with KB/M, but I can also pick up my PS4 or Xbox 360 controller and crush it in games that play better with controllers.

theMillen - Friday, November 21, 2014

and while we're on the topic of controllers as well as AC:U... this is one of those games that DEFINITELY plays better with a controller!

inighthawki - Thursday, November 20, 2014

Yeah, like yours. 30fps is visually smooth, but the issue is with input. A controller is less sensitive to input due to the large disconnect from the screen. It's the same concept that makes touch screens feel unresponsive even at high smooth framerates.

nathanddrews - Thursday, November 20, 2014

Halo: Combat Evolved was 30fps locked on Xbox and is considered by many to be a great game. While I PREFER to change settings in games to get the frame rate to match my monitor (144Hz), I still enjoy games that play at low frame rates. I can't tell you how many hours I put into Company of Heroes on my crappy laptop... that thing barely cracked 30fps when nothing was happening.

nathanddrews - Thursday, November 20, 2014

I forgot to add that my desire for smooth or higher framerates also varies greatly by game. RTS games can get away with 20-30fps as long as the jerkiness doesn't interfere with my ability to select units. For action games, I prefer 60fps+ and for shooters or other fast-paced games, I want all 144.

ELPCU - Thursday, November 20, 2014

It really depends on game/person IMO. Here is my experience.

In one old game (SD Gundam Online: random Korean Gundam online game kappa), I was playing at 40 FPS for a while. It was a playable experience, and then I upgraded my rig.

After the upgrade, I was able to push to a full 60 FPS without any frame drops. I played at a fixed 60 FPS for a while, and then I had a technical problem with my upgraded rig. So I went back to the old computer and played at 40 FPS. There was a MASSIVE difference after downgrading.

The experience itself was horrible.

But it really depends on the game and the person. Again, it depends on how you accept 30 FPS. The game I mentioned was really sensitive; every move needs to be quick and responsive.

AC: Unity? I can agree these kinds of games are okay with a somewhat lower FPS. Though as I said, it can be fairly bad if you have been used to 60 FPS for a while. A downgrade is way more noticeable than an upgrade, so it is more about how people accept it. If you downgrade straight from a fully FIXED 60 FPS (after using it for several months) to 30 FPS, you might say it was definitely a horrible experience.

By the way, my problem playing AC Unity was occasional freezing (frame rate dropping below 10 or 15 FPS for about 1 sec). Worse, after the frame drop, my mouse cursor pops to a random place. I was playing with GTX 670 FTW SLI, 3930K OC, 32GB RAM.

This Unity frame drop issue was the most terrible one. At first the crash issue was as bad as the frame drops, but Patch 2 fixes many crash problems. However, the frame drop issue still persists. I was using the LOW option for all graphics at 1600x900 resolution, which normally gives 60 FPS (70~80 FPS if I turn vertical sync off), but there was still this freezing issue. It intensifies when I raise the graphics options or the resolution. It forces me to use 1600x900 with low graphics options, no AA or other random graphics sauce on it. Typically 60~80 FPS, but it was still horrible.

Murloc - Friday, November 21, 2014

Easy answer: because that's just your opinion. I don't need more than 30 fps, most people can't tell the difference after that. I've played for years with 25 fps and it's fine. 23 fps is where it gets noticeable.

Mr.r9 - Thursday, November 20, 2014

"a lot of people might want a GPU upgrade this holiday season". What about people who already have a 780 or 290/x coupled with a 120Hz FHD or 60Hz QHD monitors? Isn't it more reasonable to say: Don't buy this game for now, wait a few months. I don't believe that ACU is "tougher" to run than Metro or Crysis.