The Google Nexus 9 Review

by Joshua Ho & Ryan Smith on February 4, 2015 8:00 AM EST

Posted in: Tablets, HTC, Project Denver, Android, Mobile, NVIDIA, Nexus 9, Lollipop, Android 5.0

The Secret of Denver: Binary Translation & Code Optimization

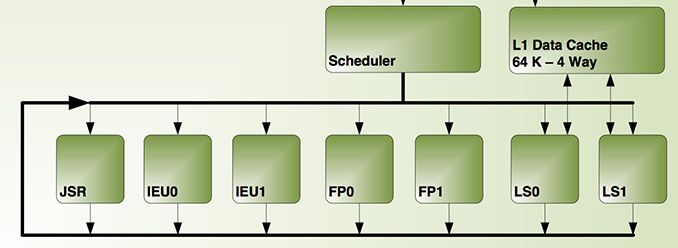

As we alluded to earlier, NVIDIA’s decision to forgo a traditional out-of-order design for Denver means that much of Denver’s potential is contained in its software rather than its hardware. The underlying chip itself, though by no means simple, is at its core a very large in-order processor. So it falls to the software stack to make Denver sing.

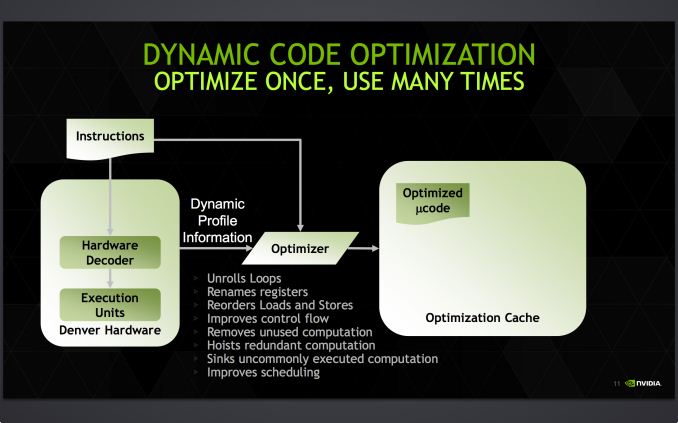

Accomplishing this task is NVIDIA’s dynamic code optimizer (DCO). The purpose of the DCO is to accomplish two tasks: to translate ARM code to Denver’s native format, and to optimize this code to make it run better on Denver. With no out-of-order hardware on Denver, it is the DCO’s task to find instruction level parallelism within a thread to fill Denver’s many execution units, and to reorder instructions around potential stalls, something that is no simple task.

Starting first with the binary translation aspects of the DCO, the binary translator is not used for all code. All code goes through the ARM decoder units at least once, and only after Denver realizes it has run the same code segments enough times does that code get kicked to the translator. Running code translation and optimization is itself a software task, and as a result this task requires a certain amount of real time, CPU time, and power. This means that it only makes sense to send code out for translation and optimization if it’s recurring, even if taking the ARM decoder path fails to exploit much in the way of Denver’s capabilities.
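To make the "run it enough times, then translate it" policy concrete, here is a minimal Python sketch. It is only an illustration: the class name `ToyDenver`, the segment identifiers, and above all the threshold of 3 are our own assumptions, as NVIDIA has not published the actual trigger conditions.

```python
from collections import Counter

# Hypothetical value for illustration; the real trigger is not public.
HOT_THRESHOLD = 3


class ToyDenver:
    """Toy model: count executions per code segment, translate once it is hot."""

    def __init__(self, threshold=HOT_THRESHOLD):
        self.threshold = threshold
        self.run_counts = Counter()  # executions seen per segment via the decoder
        self.optimized = set()       # segments that already have translated code

    def execute(self, segment_id):
        """Return the path this execution of the segment takes."""
        if segment_id in self.optimized:
            return "optimized"
        self.run_counts[segment_id] += 1
        if self.run_counts[segment_id] >= self.threshold:
            # The segment has recurred enough: hand it to the software translator
            # so that later executions can use the optimized version.
            self.optimized.add(segment_id)
        return "arm_decoder"


cpu = ToyDenver()
paths = [cpu.execute("loop_body") for _ in range(5)]
one_shot = cpu.execute("init_once")
print(paths)     # first three runs use the decoder, the rest use optimized code
print(one_shot)  # code that runs once never leaves the decoder path
```

In this sketch a recurring segment pays the decoder path for its first few runs and only then benefits, while one-off code never triggers translation at all.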

This sets up some very clear best and worst case scenarios for Denver. In the best case scenario Denver is entirely running code that has already been through the DCO, meaning it’s being fed the best code possible and isn’t having to run suboptimal code from the ARM decoder or spend resources invoking the optimizer. On the other hand, the worst case scenario for Denver is whenever code doesn’t recur. Non-recurring code means that the optimizer never gets used because that code is never seen again, and invoking the DCO would be pointless as the benefits of optimizing the code are outweighed by the costs of that optimization.

Assuming that a code segment recurs enough to justify translation, it is then kicked over to the DCO to receive translation and optimization. Because this itself is a software process, the DCO is a critical component due to both the code it generates and the code it itself is built from. The DCO needs to be highly tuned so that Denver isn’t spending more resources than it needs to in order to run the DCO, and it needs to produce highly optimal code for Denver to ensure the chip achieves maximum performance. This becomes a very interesting balancing act for NVIDIA, as a longer examination of code segments could potentially produce even better code, but it would increase the costs of running the DCO.
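The balancing act can be framed as a simple break-even calculation. The sketch below is our own simplified cost model, not anything NVIDIA has described: every cycle count is invented, and `runs` counts only the executions that would use the optimized code.

```python
def break_even_runs(decoder_cycles, optimized_cycles, dco_cycles):
    """Smallest number of runs at which translating a segment stops being a net loss."""
    saving_per_run = decoder_cycles - optimized_cycles
    if saving_per_run <= 0:
        return None  # optimized code is no faster, so translation never pays off
    # Integer ceiling division: runs * saving_per_run must reach dco_cycles.
    return -(-dco_cycles // saving_per_run)


def net_cycles_saved(runs, decoder_cycles, optimized_cycles, dco_cycles):
    """Cycles saved after `runs` optimized executions, minus the one-time DCO cost."""
    return runs * (decoder_cycles - optimized_cycles) - dco_cycles


# All numbers are made up for illustration.
quick = break_even_runs(decoder_cycles=100, optimized_cycles=60, dco_cycles=10_000)
deep = break_even_runs(decoder_cycles=100, optimized_cycles=50, dco_cycles=30_000)
print(quick, deep)

# A deeper, costlier optimization only wins for very hot code.
print(net_cycles_saved(1_000, 100, 60, 10_000), net_cycles_saved(1_000, 100, 50, 30_000))
print(net_cycles_saved(3_000, 100, 60, 10_000), net_cycles_saved(3_000, 100, 50, 30_000))
```

With these invented figures the quicker optimization pays for itself sooner, while the deeper one only pulls ahead once a segment has run thousands of times, which is the tension described above.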

In the optimization step NVIDIA undertakes a number of actions to improve code performance. This includes out-of-order optimizations such as instruction and load/store reordering, along with register renaming. However the DCO also behaves as a traditional compiler would, undertaking actions such as unrolling loops and eliminating redundant or dead code whose results are never used. For NVIDIA this optimization step is the most critical aspect of Denver, as its performance will live and die by the DCO.
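As an example of the compiler-style work involved, the following Python sketch performs one classic form of dead code elimination on a straight-line block: a backward liveness pass that drops instructions whose results are never read. The tuple-based instruction format and register names are invented for illustration and have nothing to do with Denver's native format; the sketch also assumes every instruction is free of side effects.

```python
def eliminate_dead_code(block, live_out):
    """Drop instructions whose destination is never read afterwards.

    block:    list of (dest, op, sources) tuples in program order
    live_out: registers whose values are still needed after the block
    """
    live = set(live_out)
    kept = []
    # Walk backwards so we know what is still needed when we reach each instruction.
    for dest, op, sources in reversed(block):
        if dest in live:
            live.discard(dest)    # this instruction satisfies the need for dest
            live.update(sources)  # and in turn needs its own inputs
            kept.append((dest, op, sources))
        # Otherwise nobody reads dest before it is overwritten or the block ends.
    kept.reverse()
    return kept


block = [
    ("r1", "add", ("r0", "r0")),
    ("r2", "mul", ("r1", "r1")),  # dead: r2 is overwritten before anyone reads it
    ("r2", "add", ("r1", "r0")),
    ("r3", "sub", ("r0", "r0")),  # dead: r3 is not needed after the block
]
optimized_block = eliminate_dead_code(block, live_out={"r2"})
for instruction in optimized_block:
    print(instruction)
```

A real optimizer would also have to account for stores, exceptions, and branches, all of which this toy pass ignores.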

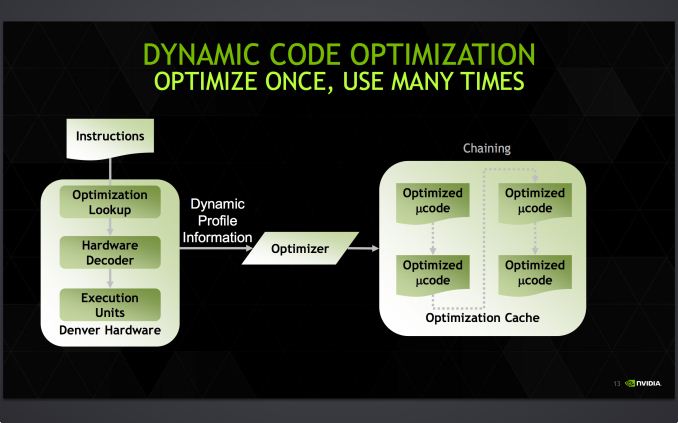

Denver's optimization cache: optimized code can call other optimized code for even better performance

Once code leaves the DCO, it is then stored for future use in an area NVIDIA calls the optimization cache. The cache is a 128MB segment of main memory reserved to hold these translated and optimized code segments for future reuse, with Denver banking on its ability to reuse code to achieve its peak performance. The presence of the optimization cache does mean that Denver suffers a slight memory capacity penalty compared to other SoCs, which in the case of the N9 means that 1/16th (6%) of the N9’s memory is reserved for the cache. Meanwhile, also resident here is the DCO code itself, which is shipped and stored as already-optimized code so that it can achieve its full performance right off the bat.
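The memory penalty is easy to check. The short Python calculation below takes the 128MB figure from above and derives the total from the stated 1/16th share, which works out to 2GB; the variable names are ours.

```python
from fractions import Fraction

CACHE_MB = 128
TOTAL_MB = 16 * CACHE_MB  # 2048 MB, the total implied by the 1/16th share

share = Fraction(CACHE_MB, TOTAL_MB)
percent = float(share) * 100
remaining_mb = TOTAL_MB - CACHE_MB  # memory left once the cache is carved out

print(share, percent, remaining_mb)
```

The exact share is 6.25%, which the text rounds to 6%.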

Overall the DCO ends up being interesting for a number of reasons, not the least of which are the tradeoffs made by its inclusion. The DCO instruction window is larger than that of any comparable OoOE engine, meaning NVIDIA can look at larger code blocks than hardware OoOE reorder engines and potentially extract even better ILP and other optimizations from the code. On the other hand the DCO can only work on code in advance, denying it the ability to see and work on code in real-time as it’s executing like a hardware out-of-order implementation. In such cases, even with a smaller window to work with, a hardware OoOE implementation could produce better results, particularly in avoiding memory stalls.
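To see why window size matters for extracting ILP, consider the toy Python model below. It is purely our own illustration and not a model of Denver or of any real OoOE core: a 2-wide machine with single-cycle instructions, where each cycle the scheduler may only pick from the oldest `window` instructions that have not yet issued.

```python
def cycles_to_issue(deps, window, width=2):
    """Count cycles needed to issue all instructions.

    deps[i] lists the earlier instructions that instruction i depends on.
    Each cycle, up to `width` ready instructions are issued from among the
    oldest `window` instructions still pending. Latency is one cycle.
    """
    issued_in = {}                   # instruction index -> cycle it issued in
    pending = list(range(len(deps)))
    cycle = 0
    while pending:
        picked = []
        for i in pending[:window]:
            if len(picked) == width:
                break
            # Ready only if every dependency issued in an earlier cycle.
            if all(d in issued_in and issued_in[d] < cycle for d in deps[i]):
                picked.append(i)
        for i in picked:
            issued_in[i] = cycle
            pending.remove(i)
        cycle += 1
    return cycle


# Two independent dependency chains laid out one after the other:
# 0 -> 1 -> 2 -> 3, then 4 -> 5 -> 6 -> 7.
deps = [[], [0], [1], [2], [], [4], [5], [6]]
small_window = cycles_to_issue(deps, window=2)
large_window = cycles_to_issue(deps, window=8)
print(small_window, large_window)
```

With the small window the second chain is invisible until the first is nearly finished, so the two chains mostly run back to back; with the large window they are interleaved and both issue slots stay busy. What this static picture cannot capture is the other half of the tradeoff, namely that a hardware scheduler reacts to actual stalls as they happen.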

As Denver lives and dies by its optimizer, it puts NVIDIA in an interesting position once again owing to their GPU heritage. Much of the above is as true for GPUs as it is for Denver, and while it’s by no means a perfect overlap it does mean that NVIDIA comes into this with a great deal of experience in optimizing code for an in-order processor. NVIDIA faces a major uphill battle here (hardware OoOE has proven itself reliable time and time again, especially compared to projects banking on superior compilers), so having that compiler background is incredibly important for NVIDIA.

In the meantime, because NVIDIA relies on a software optimizer, Denver’s code optimization routine itself has one last advantage over hardware: upgradability. NVIDIA retains the ability to upgrade the DCO itself, potentially deploying new versions of the DCO farther down the line if improvements are made. In principle a DCO upgrade is not a feature you want to find yourself needing to use (ideally Denver’s optimizer would be perfect from the start), but it’s nonetheless a good feature to have for the imperfect real world.

Case in point, we have encountered a floating point bug in Denver that has been traced back to the DCO, which under exceptional workloads causes Denver to overflow an internal register and trigger an SoC reset. Though this bug doesn’t lead to reliability problems in real world usage, it’s exactly the kind of issue that makes DCO updates valuable for NVIDIA as it gives them an opportunity to fix the bug. However at the same time NVIDIA has yet to take advantage of this opportunity, and as of the latest version of Android for the Nexus 9 it seems that this issue still occurs. So it remains to be seen if BSP updates will include DCO updates to improve performance and remove such bugs.

169 Comments

ABR - Thursday, February 5, 2015

I don't know if it would change this conclusion, but load-every-15-seconds is still only testing "screenager" behavior. For example while I'm reading this comments page it's a lot longer than 15 seconds. More like 30 seconds, scroll, 30 seconds, scroll, 5-10 minutes load another. Reading e-books is another low-intensity usage. Not saying that gaming and other continuous usage patterns aren't out there, but a lot of what people say they use tablets for is lower intensity.

lucam - Thursday, February 5, 2015

Upspin, send your resume to Anand and write next time your article. Looking fwd to reading your pearl of wisdom...

Affectionate-Bed-980 - Wednesday, February 4, 2015

You guys really need to stop using that gray/black surface for the background to show off your black devices. It really makes it hard to see the details.

gijames1225 - Wednesday, February 4, 2015

It's a shame that NVidia couldn't get Denver out on a smaller process at launch. They're giving the A8 a run for its money, but the 28nm process is killer at this point.

WereCatf - Wednesday, February 4, 2015

"it seems to be clear that an all-metal unibody design would’ve greatly improved the design of the Nexus 9 and justified its positioning better."

I don't quite agree. This article mentions several times the author's wish for full-body aluminum design, but as someone who already has a tablet with a nearly full aluminum body I do have to point out that it tends to be quite slippery in one's hands; you need a much tighter grip just to hold it without it slipping and this makes it tiring to hold in the long run. A tablet with a sort of rubbery, non-slip back won't look as pretty, but it will certainly be much more comfortable and I definitely would choose practicality over looks.

danbob999 - Wednesday, February 4, 2015

Also metal blocks wireless signal. Asus Transformer Prime has abysmal wifi and GPS reception because of that. There is no rational advantage to metal cases. Only looks, which is debatable.

WereCatf - Wednesday, February 4, 2015

Aye, my tablet had that issue. Luckily it's easy to open up and replace the antenna with a stronger one, something that helps, but not all tablets are that easy to open or have a replaceable antenna.

Impulses - Wednesday, February 4, 2015

Metal would also make it heavier... Plastic doesn't have to mean back flex, it's just a design/QC issue they didn't address. My OG TF had a textured plastic back that was pretty solid, several years ago. It still creaked a little but it was mostly because of the mating of the back to the metal frame, no flex tho.

olivaw - Wednesday, February 4, 2015

I wonder if nVidia is "crazy enough" to develop a runtime that would JIT from android bytecode directly to denver. As it is, there are two layers of compilation going on, if ART could be swapped by an nVidia runtime things could get really interesting!

joe0185 - Wednesday, February 4, 2015

The browser tests are pretty worthless as it is but they are made even more worthless by the omission of version information. If AnandTech is going to include Javascript benchmarks they should at least include the browser version. What version of Chrome are you running on each device? There have been pretty dramatic improvements in Chrome on Android over the past year.