The NVIDIA GeForce GTX 980 Review: Maxwell Mark 2

by Ryan Smith on September 18, 2014 10:30 PM EST

GRID 2

The final game in our benchmark suite is also our racing entry, Codemasters’ GRID 2. Codemasters continues to set the bar for graphical fidelity in racing games, and with GRID 2 they’ve gone back to racing on the pavement, bringing to life cities and highways alike. Based on their in-house EGO engine, GRID 2 includes a DirectCompute based advanced lighting system in its highest quality settings, which incurs a significant performance penalty but does a good job of emulating more realistic lighting within the game world.

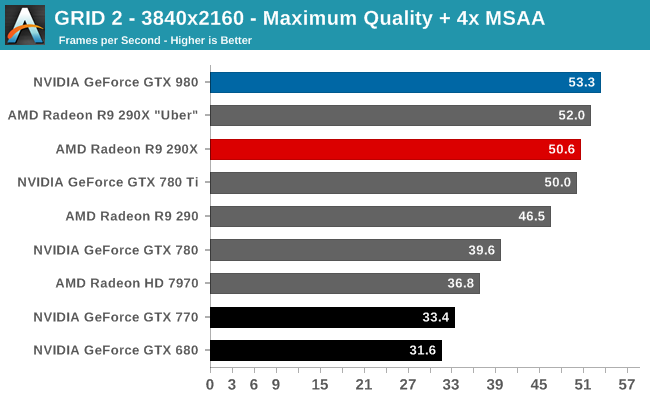

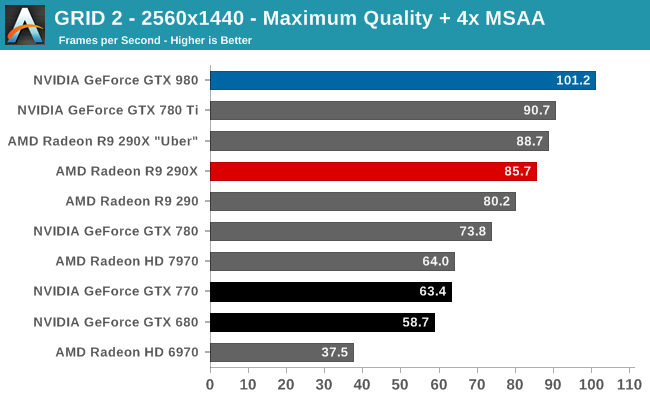

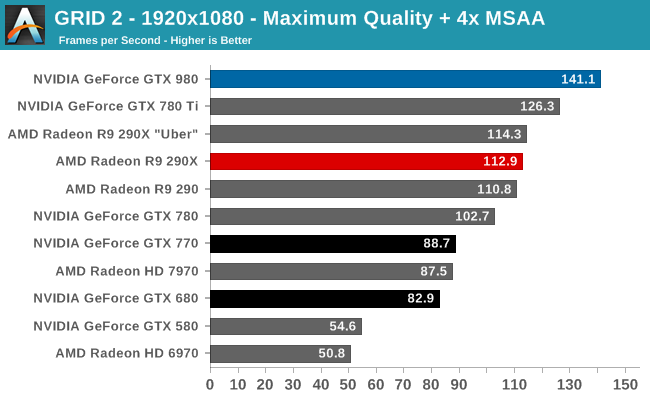

Our final game is another solid victory for the GTX 980. The GTX 980’s lead does shrink at 4K, but otherwise we’re looking at a 12% advantage over the GTX 780 Ti and 14-23% over the R9 290XU.

144Hz gamers will find 1080p quite useful, with the GTX 980 coming just short of averaging a matching framerate. Otherwise at 2560x1440 one would need to settle for 101fps. For 4K gamers, though, even a single GTX 980 is more or less enough here; 53fps at 4K with Maximum quality and 4x MSAA means that at most a drop to 2x MSAA would get it above 60fps without involving a second card. Maybe this is a good case for NVIDIA’s new Multi-Frame sampled Anti-Aliasing?

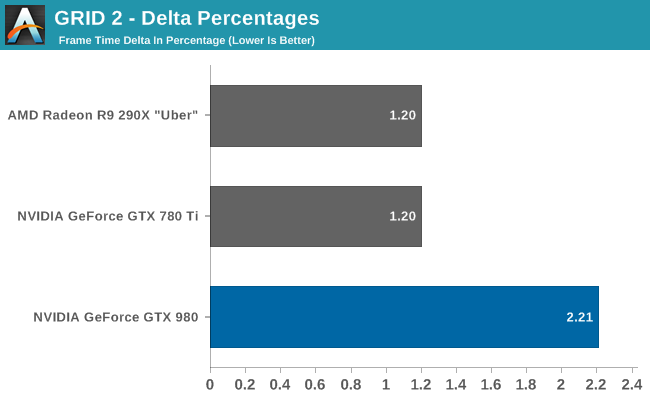

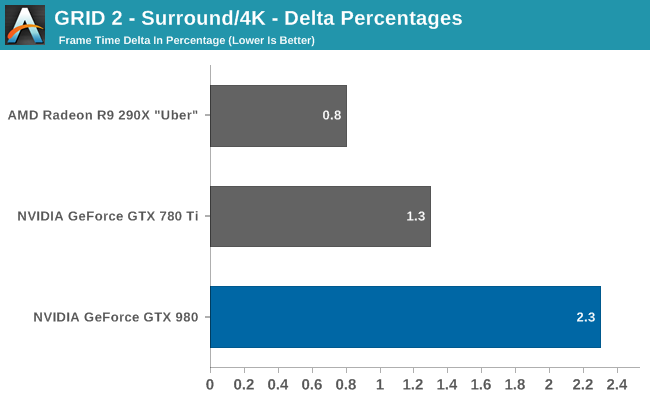

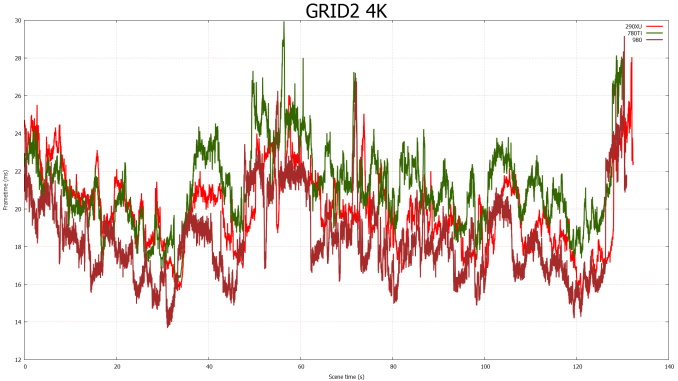

Our last set of delta percentages once again finds the GTX 980 easily below 3%, though the variance is higher than with the other two cards, and by more than just what we would expect as a result of higher average framerates.
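For readers unfamiliar with the metric, the sketch below shows one way a delta percentage can be computed. It assumes the metric is the average absolute change in frame time between consecutive frames, expressed as a percentage of the average frame time; this page does not spell out the definition, so treat that as an assumption. The function name `delta_percentage` and the sample frame times are hypothetical and are not measured data from this review.

```python
from statistics import mean


def delta_percentage(frame_times_ms):
    """Mean absolute frame-to-frame change in frame time,
    as a percentage of the mean frame time (assumed definition)."""
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frame times")
    # Absolute difference between each pair of consecutive frames
    deltas = [abs(b - a) for a, b in zip(frame_times_ms, frame_times_ms[1:])]
    return 100.0 * mean(deltas) / mean(frame_times_ms)


# Hypothetical frame times in milliseconds, for illustration only
sample = [10.0, 10.2, 9.9, 10.1, 10.0]
print(f"{delta_percentage(sample):.2f}%")
```

Under this definition the deltas are divided by the average frame time, so a card with a higher average framerate shows a larger percentage for the same absolute frame-to-frame variation.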

274 Comments


kron123456789 - Friday, September 19, 2014 - link

Look at the "Load Power Consumption - FurMark" test. It's 80W lower with the 980 than with the 780 Ti.

Carrier - Friday, September 19, 2014 - link

Yes, but the 980's clock is significantly lowered for the FurMark test, down to 923MHz. The TDP should be fairly measured at speeds at which games actually run, 1150-1225MHz, because that is the amount of heat that we need to account for when cooling the system.

Ryan Smith - Friday, September 19, 2014 - link

It doesn't really matter what the clockspeed is. The card is gated by both power and temperature. It can never draw more than its TDP.

FurMark is a pure TDP test. All NVIDIA cards will reach 100% TDP, making it a good way to compare their various TDPs.

Carrier - Friday, September 19, 2014 - link

If that is the case, then the charts are misleading. GTX 680 has a 195W TDP vs. GTX 770's 230W (going by Wikipedia), but the 680 uses 10W more in the FurMark test.

I eagerly await your GTX 970 report. Other sites say that it barely saves 5W compared to the GTX 980, even after they correct for factory overclock. Or maybe power measurements at the wall aren't meant to be scrutinized so closely :)

Carrier - Friday, September 19, 2014 - link

To follow up: in your GTX 770 review from May 2013, you measured the 680 at 332W in FurMark, and the 770 at 383W in FurMark. Those numbers seem more plausible.

Ryan Smith - Saturday, September 20, 2014 - link

680 is a bit different because it's a GPU Boost 1.0 card. 2.0 included the hard TDP and did away with separate power targets. Actually what you'll see is that GTX 680 wants to draw 115% TDP with NVIDIA's current driver set under FurMark.

Carrier - Saturday, September 20, 2014 - link

Thank you for the clarification.

wanderer27 - Friday, September 19, 2014 - link

Power at the wall (AC) is going to be different than power at the GPU - which is coming from the DC PSU.

There are losses and efficiency differences in converting from AC to DC (PSU), plus a little wiggle from the MB and so forth.

solarscreen - Friday, September 19, 2014 - link

Here you go: http://books.google.com/books?id=v3-1hVwHnHwC&...

PhilJ - Saturday, September 20, 2014 - link

As stated in the article, the power figures are total system power draw. The GTX980 is throwing out nearly double the FPS of the GTX680, so this is causing the rest of the system (mostly the CPU) to work harder to feed the card. This in turn drives the total system power consumption up, despite the fact that the GTX980 itself is drawing less power than the GTX680.