The Intel Haswell-E CPU Review: Core i7-5960X, i7-5930K and i7-5820K Tested

by Ian Cutress on August 29, 2014 12:00 PM EST

Load Delta Power Consumption

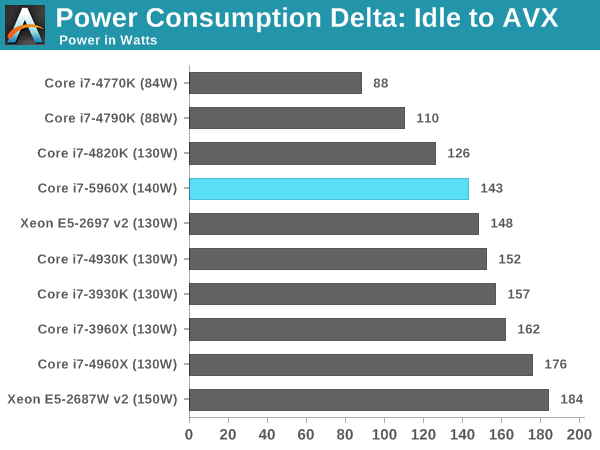

Power consumption was tested with the system in a single MSI GTX 770 Lightning GPU configuration, with a wall meter connected to the OCZ 1250W power supply. This power supply is Gold rated, and because I am in the UK on a 230-240 V supply, it runs at ~75% efficiency under 50W and 90%+ efficiency at 250W, which suits both idle and multi-GPU loads. This method of power reading lets us compare how well the UEFI and the board manage power delivery to components under load, and it includes the typical losses from PSU efficiency.

We take the difference in power between idle and load as our tested value, which indicates the power increase from the CPU when placed under stress. Unfortunately, due to the timing of testing, we were not in a position to test the power consumption of the two 6-core CPUs.

Because not all processors of the same designation leave the Intel fabs with the same stock voltages, there can be mild variation between samples, and the TDP given for each CPU is understandably an upper limit at stock settings. Due to power supply inefficiencies, our results come out higher than TDP, but the more interesting results are the comparisons between CPUs. The 5960X comes across as more efficient than Sandy Bridge-E and Ivy Bridge-E, including the 130W Ivy Bridge-E Xeon.
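The load-delta method comes down to subtracting two wall readings. Because the meter sits on the AC side of the PSU, that delta also includes conversion losses (among other things), which is one reason it lands above TDP. The sketch below is a minimal illustration under stated assumptions. The function names are hypothetical, the wall readings are placeholders and not results from this review, and the ~75% and ~90% efficiency figures quoted for the OCZ unit are only illustrative inputs. Real PSU efficiency varies continuously with load.

```python
def load_delta(idle_wall_w: float, load_wall_w: float) -> float:
    """Wall-socket power increase from idle to load (the value charted above)."""
    if load_wall_w < idle_wall_w:
        raise ValueError("load reading should not be below the idle reading")
    return load_wall_w - idle_wall_w


def estimated_dc_delta(idle_wall_w: float, load_wall_w: float,
                       idle_eff: float, load_eff: float) -> float:
    """Rough component-side (DC) increase, ASSUMING the PSU efficiency at each
    operating point is known: DC power delivered = wall power * efficiency."""
    for eff in (idle_eff, load_eff):
        if not 0.0 < eff <= 1.0:
            raise ValueError("efficiency must be in the range (0, 1]")
    return load_wall_w * load_eff - idle_wall_w * idle_eff


if __name__ == "__main__":
    # Hypothetical wall readings in watts, NOT measurements from this review.
    idle, load = 45.0, 250.0
    print(f"wall delta: {load_delta(idle, load):.2f} W")
    # Assumed efficiencies: ~75% at low load, ~90% near 250W.
    print(f"estimated DC delta: {estimated_dc_delta(idle, load, 0.75, 0.90):.2f} W")
```

For inputs like these, the DC-side estimate comes out below the wall delta. That gap is the PSU loss the paragraph above refers to. This is also why a more efficient PSU, such as a Platinum unit, would report a smaller wall delta for the same CPU.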

Test Setup

| Test Setup | ||||

| Processor |

Intel Core i7-5820K Intel Core i7-5930K Intel Core i7-5960X |

6C/12T 6C/12T 8C/16T |

3.3 GHz / 3.6 GHz 3.5 GHz / 3.7 GHz 3.0 GHz / 3.5 GHz |

|

| Motherboard |

ASUS X99 Deluxe ASRock X99 Extreme4 |

|||

| Cooling |

Corsair H80i Cooler Master Nepton 140XL |

|||

| Power Supply |

OCZ 1250W Gold ZX Series Corsair AX1200i Platinum PSU |

1250W 1200W |

80 PLUS Gold 80 PLUS Platinum |

|

| Memory |

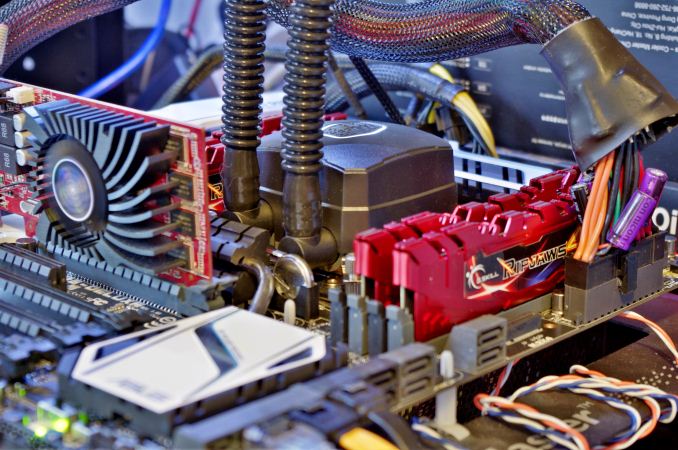

Corsair 4x8 GB G.Skill Ripjaws4 |

DDR4-2133 DDR4-2133 |

15-15-15 1.2V 15-15-15 1.2V |

|

| Memory Settings | JEDEC | |||

| Video Cards | MSI GTX 770 Lightning 2GB (1150/1202 Boost) | |||

| Video Drivers | NVIDIA Drivers 337.88 | |||

| Hard Drive | OCZ Vertex 3 | |||

| Optical Drive | LG GH22NS50 | |||

| Case | Open Test Bed | |||

| Operating System | Windows 7 64-bit SP1 | |||

| USB 2/3 Testing | OCZ Vertex 3 240GB with SATA->USB Adaptor | |||

Many thanks to...

We must thank the following companies for kindly providing hardware for our test bed:

Thank you to OCZ for providing us with PSUs and SSDs.

Thank you to G.Skill for providing us with memory.

Thank you to Corsair for providing us with an AX1200i PSU and a Corsair H80i CLC.

Thank you to MSI for providing us with the NVIDIA GTX 770 Lightning GPUs.

Thank you to Rosewill for providing us with PSUs and RK-9100 keyboards.

Thank you to ASRock for providing us with some IO testing kit.

Thank you to Cooler Master for providing us with Nepton 140XL CLCs and JAS minis.

A quick word of thanks to the manufacturers who sent us extra testing kit for review, including G.Skill for its Ripjaws 4 DDR4-2133 CL15 modules, Corsair for similar modules, and Cooler Master for the Nepton 140XL CLCs. We will be reviewing the DDR4 modules in due course, including Corsair's new extreme DDR4-3200 kit, and we have already tested the Nepton 140XL in a big 14-way CLC roundup.

203 Comments


jabber - Saturday, August 30, 2014

At the end of the day, the Xeons are just bug-fixed, lower-power i7 chips anyway. But one way that Xeons come into their own is on the second-hand market. I'll be picking up ex-corporate dual-CPU Xeon workstations for peanuts compared to the domestic versions. I have a 7-year-old 8-core Xeon workstation that still WPrimes in 7 seconds. Not bad for a $100 box.

mapesdhs - Saturday, August 30, 2014

All correct, though it concerns me that the max RAM of X99 may only be 64GB much of the time. After adding two cores and moving up to working with 4K material, that's not going to be enough.

Performance-wise, good for a new build, but sadly probably not good enough as an upgrade over a 3930K @ 4.7+ or anything that follows. The better storage options might be an incentive for some to upgrade though, depending on their RAID setups & suchlike.

Ian.

leminlyme - Tuesday, September 2, 2014

They are applicable to different crowds, and computing doesn't exclude gaming, whereas Xeons to a degree do (though I'm sure for most of them you'd be fine). I for one like those PCIe lanes, and the per-core performance of the desktop processors is just typically better. Plus form factor and all that. These fill a glorious niche that I am indeed excited about. They're pretty damn cheap for their quality too. I guess the RAM totally circumvents that benefit though.

Mithan - Friday, August 29, 2014

I am into gaming and nothing is worth upgrading over the 2500 if you have it. For you it's different, of course :)

AnnihilatorX - Saturday, August 30, 2014

I am thinking of upgrading my 2500K actually, because I got a dud CPU which won't even overclock to 4.2 GHz.

mindbomb - Friday, August 29, 2014

That's the fault of the software. Seems unfair to blame the chip for that. DX12 should change that anyway.

CaedenV - Friday, August 29, 2014

How exactly will DX12 help? DX12 is good for helping wimpy hardware move from horrible settings to acceptable settings, but for the high end it will not help much at all. Beyond that, it helps the GPU be more efficient and will have little effect on the CPU. Even if it did help the CPU at all, take a look at those charts; pretty much every mid- to high-end CPU on the market can already saturate a GPU. If the GPU is already the bottleneck, then improving the CPU does not help at all.

iLovefloss - Friday, August 29, 2014

DirectX 12 promises to make more efficient use of multicore processors. AnandTech has already done a piece on Intel's demonstration of its benefit.

bwat47 - Sunday, August 31, 2014

I'm sick of hearing this nonsense. Even with reasonably high-end hardware, Mantle and DX12 can help minimum framerates and framerate consistency considerably. I have a 2500K and a 280X, and when I use Mantle I get a big boost in minimum framerate.

The3D - Friday, September 12, 2014

Given the yet-to-be-released DirectX 12 and the overall tendency toward less CPU-intensive graphics APIs (Mantle), I guess the days in which we needed extra-powerful CPUs to run graphics-intensive games are coming to an end.