ARM’s Mali Midgard Architecture Explored

by Ryan Smith on July 3, 2014 11:00 AM EST

Midgard: The Modern Mali

As ARM’s current-generation SoC GPU architecture, Midgard is at the highest level an interesting take on GPUs that in some ways looks a lot like other GPUs we’ve seen before, and in other ways (owing to its uncommon ancestry) is radically unlike them. This is coupled with the fact that as an SoC GPU supplier, ARM is in an interesting position where they can offer both CPU and GPU designs to third-party licensees, unlike most other GPU designers, who either use their designs internally (Qualcomm, NVIDIA) or only license out GPUs and not ARM CPUs (Imagination). From a sales perspective this means ARM can offer the CPU and GPU designs together in a bundle, but perhaps more importantly it means they have the capability to design the two in concert with each other, being the sole creator of the ARM ISA.

Architecturally Midgard is a direct descendant of Utgard. While there is a significant difference in how unified and discrete shaders operate, and as a result they cannot simply be swapped, the resulting shader design for Midgard still ends up inheriting many of Utgard’s design elements, features, and quirks. At the same time the surrounding functional blocks that compose the rest of the GPU have received their own upgrades over the years to improve performance and features, but are nonetheless distinctly descended from Utgard as well. At the end of the day this is a distinction more important for programmers than it is for users (or even tech enthusiasts), but going forward it’s interesting to note just how similar Utgard and Midgard are, a similarity we don’t normally see between unified and discrete shader designs.

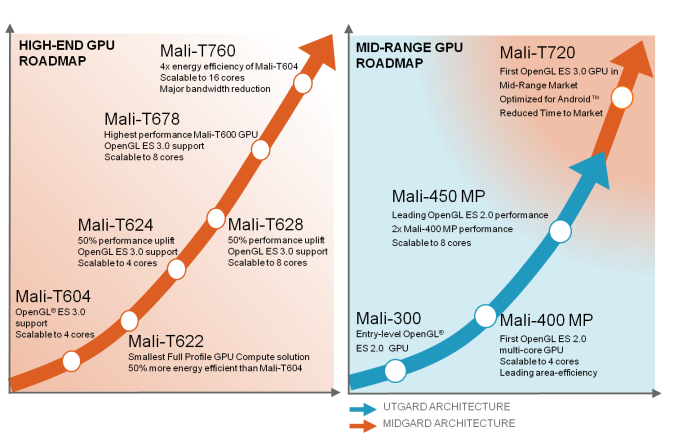

From a design standpoint Midgard is meant to span much of the range for SoC GPUs, from cheap, area-efficient designs to relatively massive designs with an eye on gaming. In doing so ARM offers a few different variations on the Midgard design that share the same architecture but vary slightly in features and internal organization. So for the purposes of today’s article we’ll be focusing on ARM’s latest and greatest design, Mali-T760, but we will also be calling out differences as necessary.

First and foremost then, let’s talk about design goals and features. Unlike the bare bones OpenGL ES 2.0 Utgard architecture, Midgard has been designed to be a more feature-rich architecture that not only offers solid graphics performance but solid compute performance too. This is in part a logical extension of what a unified shader GPU can already do – they’re innately good at mass math for graphics, so compute is only a minor stretch – but also a deliberate decision by ARM to push compute harder than they would otherwise have to for merely a graphics product.

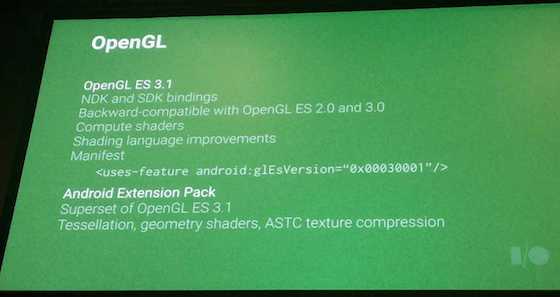

From an API standpoint then Midgard was designed as what is best described as an OpenGL ES 3.0+ part. The architecture was designed from the start to offer functionality beyond what OpenGL ES 3.0 would offer, a decision that has since benefitted ARM by allowing Midgard parts to keep up with newer API standards. In fact ARM has just recently completed OpenGL ES 3.1 conformance testing, with their updated drivers passing Khronos’s required tests. As such all Midgard parts at a hardware level can support OpenGL ES 3.1, with software support reliant on OS and device vendors shipping updated OSes and drivers that enable 3.1 functionality.

Even then Midgard has some functionality that has gone untapped, but will be enabled in the Android ecosystem through the upcoming Android Extension Pack for Android L. The AEP will further build off of OpenGL ES 3.1 by enabling features such as tessellation and geometry shaders, features that did not make it into 3.1. As with OpenGL ES 3.1, ARM has confirmed that they expect all Midgard GPUs to support the AEP.

Finally, along with OpenGL ES support, ARM also officially offers Direct3D support on Midgard. This functionality has not yet been tapped – all Windows Phone and Windows RT devices so far have been Qualcomm or NVIDIA based – but in principle it is there. One thing to note however is that among the Mali 700 series, only Mali-T760 is Direct3D Feature Level 11_1 capable. Mali-T720, by contrast, supports only Feature Level 9_3, more befitting of the market realities and its status as a lower cost, lower complexity part.

Meanwhile from a compute standpoint Midgard is intended to be a strong competitor by supporting Android’s RenderScript framework and OpenCL 1.2 full profile. OpenCL support on SoC GPUs has been spotty due in part to the fact that the major OSes haven’t consistently supported it (iOS never has and Android only recently), and of those SoC GPUs that do support it, not all of them support the full profile as opposed to the much more restricted embedded profile. As is often the case with GPU computing just how well this functionality is used is up to the capabilities and imaginations of developers, but ARM has made it clear that they’re fully backing GPU computing even in the SoC space.

66 Comments

toyotabedzrock - Friday, July 4, 2014 - link

Didn't imagine also buy from ATI? Maybe that is why they are concerned with patents?

Kevin G - Saturday, July 5, 2014 - link

There likely is a cross licensing agreement in place. Literally to build any modern GPU a company has to cross license patents. It works out to be zero sum in that money isn't exchanged but one company can use the other's patents (and future patents) royalty free. This also puts a rather high barrier to entry in the market.

nolaviz - Thursday, July 3, 2014 - link

Ah, the history... I led the integration of MALI55 into Zoran's APPROACH-5C. Sweet memories :)

skiboysteve - Thursday, July 3, 2014 - link

Very interesting. Complete 100% opposite of the AMD architecture.

Also, the fact that they can power down shader cores and even individual ALUs makes this pure ILP and zero TLP architecture really shine. Because the compiler only needs to optimize work on an ALU level.... Not on a shader or wavefront level. So then based upon demand they just feed more or less of these ALU-optimized packets of work into the GPU and power down the rest of the ALUs. It makes complete sense. Make each ALU independent, compile work on a per ALU basis, then scale your ALU utilization at run time... And also scale your chip portfolio on ALU count. Perfect scalability in price, performance and power combined with straightforward driver work.

Awesome!

rootheday - Thursday, July 3, 2014 - link

Transaction Elimination might work for "render to texture", where the texture is then used later in the scene, but it doesn't make sense to me for the final render target that is shown on the screen. Typically your final render target is part of a swap chain with 2 or 3 different buffers. So the CRC computed for a tile in Frame N would need to match the CRC for frame N-2 (or maybe N-3), not N-1.

EdvardS - Thursday, July 3, 2014 - link

So each buffer has its own CRC buddy attached to it - problem solved :-) Longer buffer chains just reduce your temporal coherency a bit.

hexgrid - Thursday, July 3, 2014 - link

Relying on a 32-bit hash for transaction elimination is a very bad idea. Assuming a normal distribution of hash results, the Birthday Paradox means you've got a better than 50% chance of collision if you have more than ~5000 items being hashed. The compartmentalized comparisons (it looks like they only compare against the same tile) means the collision rate will be lower, but there will be collisions, and in some cases they will look terrible.

If there's a glitch, there's an excellent chance it will persist for a while. For example, let's say I'm bringing up a "pause" menu. If the title overlay of the pause menu happens to hash to the same value as the background that it replaced, that one tile of the pause menu title won't appear. Next frame, it still won't appear, because again the hash value hasn't changed. Until something happens to actually change the title or make it disappear, the glitch will persist.

The "fix" will be to do GUI overlays in translucency and keep the background animating somehow to prevent cache misses from sticking around, but it's an ugly hardware hack that will force software workarounds.
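As a rough check on the birthday estimate in the comment above, here is a minimal sketch of the arithmetic. It assumes an ideal, uniformly distributed hash (which a real CRC only approximates), and the function names are hypothetical. Under that assumption the 50% point for a 32-bit hash lands near 77,000 items, not ~5,000. It also separates the two cases the comment raises: a collision anywhere among many hashed items, versus a changed tile happening to match the one signature previously stored for that same tile.

```python
import math


def birthday_threshold(hash_bits: int, p: float = 0.5) -> int:
    """Approximate item count at which the chance of at least one
    collision among uniformly hashed items reaches p."""
    space = 2 ** hash_bits
    # Standard approximation: n ~ sqrt(2 * N * ln(1 / (1 - p)))
    return math.ceil(math.sqrt(2 * space * math.log(1 / (1 - p))))


def false_match_probability(hash_bits: int, comparisons: int) -> float:
    """Chance that at least one of `comparisons` independent checks of a
    changed tile against a single stored signature matches by accident."""
    per_check = 1 / 2 ** hash_bits
    # 1 - (1 - per_check) ** comparisons, computed in a numerically stable way
    return -math.expm1(comparisons * math.log1p(-per_check))


if __name__ == "__main__":
    print("50% birthday point, 32-bit:", birthday_threshold(32))
    print("50% birthday point, 64-bit:", birthday_threshold(64))
    # Hypothetical workload: one million changed-tile comparisons.
    print("false match in 1e6 checks, 32-bit:",
          false_match_probability(32, 1_000_000))
```

The same-tile comparison is the relevant case for transaction elimination as described in this thread, so the second function is the more useful of the two; how often a false match would actually be visible depends on workload details not covered here.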

EdvardS - Thursday, July 3, 2014 - link

What if the CRC is 64 bit for each small tile? Quite overkill and very hard to break.

mkozakewich - Sunday, July 6, 2014 - link

If the pause screen fades in, wouldn't that change the image data enough that it would generate entirely different CRCs for each frame until the animation ended?

At any rate, my rule of thumb would be that if it doesn't happen more often than a keyframe is dropped in a video, it'll be fine.
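The per-buffer bookkeeping discussed in this thread can be sketched roughly as follows. This is an illustration only, not ARM's implementation: `TileSignatureCache`, `write_tile`, and the `memory` dictionary are hypothetical names, and `zlib.crc32` merely stands in for whatever signature the hardware actually computes.

```python
import zlib


class TileSignatureCache:
    """Hypothetical store of one signature per tile, per swap-chain buffer."""

    def __init__(self, num_buffers: int, num_tiles: int):
        # None means "nothing has been written to this tile yet".
        self._sigs = [[None] * num_tiles for _ in range(num_buffers)]

    def write_tile(self, buffer_index: int, tile_index: int,
                   pixels: bytes, memory: dict) -> bool:
        """Write a rendered tile unless its signature matches what this
        buffer already holds. Returns True if the write happened."""
        sig = zlib.crc32(pixels)  # stand-in for the hardware signature
        if self._sigs[buffer_index][tile_index] == sig:
            return False  # same signature as last time: skip the write
        memory[(buffer_index, tile_index)] = pixels
        self._sigs[buffer_index][tile_index] = sig
        return True


if __name__ == "__main__":
    cache = TileSignatureCache(num_buffers=2, num_tiles=1)
    memory = {}
    tile = bytes([0x10]) * 16  # toy tile contents
    for frame in range(4):
        target = frame % 2  # alternate between the two buffers
        written = cache.write_tile(target, 0, tile, memory)
        print(f"frame {frame} -> buffer {target}: written={written}")
```

Because each buffer carries its own signatures, frame N is compared against the last frame rendered into the same buffer (N-2 with two buffers), which is the point raised at the top of the thread. A static tile costs one write per buffer before the skipping starts.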

Krysto - Thursday, July 3, 2014 - link

I'd like you guys to do more 30x GFX loops that show whether these GPUs only put up high numbers at first, but then quickly get throttled. From what I noticed Imagination is the only one that doesn't throttle in that test. But I'm curious to see if K1 and T760 do.