ADATA Premier SP610 SSD (256GB & 512GB) Review: Say Hello to an SMI Controller

by Kristian Vättö on June 27, 2014 2:00 PM EST. Posted in Storage, SSDs, ADATA, SP610, Silicon Motion

Performance Consistency

Performance consistency tells us a lot about the architecture of these SSDs and how they handle internal defragmentation. The reason SSDs do not deliver consistent IO latency is that all controllers inevitably have to do some amount of defragmentation or garbage collection in order to keep operating at high speeds. When and how an SSD decides to run its defrag or cleanup routines directly affects the user experience, because inconsistent performance results in application slowdowns.

To test IO consistency, we fill a secure erased SSD with sequential data to ensure that all user accessible LBAs have data associated with them. Next we kick off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. The test is run for just over half an hour and we record instantaneous IOPS every second.
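To make the access pattern concrete, here is a minimal sketch of the write stream described above, assuming the drive is treated as a flat byte range. It only generates 4KB-aligned offsets spread over the full LBA span plus random (incompressible) payloads; it does not touch a real device, does not model the QD32 queue, and is not the benchmark tool used for this review. The function name `random_4k_writes` is hypothetical.

```python
import os
import random

BLOCK = 4096  # 4KB transfer size used by the test


def random_4k_writes(capacity_bytes, count, seed=0):
    """Yield (offset, payload) pairs for 4KB-aligned random writes.

    Offsets are drawn uniformly across the whole LBA range (100% span).
    Payloads come from os.urandom, so they are effectively incompressible.
    Queue depth (QD32 in the test) is not modeled here.
    """
    rng = random.Random(seed)
    blocks = capacity_bytes // BLOCK  # number of 4KB-aligned slots
    for _ in range(count):
        offset = rng.randrange(blocks) * BLOCK
        yield offset, os.urandom(BLOCK)


if __name__ == "__main__":
    # Hypothetical 256GB (decimal) drive; print the first few writes.
    for off, data in random_4k_writes(256 * 10**9, 3):
        print(off, len(data))
```

Random payloads matter because a compressing controller would otherwise write less to NAND than the host sends, which would flatter the steady-state numbers.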

We are also testing drives with added over-provisioning by limiting the LBA range. This gives us a look into the drive’s behavior with varying levels of empty space, which is frankly a more realistic approach for client workloads.
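Limiting the LBA range comes down to simple arithmetic. This sketch assumes that "25% OP" means a quarter of the user-visible capacity is never written. That reading matches the "384GB" label the review later uses for the over-provisioned 512GB SP610. The helper names are hypothetical, and the sector count is simplified: it assumes decimal gigabytes and 512-byte sectors, whereas real drives report a slightly different LBA count.

```python
def usable_capacity_gb(advertised_gb, op_fraction):
    """LBA span (GB) the test writes to when op_fraction of the
    user-visible capacity is left untouched as extra over-provisioning."""
    if not 0 <= op_fraction < 1:
        raise ValueError("op_fraction must be in [0, 1)")
    return advertised_gb * (1 - op_fraction)


def lba_count(capacity_gb, sector_bytes=512):
    """Simplified sector count for a decimal-GB span (512-byte sectors assumed)."""
    return int(capacity_gb * 10**9) // sector_bytes


for size in (256, 512):
    span = usable_capacity_gb(size, 0.25)
    print(f"{size}GB at 25% OP -> {span:.0f}GB, ~{lba_count(span):,} LBAs")
```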

Each of the three graphs has its own purpose. The first covers the whole duration of the test in log scale. The second and third ones zoom into the beginning of steady-state operation (t=1400s) but on different scales: the second uses log scale for easy comparison, whereas the third uses linear scale to better visualize the differences between drives.

For a more detailed description of the test and why performance consistency matters, read our original Intel SSD DC S3700 article.

[Graph: 4KB random write IOPS over the full test duration, log scale. Drives: ADATA SP610, ADATA SP920, JMicron JMF667H (Toshiba NAND), Samsung SSD 840 EVO mSATA, Crucial MX100. Configurations: Default, 25% OP]

Ouch, this doesn't look too promising. The SMI controller seems to be very aggressive when it comes to steady-state performance: as soon as there is an empty block, it prioritizes host writes over internal garbage collection. The result is fairly inconsistent performance. One second the drive is pushing over 50K IOPS, but then it must do garbage collection to free up blocks, and IOPS drop to ~2,000. Even with added over-provisioning the behavior continues, although more IOs now happen at the higher speed because the drive has to do less internal garbage collection to free up blocks.
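The "fast burst, then stall" pattern can be summarized numerically from a per-second IOPS log. The sketch below computes mean and minimum IOPS, the coefficient of variation, and the share of slow seconds. The traces are synthetic and only borrow the ~50K and ~2,000 IOPS levels quoted above. The 1:3 and 2:2 fast-to-slow ratios are invented for illustration and are not measured SP610 data, and the function name and the 5,000 IOPS threshold are hypothetical.

```python
from statistics import mean, pstdev


def summarize(iops_per_second, slow_threshold=5000):
    """Summarize a per-second IOPS trace.

    Returns mean and minimum IOPS, the coefficient of variation
    (population standard deviation divided by the mean), and the
    share of seconds that fall below slow_threshold.
    """
    avg = mean(iops_per_second)
    slow = sum(1 for x in iops_per_second if x < slow_threshold)
    return {
        "mean": avg,
        "min": min(iops_per_second),
        "cv": pstdev(iops_per_second) / avg,
        "slow_share": slow / len(iops_per_second),
    }


# Synthetic default trace: one fast second, then three seconds of GC.
trace = [50_000, 2_000, 2_000, 2_000] * 10
# Synthetic over-provisioned trace: more seconds spent at the fast level.
trace_op = [50_000, 50_000, 2_000, 2_000] * 10

print(summarize(trace))
print(summarize(trace_op))
```

In this toy model the floor stays at 2,000 IOPS either way. Extra over-provisioning only raises the share of fast seconds, and that is what lifts the average.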

This may have something to do with the fact that the SM2246EN controller only has a single core. Most controllers today are at least dual-core, which means that, in one simple scenario, one core can be dedicated to host operations while the other handles internal routines. Of course, core utilization is likely much more complex than that, and manufacturers are not usually willing to share this information, but it would explain why the SP610 shows such a large variance in performance.

As we are dealing with a budget mainstream drive, I am not going to be that harsh about the IO consistency. Most users are unlikely to put the drive under a heavy 4KB random write load anyway, so for light and moderate usage the drive should do just fine. ~2,000 IOPS at the lowest is not even that bad, and it's still a large step ahead of any HDD.
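One way to put ~2,000 IOPS in context is to convert it to throughput at the test's 4KB transfer size. This sketch assumes 4KB = 4,096 bytes and reports decimal MB/s. The HDD figure is an assumed ballpark of about 100 random IOPS for a 7,200 RPM desktop drive. It is not a measurement from this review.

```python
def iops_to_mb_per_s(iops, transfer_bytes=4096):
    """Convert IOPS at a fixed transfer size to decimal MB/s."""
    return iops * transfer_bytes / 1_000_000


worst_case_ssd = iops_to_mb_per_s(2_000)  # SP610's lowest steady-state level
burst_ssd = iops_to_mb_per_s(50_000)      # the ~50K IOPS bursts
hdd_assumed = iops_to_mb_per_s(100)       # assumption: ~100 random IOPS for an HDD

print(f"SP610 floor: {worst_case_ssd:.1f} MB/s")
print(f"SP610 burst: {burst_ssd:.1f} MB/s")
print(f"HDD (assumed): {hdd_assumed:.2f} MB/s")
print(f"Floor vs. assumed HDD: {worst_case_ssd / hdd_assumed:.0f}x")
```

Under that assumption, even the SP610's floor delivers roughly 20 times the random-write throughput of a hard drive.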

[Graph: 4KB random write IOPS from the start of steady state (t=1400s), log scale. Drives: ADATA SP610, ADATA SP920, JMicron JMF667H (Toshiba NAND), Samsung SSD 840 EVO mSATA, Crucial MX100. Configurations: Default, 25% OP]

Just to put things in perspective, however, even the over-provisioned "384GB" SP610 ends up offering worse consistency than the 128GB JMicron JMF667H SSD. Pricing will need to be very compelling if this drive is going to stand up against drives like the Crucial MX100.

[Graph: 4KB random write IOPS from the start of steady state (t=1400s), linear scale. Drives: ADATA SP610, ADATA SP920, JMicron JMF667H (Toshiba NAND), Samsung SSD 840 EVO mSATA, Crucial MX100. Configurations: Default, 25% OP]

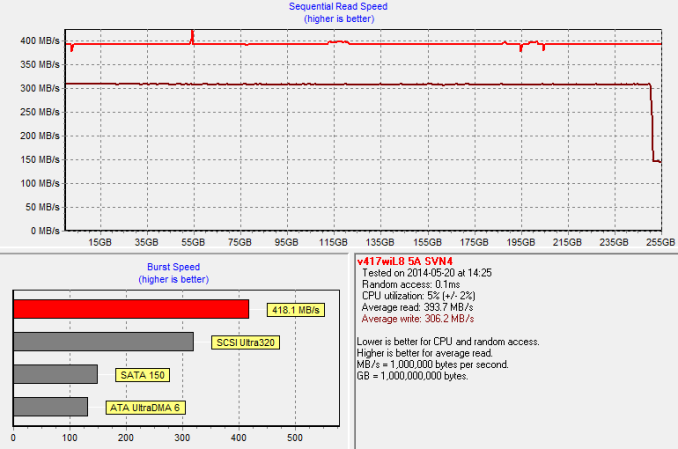

TRIM Validation

To test TRIM, I first filled all user-accessible LBAs with sequential data and then tortured the drive with 4KB random writes (100% LBA, QD=32) for 30 minutes. After the torture, I TRIMed the drive (with a quick format in Windows 7/8) and ran HD Tach to make sure TRIM is functional.

And it is.

24 Comments


mapesdhs - Friday, June 27, 2014

I'd say go with the EVO; Samsung drives have excellent long-term consistency, at least that's what I've found from the range of models I've obtained.

Looking at the initial spec summary, the SP610 just seems like a slower MX100, which puts it below the EVO or any other drive in that class, so unless it's priced like the MX100 I wouldn't bother with it.

Kristian, may I ask, why are there so many models missing from the tables? e.g. Vector/150, Neutron GTX, M500, V300, Force Series 3, M550, M5Pro Extreme, etc. I'm glad the Extreme II is there though, that's quite a good model atm.

Would be interesting to include a few older ones too, i.e. to see how performance has moved on from the likes of the Vertex3/4 and others from bygone days. I still bag Vertex4s and original Vectors if I can as they hold up very well to current models, though this week I snapped up four 128GB Extreme IIs (45 UKP each) as their IOPS rating for a 128GB seems ideal for tasks like a big Windows paging drive in a system with 64GB RAM.

Ian.

PS. Obvious point btw, perhaps ADATA can improve the consistency issue with a fw update?

stickmansam - Friday, June 27, 2014

The SP610 actually seems to be about the same as the EVO and MX100 based on the overall results. The firmware and controller actually seem pretty competitive.

I do agree that more drives should be compared if possible. Even the Bench tool seems to be missing drives that were in reviews in the past.

dj_aris - Friday, June 27, 2014

Why are we still testing SATA 3 drives anyway?

mapesdhs - Friday, June 27, 2014

Because the vast majority of people still want to know how they perform. Remember there will be many with older SSDs who are perhaps considering an upgrade by now, from the likes of the venerable Crucial M4/V4, Vertex2/3, older Intels, Samsung 830, etc.

For newer reviews, it's less the sequential rates and more about the random behaviour, consistency, and other features like encryption that people want to know about now, especially with so many being used in laptops, notebooks, etc. I also like to know how what's being offered anew compares wrt pricing, i.e. are things really getting better?

It's great that 1TB models are finally available, but I still yearn for the day when SSDs can exceed HDDs in offered capacities. I read that SanDisk seem determined to push this forward as quickly as possible, moving to 2TB+ next year. I certainly hope so. Nothing wrong with having 4TB+ rust-spinners, but backing them up is a total pain (and quite frankly anyone who uses a 4TB non-Enterprise SATA HDD to hold their precious data is nuts). By contrast, having 4TB+ SSDs at least means doing backups wouldn't be slow. When I use Macrium to create a backup image of a 256GB C-drive SSD onto some other SSD, the speeds achieved really are impressive.

I guess the downside will be that, inevitably at first, high-capacity SSDs will be expensive purely because it'll be possible to sell them at high prices no problem, whatever they actually cost to make. I just hope at least one vendor will break away from the price gouging for a change and really move this forward; if nothing else, they'll grab some hefty market share if they do.

Ian.

name99 - Friday, June 27, 2014

"The benefit of ARC is that it is configurable and the client can design the CPU to fit the task, for example by adding extra instructions and registers. Generally the result is a more efficient design because the CPU has been designed specifically for the task at hand instead of being an all around solution like the most ARM cores are."

This is marketing speak. In future, rather than just repeating the claims about why "CPU you've never heard of is more awesome than anything you've actually heard of", please provide numbers to back up the claim, or ditch the PR speak.

If this CPU is "more efficient" than, e.g., an ARM (or MIPS or PPC) competitor, let's have some power numbers.

My complaint is not that they are using ARC --- they can use whatever CPU they like. My complaint is that the two sentences I quoted are absolutely no different from simply telling us, e.g. "this SSD is more efficient than its competitors" with no data to back that up. Tech claims require data. If SMI aren't willing to provide data to back up a tech claim, you shouldn't be printing their advertising in a tech story.

Kristian Vättö - Saturday, June 28, 2014

Nothing regarding the controller's architecture came from ADATA or SMI. In fact, I got the ARC part from Tom's Hardware, although I added the parts about ARC's benefits. If I just put ARC there and leave out the explanation, what is the usefulness of that? Yay, yet another acronym that means absolutely nothing to the reader unless it is explained to them.

To be clear, I did not mean that an ARC CPU is always more efficient in every task. However, for a specific task with a limited set of operations (like in an SSD), it usually is, because the design can be customized to remove unnecessary features or add ones that are needed. It's not an "ARM killer"; it is simply an alternative option that can suit the task better by removing some of the limitations that off-the-shelf CPU designs have. Ultimately the controller is just a piece of silicon, and everything it does is operated by the firmware.

epobirs - Saturday, June 28, 2014

After all of these years, when I see the Argonaut name I find myself wondering when a new Star Glider will be published. (Star Fox doesn't count other than spiritually.)

s44 - Saturday, June 28, 2014

How do we know this is even going to be the controller in future units of this model? The bait-and-switch with the Optima deserves more than passing mention, I think.

hojnikb - Saturday, June 28, 2014

Well, to be fair, the SandForce version of the Optima is faster, so really, you're getting a better drive. Still not okay, but not nearly as bad as Kingston's bait and switch.

smadhu - Sunday, June 29, 2014

To muddy the controller waters further, we are planning to launch a fully open source NVM Express controller this year. This is from IIT-Madras, an Indian university, in conjunction with the IT Univ. of Copenhagen. The development itself is in public; the main source is at bitbucket.org/casl/ssd-controller. This is part of a larger open storage stack project called lightstor, see lightstor.org. Lightstor is an extremely ambitious effort to reinvent storage from the controller up to the application stack. The SW stack, called Lightnvm, is up and running on a Linux branch. Google Lightnvm. There is also an emulator to run it now.

We will be launching a Xilinx based PCIe card with our controller IP and open source CPU (1-4 cores). The CPU is based on the RISC-V ISA from UCB, another partner of ours. The PCIe EP and ONFI will have to be proprietary initially, but we will be completing our open source ONFI 4.0 early next year. All PHY will still have to be 3rd party since we do not want to get into analog PHY development.

Add a SATA controller instead of PCIe and you have a SATA SSD.

All HW source is BSD licensed, so anyone can download it and tape it out with no copyleft hassles. The final version should be the fastest controller out there. The idea is to beat every controller out there and not just launch a univ. test bed. The core currently runs at 700 MHz on a 32-bit datapath.

If anybody wants to help in testing, benchmarking or coding, drop us a note. If nothing else, have fun going through the source of an SSD controller. It is a lot of fun. The language is Bluespec, a very high level HDL which is easy for SW geeks to understand. It hides a lot of the HW plumbing. It comes from MIT, another collaborator of ours, so we are partial to the language.

Hopefully we will do to the storage world what Linux did to the OS world!

The core is being benchmarked right now; we hope to publish something by early winter.

I apologize for what technically is an ad for our project, but I figure a BSD licensed open source SSD controller qualifies for free ads!