Imagination's PowerVR Rogue Architecture Explored

by Ryan Smith on February 24, 2014 3:00 AM EST

Posted in: GPUs, Imagination Technologies, PowerVR, PowerVR Series6, SoCs

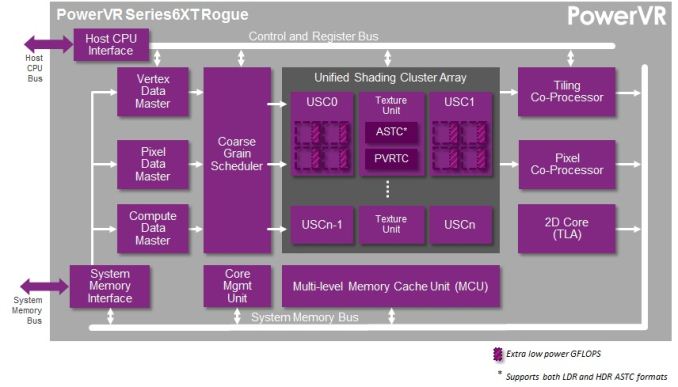

Imagination’s PowerVR Rogue Series 6/6XT USCs Dissected

Diving right into the heart of the matter, it’s probably best to start with a partial explanation for why Imagination is being so forward about their architecture after all this time.

Imagination’s principal blog post, Graphics cores: trying to compare apples to apples, opens with an argument over just what a “core” is and how it should be counted. Imagination doesn’t name any names, but from the context of their blog it’s clear that they’re worried about being in a core war and losing based on who’s counting cores and how. Time and time again we’ve seen chip and device makers promote their products both by their clockspeeds and their number of cores, leading to both MHz wars and core wars.

Both of these pieces of data are in fact absolutely essential to understanding a product, but consumers – rightfully uninterested in needing a computer engineering degree to understand their purchases – will latch on to one or both numbers, which in turn rewards whoever can push a product with the highest clockspeed or the highest number of cores. The latter in turn can result in some very creative accounting, such as the Motorola X8, where components that aren’t traditionally called a core end up in the core count.

In the SoC GPU space there thankfully isn’t any creative accounting going on, but the nature of the Rogue architecture combined with Imagination’s previous method of counting cores would leave Imagination shooting themselves in the foot if they were to get into a core war. If nothing else, the fact that Imagination has previously used the term “core” to define a single USC, which depending on the specific design may be replicated several times, means that they end up having relatively low core counts.

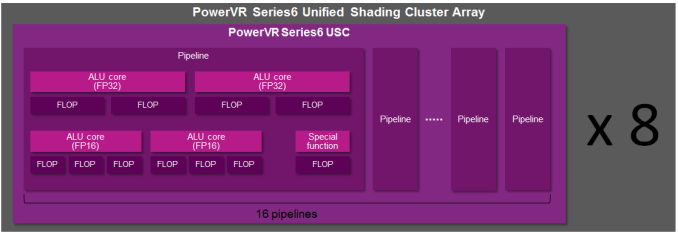

Compounding that matter is that at a pipeline or shader core level (there’s that word again), Rogue has several FP32 (or FP16) ALUs when other architectures may only have one. So even if one were to count pipelines, Imagination would come up with just 16 cores in a USC.

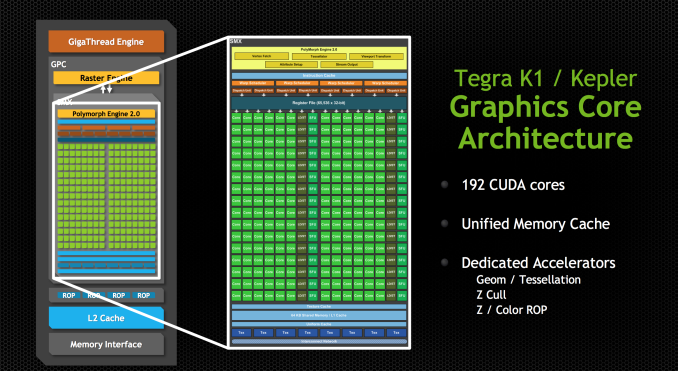

Their competition and impetus – and again Imagination hasn’t named any names – would appear to be NVIDIA, who just recently announced their Tegra K1 SoCs. A K1 contains 192 CUDA cores, each much narrower than a Rogue pipeline. So even though a high-end Rogue design would have 6 or so USCs, that would only be 96 pipelines versus 192 CUDA cores. Imagination clearly doesn’t want to be dismissed based on this metric, hence their interest in arguing what a core is, and more importantly part of the reason for their desire to open up and discuss their architecture so that we can understand why this difference matters. Imagination may only have 96 pipelines, but they have many more ALUs in each one of those pipelines.
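To make the counting argument concrete, here is a rough sketch of the arithmetic in Python. The figures (16 pipelines per USC, a 6 USC design, 192 CUDA cores) come from the text above; the function and constant names are ours and purely illustrative.

```python
# Illustrative only: the same GPU yields very different "core counts"
# depending on which unit is being counted.

PIPELINES_PER_USC = 16     # from the text: 16 pipelines in one Rogue USC
TEGRA_K1_CUDA_CORES = 192  # from the text: NVIDIA's stated count for K1


def rogue_core_counts(num_uscs: int) -> dict:
    """Return core counts for a Rogue design under two counting schemes."""
    return {
        # Imagination's older convention: one "core" is one USC.
        "uscs": num_uscs,
        # Alternative convention: every pipeline counts as a core.
        "pipelines": num_uscs * PIPELINES_PER_USC,
    }


counts = rogue_core_counts(6)  # a high-end design with "6 or so" USCs
print(counts)
print("Rogue pipelines vs. K1 CUDA cores:",
      counts["pipelines"], "vs.", TEGRA_K1_CUDA_CORES)
```

Neither number says anything about how much work each unit does per cycle, which is exactly the point Imagination is trying to make.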

The Rogue

And that brings us to the Rogue architecture. For today’s article and blog releases, Imagination has given us some surprisingly deep details on the Rogue USC, from which we can finally paint a picture of how thread execution works on Rogue, and furthermore gather some idea on what Rogue’s strengths are and what weaknesses it faces.

We’ll begin with differentiating between Rogue as in PowerVR Series 6, and Series 6XT. Series 6XT was the recently announced refresh for the Series 6 family, intending to further improve on PowerVR performance by undertaking several optimizations to the design. At the time of that announcement we didn’t have very many details on what those optimizations were, and now we have a much better idea.

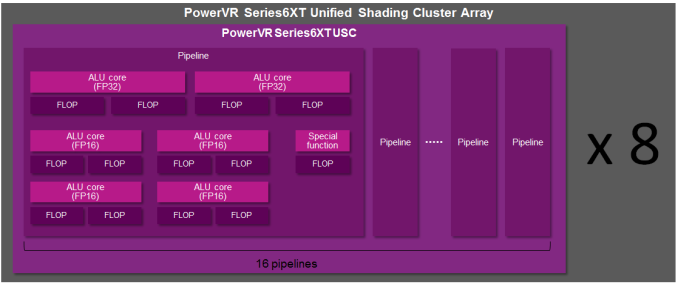

It turns out that Imagination has been doing some tinkering under the hood, and while the number of FP32 slots remains unchanged, the FP16 slots have been altered. Wait, did we say FP16 slots?! Oh yes!

It’s a shame we don’t have similarly low-level details for other SoC architectures, but from a PC perspective it’s extremely interesting that Imagination has FP16 slots. As a quick refresher, on the PC side GPUs have been using FP32 operations exclusively for quite some time, and all ALUs have been FP32 for years. But of course mobile trails PC in functionality and shader complexity, so while FP16 usage is rarer in PC games and applications, it can be very common in the mobile space.

The tradeoff of course is performance for accuracy. A 16bit floating point number is pretty imprecise – a 16bit value only gives us 65K combinations – hence it’s rarely used in the PC space in favor of far more accurate 32bit floating point numbers, which offer 4 billion combinations. But a 16bit value is smaller than a 32bit value, requiring less memory to store, less bandwidth to move, less energy to compute, and fewer transistors to operate on. So in the mobile space FP16 is still in use, both on the software side and the hardware side, with developers taking care not to let the lower precision cause rendering errors.
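For a feel of what that precision gap looks like in practice, the short Python sketch below stores the same value at both widths and measures the error. It assumes the IEEE 754 half and single precision formats as exposed by Python's `struct` module; whether a given mobile GPU's FP16 path behaves identically is not something the source confirms.

```python
import struct


def round_trip(value: float, fmt: str) -> float:
    """Store value in the given struct format, then read it back."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


# Distinct bit patterns per width. Not every pattern is a usable number,
# since some encode infinities and NaNs.
fp16_patterns = 2 ** 16  # the "65K combinations"
fp32_patterns = 2 ** 32  # the "4 billion combinations"

value = 0.1
fp16_error = abs(round_trip(value, "<e") - value)  # "e": half precision
fp32_error = abs(round_trip(value, "<f") - value)  # "f": single precision

print(f"bit patterns: FP16 {fp16_patterns}, FP32 {fp32_patterns}")
print(f"error storing {value}: FP16 {fp16_error:.2e}, FP32 {fp32_error:.2e}")

# Whole numbers stop being exactly representable much sooner in FP16.
print("2049 stored as FP16 reads back as", round_trip(2049.0, "<e"))
```

For color values that end up in an 8-bit-per-channel framebuffer this error is usually invisible, which is why FP16 remains attractive for fragment shading on mobile.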

The interesting part on the hardware side is the implementation of dedicated FP16 units, versus just running the values on FP32 units. In the PC space we do the latter, but of course FP16 operations are relatively rare. The catch being that it’s not the most efficient way to execute FP16 operations; we’re firing up a lot of extra transistors and burning extra power to do it. In the PC space this is a reasonable tradeoff, but in the mobile space it’s clear that Imagination has decided otherwise.

The end result being that Rogue has dedicated FP16 units, not unlike how NVIDIA has dedicated FP64 CUDA cores on their desktop architectures. FP16 units take up additional die space – you have to place them alongside your FP32 units – but when you need to compute an FP16 number, you don’t have to fire up a more expensive FP32 unit to do the work. Hence the inclusion of FP16 units is a tradeoff between die size and performance, with the additional performance coming from the reduction in power consumption and heat when operating on FP16 values.

That little segue aside, let’s get back to the difference between Series 6 and Series 6XT. With Series 6, Imagination has an interesting setup where their FP16 ALUs can process up to 3 operations in one cycle.

As a refresher, for a more traditional ALU we say that one ALU can achieve 2 FLOPs in one cycle because we typically count the most dense operation, the Multiply Addition (MAD), otherwise seen as a Multiply Accumulate (MAC) or Fused Multiply Addition (FMA). MAD is a special case where one ALU can do the multiply and the accumulate in one cycle, one of a handful of scenarios where multiple operations can occur at once. MADs and their variants are relatively common in graphics work, hence for GPU performance this is the number we usually quote as it’s especially useful in graphics, and being the bigger number it also makes manufacturers happier, too.
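As a small illustration of that counting convention, the Python sketch below expresses a dot product as a chain of MADs and tallies 2 FLOPs per MAD, then applies the same factor to a peak throughput estimate. The ALU count and clockspeed in the usage line are hypothetical placeholders, not figures for any real part.

```python
def mad(a: float, b: float, c: float) -> float:
    """One multiply-add, a * b + c, counted as 2 FLOPs (multiply + add)."""
    return a * b + c


def dot(xs, ys):
    """Dot product as a chain of MADs; returns (result, FLOPs counted)."""
    acc = 0.0
    flops = 0
    for x, y in zip(xs, ys):
        acc = mad(x, y, acc)  # one ALU, one cycle on MAD-capable hardware
        flops += 2
    return acc, flops


def peak_gflops(num_alus: int, clock_mhz: float,
                flops_per_alu_per_cycle: int = 2) -> float:
    """Theoretical peak, assuming every ALU issues a MAD every cycle."""
    return num_alus * flops_per_alu_per_cycle * clock_mhz / 1000.0


print(dot([1.0, 2.0, 3.0], [4.0, 5.0, 6.0]))  # 3 MADs, so 6 FLOPs
print(peak_gflops(num_alus=32, clock_mhz=500.0), "GFLOPS (hypothetical part)")
```

Real workloads rarely sustain a MAD on every ALU every cycle, so the quoted peak is an upper bound rather than an expected result.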

With that said, Imagination’s FP16 ALUs are more intricate than their FP32 ALUs, especially under Series 6. Unfortunately Imagination is unable to share the complete details with us of why this is (as we've noted before, they’re not yet fully open), but it’s clear from this diagram that the Series 6 FP16 ALU has been optimized for some kind of 3 operator instruction. What that operation is we don’t know, but presumably it was important enough for Imagination to give their FP16 ALUs the ability to do it in one cycle.

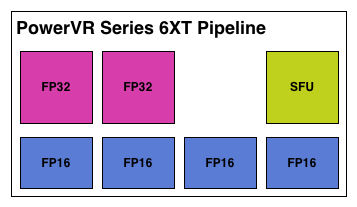

But from the perspective of an individual pipeline it’s interesting to see that with Series 6XT Imagination has shaken things up by "adding" 2 more FP16 ALUs. Keeping in mind that these are logical diagrams and not physical diagrams – in reality these ALUs are almost certainly tied together in a limited fashion rather than standing alone – Imagination has changed it so that Series 6XT can process more 2 operator FP16 instructions such as MAD. Without more details it’s hard to say for sure what’s going on, but if these “new” FP16 ALUs are really just modifications of the old FP16 ALUs, then it would be a safe assumption that Imagination can still execute their 3 operator instructions over what’s logically 2 ALUs, making these new FP16 ALUs a wider variant of the previous ALU.

Otherwise from this point of view Series 6 and Series 6XT are nearly identical. We have the 2 FP32 ALUs, and then for special functions – transcendentals and the like – a dedicated special function unit exists off to the side. On that note, for the rest of this article we will primarily be focusing on Series 6XT since it’s the newer architecture, but with the exception of FP16 operations it will be equally applicable to both Series 6 and 6XT.
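Putting the per-pipeline numbers side by side, a minimal Python sketch of the peak arithmetic might look like the following. The 2 FP32 ALUs, the 3 operations per cycle FP16 ALUs on Series 6, and the "2 more" FP16 ALUs on Series 6XT are from the text; the Series 6 starting count of 2 FP16 ALUs is our reading of the diagrams and should be treated as an assumption, as should the class and field names.

```python
from dataclasses import dataclass

PIPELINES_PER_USC = 16  # from the text


@dataclass(frozen=True)
class Pipeline:
    """Logical ALU layout of one Rogue pipeline (peak figures only)."""
    name: str
    fp32_alus: int
    fp32_flops_per_alu: int
    fp16_alus: int
    fp16_flops_per_alu: int

    def fp32_flops_per_cycle(self) -> int:
        return self.fp32_alus * self.fp32_flops_per_alu

    def fp16_flops_per_cycle(self) -> int:
        return self.fp16_alus * self.fp16_flops_per_alu


# Assumption: Series 6 has 2 FP16 ALUs, each able to do 3 operations per cycle.
series6 = Pipeline("Series 6", fp32_alus=2, fp32_flops_per_alu=2,
                   fp16_alus=2, fp16_flops_per_alu=3)
# Assumption: Series 6XT has 2 + 2 = 4 FP16 ALUs, each doing a 2 operation MAD.
series6xt = Pipeline("Series 6XT", fp32_alus=2, fp32_flops_per_alu=2,
                     fp16_alus=4, fp16_flops_per_alu=2)

for p in (series6, series6xt):
    print(f"{p.name}: FP32 {p.fp32_flops_per_cycle()} and "
          f"FP16 {p.fp16_flops_per_cycle()} FLOPs/cycle per pipeline, "
          f"FP16 {p.fp16_flops_per_cycle() * PIPELINES_PER_USC} per USC")
```

Under these assumptions the FP32 peak is unchanged between the two generations, while the FP16 peak rises only for workloads made up of 2 operator instructions such as MAD.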

95 Comments


Scali - Monday, February 24, 2014

"the alternative to DirectX is OpenGL. Is it more suited for TBDR architecture than "brute force" ones?"

In theory, D3D used to be more suited to TBDR than OpenGL, because it had explicit BeginScene() and EndScene() markers. But those have been dropped after D3D9.

I can't really think of anything off the top of my head that would make one API more suited than the other these days. They're both very similar: just bind your textures/buffers/shaders, update the constants, and fire off your geometry.

"How is PowerVR going to fight against an architecture as flexible as nvidia ones that can also be used for CUDA computing and thus being adopted into markets (and for other tasks) PowerVR cannot with their current architecture?"

PowerVR supports OpenCL, so they too can do the GPGPU-game. It all depends on who delivers the best package in terms of features, performance, power usage, etc.

Jhwzz - Tuesday, February 25, 2014

> Again, as someone already asked, tile based rendering was used on the desktop but was soon
> abandoned as it could not give any real advantages over the raw power of other architectures
> that grew much faster than what PowerVR could optimize their algorithms, making tile based
> rendering less and less profitable. What makes that scenario different than what we are
> witnessing in this period where mobile resolutions are growing to be even bigger than desktop
> monitors and that games complexity is going to increase for the arrival of these really powerful
> GPUs (K1 in primis)?

It is a common misconception that PowerVR's desktop parts were not competitive or had compatibility problems. The last card they produced, the Kyro II, was actually very competitive with the offerings from both NVidia and ATi. The claims of incompatibility were largely unfounded marketing FUD from competitors, with later drivers running the majority of content without problems. Further, they did not leave the market because they could not compete on performance; instead their partner at the time, STM, decided to pull out of the market for unstated reasons, although this was most likely due to them not being able to invest the amount of money they needed to in order to take the high ground.

Mobile is VERY different to the desktop space. NV and ATi were able to brute force their way to the top as both power consumption and memory bandwidth had extremely wide envelopes; this is not the case in the mobile space. With Kyro II, PowerVR demonstrated that they were able to compete with considerably higher-specification parts (for clock & memory BW) from both NV and AMD, with considerably lower memory BW and power requirements. Although NV and AMD have evolved, so have PowerVR; as such there is no reason to assume that they don't still have advantages.

> It seems PowerVR is behaving a bit like 3DFx did at the time, till it died. They were using their
> advanced but old technology to the extreme, so they rendered at 16bit instead of 32, used 16bit
> Zbuffer instead of 24 and many more "tricks" that were forced to try to hide what was quite
> clear: 3DFX didn't have the right architecture to compete with new companies like nvidia
> and ATI that started their story with the right step and much more powerful architectures
> (TNT2 simply destroyed Voodoo3 under all points, and beware, I was an unfortunate Voodoo3
> owner).

This simply makes no sense in the context of the market these cores are being targeted at. At the fundamental level, the primary API currently used in mobile is OGLES2.0, which does not mandate anything higher than FP16 within fragment shaders. This means that the vast majority of current mobile content only uses FP16 in fragment shaders; in these circumstances do you think it makes sense to throw area at higher precision paths? Of course it doesn’t! Further, it’s not like the PowerVR architecture looks like a slouch at FP32.

At the end of the day the truth will only be seen in benchmarks on actual devices, not in marketing claims and FUD from various companies.

Jhwzz - Tuesday, February 25, 2014

BTW you do realise that NV run many of these benchmarks at 16 bit Z and 16 bit frame buffer, don't you? They do this because they become even less competitive when forced to use 32 bits. So who is actually using old "technology" to hide real deficiencies in their architectures?

MrSpadge - Saturday, March 1, 2014

If you can't see a difference due to using 16 bit somewhere along the rendering path, then it's actually the smart thing to do. It saves power, which can be better used elsewhere (higher clocks). Well, that was actually 3DFX's argument for why Glide with 16 bit + dithering was the smart choice back then. But "they've got the bigger bits" won (along with other advantages of the early nVidias).

MrPoletski - Sunday, March 9, 2014

It might be the smart thing to do, but when it's in a benchmark that's supposed to be at 32 bits then I call that cheating!

allanmac - Monday, February 24, 2014

If a new GPU architecture "deep dive" doesn't include the number of registers per multiprocessor then it's bordering on worthless.

Intel, AMD and NVIDIA all publish these numbers so the other mobile GPU vendors should as well.

Please dig up these numbers since then we can begin to compare these next gen mobile GPUs.

I suspect ARM, ImgTech and QCOM simply won't tell you... but you might be able to find the answer through a series of OpenCL tests.

boostern - Monday, February 24, 2014

OpenCL tests on a GPU that isn't even in production? Before you say "test it on the iphone 5S", there are no public OpenCL libraries available on iOS, as far as I know.

allanmac - Monday, February 24, 2014

The first option is to ask the vendor.

boostern - Monday, February 24, 2014

Yes, maybe :D

MrSpadge - Saturday, March 1, 2014

While this is surely important, the raw number of registers doesn't tell you that much either. In fact, it could be very misleading. How many registers you need first and foremost depends on the out of order window (in CPUs) or, here, the number of threads in flight, which is something they didn't tell us either. Also, different cache sizes and latencies would determine how bad it is to run out of register space.