Free Cooling: the Server Side of the Story

by Johan De Gelas on February 11, 2014 7:00 AM EST. Posted in:

- Cloud Computing

- IT Computing

- Intel

- Xeon

- Ivy Bridge EP

- Supermicro

Loading the Server

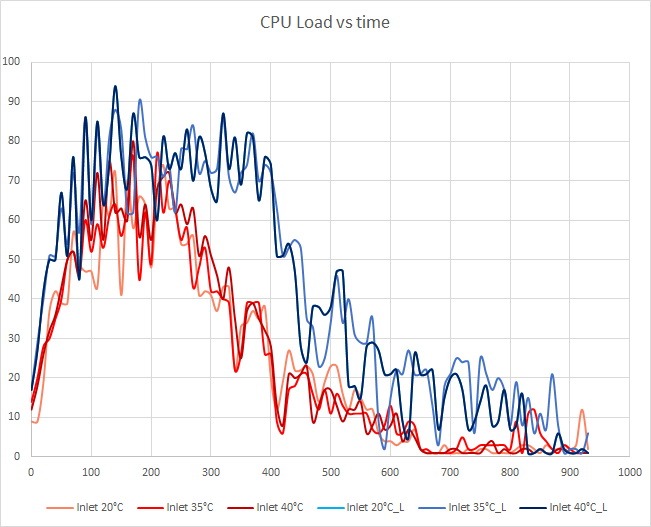

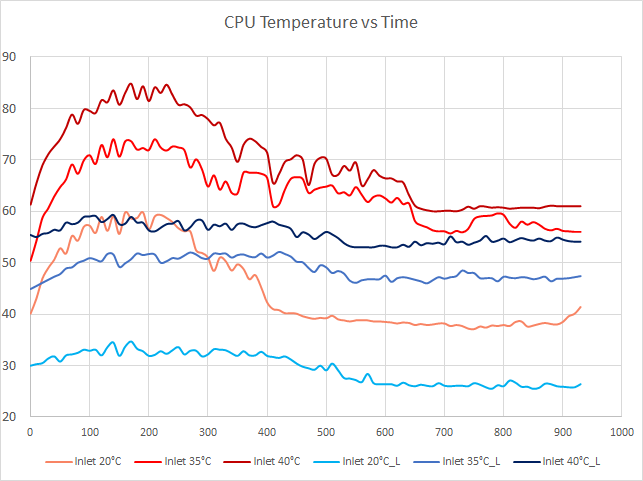

The server first gets a few warm-up runs, after which we measure for about 1000 seconds. The blue lines represent the measurements on the Xeon E5-2650L; the orange/red lines represent the Xeon E5-2697 v2. We test with three settings:

- No heating. Inlet temperature is about 20-21°C, regulated by the CRAC

- Moderate heating. We regulate until the inlet temperature is about 35°C

- Heavy heating. We regulate until the inlet temperature is about 40°C

We start with a stress test: what kind of CPU load do we attain? Our objective is to test a realistic load for a virtualized host, between 20% and 80% CPU load. Peaks above 80% are acceptable, but long periods of 100% CPU load are not.
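The acceptance rule above (peaks allowed, sustained saturation not) can be sketched as a check on a sampled load trace. This is a minimal illustration, not the tooling used for the article: the function names, the 99% saturation threshold, and the 30-sample limit are all assumptions chosen for the example.

```python
from typing import Sequence


def longest_saturated_run(load_pct: Sequence[float], saturated_at: float = 99.0) -> int:
    """Return the length of the longest consecutive run of samples
    at or above `saturated_at` percent CPU load (threshold is an assumption)."""
    longest = current = 0
    for sample in load_pct:
        # Extend the current run on a saturated sample, otherwise reset it.
        current = current + 1 if sample >= saturated_at else 0
        longest = max(longest, current)
    return longest


def load_profile_ok(load_pct: Sequence[float], max_saturated_samples: int = 30) -> bool:
    """True if the trace never stays saturated for more than
    `max_saturated_samples` consecutive samples (limit is an assumption)."""
    return longest_saturated_run(load_pct) <= max_saturated_samples
```

With one sample per second, a trace that briefly touches 94% passes, while one pinned at 100% for more than half a minute does not. What counts as a "long period" is a judgment call; the limit here is only a placeholder.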

There are some small variations between the different tests, but the load curve is very similar on the same CPU. The 2.7GHz 12-core Xeon E5-2697 v2 sees a CPU load between 1% and 78%. During the peak phase, the load is between 40% and 80%.

The 8-core 1.8GHz Xeon E5-2650L is not as powerful and has a peak load of 50% to 94%. Let's check out the temperatures. The challenge is to keep the CPU temperature below the specified Tcase.

The low power Xeon stays well below the specified Tcase. Although it starts at 55°C when the inlet is set to 40°C, the CPU never reaches 60°C.

The results on our 12-core monster are a different matter. With an inlet temperature up to 35°C, the server is capable of keeping the CPU below 75°C (see red line). When we increase the inlet temperature to 40°C, the CPU starts at 61°C and quickly rises to 80°C. Peaks of 85°C are measured, which is very close to the specified 86°C maximum temperature. Those values are acceptable, but at first sight it seems that there is little headroom left.
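To make the headroom explicit, the figures quoted above for the Xeon E5-2697 v2 can be put through a short calculation. This is only an arithmetic sketch: the helper name is hypothetical, and the 35°C entry uses 75°C as an upper bound because the text only states that the CPU stays below that value.

```python
def thermal_headroom(peak_cpu_c: float, tcase_max_c: float) -> float:
    """Margin in °C between the hottest measured CPU temperature and the Tcase limit."""
    return tcase_max_c - peak_cpu_c


# Values taken from the text for the Xeon E5-2697 v2.
TCASE_MAX_C = 86.0

# Inlet temperature (°C) -> highest CPU temperature (°C).
# 75.0 is an upper bound ("below 75°C"); 85.0 is the measured peak.
peak_by_inlet = {35: 75.0, 40: 85.0}

headroom_by_inlet = {
    inlet: thermal_headroom(peak, TCASE_MAX_C)
    for inlet, peak in peak_by_inlet.items()
}

for inlet, margin in headroom_by_inlet.items():
    print(f"inlet {inlet}°C: headroom {margin:.0f}°C")
```

At a 35°C inlet the margin is at least 11°C; at 40°C it shrinks to about 1°C, which is why so little headroom appears to be left.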

The most extreme case would be to fill up all disk bays and DIMM slots and to set inlet temperature to 45°C. Our heating element is not capable of sustaining an inlet of 45°C, but we can get an idea of what would happen by measuring how hard the fans are spinning.

48 Comments

bobbozzo - Tuesday, February 11, 2014 - link

"The main energy gobblers are the CRACs"

Actually, the IT equipment (servers & networking) use more power than the cooling equipment.

ref: http://www.electronics-cooling.com/2010/12/energy-...

"The IT equipment usually consumes about 45-55% of the total electricity, and total cooling energy consumption is roughly 30-40% of the total energy use"

Thanks for the article though.

JohanAnandtech - Wednesday, February 12, 2014 - link

That is the whole point, isn't it? IT equipment uses power to be productive, everything else is supporting the IT equipment and thus overhead that you have to minimize. From the facility power, CRACs are the most important power gobblers.

bobbozzo - Tuesday, February 11, 2014 - link

So, who is volunteering to work in a datacenter with 35-40C cool aisles and 40-45C hot aisles?

Thud2 - Wednesday, February 12, 2014 - link

80,0000, that's sounds like a lot.

CharonPDX - Monday, February 17, 2014 - link

See also Intel's long-term research into it, at their New Mexico data center: http://www.intel.com/content/www/us/en/data-center...

puffpio - Tuesday, February 18, 2014 - link

On the first page you mention "The "single-tenant" data centers of Facebook, Google, Microsoft and Yahoo that use "free cooling" to its full potential are able to achieve an astonishing PUE of 1.15-1."

This article says that Facebook has achieved a PUE of 1.07 (https://www.facebook.com/note.php?note_id=10150148...

lwatcdr - Thursday, February 20, 2014 - link

So I wonder when Google will build a data center in say North Dakota. Combine the ample wind power with cold and it looks like a perfect place for a green data center.

Kranthi Ranadheer - Monday, April 17, 2017 - link

Hi Guys,

Does anyone by chance have a recorded data of Temperature and processor's speed in a server room? Or can someone give me the information about the high-end and low-end values measured in any of the server rooms respectively, considering the equation temperature v/s processor's speed?