ARM and Partners Deliver First ARM Server Platform Standard

by Johan De Gelas on January 29, 2014 1:05 PM EST. Posted in IT Computing, Arm, Enterprise, Enterprise CPUs

The demise of innovator Calxeda and the excellent performance per watt of the new Intel Avoton server were certainly not good omens for the ARM server market. However, there are still quite a few vendors that are aspiring to break into the micro server market.

AMD seems to have the best position, with by far the most building blocks and experience in the server world. The 64-bit, 8-core ARMv8-based Opteron A1100 should see the light of day in the second half of 2014. Broadcom is also well placed and has announced that it will produce a 3 GHz, 16 nm quad-core ARMv8 server CPU. ARM SoC market leader Qualcomm has shown some interest too, but without any real product yet. Capable outsiders are Cavium with "Project Thunder" and AppliedMicro with the X-Gene family.

But unless one of the players mentioned above can grab an Intel-like share of the micro server market, the fact remains that supporting all ARM servers is currently a very time-consuming undertaking. Different interrupt controllers, different implementations of FP units... at this point in time, the ARM server platform simply does not exist. It is a mix of very different hardware, each running its own heavily customized OS kernel.

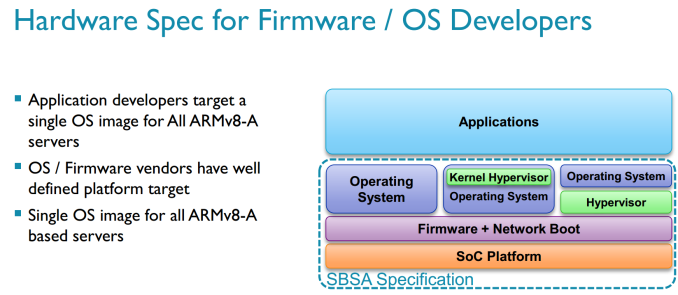

So the first hurdle to clear is developing a platform standard. And that is exactly what ARM is announcing today: the platform standard for ARMv8-A based (64-bit) servers, known as the ARM ‘Server Base System Architecture’ (SBSA) specification.

The new specification is supported by a very broad range of companies: software companies such as Canonical, Citrix, Linaro, Microsoft, Red Hat and SUSE, OEMs (Dell and HP), and the most important component vendors active in this field, such as AMD, Cavium, AppliedMicro and Texas Instruments. In fact, the Opteron A1100 that was just announced adheres to this new spec.

All those partners of course offered comments, but the best one came from Frank Frankovsky, president and chairman of the Open Compute Project Foundation.

"These standardization efforts will help speed adoption of ARM in the datacenter by providing consumers and software developers with the consistency and predictability they require, and by helping increase the pace of innovation in ARM technologies by eliminating gratuitous differentiation in areas like device enumeration and boot process."

The primary goal is to standardize enough of the system architecture that a single OS image can run on all hardware compliant with this specification. That may sound like a fairly simple thing, but in reality it's extremely important for solidifying the ARM ecosystem and making it a viable alternative in the server space.

A few examples of the standard:

- The base server system shall implement a GICv2 interrupt controller

- As a result, the maximum number of CPUs in the system is 8

- All CPUs must have Advanced SIMD extensions and cryptography extensions

- The system uses generic timers (specified by ARM)

- CPUs must implement the described power state semantics

- USB 2.0 controllers must conform to EHCI 1.1, USB 3.0 controllers to XHCI 1.0, and SATA controllers to AHCI v1.3
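To make the idea of "one OS image for all compliant hardware" a bit more concrete, the requirements listed above can be thought of as a checklist that a platform either passes or fails. The sketch below is purely illustrative: the `Platform` class, its field names and the `check_platform` function are hypothetical and do not come from the SBSA document. It encodes only the example requirements quoted in this article, not the full specification.

```python
from dataclasses import dataclass, replace
from typing import List

# Hypothetical platform description. The field names are illustrative
# assumptions, not terms defined by the SBSA specification.
@dataclass
class Platform:
    interrupt_controller: str
    cpu_count: int
    has_advanced_simd: bool
    has_crypto_ext: bool
    uses_generic_timer: bool
    implements_power_states: bool
    usb2_interface: str
    usb3_interface: str
    sata_interface: str

# The article's list ties the 8-CPU ceiling to the GICv2 requirement.
GICV2_MAX_CPUS = 8

def check_platform(p: Platform) -> List[str]:
    """Return the requirements from the article's list that p violates."""
    problems = []
    if p.interrupt_controller != "GICv2":
        problems.append("interrupt controller must be GICv2")
    if p.cpu_count > GICV2_MAX_CPUS:
        problems.append("at most 8 CPUs with GICv2")
    if not p.has_advanced_simd:
        problems.append("Advanced SIMD extensions required")
    if not p.has_crypto_ext:
        problems.append("cryptography extensions required")
    if not p.uses_generic_timer:
        problems.append("ARM generic timers required")
    if not p.implements_power_states:
        problems.append("described power state semantics required")
    if p.usb2_interface != "EHCI 1.1":
        problems.append("USB 2.0 controller must conform to EHCI 1.1")
    if p.usb3_interface != "XHCI 1.0":
        problems.append("USB 3.0 controller must conform to XHCI 1.0")
    if p.sata_interface != "AHCI v1.3":
        problems.append("SATA controller must conform to AHCI v1.3")
    return problems

# Usage: a made-up compliant board, and a variant that breaks two rules.
compliant = Platform("GICv2", 8, True, True, True, True,
                     "EHCI 1.1", "XHCI 1.0", "AHCI v1.3")
non_compliant = replace(compliant, cpu_count=16, has_crypto_ext=False)

for name, board in [("compliant", compliant), ("non_compliant", non_compliant)]:
    print(name, check_platform(board))
```

An OS vendor's position is the mirror image of this check: if every platform passes the same list, the kernel can assume one interrupt controller model, one timer and one set of controller interfaces instead of carrying per-board code.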

14 Comments

BMNify - Friday, January 31, 2014

That's true. Of course the Calxeda and Moonshot 32-bit ARM prototypes were fine as a proof of concept, but the way their first product layout, with its butt-ugly SLEDs, used the available space was nothing but shameful: tons of useless metal and wasted space in a given U configuration, restricting airflow, etc. Calxeda's NOC was apparently good, but they should have sacked the SLED designers. Using small self-contained COMs (Computer On Modules) and providing a simple plastic PCB slide mount with minimal distance between PCB cards to direct airflow would have been far more rewarding and cost less to produce, as you could then re-purpose these separate COMs when it became time to upgrade. It's a shame really. Let's hope someone in the server space learns that lesson and provides what people actually want to buy this time around.

On a side note, it's funny that Microsoft has now also joined this ARM initiative :)

http://www.computerworld.com/s/article/9245854/Mic...

lwatcdr - Monday, February 10, 2014

I do not think so. This should really work well in the SAN and NAS space as well as web servers.