OCZ Vertex 460 (240GB) Review

by Kristian Vättö on January 22, 2014 9:00 AM EST - Posted in

- Storage

- SSDs

- OCZ

- Indilinx

- Vertex 460

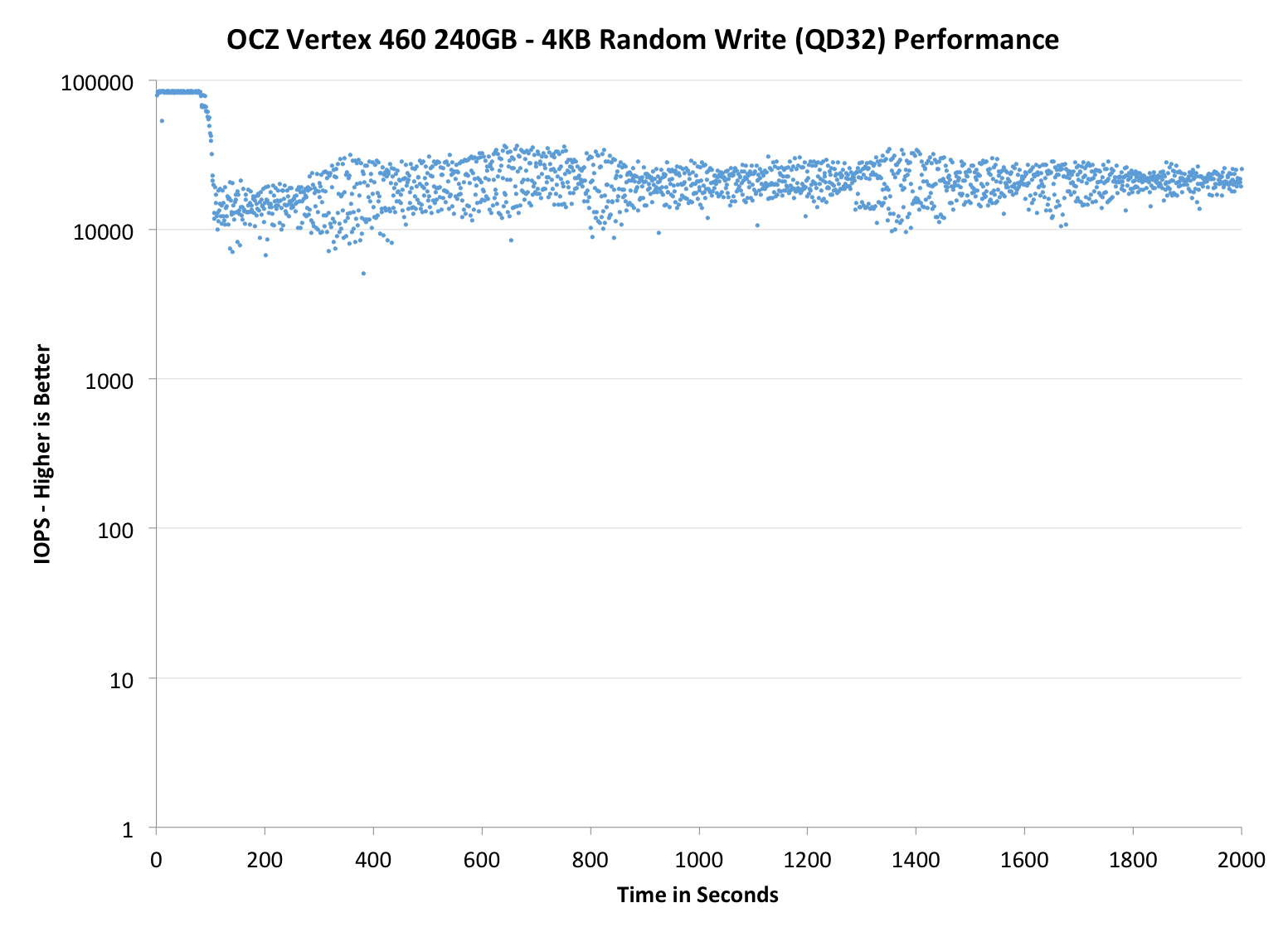

Performance Consistency

In our Intel SSD DC S3700 review Anand introduced a new method of characterizing performance: looking at the latency of individual operations over time. The S3700 promised a level of performance consistency that was unmatched in the industry, and demonstrating that required some additional testing. SSDs don't deliver consistent IO latency because all controllers inevitably have to do some amount of defragmentation or garbage collection in order to keep operating at high speeds. When and how an SSD decides to run its defrag and cleanup routines directly impacts the user experience. Frequent (borderline aggressive) cleanup generally results in more stable performance, while delaying it can result in higher peak performance at the expense of much lower worst-case performance. The graphs below tell us a lot about the architecture of these SSDs and how they handle internal defragmentation.

To generate the data below we take a freshly secure erased SSD and fill it with sequential data. This ensures that all user accessible LBAs have data associated with them. Next we kick off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. We run the test for just over half an hour, nowhere near what we run our steady state tests for but enough to give a good look at drive behavior once all spare area fills up.
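As a rough illustration of that workload, here is a minimal Python sketch of its two phases: a sequential pass that touches every user-accessible byte once, followed by 4KB-aligned random write offsets drawn from the whole span and paired with incompressible payloads. The function names and the 128KB fill chunk are hypothetical choices of this sketch, not details of AnandTech's actual test setup, and keeping 32 requests outstanding (QD=32) is left to whatever IO engine actually submits the writes.

```python
import os
import random

BLOCK = 4096              # 4KB transfer size for the random-write phase
FILL_CHUNK = 128 * 1024   # hypothetical transfer size for the sequential fill


def sequential_fill(span_bytes, chunk=FILL_CHUNK):
    """Yield (offset, length) pairs that write every user-accessible byte once, in order."""
    for offset in range(0, span_bytes, chunk):
        yield offset, min(chunk, span_bytes - offset)


def random_write_offsets(span_bytes, count, seed=0):
    """Yield 4KB-aligned offsets drawn uniformly from the whole tested span (100% LBA)."""
    rng = random.Random(seed)
    slots = span_bytes // BLOCK
    for _ in range(count):
        yield rng.randrange(slots) * BLOCK


def incompressible_payload(size=BLOCK):
    """Random bytes, which a compressing controller cannot shrink before writing to NAND."""
    return os.urandom(size)
```

Drawing offsets uniformly across all LBAs, rather than from a small hot region, is what ensures the drive eventually runs out of clean blocks during the test.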

We record instantaneous IOPS every second for the duration of the test and then plot IOPS vs. time and generate the scatter plots below. Each set of graphs features the same scale. The first two sets use a log scale for easy comparison, while the last set of graphs uses a linear scale that tops out at 40K IOPS for better visualization of differences between drives.
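The per-second sampling can be sketched as follows, assuming the IO tool reports a completion timestamp (in seconds since the start of the test) for every write. The helper names are hypothetical; the 1400s default matches the point where the zoomed-in graphs in this section begin.

```python
from collections import Counter


def iops_per_second(completion_times, duration_s):
    """Turn IO completion timestamps into instantaneous IOPS, one value per second.

    Each completion is counted in the one-second bucket it falls into; seconds with
    no completions are reported as 0 instead of being dropped.
    """
    buckets = Counter(int(t) for t in completion_times if 0 <= t < duration_s)
    return [buckets[second] for second in range(duration_s)]


def steady_state_window(iops, start_s=1400):
    """The slice of the trace shown in the zoomed-in graphs (t >= 1400s)."""
    return iops[start_s:]
```

Keeping empty seconds as zeros matters because those stalls are exactly what a consistency test is looking for; note that a log-scale axis cannot display a zero, so a plotting tool will leave those points out.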

The high level testing methodology remains unchanged from our S3700 review. Unlike in previous reviews, however, we vary the percentage of the drive that gets filled/tested depending on the amount of spare area we're trying to simulate. The buttons are labeled with the advertised user capacity had the SSD vendor decided to use that specific amount of spare area. If you want to replicate this on your own, all you need to do is create a partition smaller than the total capacity of the drive and leave the remaining space unused to simulate a larger amount of spare area. The partitioning step isn't absolutely necessary in every case, but it's an easy way to make sure you never exceed your allocated spare area. It's a good idea to do this from the start (e.g. secure erase, partition, then install Windows), but if you are working backwards you can always create the spare area partition, format it to TRIM it, then delete the partition. Finally, this method of creating spare area works on the drives we've tested here, but not all controllers are guaranteed to behave the same way.
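The partition-sizing arithmetic behind that approach can be written as a small Python sketch. It assumes the spare percentage is measured against the advertised user capacity, and the 256 GiB raw NAND figure in the example is a hypothetical number for illustration, not a published Vertex 460 specification. `effective_op` uses the common (physical - user) / user definition of over-provisioning.

```python
MIB = 1024 * 1024
GIB = 1024 * MIB


def partition_bytes(user_bytes, spare_pct, align=MIB):
    """Partition size that leaves spare_pct percent of the user capacity unpartitioned.

    Assumption: the percentage is measured against the advertised user capacity.
    The result is rounded down to a 1 MiB boundary so the partition stays aligned.
    """
    size = user_bytes * (100 - spare_pct) // 100
    return size - size % align


def effective_op(nand_bytes, used_bytes):
    """Over-provisioning as (physical NAND - space in use) / space in use."""
    return (nand_bytes - used_bytes) / used_bytes


if __name__ == "__main__":
    user = 240 * 10**9          # advertised 240GB user capacity
    nand = 256 * GIB            # hypothetical raw NAND, for illustration only
    part = partition_bytes(user, 25)
    print(f"partition: {part} bytes")
    print(f"OP as shipped: {effective_op(nand, user):.1%}")
    print(f"OP with partition: {effective_op(nand, part):.1%}")
```

Rounding down to a 1 MiB boundary keeps the partition aligned, and the few bytes lost to rounding simply add to the spare area. As the text notes, the unpartitioned space only counts as spare area if it has never been written or has been TRIMmed.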

The first set of graphs shows the performance data over the entire 2000-second test period. In these charts you'll notice an early period of very high performance followed by a sharp drop-off. What you're seeing there is the drive allocating new blocks from its spare area, then eventually using up all free blocks and having to perform a read-modify-write for all subsequent writes (write amplification goes up, performance goes down).
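The drop-off can be reproduced qualitatively with a toy model. The sketch below is a hypothetical page-mapped flash translation layer with greedy garbage collection (a simplification chosen for illustration, not OCZ's or any vendor's actual algorithm). It sequentially fills the logical space, issues uniformly random single-page overwrites, and reports write amplification (NAND page writes per host page write) for each window of writes. The block count, page count and 7% spare area are arbitrary assumptions.

```python
import random


class ToyFTL:
    """Hypothetical page-mapped FTL with greedy garbage collection (teaching model only)."""

    def __init__(self, blocks, pages_per_block, logical_pages):
        if logical_pages >= blocks * pages_per_block:
            raise ValueError("the model needs some spare area")
        self.blocks = blocks
        self.ppb = pages_per_block
        self.valid = [set() for _ in range(blocks)]  # logical pages still valid per block
        self.fill = [0] * blocks                     # pages programmed since last erase
        self.where = {}                              # logical page -> physical block
        self.free = list(range(blocks))              # erased blocks ready for writing
        self.active = self.free.pop()
        self.nand_writes = 0

    def _allocate(self):
        if self.free:                                # spare blocks left: no copying needed
            return self.free.pop()
        # Out of erased blocks: reclaim the block holding the fewest valid pages.
        victim = min(range(self.blocks), key=lambda b: len(self.valid[b]))
        survivors = len(self.valid[victim])
        # Read-modify-write: erase the victim and program its surviving pages back,
        # which stands in for copying them through a reserved block.
        self.fill[victim] = survivors
        self.nand_writes += survivors
        return victim

    def write(self, lpn):
        if self.fill[self.active] == self.ppb:
            self.active = self._allocate()
        old = self.where.get(lpn)
        if old is not None:
            self.valid[old].discard(lpn)             # the previous copy becomes stale
        self.valid[self.active].add(lpn)
        self.where[lpn] = self.active
        self.fill[self.active] += 1
        self.nand_writes += 1


def write_amplification_trace(blocks=128, pages_per_block=64, spare_pct=7,
                              windows=40, window_writes=500, seed=1):
    logical = blocks * pages_per_block * (100 - spare_pct) // 100
    ftl = ToyFTL(blocks, pages_per_block, logical)
    for lpn in range(logical):                       # sequential fill of every logical page
        ftl.write(lpn)
    rng = random.Random(seed)
    trace = []
    for _ in range(windows):                         # then random overwrites across all pages
        before = ftl.nand_writes
        for _ in range(window_writes):
            ftl.write(rng.randrange(logical))
        trace.append((ftl.nand_writes - before) / window_writes)
    return trace
```

With the default parameters the first window reports a write amplification of exactly 1.0, because the 8 untouched spare blocks plus the partially filled active block absorb the first 574 random writes. Once they are used up, every new block has to be reclaimed by copying still-valid pages, and write amplification climbs well above 1, which is the model's counterpart of the performance cliff in the graphs.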

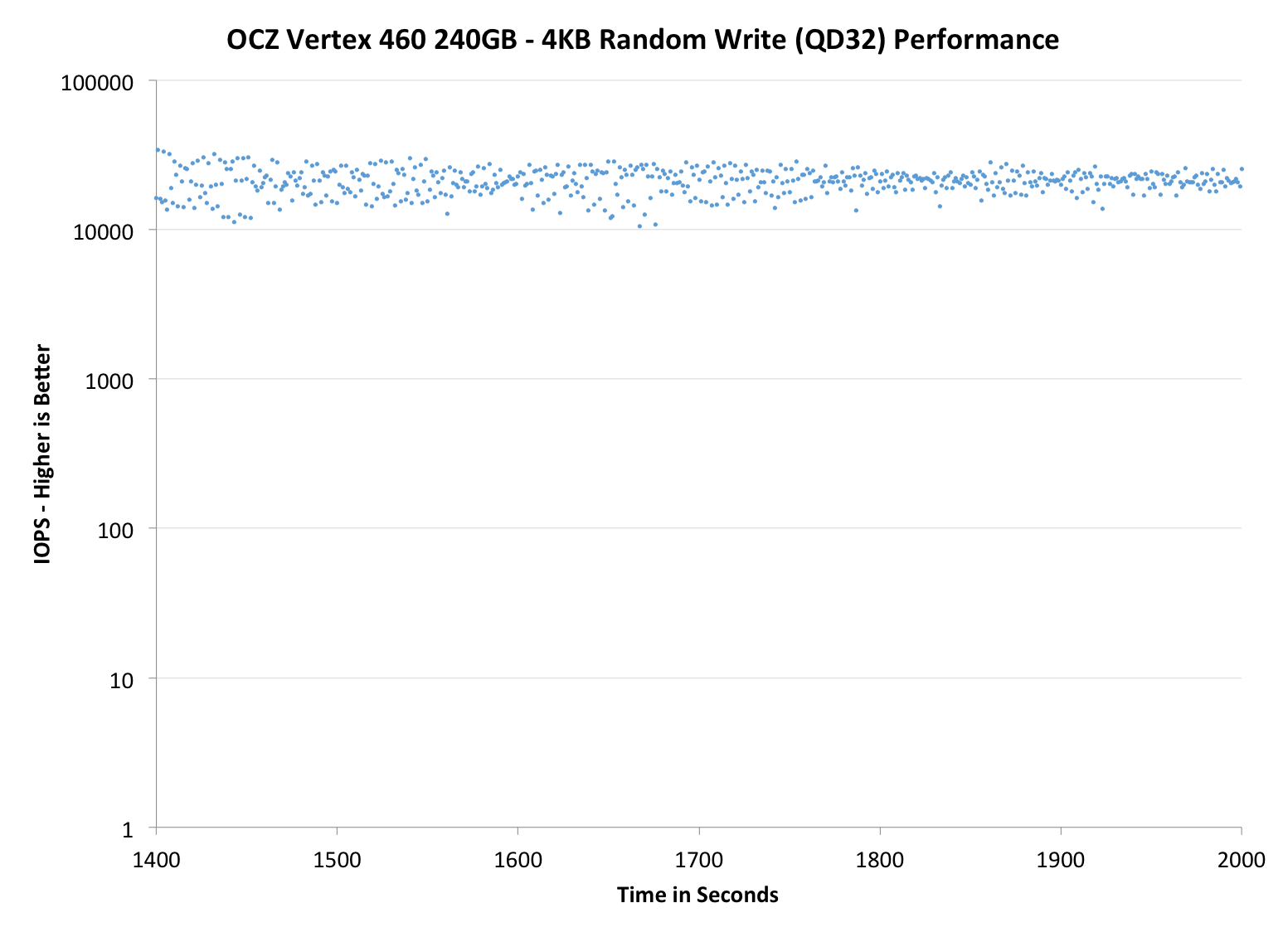

The second set of graphs zooms in to the beginning of steady state operation for the drive (t=1400s). The third set also looks at the beginning of steady state operation but on a linear performance scale.

[Interactive graph: OCZ Vertex 460 240GB, OCZ Vector 150 240GB, Corsair Neutron 240GB, SanDisk Extreme II 480GB and Samsung SSD 840 Pro 256GB, each in Default and 25% OP configurations]

Performance consistency is more or less a match for the Vector 150. There is essentially no difference; the slight variation that does exist may simply be down to how garbage collection algorithms work (i.e. the result is never exactly the same from run to run).
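The comparison above is made by eye from the graphs. If you want numbers to compare two drives with, one option (an assumption of this sketch, not a metric AnandTech reports) is to condense each per-second IOPS trace into its peak, worst case, 1st percentile and coefficient of variation, where a lower coefficient means steadier performance.

```python
import statistics


def consistency_summary(iops):
    """Condense a per-second IOPS trace into peak, worst case, 1st percentile and spread."""
    mean = statistics.fmean(iops)
    return {
        "peak": max(iops),
        "worst": min(iops),
        # "inclusive" keeps the percentile inside the observed range of values.
        "p1": statistics.quantiles(iops, n=100, method="inclusive")[0],
        "mean": mean,
        "cv": statistics.pstdev(iops) / mean,  # coefficient of variation
    }
```

The 1st percentile is included alongside the single worst second because one outlier stall can dominate `worst`, while a drive that dips repeatedly will also drag `p1` down. The trace needs at least two samples and a non-zero mean.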

[Interactive graph: OCZ Vertex 460 240GB, OCZ Vector 150 240GB, Corsair Neutron 240GB, SanDisk Extreme II 480GB and Samsung SSD 840 Pro 256GB, each in Default and 25% OP configurations]

[Interactive graph: OCZ Vertex 460 240GB, OCZ Vector 150 240GB, Corsair Neutron 240GB, SanDisk Extreme II 480GB and Samsung SSD 840 Pro 256GB, each in Default and 25% OP configurations]

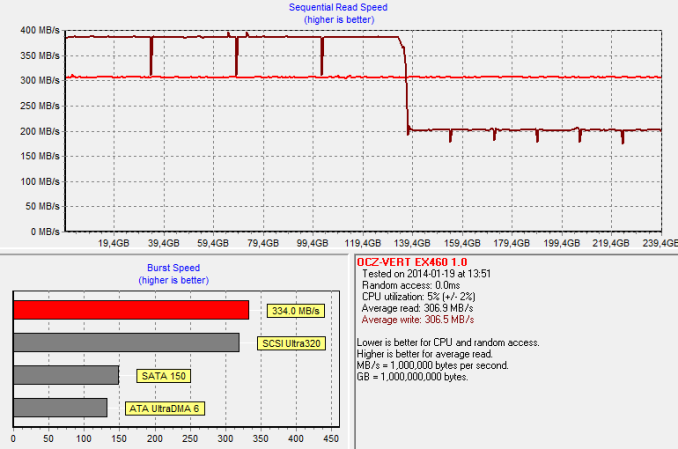

TRIM Validation

To test TRIM, I first filled all user-accessible LBAs with sequential data and then tortured the drive with 4KB random writes (100% LBA, QD=32) for 60 minutes. After the torture I TRIMmed the drive (quick format in Windows 7/8) and ran HD Tach to make sure TRIM is functional.
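The test above checks TRIM empirically on Windows. On Linux, a quicker precondition (not part of the original method and not a substitute for it) is to confirm that the kernel will pass discard requests down to the drive at all. The sketch reads the block layer's `discard_max_bytes` attribute from sysfs; the helper names are hypothetical, and a non-zero value only shows that TRIM commands can be issued, not that the controller acts on them, which is what the HD Tach pass verifies.

```python
from pathlib import Path

SYSFS_BLOCK = Path("/sys/block")


def discard_attr_path(device, root=SYSFS_BLOCK):
    """Location of the discard limit for a block device such as 'sda'."""
    return root / device / "queue" / "discard_max_bytes"


def supports_discard(attr_text):
    """Interpret discard_max_bytes: 0 means the kernel will not issue discards (TRIM)."""
    return int(attr_text.strip()) > 0


def kernel_reports_trim(device, root=SYSFS_BLOCK):
    """Linux only: read the attribute for a real device and interpret it."""
    return supports_discard(discard_attr_path(device, root).read_text())
```

For the TRIM step itself, `fstrim` (on a mounted filesystem) or `blkdiscard` (on the whole device, destroying its data) from util-linux would play the role of the Windows quick format.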

And TRIM works. The HD Tach graph also shows the impact of OCZ's performance mode, although in a negative light. Once half of the LBAs have been filled, all data has to be reorganized, so write performance drops while the drive reorganizes the existing data at the same time as HD Tach is writing to it. Once the reorganization process is over, performance recovers to close to its original level.

69 Comments


melgross - Wednesday, January 22, 2014

This site is obsessed with this company. There are so many manufacturers, and so many drives, but they keep coming back to OCZ.

Kristian Vättö - Wednesday, January 22, 2014

Is there a manufacturer or drive we've missed? We don't favour any OEM over another and if there's a new product we'll review it.

However, a lot depends on the company's PR. OCZ has always been good at this as they approach us and provide samples under NDA prior to the launch, so the review process is smooth for us. Some companies are fairly poor at this as we always have to approach the OEM after the launch to get a sample at all.

GrizzledYoungMan - Wednesday, January 22, 2014

Since you asked, there are some SSD-related reviews I'd love to see from AnandTech:

1. The SanDisk X210. It promises Extreme II performance with many of the reliability features of an enterprise drive and excellent pricing.

2. 'Hybrid' RAID 5 or RAID 6 configurations, with caching software such as LSI's Cachecade 2.0 or Adaptec's I-can't-remember-right-now. I've been specc'ing out such a system and found it startling how little in-depth, useful information there is out there about what sort of SSDs are appropriate (and why) and what configurations yield good price/performance outcomes.

3. Along those lines, I'd really like to see how enterprise SSDs you've tested like the Intel DC S3500 perform in your client testing environment. It feels like your enterprise SSD testing is woefully truncated on the false assumption that no one would use enterprise SSDs in a client/workstation setting. Testing those drives more thoroughly would seem to be a much better use of your time than testing the Nth variation of whatever OCZ drive came out this week.

I hope you find this comment helpful, it's intended as constructive criticism. Still love the site!

Kristian Vättö - Wednesday, January 22, 2014

This is definitely helpful. In the end we do this for you, our readers, not for ourselves or manufacturers :)

1 and 3 should definitely be possible and 2 very likely too (as long as LSI/Adaptec can deliver us the samples). I'll do my best!

GrizzledYoungMan - Wednesday, January 22, 2014

Awesome! Looking forward to reading those reviews.

A recommendation regarding which LSI controller to choose: go for the 9271-8i. At this point, it's the natural choice for most new system builders because it offers better performance (and FastPath enabled by default) than the 9266 or 9261 for roughly the same price.

Also, if LSI gives you any trouble about providing samples, just tell 'em that some random guy from the internet who calls himself grizzledyoungman wants to see that review. Should do the trick.

romrunning - Thursday, January 23, 2014

1) I also would like to see more tests of SSDs in RAID arrays (RAID-5/6/10 - not just 0/1).

2) I would love to see some enterprise controllers tested like the Adaptec ASR-72405 and ASR-8885.

3) In addition to that, let's test more enterprise SSDs (like HGST's SSD800MM and the DC S3700).

4) For SMBs who want to build a fast server with awesome I/O, it would be good to see how these SSDs perform in a database server or as a virtual host server.

lever_age - Wednesday, January 22, 2014

Yeah, when products don't get reviewed, it's usually time to start bugging the company PR teams. The bigger and reputable sites have enough work already reviewing the stuff that gets sent to them by the brands that do have their acts together (and want things reviewed).

JDG1980 - Wednesday, January 22, 2014

I don't think it's good practice to rely upon free review samples. How do you know the company isn't cherry-picking? I believe it would be best to do what Consumer Reports does with all the items it tests: buy anonymously off-the-shelf.

blanarahul - Wednesday, January 22, 2014

Cherry picking MLC SSDs affects performance? That's new. Or maybe, you are wrong.

chrnochime - Wednesday, January 22, 2014

How is cherry picking NOT useful for better test results? That's what the definition of cherry picking is. Sounds like you either are ignorant of that fact or are defending OCZ for whatever reason.